An inference accelerator that reportedly delivers 100 times faster processing at one-tenth the operational cost of today's leading solutions has caught the attention of a major AI research organization. That interest signals a potential shift in how large-scale AI models are deployed, with implications for both performance and economic viability.

The focus is on a UK-based startup whose fusion architecture takes a novel approach to parallel processing. Unlike traditional GPU or TPU designs, the system combines hardware-level optimizations with software-defined workflows, aiming to break the efficiency barriers that have long constrained AI workloads. What makes it stand out is its claimed ability to scale inference without proportional increases in power consumption or latency.

Performance and Economic Viability

The startup's prototype has demonstrated benchmark results that, if validated at scale, could redefine the cost-performance equation for AI inference. Key metrics include:

- A 100-fold improvement in throughput compared to existing state-of-the-art accelerators.

- Operational costs reduced by a factor of ten relative to current industry benchmarks.

According to the startup, these gains stem from a combination of hardware innovations and algorithmic optimizations tailored to large language models. The architecture is designed to avoid the bottlenecks seen in GPU-based systems, where memory bandwidth and thermal constraints often limit scaling. Instead, it integrates processing, caching, and data movement into a unified pipeline, reducing overhead while maintaining flexibility.

Strategic Implications for the AI Ecosystem

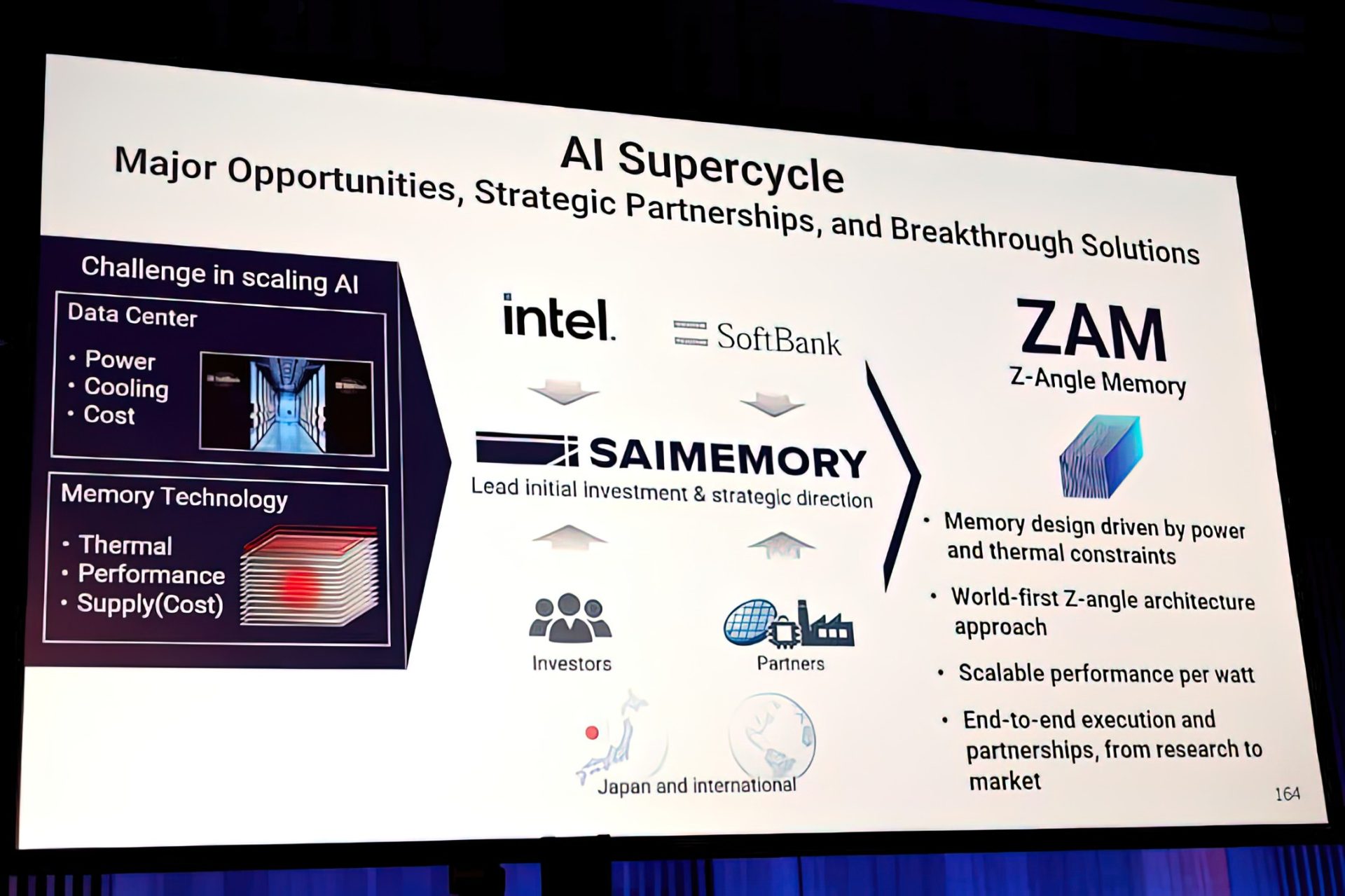

For organizations invested in AI infrastructure, this development is more than a potential technical breakthrough; it is a strategic opportunity to rethink operational models. The promise of 100x faster inference at one-tenth the cost could lower the barrier to deploying complex models across industries and deployment targets, from cloud providers to edge devices. However, adoption hinges on proving reliability and scalability in real-world environments.

One challenge lies in balancing theoretical gains with practical constraints. While benchmarks show impressive results, sustained performance under varied workloads remains untested. Additionally, the economic model—how this cost reduction translates into pricing for end users—is still evolving. Early indicators suggest a shift toward more modular, hardware-software co-designed systems, but whether this will disrupt or merely supplement existing architectures is unclear.

The startup’s fusion approach also raises questions about the future of AI acceleration. If successful, it could challenge the dominance of established players in the market, forcing them to adapt or risk being outmaneuvered. For PC builders and data center operators, this means evaluating new options that prioritize efficiency without sacrificing performance.

At this stage, the startup has outlined a plausible technical path toward more efficient inference, but its headline figures still await independent validation. What remains uncertain is how quickly the technology can be integrated into existing workflows and whether the economic model will hold as workloads grow. The stakes are high, and the potential rewards in both speed and cost are substantial.