The phrase ‘We’re in the first innings’ has become shorthand in data centers, but it describes more than a passing supply-chain squeeze. It signals a structural realignment in which memory, long an enabler, is now the bottleneck that dictates every layer of AI deployment, from model precision to facility design.

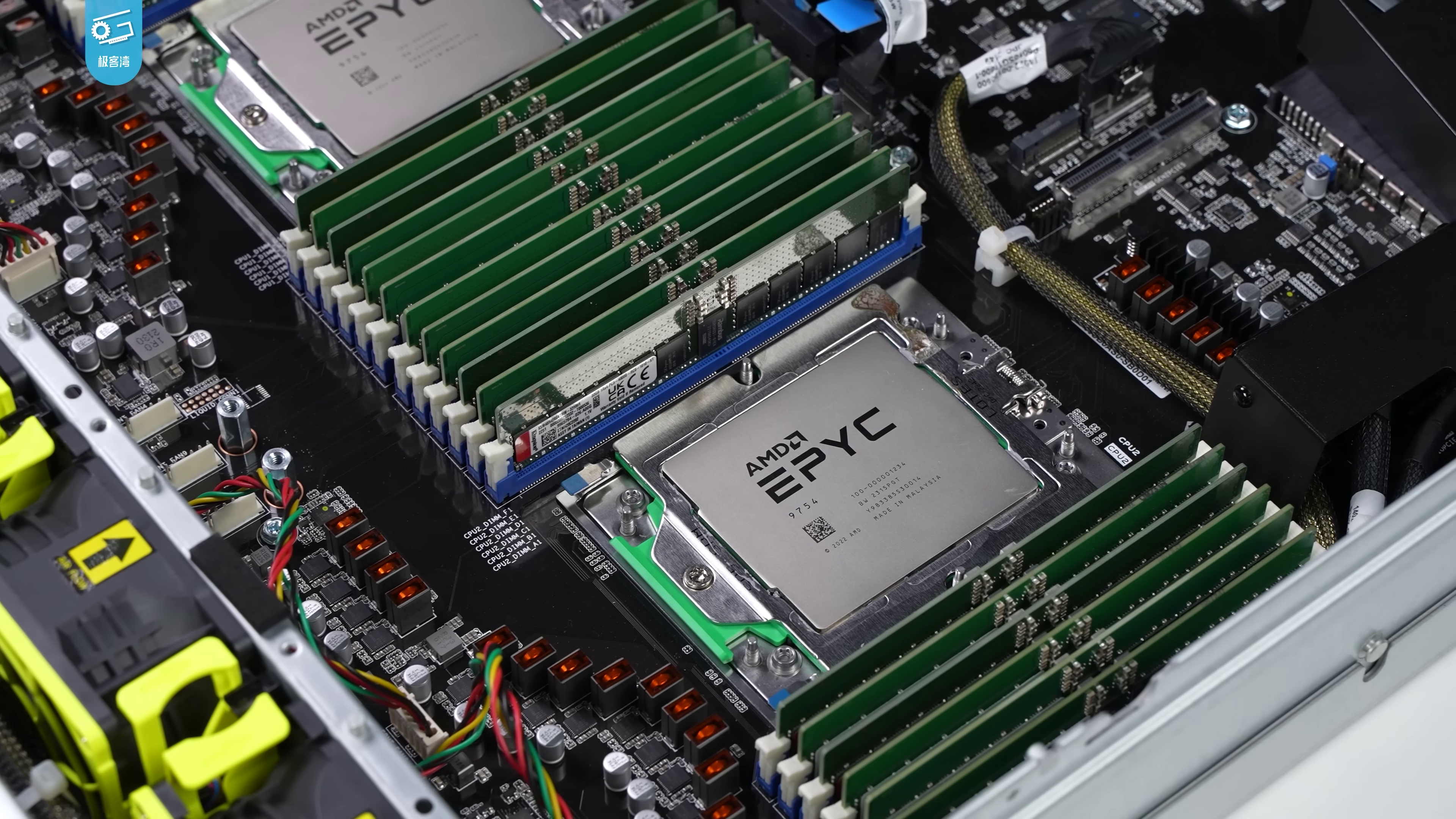

DRAM and NAND are no longer just components; they have become the scarce resource that will determine whether next-generation AI clusters can scale at all. With these two segments projected to absorb over half of the industry’s total addressable market, every other part of a server, CPUs and GPUs included, must compete for a shrinking share of spending. The outcome is not merely higher prices but a fundamental reconfiguration of how AI systems are engineered.

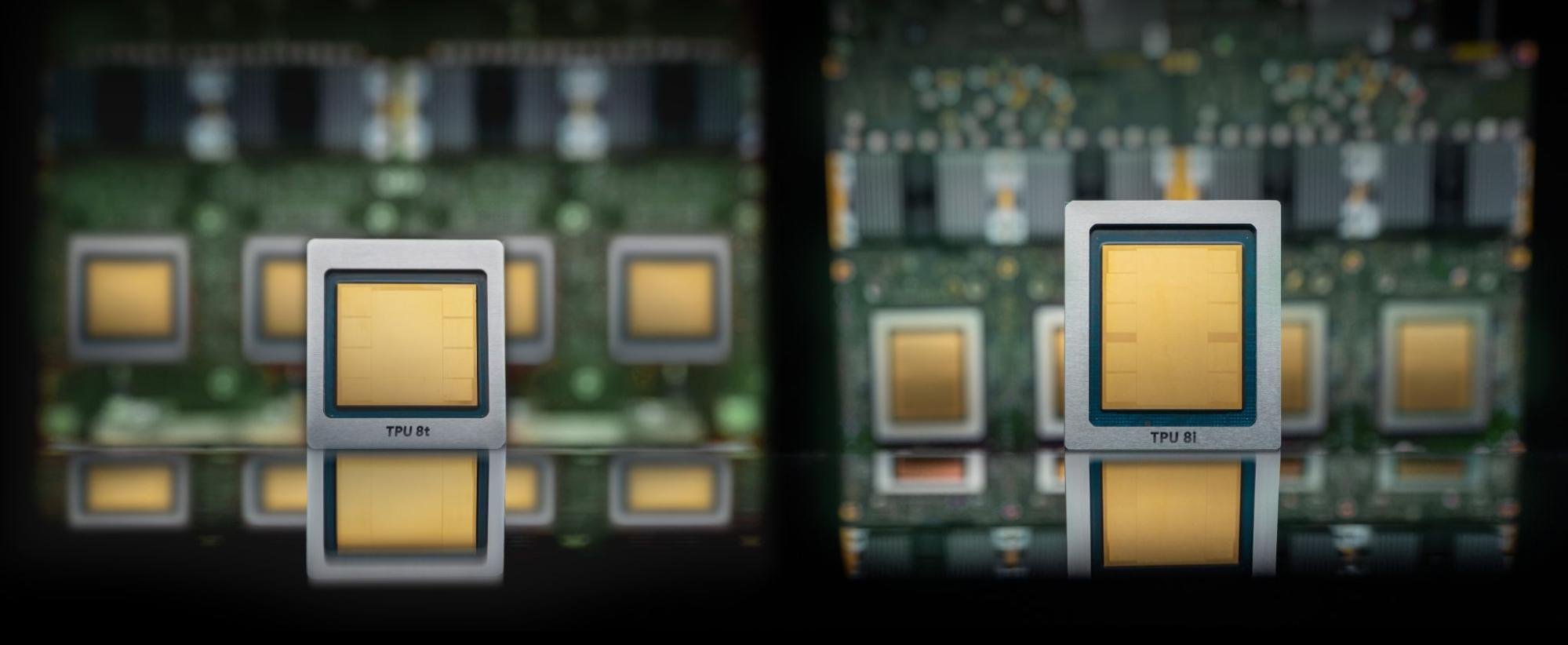

Visible changes are already cascading through the ecosystem. High-bandwidth memory (HBM) stacks that were once designed to handle 128 GB of data are now being pushed toward 512 GB, yet thermal constraints force aggressive throttling even at those densities. Similarly, NAND flash—once a stable backbone for storage—is being cycled beyond its rated endurance, shortening the lifespan of SSDs in high-performance environments.

The Cost-Performance Tightrope

For data center operators, the immediate challenge is balancing performance gains against escalating costs. A 50% increase in memory capacity doesn’t just translate to more concurrent threads or larger batch sizes; it also demands a corresponding leap in power infrastructure. An AI node that previously consumed 1,500 watts may now require 2,200 watts or more, depending on the mix of inference and training tasks.
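To make the arithmetic concrete, the short sketch below works through the per-node figures quoted above (1,500 W rising to 2,200 W) and shows what that does to node count under a fixed rack power budget. The 30 kW rack budget and the function names are illustrative assumptions, not figures from any operator or vendor.

```python
def power_increase(old_watts: float, new_watts: float) -> tuple[float, float]:
    """Return (absolute increase in watts, relative increase as a fraction)."""
    delta = new_watts - old_watts
    return delta, delta / old_watts


def nodes_per_rack(rack_budget_watts: float, node_watts: float) -> int:
    """Number of whole nodes that fit inside a fixed rack power budget."""
    return int(rack_budget_watts // node_watts)


# Per-node figures taken from the text.
OLD_NODE_W = 1_500
NEW_NODE_W = 2_200

# Assumption: a hypothetical 30 kW rack power budget, chosen only for illustration.
RACK_BUDGET_W = 30_000

delta_w, relative = power_increase(OLD_NODE_W, NEW_NODE_W)
nodes_before = nodes_per_rack(RACK_BUDGET_W, OLD_NODE_W)
nodes_after = nodes_per_rack(RACK_BUDGET_W, NEW_NODE_W)

print(f"Per-node increase: {delta_w:.0f} W ({relative:.1%})")
print(f"Nodes per rack at {RACK_BUDGET_W} W: {nodes_before} -> {nodes_after}")
```

Under these assumptions the per-node draw rises by roughly 47%, and the same rack holds 13 nodes instead of 20. Power budget rather than floor space becomes the limit on density.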

This shift is forcing operators to reconsider every aspect of their deployment strategy. Liquid cooling is no longer optional; it’s a necessity in many configurations. Some facilities are being retrofitted mid-cycle, while others are opting for distributed architectures that spread heat across larger footprints. The result is a trade-off between density and efficiency—one that will shape the economics of AI at scale.

A Supply Chain Under Strain

- HBM prices remain elevated through 2025, reflecting both supply constraints and yield challenges in advanced process nodes.

- NAND prices have stabilized but still reflect a market tightened by production losses, particularly in high-density 3D NAND.

- Vertical scaling of memory chips is being pursued to reduce footprint, but this comes with higher power draw per bit, complicating thermal management.

The industry’s response has been twofold: vertical stacking of memory dies to shrink footprints, and horizontal expansion of deployments to distribute heat. Both strategies introduce new cost pressures, pushing operators toward more modular or hybrid architectures that can adapt to fluctuating memory availability.

Long-Term Implications

Micron’s warning is not just about inventory; it’s a signal that memory is becoming the defining constraint in AI infrastructure. If demand continues on its current trajectory, the industry will face a choice: accept smaller models with lower precision or invest heavily in cooling and power to sustain larger ones.
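The precision side of that choice can be estimated with simple arithmetic: the memory needed to hold a model's weights is roughly the parameter count multiplied by the bytes per parameter. The sketch below is a rough illustration only. The 70-billion-parameter model is a hypothetical example, and the estimate covers weights alone, ignoring activations, KV cache, and optimizer state.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {
    "fp32": 4.0,
    "fp16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(num_params: int, precision: str) -> float:
    """Approximate memory for model weights only, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# Assumption: a hypothetical 70-billion-parameter model, used only for illustration.
NUM_PARAMS = 70_000_000_000

footprints = {p: weight_memory_gb(NUM_PARAMS, p) for p in BYTES_PER_PARAM}

for precision, gigabytes in footprints.items():
    print(f"{precision}: {gigabytes:.0f} GB")
```

Each halving of precision halves the weight footprint, which is why lower precision is a natural lever when memory, not compute, is the scarce resource.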

This reality will force buyers to rethink their hardware stacks sooner rather than later. The days of memory being an afterthought are over. Memory is now the variable that determines whether AI scales efficiently or runs into a bottleneck that stifles innovation.