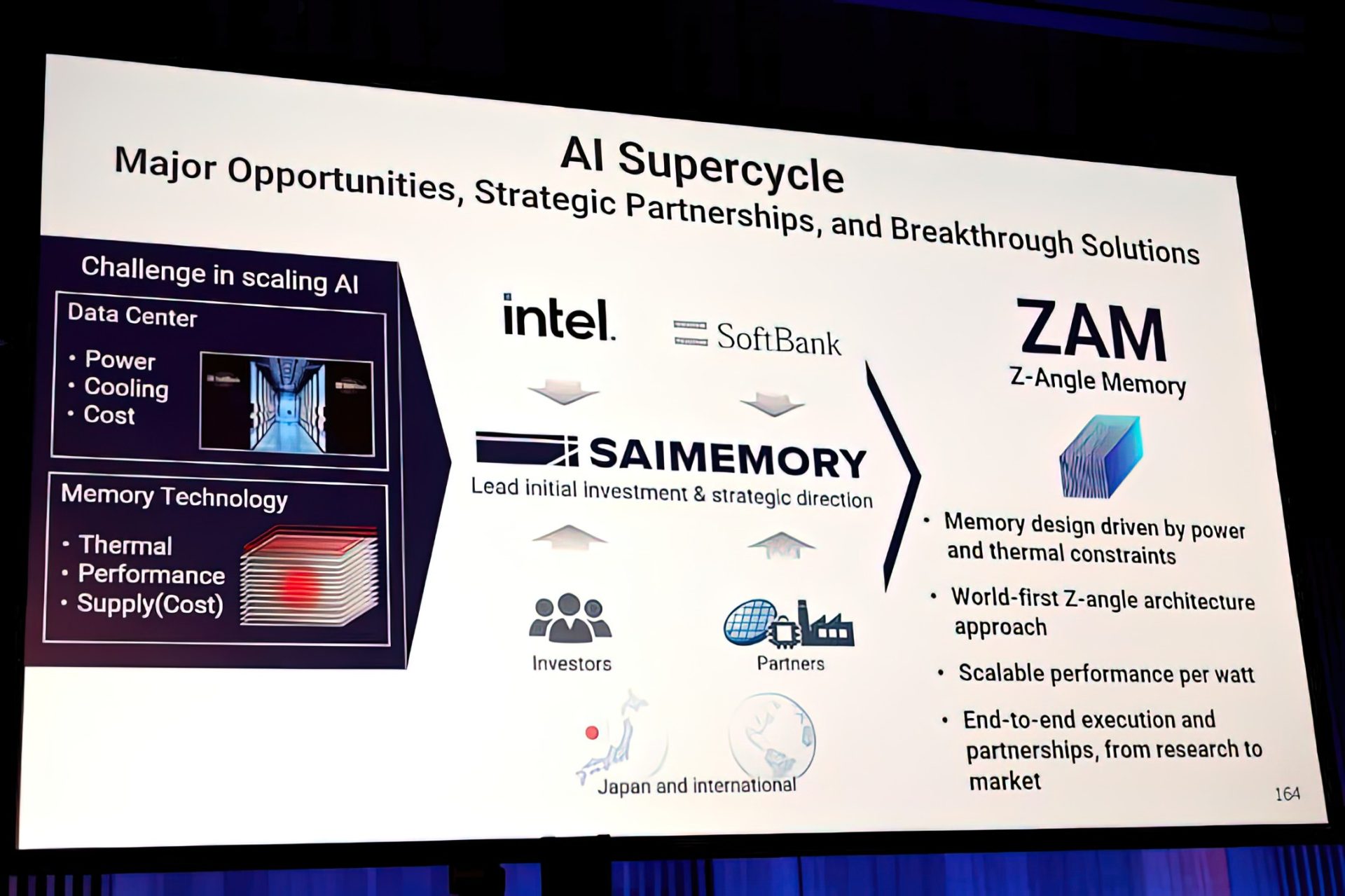

PC builders working on AI-driven projects may soon face a critical choice: stick with established HBM or wait for Intel’s ZAM architecture, which promises double the bandwidth at a fraction of the power draw.

Intel's ZAM (Z-Angle Memory) technology promises twice the bandwidth of HBM4 (a quoted 1.5 TB/s per stack) while also supporting larger capacities than current HBM solutions. Unlike traditional HBM, which stacks memory vertically and generates significant heat, ZAM uses a lateral design that eases thermal constraints, making it more scalable for high-performance systems.
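To put the per-stack figure in perspective, here is a rough back-of-the-envelope sketch of how long a single pass over a model's weights would take when memory bandwidth is the only limit. The 70 GB model size is a hypothetical value chosen for illustration, and the baseline is simply half of the quoted 1.5 TB/s, as implied by the 2x claim; neither is a measured number.

```python
def stream_time_ms(num_bytes: float, bandwidth_tb_per_s: float) -> float:
    """Milliseconds needed to read num_bytes once at the given bandwidth.

    Bandwidth is in decimal terabytes per second (1 TB = 1e12 bytes).
    Ignores latency, caching, and compute; this is a bandwidth-only bound.
    """
    if bandwidth_tb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    seconds = num_bytes / (bandwidth_tb_per_s * 1e12)
    return seconds * 1e3


# Assumption: a hypothetical 70 GB set of model weights.
WEIGHTS_BYTES = 70e9
# Per-stack figure quoted in the article.
ZAM_TB_PER_S = 1.5
# Assumption: baseline implied by the "2x" claim, not a measured HBM4 number.
BASELINE_TB_PER_S = ZAM_TB_PER_S / 2

if __name__ == "__main__":
    for label, bandwidth in [("baseline", BASELINE_TB_PER_S), ("ZAM", ZAM_TB_PER_S)]:
        elapsed = stream_time_ms(WEIGHTS_BYTES, bandwidth)
        print(f"{label}: {elapsed:.1f} ms per pass over the weights")
```

For bandwidth-bound workloads, doubling the bandwidth halves the time per pass, which is why the per-stack number matters more than raw capacity for inference speed.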

For AI workloads, this could mean faster data processing without the need for aggressive cooling or complex thermal management. The shift could also ease supply chain pressures, as ZAM is expected to be produced on Intel’s existing 3nm process line, which would avoid the bottlenecks seen with HBM manufacturing.

The technology is still in development, but early benchmarks suggest it could outperform HBM4 in both speed and efficiency. If adopted widely, ZAM could become a standard for AI accelerators, GPUs, and high-end CPUs, potentially reducing reliance on stacked memory solutions.

For now, the focus remains on availability. Intel has not yet announced pricing or release timelines, but industry analysts expect ZAM to enter production in late 2025. Until then, PC builders will need to weigh the trade-offs between current HBM options and the promise of ZAM’s performance gains.