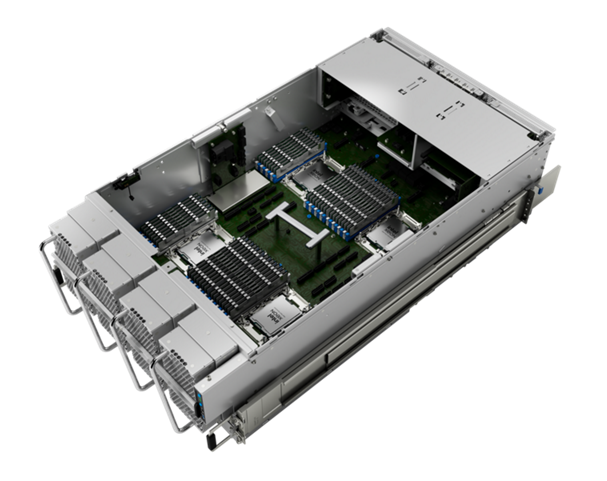

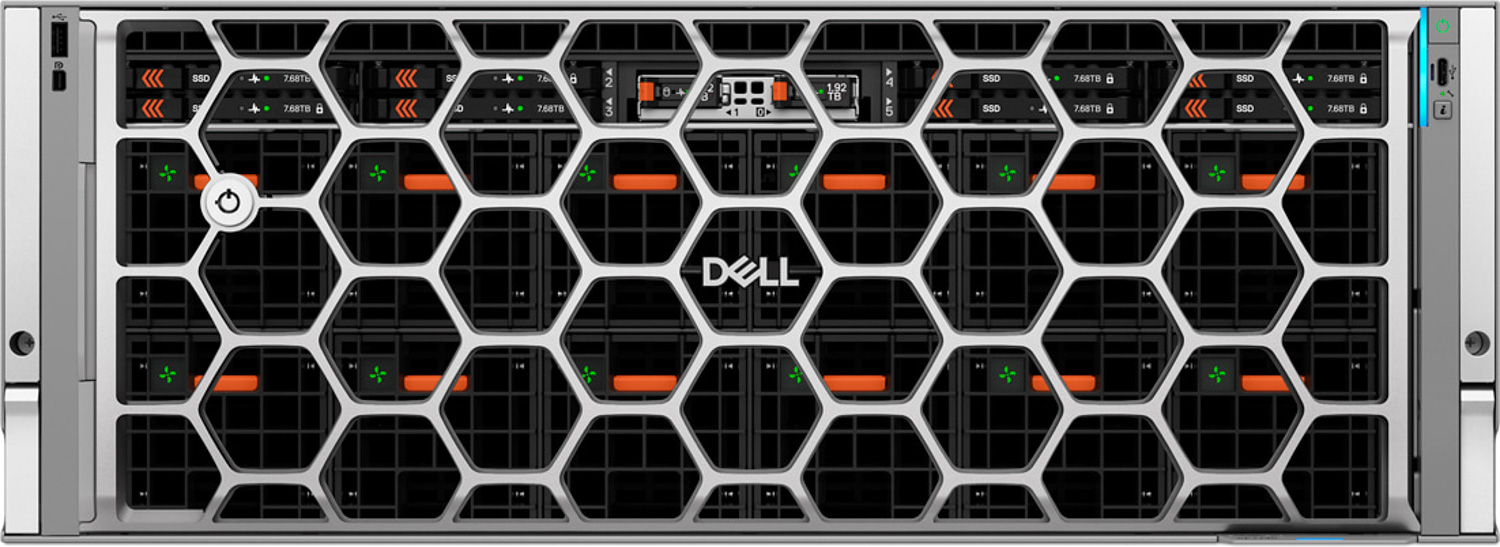

Picture a developer running a large in-memory database on a single server who suddenly needs twice the memory capacity. The HPE Compute Scale-up Server 3250 is pitched as an answer: with up to 12TB of DDR5 RAM, it pushes the limits of what a single-node system can handle while, according to HPE, keeping power draw and cost per GB in check.

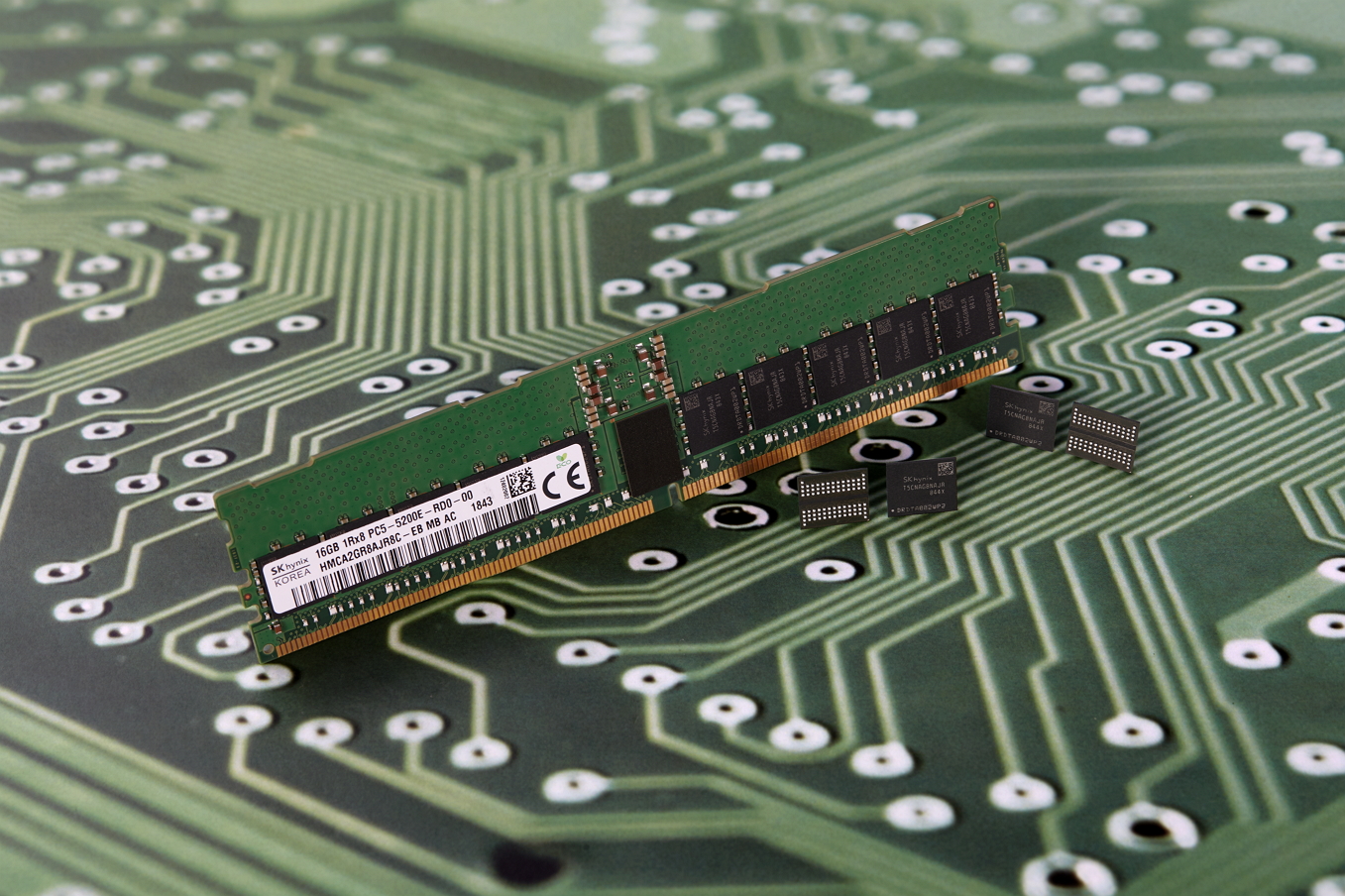

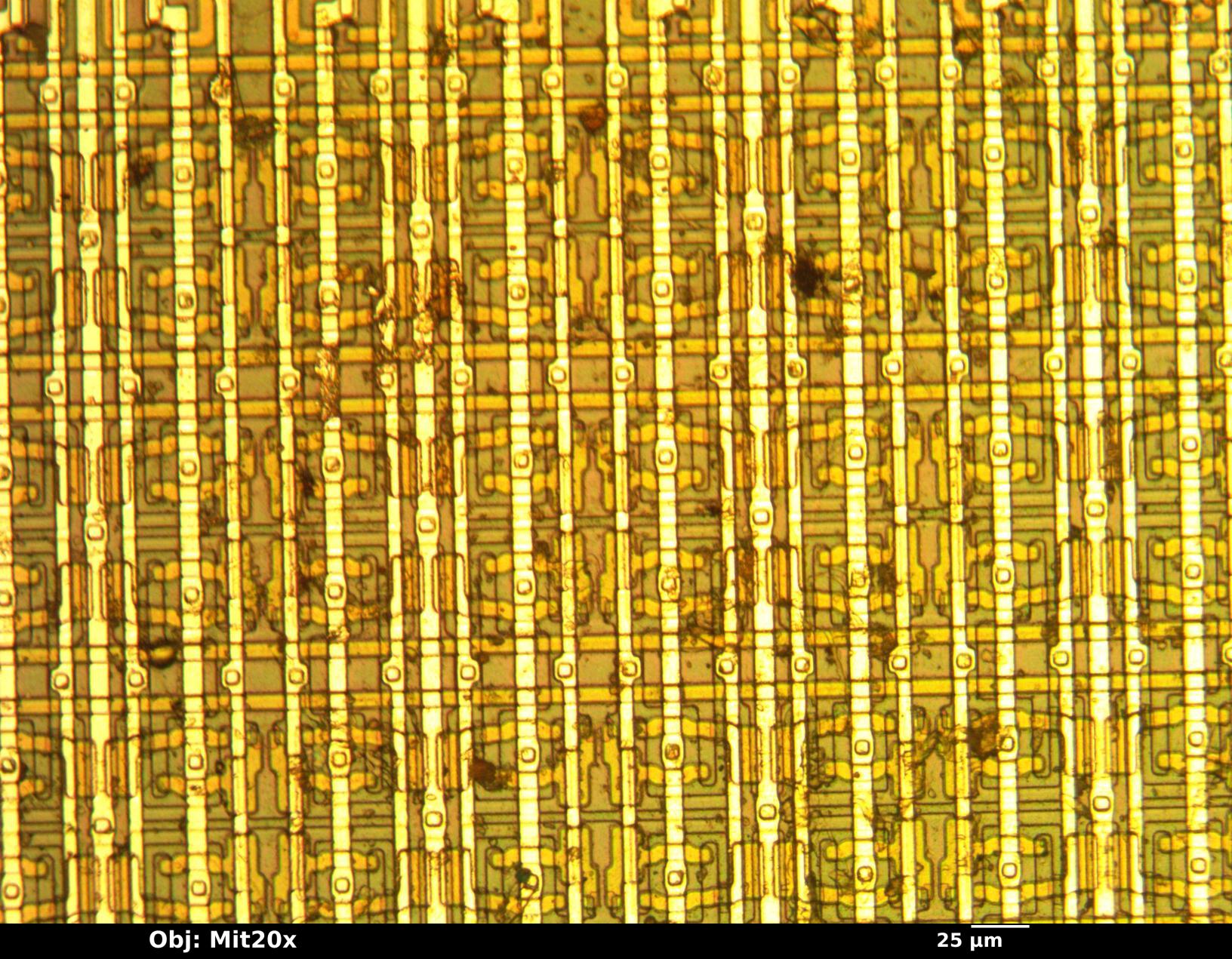

This isn’t just another server refresh. The 3250 is built for workloads that demand massive memory footprints: AI training, real-time analytics, and high-performance computing (HPC) simulations. It supports up to four Intel Xeon Scalable processors, either 5th Gen (Emerald Rapids) or 4th Gen (Sapphire Rapids), and scales RAM capacity from 1TB to 12TB in increments of 384GB per socket, using HPE’s latest 12-channel memory architecture. As a result, a single server can host datasets that once required distributed setups, potentially cutting operational costs while improving performance.

Specs and Scalability

- Up to 12TB DDR5 RAM (scaling in 384GB increments per socket, 12 memory channels)

- Four Intel Xeon Scalable processors (5th Gen or 4th Gen)

- Multi-socket design (up to four sockets) on HPE’s ProLiant Gen10+ platform

- Supports NVMe SSDs and PCIe 5.0 for storage and acceleration
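
As a rough sanity check on the memory figures above, the per-socket share is simply total capacity divided by socket count. The sketch below works that out for the quoted 12TB, four-socket maximum. The function names and the binary-unit convention (1TB = 1024GB) are assumptions made for illustration, not HPE's own sizing method.

```python
GB_PER_TB = 1024  # assumption: binary units (1TB = 1024GB)


def per_socket_gb(total_tb: float, sockets: int) -> float:
    """Memory in GB that each socket carries when total_tb is spread evenly."""
    if sockets <= 0:
        raise ValueError("sockets must be positive")
    return total_tb * GB_PER_TB / sockets


def increments_per_socket(total_tb: float, sockets: int, step_gb: float = 384) -> float:
    """Number of step_gb-sized increments per socket needed to reach total_tb."""
    if step_gb <= 0:
        raise ValueError("step_gb must be positive")
    return per_socket_gb(total_tb, sockets) / step_gb


if __name__ == "__main__":
    # Quoted maximum: 12TB across four sockets, scaled in 384GB steps.
    print(per_socket_gb(12, 4))          # 3072.0 GB, i.e. 3TB per socket
    print(increments_per_socket(12, 4))  # 8.0 increments of 384GB
```

Under these assumptions, the 12TB ceiling corresponds to 3TB per socket, or eight 384GB increments on each of the four sockets.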

HPE also describes the design as more power-efficient, claiming up to 30% better power utilization than previous generations at full load. That’s no small feat in an era where data centers are under pressure to balance performance with sustainability.

Why This Matters for Developers

The 3250 directly challenges the assumption that large-scale in-memory workloads require distributed systems or expensive custom hardware. By packing so much memory into a single node, HPE aims to simplify deployment while reducing the overhead of managing clusters. For AI researchers, this could mean faster iteration on models without the complexity of distributed training setups.

But there’s a reality check: not all workloads will benefit from this scale-up approach. Some AI tasks still rely on distributed computing for scalability beyond a single node’s memory limits. The 3250 is optimized for scenarios where memory bandwidth and capacity are the bottlenecks, not compute density.
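
One way to frame that decision is a simple capacity check: does the working set, plus some headroom, fit in one node? The following is a minimal sketch, not a sizing tool. The 12TB default comes from the figures quoted in this article, while the function name and the 20% headroom reserved for the OS and runtime overhead are hypothetical choices for illustration.

```python
import math


def nodes_required(working_set_tb: float,
                   node_capacity_tb: float = 12.0,
                   headroom: float = 0.2) -> int:
    """Smallest number of nodes whose usable memory holds the working set.

    headroom is the fraction of each node's memory held back (assumed value).
    """
    if working_set_tb < 0:
        raise ValueError("working_set_tb must be non-negative")
    if node_capacity_tb <= 0:
        raise ValueError("node_capacity_tb must be positive")
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in [0, 1)")
    usable_tb = node_capacity_tb * (1 - headroom)
    # At least one node is always needed, even for a tiny working set.
    return max(1, math.ceil(working_set_tb / usable_tb))


if __name__ == "__main__":
    for size_tb in (8, 10, 20):
        print(f"{size_tb}TB working set -> {nodes_required(size_tb)} node(s)")
```

A result of 1 suggests a scale-up candidate. Anything higher means the workload still has to be partitioned across nodes, which is the distributed case described above.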

HPE positions this as part of a broader shift toward more efficient, consolidated infrastructure—one that aligns with trends in edge AI and high-performance computing. Whether it becomes a standard-bearer depends on how quickly developers adopt single-node scale-up over distributed alternatives.

The 3250 will be available in select regions starting this quarter, with pricing expected to follow a per-GB cost model typical of HPE’s enterprise servers. For now, the big question remains: can it deliver on its promise of reducing operational costs without sacrificing performance in real-world deployments?