For years, developers chasing high-performance computing faced an unspoken rule: if your workflow demanded CUDA, your hardware had to bend. NVIDIA’s proprietary platform was the gold standard for AI and machine learning, and it tied users to NVIDIA GPUs with few practical alternatives. That barrier now looks a lot weaker, thanks to an unexpected player.

A Reddit user recently reported something remarkable: using an AI tool called Clawdbot, they translated an entire CUDA backend to AMD’s ROCm platform in under 30 minutes. No complex manual rewrites. No dedicated translation tools like AMD’s HIPIFY. Just a swap that, by the user’s account, preserved the core logic of the code. The result? A crack in NVIDIA’s once-impenetrable CUDA moat, and a surge in demand for Apple’s Mac mini, a machine that suddenly feels like the perfect fit for AI-driven development.
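
To give a sense of what the mechanical part of such a port involves, here is a minimal Python sketch that renames a handful of CUDA runtime identifiers to their HIP counterparts. It is a toy illustration only, not how Clawdbot or HIPIFY works internally; the mapping is a small hand-picked subset, and `rename_cuda_calls` and the sample snippet are hypothetical names made up for this example. Real ports also have to deal with libraries, build systems, and performance tuning.

```python
import re

# Illustrative subset of CUDA-to-HIP renames; a real port covers far more names.
CUDA_TO_HIP = {
    "cuda_runtime.h": "hip/hip_runtime.h",
    "cudaMalloc": "hipMalloc",
    "cudaMemcpy": "hipMemcpy",
    "cudaMemcpyHostToDevice": "hipMemcpyHostToDevice",
    "cudaMemcpyDeviceToHost": "hipMemcpyDeviceToHost",
    "cudaFree": "hipFree",
    "cudaDeviceSynchronize": "hipDeviceSynchronize",
}

# Word boundaries ensure only whole identifiers are replaced; longest names are
# listed first so a shorter name never shadows a longer one.
_PATTERN = re.compile(
    r"\b("
    + "|".join(re.escape(n) for n in sorted(CUDA_TO_HIP, key=len, reverse=True))
    + r")\b"
)


def rename_cuda_calls(source: str) -> str:
    """Replace known CUDA runtime identifiers with their HIP equivalents."""
    return _PATTERN.sub(lambda m: CUDA_TO_HIP[m.group(1)], source)


# Hypothetical fragment of host-side CUDA code, used only as sample input.
cuda_snippet = """#include <cuda_runtime.h>
cudaMalloc(&d_x, n * sizeof(float));
cudaMemcpy(d_x, h_x, n * sizeof(float), cudaMemcpyHostToDevice);
cudaDeviceSynchronize();
cudaFree(d_x);
"""

print(rename_cuda_calls(cuda_snippet))
```

Renaming like this is the easy part; the claim in the Reddit post is notable because a working backend also needs its kernels, dependencies, and build configuration to carry over.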

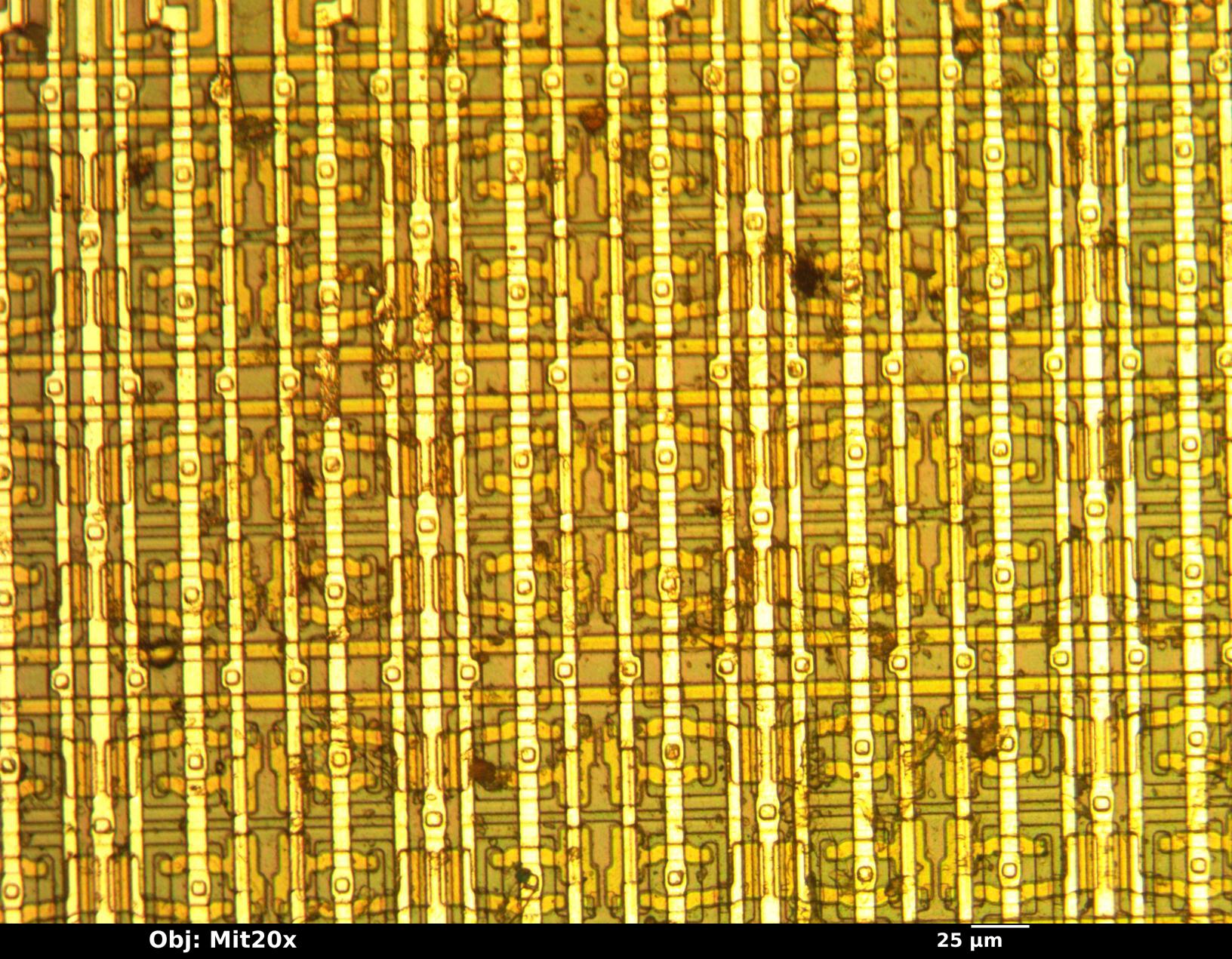

The implications are immediate. Apple’s compact, energy-efficient M-series chips have long been praised for their unified memory architecture, in which the CPU and GPU share a single pool of memory instead of copying data between system RAM and dedicated VRAM. A discrete GPU like NVIDIA’s RTX 4090 tops out at 24GB of VRAM. The M4 Pro Mac mini, by contrast, can be configured with up to 64GB of unified memory, so models that are too large for the 4090’s VRAM can still fit in memory on Apple’s machine. Apple’s silicon isn’t just competitive for light ML tasks; it is increasingly viable for serious workloads, including image processing, where CUDA was once indispensable.
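
The memory argument comes down to simple arithmetic, sketched below in Python. The model sizes, the bytes-per-parameter figures, and the 25% of memory reserved for the operating system, activations, and caches are all assumptions chosen for illustration, and `weights_gb` and `fits_in_memory` are hypothetical helper names, not part of any library.

```python
def weights_gb(params_billions: float, bytes_per_param: float) -> float:
    """Approximate size of model weights in GB (1 GB = 1e9 bytes)."""
    # (params_billions * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billions * bytes_per_param


def fits_in_memory(params_billions: float, bytes_per_param: float,
                   memory_gb: float, reserve_fraction: float = 0.25) -> bool:
    """True if the weights fit after reserving part of memory for everything else.

    reserve_fraction is an assumed allowance, not a measured figure.
    """
    usable_gb = memory_gb * (1 - reserve_fraction)
    return weights_gb(params_billions, bytes_per_param) <= usable_gb


# Hypothetical model sizes (billions of parameters) and precisions.
MODELS = [(7, 2.0), (13, 2.0), (30, 2.0), (30, 0.5)]  # (params, bytes per param)
MACHINES = [("RTX 4090, 24GB VRAM", 24), ("Mac mini, 64GB unified", 64)]

for name, memory_gb in MACHINES:
    for params, bpp in MODELS:
        verdict = "fits" if fits_in_memory(params, bpp, memory_gb) else "too large"
        size = weights_gb(params, bpp)
        print(f"{name}: {params}B params at {bpp} bytes each = {size:.0f}GB -> {verdict}")
```

Under these assumptions, a 13-billion-parameter model at 16-bit precision needs about 26GB for weights alone, which exceeds a 24GB card but fits comfortably in 64GB. Capacity is only half the story, though: memory bandwidth and raw compute still decide how fast the model actually runs.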

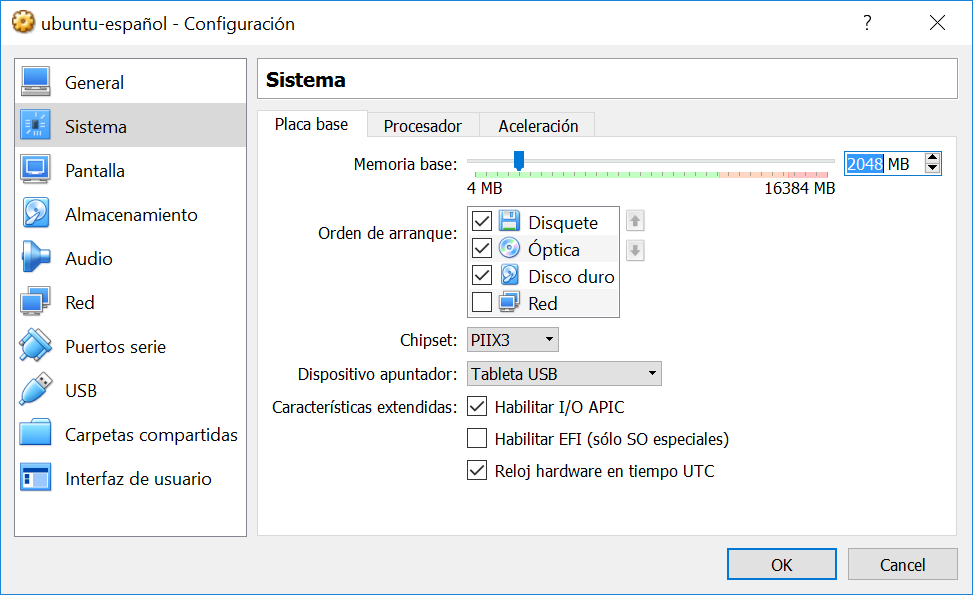

But the real game-changer isn’t just the hardware. It’s the software. With tools like Clawdbot automating conversions such as CUDA to ROCm, developers are less locked into NVIDIA’s tools, and the same approach could in principle target Apple’s own Metal and MLX stack. macOS Tahoe 26.2 reinforced this shift by adding Thunderbolt 5 support for MLX clusters, enabling bandwidth of up to 80Gb/s (roughly 10GB/s), well above the 10Gb/s of a typical 10-gigabit Ethernet link. Meanwhile, PyTorch and TensorFlow can both reach Apple’s GPU through Metal, PyTorch via its MPS (Metal Performance Shaders) backend and TensorFlow via Apple’s Metal plugin, all while running natively on Apple silicon. How close that gets to CUDA performance depends heavily on the workload.
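
In practice, much PyTorch code can be made portable with a small device-selection step. The sketch below assumes a reasonably recent PyTorch build; `pick_device` is a hypothetical helper name, and the import is guarded so the snippet still runs (falling back to the CPU) on a machine without PyTorch installed.

```python
try:
    import torch
except ImportError:  # keep the sketch runnable on machines without PyTorch
    torch = None


def pick_device() -> str:
    """Return "cuda", "mps", or "cpu" depending on what this machine offers."""
    if torch is None:
        return "cpu"
    if torch.cuda.is_available():
        # Reported by NVIDIA CUDA builds and also by AMD ROCm builds of PyTorch.
        return "cuda"
    mps = getattr(torch.backends, "mps", None)  # absent in older PyTorch releases
    if mps is not None and mps.is_available():
        return "mps"  # Apple silicon GPU via Metal
    return "cpu"


device = pick_device()
print(f"Using device: {device}")

if torch is not None:
    # The same tensor code runs unchanged on whichever device was selected.
    x = torch.ones(2, 3, device=device)
    print(x.sum().item())
```

Code written this way never names CUDA directly outside the selection step, which is what makes moving between NVIDIA, AMD, and Apple hardware a configuration question instead of a rewrite. Custom CUDA kernels are the exception and still need an actual port.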

The fallout is already visible. Social media buzz suggests Apple’s Mac mini inventory is selling fast, with coders, particularly those in the ‘vibe coding’ community, rushing to adopt the machine. The irony? Apple, a company that has kept NVIDIA GPUs out of the Mac for years, is now benefiting from a tool that chips away at NVIDIA’s strongest advantage. For developers, the message is simple: if your workflow can be ported off CUDA, it is no longer tied to NVIDIA hardware. One caveat applies: ROCm targets AMD GPUs, so running on a Mac still means targeting Metal or MLX. With tools like Clawdbot handling the heavy lifting, that kind of transition is faster than ever.

What does this mean for the future? For one, it accelerates Apple’s push into AI and ML markets, where its unified memory architecture and Metal-based frameworks are now more attractive than ever. NVIDIA still dominates high-end AI training, but for inference tasks and smaller-scale projects, Apple’s Mac mini is suddenly a compelling alternative, especially on price. The RTX 4090, for instance, launched at $1,599 for the card alone, while the Mac mini starts at $599 with the M4 chip and $1,399 with the M4 Pro as a complete computer (the 64GB configuration costs more). For developers working with 1080p image processing or lightweight AI models, the Mac mini is easy to justify.

There’s also a broader cultural shift. Tools like Clawdbot democratize access to high-performance computing, reducing the time and expertise required to switch ecosystems. A developer who once spent weeks porting CUDA code may now get a working first pass in minutes. That efficiency gap, between those who know about tools like Clawdbot and those who don’t, could reshape industries, much like the early days of cloud computing. The question now isn’t just whether Apple’s Mac mini can handle AI workloads. It’s whether the rest of the industry is ready to adapt.

One thing is certain: Apple’s Mac mini has found a new audience. And for the first time, CUDA isn’t the gatekeeper it once was.