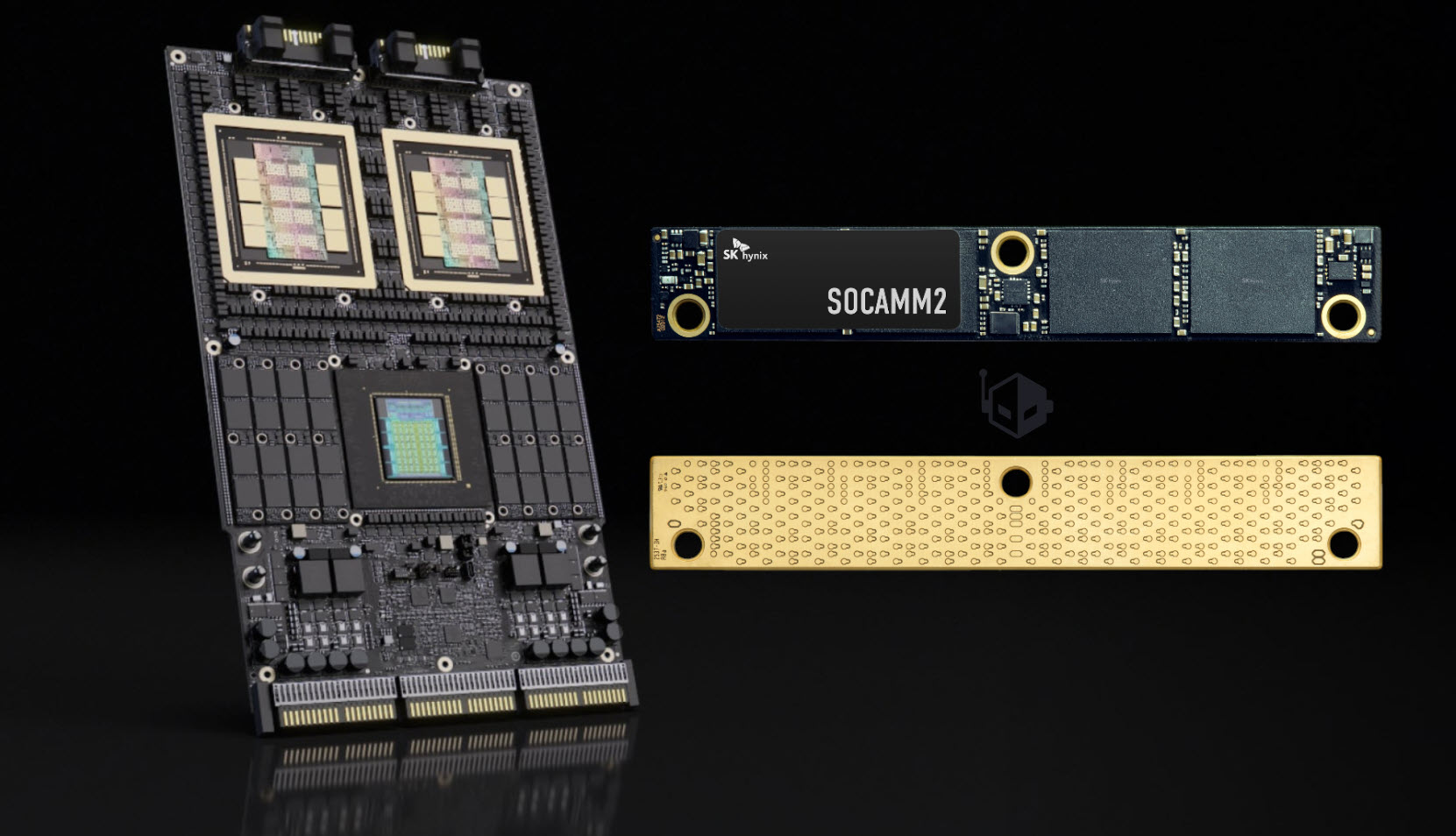

NVIDIA’s next-generation AI chip, codenamed Vera Rubin, has taken a significant step forward with the mass production of a specialized memory module that could redefine training speeds in high-end data centers.

The module, developed by SK Hynix and designed specifically for NVIDIA’s architecture, packs 192 GB of capacity into a single unit while delivering twice the bandwidth of previous generations. This leap is critical for AI workloads, where memory throughput often becomes a bottleneck—especially in models that rely on massive datasets.

Why Bandwidth Matters

Traditional HBM (High Bandwidth Memory) modules typically offer around 1.2 TB/s of bandwidth per stack. The new SOCAMM2 module, however, pushes this to approximately 2.4 TB/s—nearly doubling the data transfer rate without increasing power draw significantly. For AI training, this means faster iterations through large datasets, which translates to quicker model convergence and potentially lower operational costs.

Engineering Tradeoffs

The jump in bandwidth isn’t just about raw speed; it’s also a result of careful engineering tradeoffs. SK Hynix had to balance higher data rates with thermal constraints, ensuring the module remains stable even when pushed to its limits. This is particularly important for NVIDIA’s Vera Rubin, which is designed for large-scale deployments where heat management can be as critical as performance.

Looking Ahead

While this module is a major milestone, it’s just one piece of the puzzle for NVIDIA’s AI strategy. The Vera Rubin chip itself is expected to leverage this memory to deliver breakthroughs in efficiency, particularly in scenarios where power consumption is a priority. For data center operators, this could mean more effective cooling requirements and longer runtimes without compromising performance.

At a Glance

- Capacity: 192 GB (single module)

- Bandwidth: ~2.4 TB/s (double previous generation)

- Use Case: AI training accelerators, large-scale data centers

- Key Benefit: Faster training cycles, lower power overhead

The module’s mass production marks a turning point for NVIDIA’s AI roadmap. With this building block in place, the focus now shifts to how Vera Rubin will integrate it into its architecture—something that could set new benchmarks for efficiency and speed in the coming years.