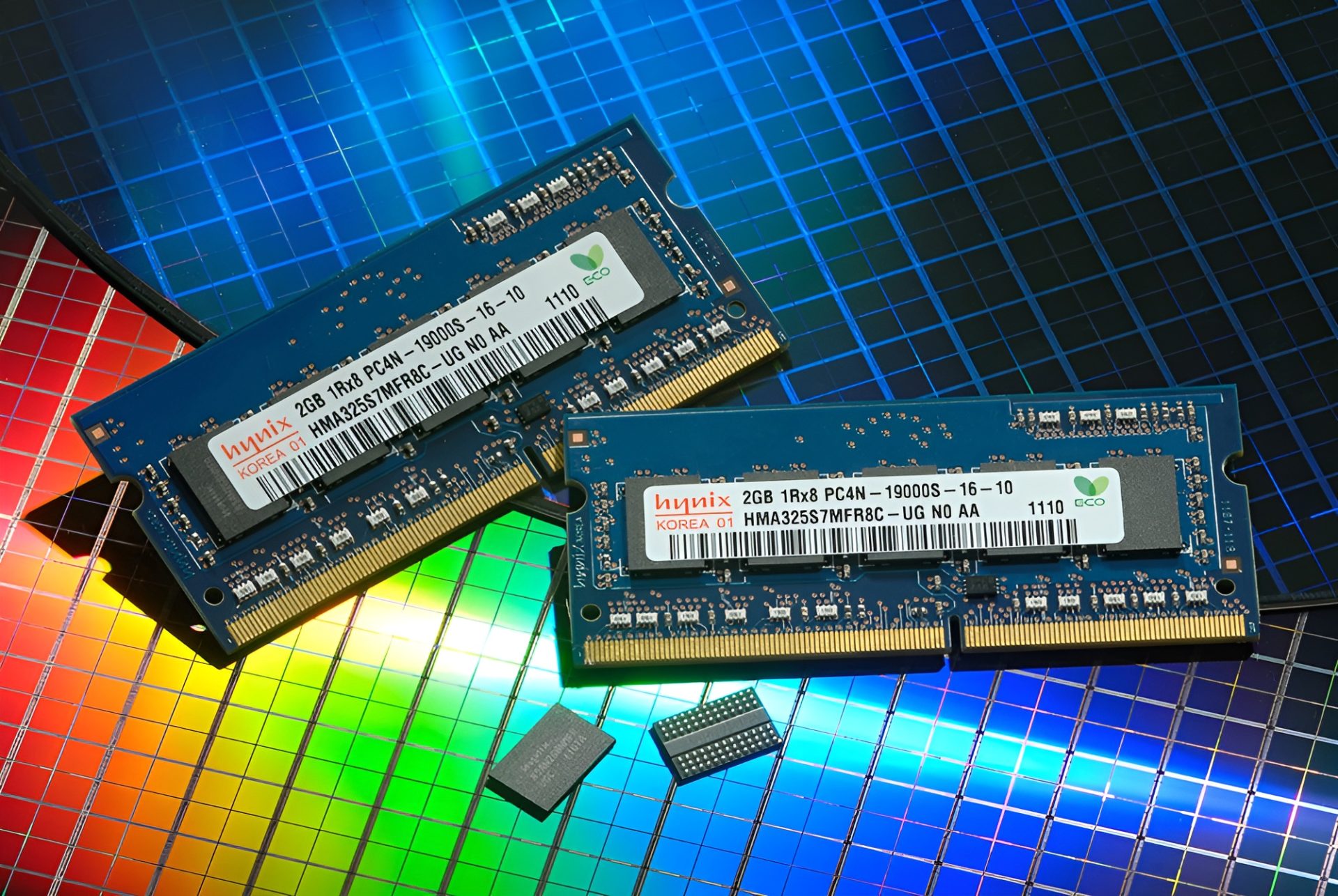

AMD's market share in the data center has grown to nearly 40 percent, surpassing Intel for the first time in Q1. The change reflects deeper trends: hyperscalers are prioritizing CPUs over GPUs for AI workloads, a shift that could reshape how cloud providers build and scale infrastructure.

The surge comes as AI models demand more compute power, but not always from graphics processors. Traditional CPUs—long seen as the backbone of general workloads—are now being repurposed for tasks once dominated by GPUs. This rebalancing isn't just about raw performance; it's about efficiency, cost, and how workloads are distributed across data center hardware.

Hyperscalers like Microsoft Azure, Amazon Web Services, and Google Cloud have quietly accelerated their adoption of AMD's EPYC processors. The shift is driven by two factors: the better price-to-performance ratio of AMD's chips, and a growing realization among cloud providers that AI workloads don't always need GPU acceleration to run efficiently.

For creators building applications or training models on these platforms, the implications are twofold. First, the cost of CPU-based AI workloads may drop as hyperscalers pass savings on to users. Second, developers will need to adapt their code to leverage AMD's architecture more effectively, a process that could take time but may pay off in long-term efficiency gains.

The shift also raises questions about AMD's roadmap. The company has been aggressive in expanding its data center footprint, but can it sustain this momentum without alienating GPU-focused AI researchers? For now, the focus remains on CPUs—but the future of AI in the cloud may depend on how well both CPU and GPU vendors can integrate.

The most important change is clear: hyperscalers are no longer treating CPUs and GPUs as distinct categories for AI. The era of 'one size fits all' hardware is ending, and the data center is becoming more specialized—and more competitive.