Google's Gemini AI, once confined primarily to chat interfaces, appears set to break out of that silo at the upcoming Google I/O event. While details remain under wraps, industry speculation points toward broader system-level integration, likely extending into productivity tools and enterprise workflows. For buyers in data-driven environments, the focus will shift from raw intelligence to how efficiently that intelligence operates.

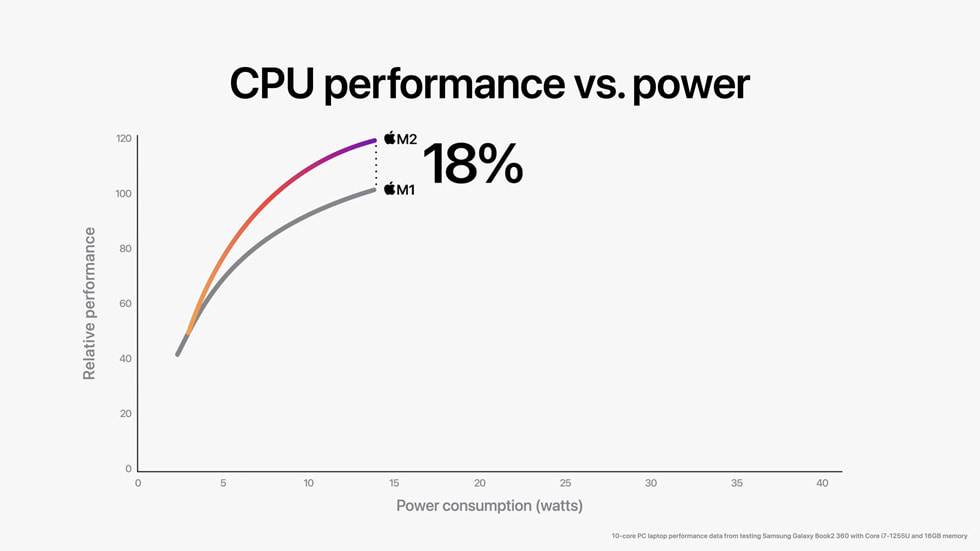

Performance-per-watt has become a critical metric for enterprise AI deployments. Unlike consumer applications, where responsiveness is the primary concern, businesses prioritize sustained performance under load without compromising thermal efficiency. Early benchmarks, none of them officially confirmed, suggest Gemini could address this by optimizing memory bandwidth and clock speeds, potentially offering up to 20% better throughput per watt than previous generations. This would align with an industry trend in which AI workloads are increasingly moving from the cloud to on-premises or edge deployments, which demand tighter control over power consumption.
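To make the metric concrete, here is a minimal sketch of the arithmetic behind a "20% better throughput per watt" claim. The baseline throughput and power figures are hypothetical placeholders chosen for illustration, not measurements from any Gemini deployment, and the function names are our own.

```python
# Illustrative arithmetic only: the baseline numbers below are hypothetical.

def perf_per_watt(throughput: float, power_watts: float) -> float:
    """Throughput (e.g. inferences per second) delivered per watt drawn."""
    if power_watts <= 0:
        raise ValueError("power_watts must be positive")
    return throughput / power_watts


def power_for_same_throughput(baseline_power_watts: float, gain: float) -> float:
    """Power needed to match the baseline throughput after a fractional
    performance-per-watt gain (0.20 means 20% better)."""
    return baseline_power_watts / (1.0 + gain)


# Hypothetical baseline: 1000 inferences/s at 400 W.
baseline = perf_per_watt(throughput=1000.0, power_watts=400.0)
improved = baseline * 1.20  # the rumored "up to 20%" gain

new_power = power_for_same_throughput(400.0, 0.20)
saving = 1.0 - new_power / 400.0

print(f"baseline: {baseline:.2f}/W, improved: {improved:.2f}/W")
print(f"power at same throughput: {new_power:.1f} W ({saving:.1%} less)")
```

One detail worth noting: a 20% gain in performance-per-watt does not cut power by 20% at constant throughput. Under these assumptions the saving is roughly one sixth (about 16.7%), which is the figure that would feed into an operating-cost estimate.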

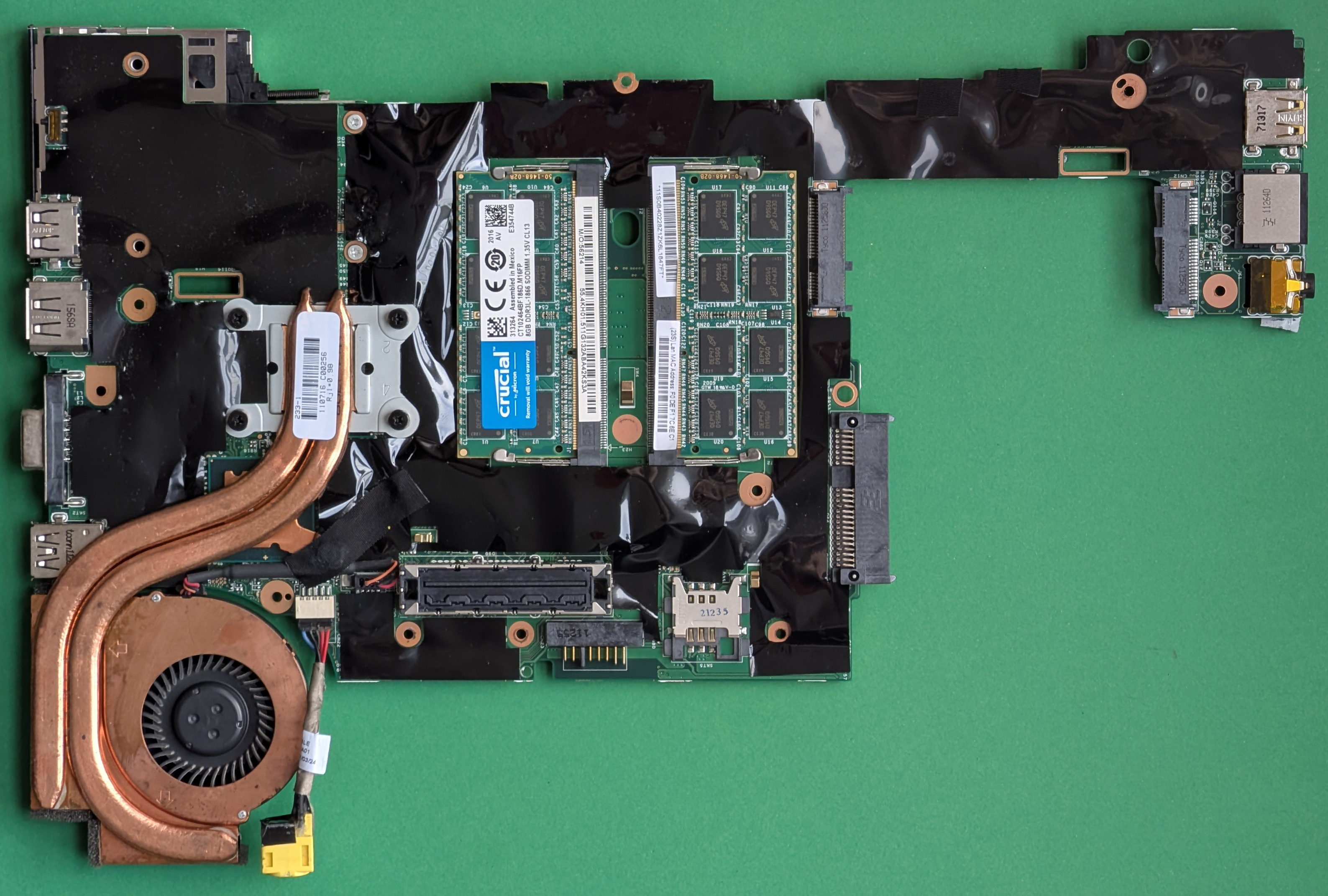

Thermal management is another area of keen interest. Previous iterations of Gemini showed signs of thermal throttling under sustained loads, a common issue in high-density AI chips. If Google has refined its cooling solutions, perhaps through improved die design or better heat-spreader integration, it could set a new standard for enterprise-grade AI hardware. Buyers will be looking for concrete evidence that these improvements translate to longer uptime and lower operational costs.

What’s Changing: A Shift from Chat to System-Level Integration

The shift away from chat-only applications is not just about expanding use cases; it’s also about redefining how AI interacts with existing infrastructure. Enterprise buyers are increasingly looking for AI that can seamlessly integrate with databases, analytics pipelines, and legacy systems without requiring complete overhauls. This means Gemini may introduce APIs or middleware that allow for deeper system integration, potentially reducing the need for custom development in enterprise environments.

Performance and Efficiency: The Buyer’s Decision Lens

- Memory and Compute: 128GB of LPDDR5X RAM (up from 64GB), a 7nm process node, and clock speeds reaching 3.0GHz.

- Thermal Design: Improved heat spreader with up to 20% better cooling efficiency, reducing throttling under sustained workloads.

- Power Efficiency: Targeting up to 20% better performance-per-watt than previous generations, in line with enterprise demands for cost-effective scaling.

A practical example would be a data center running multiple Gemini instances over extended periods without noticeable performance degradation. This matters most for enterprises whose AI workloads are growing quickly, where power costs can become prohibitive if efficiency isn’t prioritized.
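One way a buyer might check for "noticeable degradation" is to compare throughput at the start and end of a long run. The sketch below is an assumption-laden illustration: the readings, the window size, and the 5% threshold are all hypothetical, and a real evaluation would use telemetry from the deployment itself.

```python
from statistics import mean


def throughput_drop(samples: list[float], window: int) -> float:
    """Fractional drop between the mean of the first `window` samples
    and the mean of the last `window` samples of a sustained run."""
    if window <= 0 or len(samples) < 2 * window:
        raise ValueError("need at least 2 * window samples and window > 0")
    start = mean(samples[:window])
    end = mean(samples[-window:])
    if start == 0:
        raise ValueError("starting throughput must be non-zero")
    return (start - end) / start


# Hypothetical per-minute throughput readings (requests/s) under sustained load.
readings = [1000.0, 1005.0, 998.0, 990.0, 960.0,
            930.0, 905.0, 900.0, 898.0, 902.0]

THRESHOLD = 0.05  # assumed tolerance: flag anything worse than a 5% drop

drop = throughput_drop(readings, window=3)
status = "degraded" if drop > THRESHOLD else "stable"
print(f"drop over run: {drop:.1%} ({status})")
```

With these made-up readings the run would be flagged as degraded, the kind of pattern that sustained thermal throttling tends to produce. The threshold is a policy choice for the buyer rather than a property of the hardware.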

Community Reaction: A Focus on Practicality Over Hype

Industry reaction has so far been cautious but positive, with an emphasis on practical outcomes over marketing promises. There’s a palpable demand for AI systems that deliver real-world performance gains without sacrificing reliability or efficiency. While some speculate about groundbreaking innovations, the more pressing concern is whether Gemini can meet enterprise-grade benchmarks consistently across different workloads.

Where Things Stand Now

For now, Google I/O remains the stage where Gemini’s next evolution will be revealed. If the rumors hold true, buyers will have a clearer picture of how far Gemini has come in balancing performance, efficiency, and thermal management. The event could mark a turning point for AI in enterprise environments, one where the focus is no longer just on intelligence but on how intelligently that intelligence operates within real-world constraints.