As demand for AI workloads surges, two industry leaders are combining forces to build the infrastructure needed to support it. NVIDIA has announced a $2 billion investment in CoreWeave, reinforcing the companies’ collaboration to accelerate the deployment of AI factories: massive data centers optimized for training and deploying AI models.

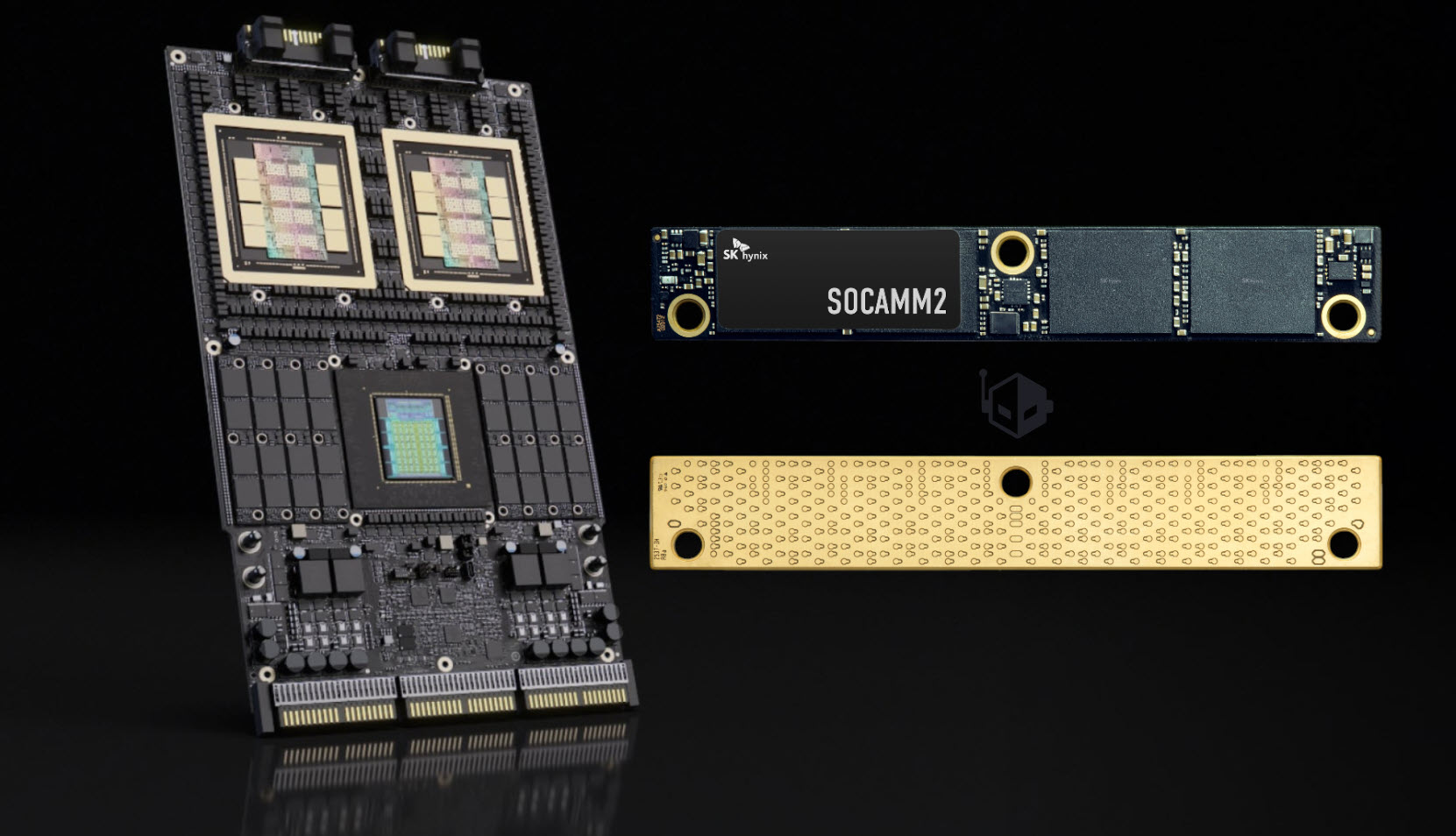

The partnership marks a significant expansion of the companies’ existing relationship, with CoreWeave set to be an early adopter of NVIDIA’s next-generation platforms, including the Rubin platform, Vera CPUs, and BlueField storage systems. NVIDIA will also test and validate CoreWeave’s AI-native software, such as SUNK and Mission Control, against its latest architectures, with the potential to incorporate these tools into NVIDIA’s broader reference architectures for cloud providers and enterprises.

NVIDIA’s financial backing will also help CoreWeave secure the land, power, and infrastructure it needs to scale its AI factories more rapidly. By combining NVIDIA’s hardware expertise with CoreWeave’s operational efficiency, the two companies aim to meet growing demand for AI compute, with CoreWeave targeting more than 5 gigawatts of AI factory capacity by 2030.

The collaboration addresses a core challenge in AI development: the need for scalable, high-performance infrastructure that can handle the computational demands of large-scale models. Traditional data centers often struggle with inefficiencies in power, cooling, and software integration, slowing down AI innovation. By combining CoreWeave’s AI-optimized cloud platform with NVIDIA’s accelerated computing technology, the partnership aims to create a more streamlined and cost-effective solution for enterprises and cloud service providers.

Key components of the expanded partnership include:

- Early adoption of NVIDIA’s latest hardware, including the Rubin platform, Vera CPUs, and BlueField storage systems, to ensure CoreWeave’s infrastructure remains cutting-edge.

- Testing and validation of CoreWeave’s AI-native software, with potential integration into NVIDIA’s reference architectures for cloud partners.

- Accelerated procurement of land, power, and infrastructure, leveraging NVIDIA’s financial strength to scale AI factories more efficiently.

The investment reflects NVIDIA’s confidence in CoreWeave’s ability to execute at scale, particularly as AI transitions from research labs to large-scale production environments. With NVIDIA’s Blackwell architecture already recognized for its cost efficiency in inference workloads, the partnership positions CoreWeave to deliver high-performance AI infrastructure tailored to enterprise and cloud needs.

Both companies will focus on deploying multiple generations of NVIDIA’s infrastructure across CoreWeave’s platform, ensuring compatibility with evolving AI workloads. The goal is to create a unified ecosystem where software, hardware, and operations are designed in tandem, reducing friction for customers deploying AI models at scale. This alignment is critical as AI systems move from experimental phases into widespread adoption across industries.

For enterprises and cloud providers, the partnership could mean faster access to high-performance AI infrastructure, lower operational costs, and greater flexibility in deploying complex AI workloads. The collaboration also underscores the growing importance of specialized AI factories—dedicated data centers built specifically to meet the unique demands of machine learning and generative AI.

The $2 billion investment, a purchase of CoreWeave stock at $87.20 per share, signals a strategic bet on CoreWeave’s growth trajectory. As AI becomes increasingly central to business operations, partnerships like this are likely to play a key role in determining who can deliver the infrastructure needed to support the next wave of innovation.
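For a sense of scale, the stated price implies the size of NVIDIA’s stake. This is a rough estimate that assumes the full $2 billion went toward shares at exactly $87.20 each, ignoring any fees or other deal terms not described here:

$$
\text{shares} \approx \frac{\$2{,}000{,}000{,}000}{\$87.20 \text{ per share}} \approx 22{,}935{,}780 \approx 22.9 \text{ million}
$$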