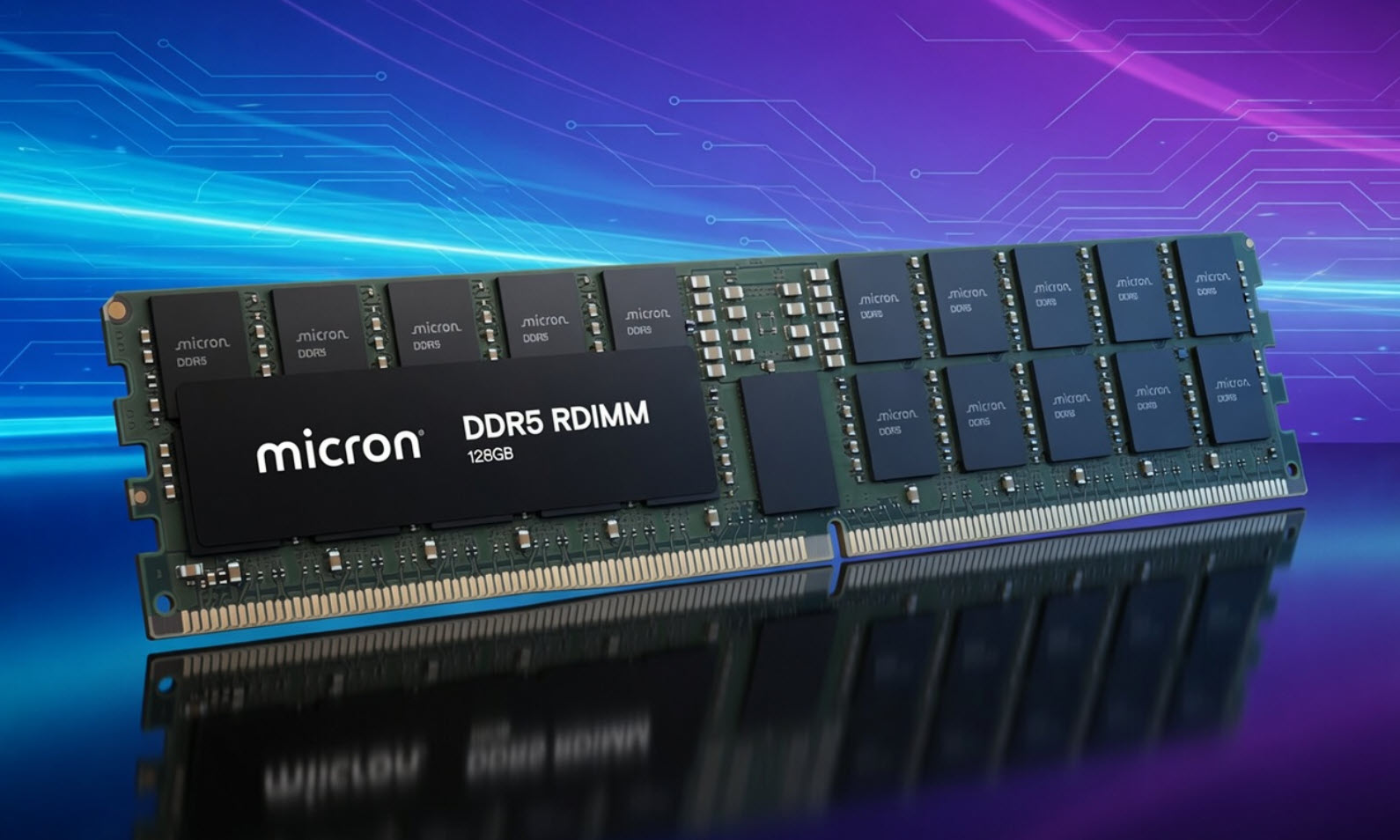

For data centers and AI applications, memory speed and capacity are no longer just incremental improvements; they’re leaps. Micron’s latest move underscores this shift: 256 GB DDR5 RDIMMs now operate at 9200 MT/s, a jump of roughly 40% over today’s top modules. This isn’t just faster memory; it’s a redefinition of what AI systems can handle.

Traditionally, server memory has followed a path of gradual evolution: slightly more capacity, modest speed bumps, and incremental efficiency gains. But AI workloads demand something different: a break from the past, where every cycle counts and memory bandwidth and latency shape how quickly a model can be fed with data. Micron’s new modules don’t just fit into this narrative; they accelerate it.

What These Modules Mean for AI

The 256 GB DDR5 RDIMMs are designed to address the growing hunger of large language models and high-performance computing tasks. Unlike consumer-grade memory, these are registered DIMMs (RDIMMs), which buffer command and address signals to improve stability and reliability in server environments. The 9200 MT/s data rate means more data can move through the system per second, reducing the time it takes to train models or process complex queries.

Key Specifications

- Capacity: 256 GB (double the previous generation)

- Speed: 9200 MT/s (40% faster than current modules)

- Form Factor: DDR5 RDIMM (registered DIMM for server stability)

- Voltage: 1.1 V (standard for DDR5)

The increase in speed and capacity is particularly notable when compared to the previous generation of DDR5 modules, which maxed out at around 6400 MT/s with half the capacity. This shift isn’t just about raw performance; it’s about enabling new architectures that were previously impossible or prohibitively slow.
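To put those figures in perspective, here is a minimal back-of-the-envelope sketch of the peak theoretical bandwidth per module at each data rate. It assumes the standard 64-bit DDR5 data path per DIMM (two 32-bit subchannels, ECC bits excluded); the function names are illustrative, and real-world throughput will be lower and depends on the platform.

```python
# Assumption: a DDR5 DIMM moves 64 data bits (8 bytes) per transfer,
# split across two 32-bit subchannels. ECC bits are not counted.
DATA_BUS_BYTES = 8


def peak_bandwidth_gbs(mt_per_s: int) -> float:
    """Peak theoretical bandwidth in GB/s (decimal) for a data rate in MT/s."""
    # MT/s * bytes per transfer = MB/s; divide by 1000 to get GB/s.
    return mt_per_s * DATA_BUS_BYTES / 1000


def percent_gain(new: float, old: float) -> float:
    """Relative increase of `new` over `old`, in percent."""
    return (new - old) / old * 100


new_rate, old_rate = 9200, 6400  # MT/s, figures quoted in the article

print(f"{old_rate} MT/s -> {peak_bandwidth_gbs(old_rate):.1f} GB/s per module")
print(f"{new_rate} MT/s -> {peak_bandwidth_gbs(new_rate):.1f} GB/s per module")
print(f"Data-rate gain: {percent_gain(new_rate, old_rate):.2f}%")
```

Under these assumptions, the step from 6400 MT/s to 9200 MT/s works out to 51.2 GB/s versus 73.6 GB/s of peak bandwidth per module, a gain of 43.75%, which is consistent with the roughly 40% figure quoted above.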

For buyers, the immediate question is what this means for pricing and availability. While Micron hasn’t announced a specific launch date, industry trends suggest these modules will first appear in high-end AI data centers before trickling down to enterprise servers. Pricing will likely reflect their specialized nature, with costs significantly higher than consumer DDR5 kits.

Looking Ahead: What’s Next for Memory?

The timeline for widespread adoption isn’t clear yet, but the trajectory is obvious. As AI models grow larger and more complex, memory systems will need to keep pace, not just in speed but in scalability. Micron’s move today sets a new baseline, but it won’t be the last. The next wave of innovation could bring even higher capacities or new form factors tailored for AI-specific workloads.

For now, buyers should watch for updates on when these modules will hit the market and how they’ll integrate with existing server architectures. The race to optimize memory for AI isn’t just about speed; it’s about rethinking how data moves through systems entirely. And that’s a challenge worth following.