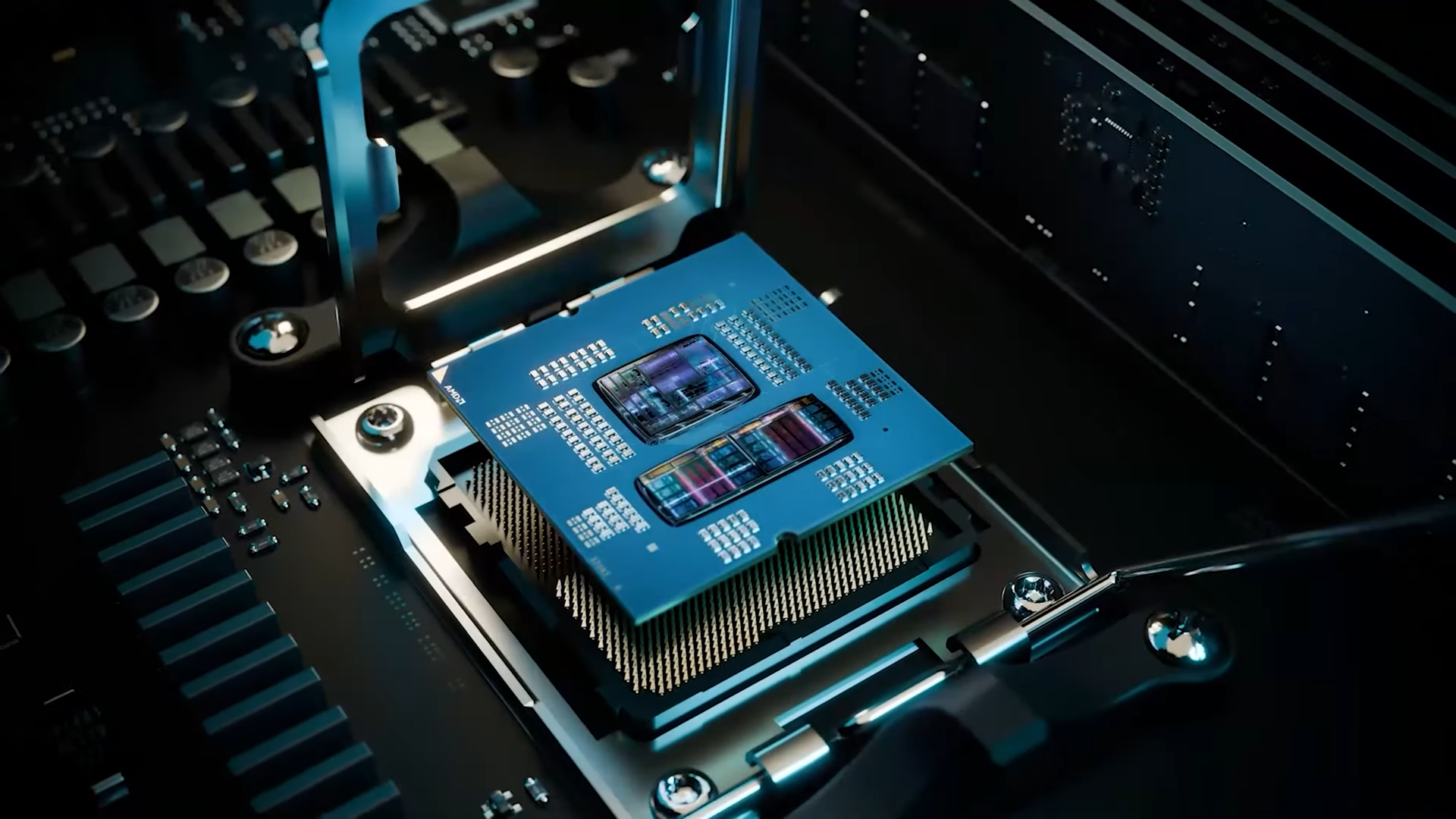

The Intel Xeon 6 series marks a significant step in cloud infrastructure by making Advanced Matrix Extensions (AMX) a standard part of its Performance-core (P-core) processors. AMX first shipped in 4th Gen Xeon Scalable processors (Sapphire Rapids), and Xeon 6 carries it forward. Rather than relying on clock speed improvements alone, the design provides hardware-level acceleration for the matrix operations at the heart of AI workloads. Many data-intensive tasks, inference in particular, can therefore run efficiently on the CPU without separate acceleration units such as GPUs or FPGAs.

For cloud providers, the implications are substantial. Clusters built from a single server type can take on AI workloads alongside general-purpose compute. This simplifies deployment by avoiding heterogeneous hardware stacks, which often require careful optimization and maintenance for each device type. As a result, performance scales more predictably with server count, reducing operational overhead while maintaining high throughput.

The processor itself is built on a foundation that balances power and efficiency. The configuration described here has 12 cores and 24 threads, with a base clock speed of 2.0 GHz that adjusts dynamically to workload demands. It supports DDR5 memory at speeds up to 4800 MT/s, providing high bandwidth for data-intensive applications. The thermal design power (TDP) is capped at 120W, which suits dense data center environments where power consumption and cooling are critical factors. Core counts, clock speeds, and power limits vary considerably across the Xeon 6 family, so these figures describe one configuration and not the ceiling for the series.
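To put the DDR5 figure in perspective, the theoretical peak bandwidth of a memory channel is the transfer rate multiplied by the bus width. The sketch below is a back-of-the-envelope calculation, not a measurement. It assumes a standard 64-bit data bus per DDR5 channel; the function name and the `channels` parameter are illustrative, since the article does not state how many channels the processor has.

```python
def peak_bandwidth_gb_s(transfer_rate_mt_s: float,
                        channels: int = 1,
                        bus_width_bits: int = 64) -> float:
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes).

    transfer_rate_mt_s: memory speed in megatransfers per second.
    channels:           number of populated memory channels (assumption;
                        not stated in the article).
    bus_width_bits:     data width of one channel, 64 bits for DDR5.
    """
    bytes_per_transfer = bus_width_bits / 8
    bytes_per_second = transfer_rate_mt_s * 1e6 * bytes_per_transfer * channels
    return bytes_per_second / 1e9


if __name__ == "__main__":
    per_channel = peak_bandwidth_gb_s(4800)
    print(f"DDR5-4800, one channel: {per_channel:.1f} GB/s")
```

At 4800 MT/s a single channel tops out at 38.4 GB/s in theory. Sustained bandwidth in practice is lower and depends on access patterns and the number of populated channels.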

However, the true innovation lies in AMX. The extension adds dedicated tile registers and a matrix-multiply unit to each core, so operations such as matrix multiplication run on specialized hardware inside the core instead of through general-purpose vector instructions. This can significantly improve performance for machine learning inference and large-scale analytics, particularly at reduced precisions such as INT8 and BF16. While this feature promises to make AI capabilities accessible to businesses without specialized accelerator hardware, its effectiveness hinges on software optimization. Applications do not benefit automatically: code has to issue AMX instructions, usually through optimized libraries and frameworks, and some applications will need updates or rewrites to take full advantage of them. This dependency on software readiness is a potential barrier to adoption, particularly for smaller businesses that lack the resources to invest in optimization.
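Because the benefit depends on both hardware and software support, a reasonable first step is to check whether a given server exposes AMX at all. The following is a minimal sketch that assumes a Linux host, where the kernel lists the CPU feature flags `amx_tile`, `amx_int8`, and `amx_bf16` in `/proc/cpuinfo`. The function names are illustrative and not part of any Intel tool.

```python
from pathlib import Path

# CPU feature flags that Linux reports for AMX-capable processors.
AMX_FLAGS = ("amx_tile", "amx_int8", "amx_bf16")


def amx_flags_from_cpuinfo(cpuinfo_text: str) -> dict[str, bool]:
    """Report which AMX flags appear in the first 'flags' line of cpuinfo text."""
    flags: set[str] = set()
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            flags.update(value.split())
            break  # the first logical CPU is enough for a quick check
    return {name: name in flags for name in AMX_FLAGS}


def detect_amx(path: str = "/proc/cpuinfo") -> dict[str, bool]:
    """Read cpuinfo from disk; report no support if the file is unavailable."""
    try:
        text = Path(path).read_text()
    except OSError:
        # Non-Linux host or restricted environment: treat as "not detected".
        return {name: False for name in AMX_FLAGS}
    return amx_flags_from_cpuinfo(text)


if __name__ == "__main__":
    for flag, present in detect_amx().items():
        print(f"{flag}: {'yes' if present else 'no'}")
```

A positive result only confirms that the hardware and kernel expose AMX. Whether a workload actually uses it still depends on the libraries and frameworks it is built on.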

Cost is another factor to consider. Although the Xeon 6 series reduces the need for separate acceleration cards in many scenarios, the long-term savings will depend on how quickly software vendors adopt these capabilities. For smaller enterprises, the transition could offer lower upfront costs but may come with a learning curve when integrating new workflows into existing infrastructure. The financial benefits will likely materialize over time as more applications are optimized for AMX, but this transition period could pose challenges.

The broader significance of the Xeon 6 series is that it treats AI acceleration as a standard feature rather than an optional add-on. Cloud providers can deploy homogeneous server clusters and still meet performance targets for many AI workloads, while businesses gain the flexibility to scale without overhauling their infrastructure. This move could set a new benchmark for enterprise computing, where AI-optimized hardware is no longer a luxury but a necessity for maintaining competitive advantage.

For early adopters, the rewards could be substantial. The Xeon 6 series lays the groundwork for a future where AI acceleration is seamlessly integrated into server architectures, reducing complexity and improving efficiency. However, the success of this transition will depend on the pace of software adoption and the willingness of businesses to adapt their workflows. As the industry moves forward, the Xeon 6 series stands as a testament to Intel’s commitment to reshaping cloud infrastructure in an AI-driven world.