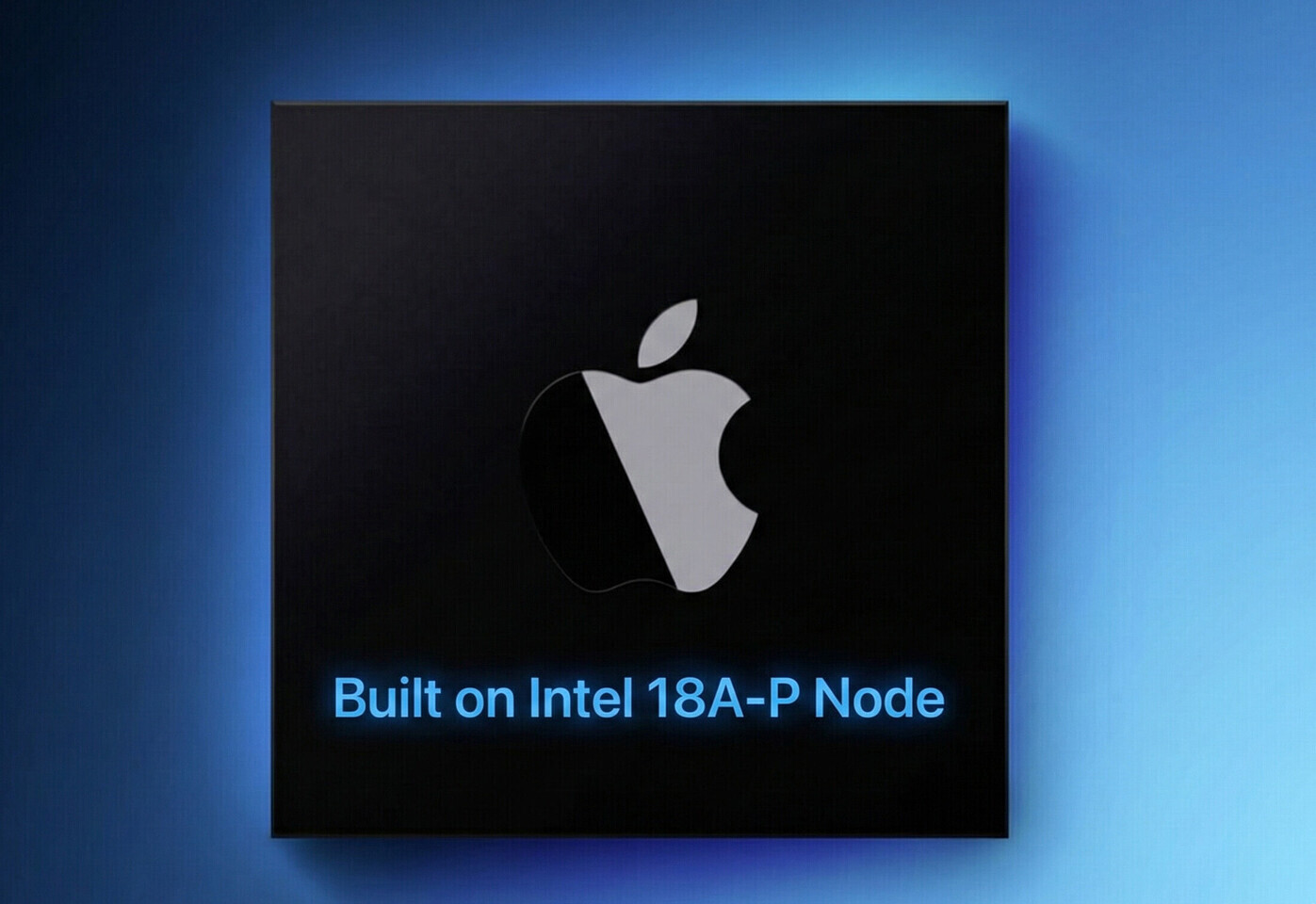

Intel’s latest move into quantum computing signals a potential inflection point in processor design. The tech giant has invested in a company working on a 10,000-qubit quantum processor, a figure roughly an order of magnitude larger than today’s leading systems.

The announcement arrives as quantum computing is beginning to move from academic experiments toward practical applications. A system with this many qubits could unlock breakthroughs in AI training, cryptography, and materials science. However, the path from lab bench to market remains uncertain, with error correction and scalability still unresolved.

Key Specifications

- Qubits: 10,000 (target)

- Performance: 100x current leading systems (estimated; benchmark not specified)

- Use Cases: AI acceleration, cryptography, scientific simulation

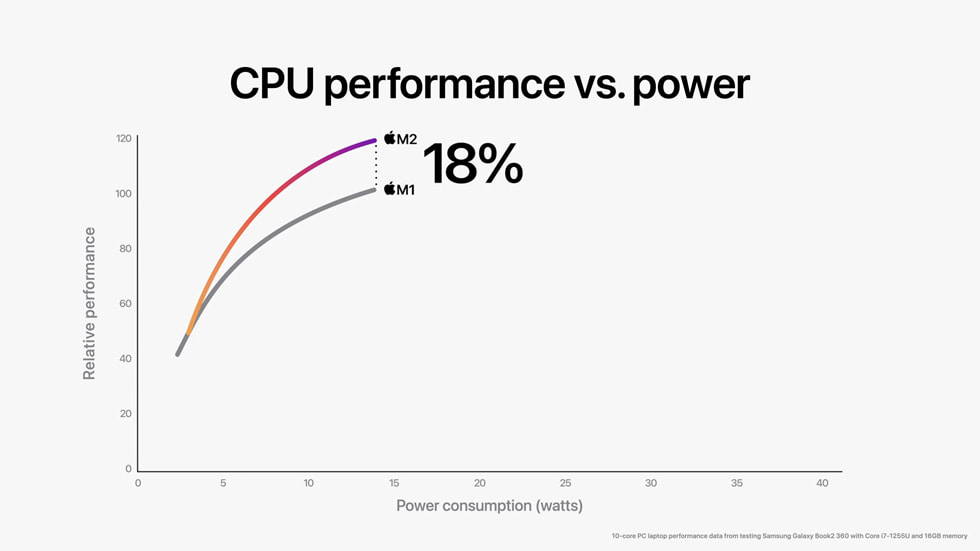

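The 10,000-qubit target suggests a focus on fault-tolerant architectures, which are critical for reliable quantum computation. Fault tolerance relies on quantum error correction, which spreads each logical qubit across many physical qubits so that errors can be detected and corrected before they accumulate. A large physical-qubit count is therefore a prerequisite for fault tolerance, not a luxury. Current leading systems, such as IBM’s 433-qubit Osprey, operate at scales where noise and decoherence limit practical use. A processor with this many stable qubits could help bridge that gap, but achieving it will require advances in hardware design, cooling, and control electronics.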
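To see why 10,000 physical qubits translate into far fewer error-corrected ones, here is a rough back-of-the-envelope sketch. It assumes a rotated surface code, where a distance-d patch uses d² data qubits plus d² − 1 measurement qubits (2d² − 1 in total). The announcement does not say which error-correction scheme the processor would use, and the function names are hypothetical. The sketch also ignores extra overhead such as routing and magic-state factories, so real budgets would be smaller still.

```python
def surface_code_physical_qubits(distance: int) -> int:
    """Physical qubits per logical qubit in one rotated surface-code patch
    (assumption): d*d data qubits plus d*d - 1 measurement qubits."""
    if distance < 3 or distance % 2 == 0:
        raise ValueError("code distance is conventionally an odd integer >= 3")
    return 2 * distance * distance - 1


def logical_qubit_budget(physical_qubits: int, distance: int) -> int:
    """Upper bound on logical qubits that fit on the chip, ignoring routing,
    magic-state distillation and other overheads."""
    return physical_qubits // surface_code_physical_qubits(distance)


if __name__ == "__main__":
    # Larger distances suppress more errors but cost more physical qubits.
    for d in (5, 11, 17, 25):
        per_logical = surface_code_physical_qubits(d)
        print(f"d={d:2d}: {per_logical:5d} physical per logical -> "
              f"{logical_qubit_budget(10_000, d)} logical qubits")
```

Under these assumptions, 10,000 physical qubits yield about 204 logical qubits at distance 5 but only 8 at distance 25. The distance actually required depends on the hardware’s physical error rate, which has not been disclosed.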

Market Implications

For gamers and high-performance computing users, the implications are further off but still significant. Quantum processors may eventually accelerate AI-driven graphics rendering or optimize complex simulations for virtual reality. However, adoption will depend on software development and industry standards, a process that could take a decade.

The investment also reflects Intel’s strategy to stay ahead in high-performance computing. While the company built its position on classical CPUs and has more recently pushed into discrete GPUs, quantum is an emerging frontier where early movers may set the standard. Whether this initiative translates into consumer products remains an open question, but it underscores the broader shift toward quantum-ready infrastructure.

For now, the focus is on research and development. The timeline for commercialization is unclear, but if the effort succeeds, it could redefine what’s possible in computational power, much as classical processors did decades ago.