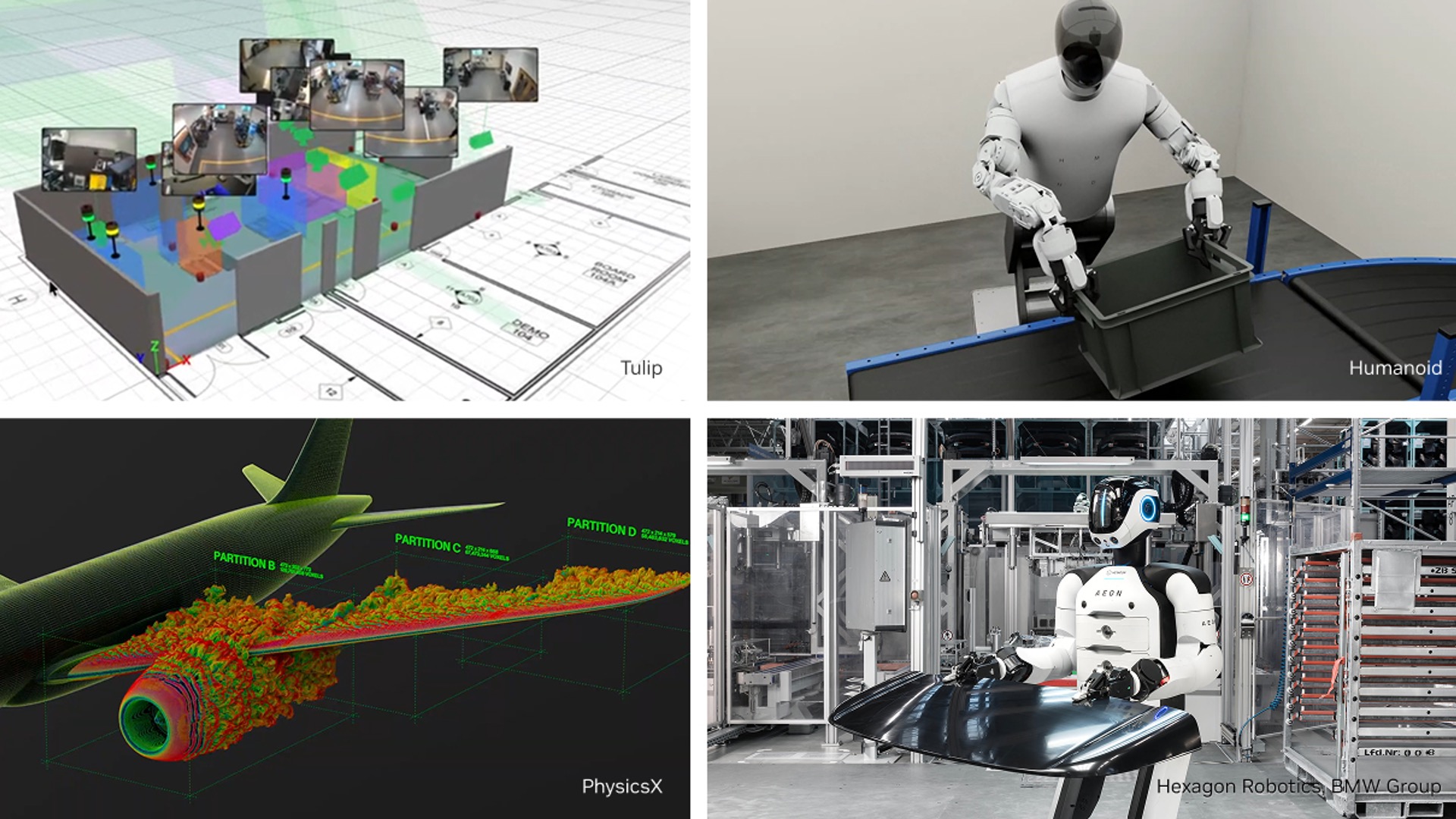

Osaka, Japan: Giga Computing, the high-performance computing arm of GIGABYTE, is launching its **XN24-VC0-LA61** server for large-scale AI and scientific computing at SCA/HPC Asia 2026. Built on NVIDIA’s GB200 NVL4 platform, the system combines liquid cooling, Arm-based Grace CPUs, and Blackwell GPUs to serve quantum-HPC hybrid workloads.

The server has been selected for RIKEN’s FugakuNEXT project, the successor to Japan’s flagship supercomputer, Fugaku. By integrating NVIDIA’s CUDA-Q platform, the XN24-VC0-LA61 will accelerate research into quantum-GPU convergence, enabling advanced simulations and large language model training.

At a glance

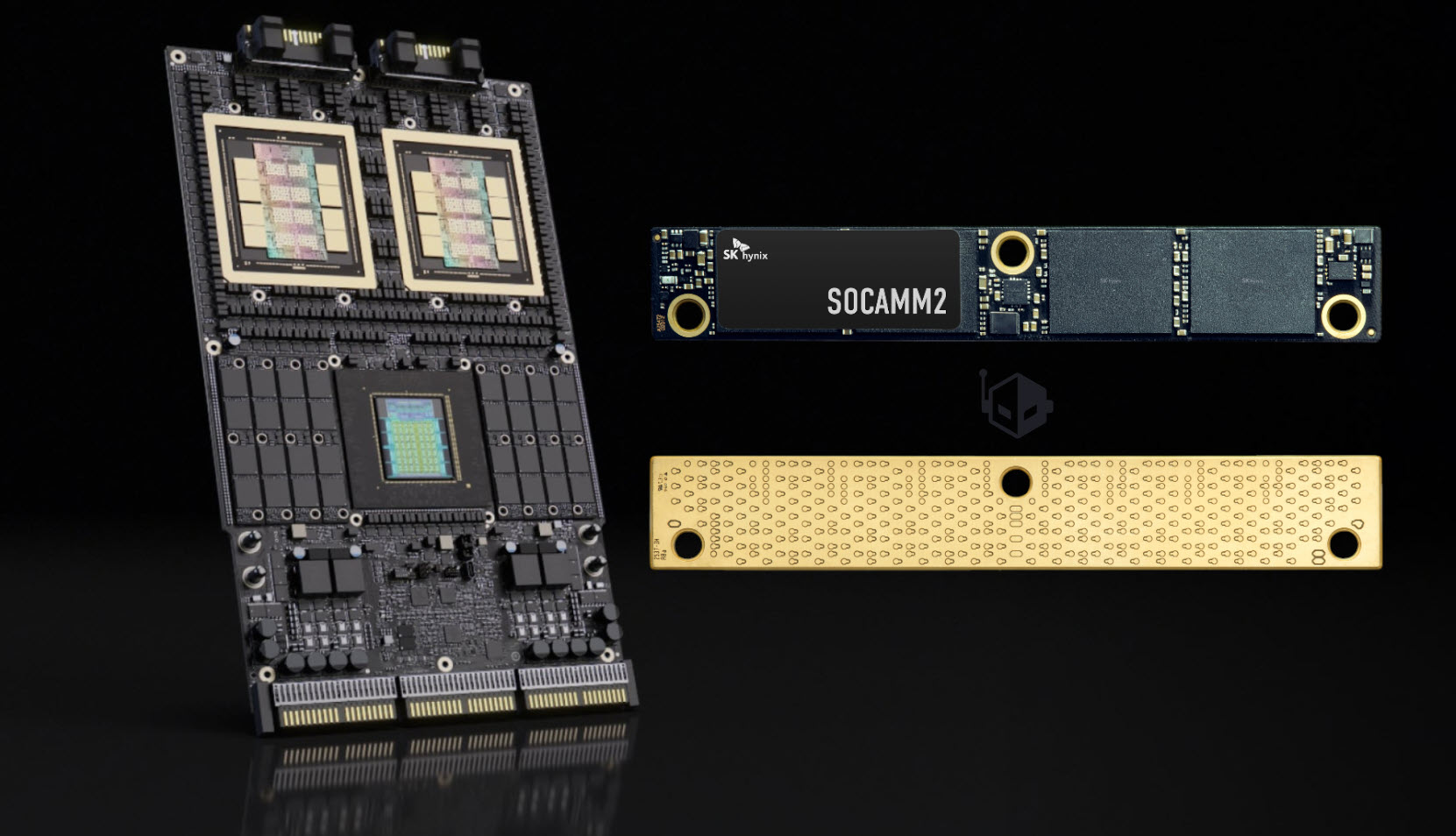

- Architecture: NVIDIA GB200 NVL4 platform with 2× Grace CPUs (Arm) + 4× Blackwell GPUs

- Memory: 480 GB LPDDR5X ECC memory per CPU; up to 186 GB HBM3E memory per GPU

- Cooling: Direct Liquid Cooling (DLC) in a 2U dual-processor design

- Networking: 800 Gb/s InfiniBand or 400 Gb/s Ethernet via NVIDIA ConnectX-8 SuperNIC

- Storage: 12× PCIe Gen 5 NVMe bays; optional BlueField DPUs for offloaded compute

- Deployment: Modular design that scales AI infrastructure without requiring a full rack

- Key project: Selected for RIKEN’s FugakuNEXT quantum-HPC hybrid platform

Why it matters

The XN24-VC0-LA61 takes a modular approach to HPC design, letting organizations deploy Blackwell-class computing without committing to rack-scale infrastructure up front. Its liquid-cooled, hybrid CPU-GPU architecture targets high-density AI training, scientific simulation, and quantum-HPC hybrid research.

Beyond FugakuNEXT, Giga Computing will showcase a full portfolio at SCA/HPC Asia, including:

- GIGAPOD: Rack-scale AI solution with 32 GPU servers, optimized for LLM workloads

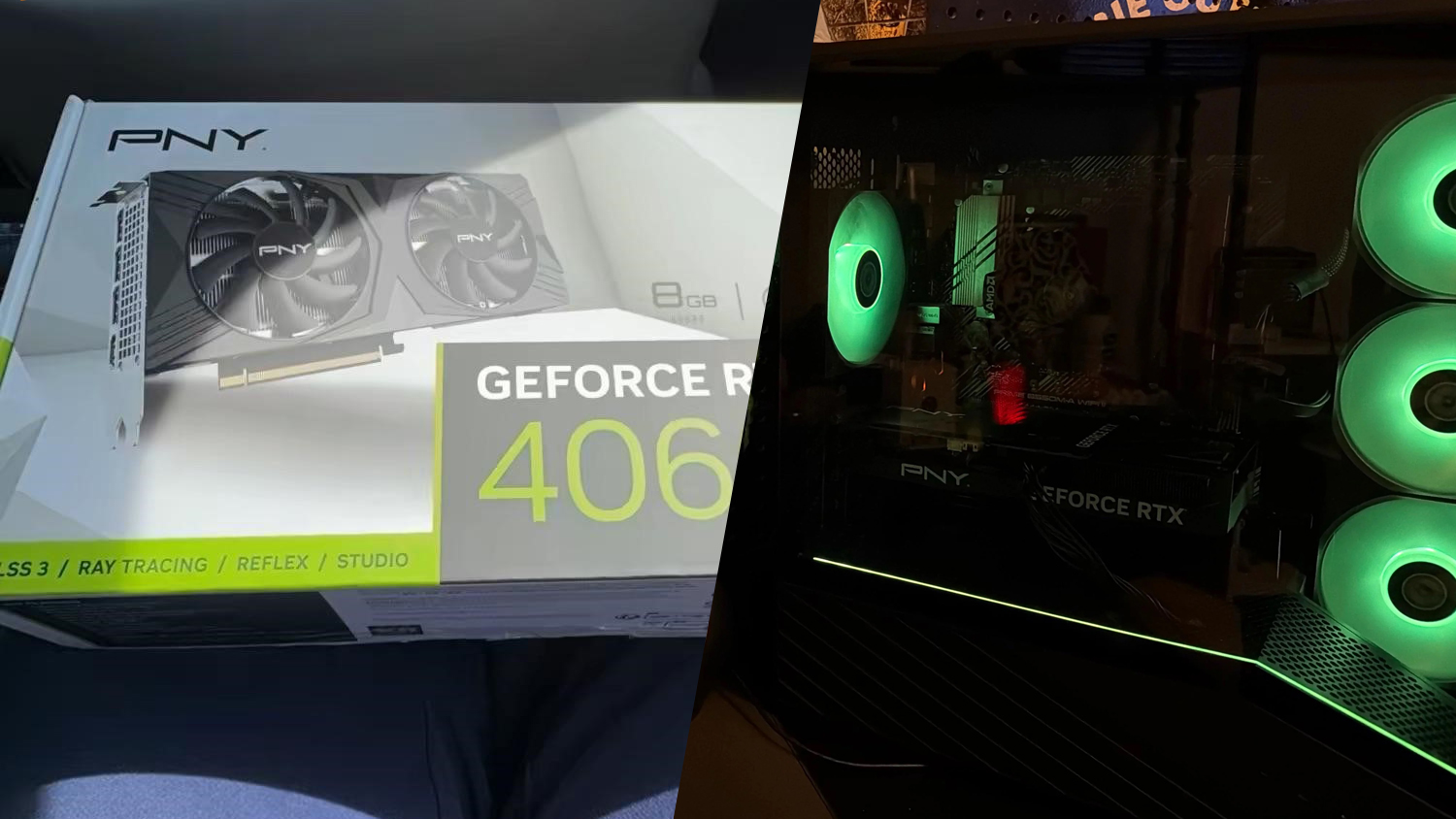

- XL44-SX2-AAS1: Air-cooled modular server supporting up to 8× RTX PRO 6000 Blackwell GPUs

- AMD EPYC Nodes: OCP ORV3-compliant compute units for hyperscale data centers

At the event, Giga Computing will host an **AI/HPC Partner Seminar** on January 28 to discuss deployment strategies and industry trends alongside ecosystem partners.