The DDR6 memory standard is moving from lab bench to production lines faster than expected. By late 2028 or early 2029, the first DDR6 modules could hit the market, promising a significant boost in bandwidth for enterprise workloads—if compatibility and cost can be tamed.

This isn’t just another memory speed bump. DDR6 is designed to address real bottlenecks in today’s high-performance servers and AI accelerators. The catch? It requires new motherboard designs, updated BIOS support, and a careful balancing act between raw performance gains and the practical limits of current infrastructure.

Why DDR6 Matters

DDR6 builds on what DDR5 brought to enterprise systems: higher data rates and more efficient power use. It also extends several features that could reshape how memory is managed in data centers.

- Higher capacity per module. Figures of up to 128 GB per DIMM have been floated, but DDR5 RDIMMs already ship at 128 GB and above, so the actual gain will depend on the final specification.

- Lower operating voltage (0.9V vs. 1.1V in DDR5) and improved power efficiency, critical for large-scale deployments where heat is a constant concern.

- On-die ECC (error-correcting code), carried over from DDR5, which corrects bit errors inside the DRAM chip itself. It complements, rather than replaces, the ECC that server memory controllers perform across the DIMM.
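
To put the voltage figures in perspective, here is a rough back-of-the-envelope sketch. It assumes dynamic power scales with the square of the supply voltage and that capacitance and switching frequency stay the same, which will not hold for real DDR6 parts running at higher data rates. The function name is hypothetical, and the voltages are simply the ones quoted in the list above.

```python
# Rough estimate only: dynamic power in CMOS logic scales with C * V^2 * f.
# Assumption: capacitance (C) and switching frequency (f) are held equal,
# which will not be true of real DDR6 parts running at higher data rates.

def relative_dynamic_power(v_new: float, v_old: float) -> float:
    """Return dynamic power at v_new as a fraction of the power at v_old."""
    if v_new <= 0 or v_old <= 0:
        raise ValueError("voltages must be positive")
    return (v_new / v_old) ** 2

DDR5_VOLTAGE = 1.1  # volts, as quoted in the article
DDR6_VOLTAGE = 0.9  # volts, as quoted in the article (not a confirmed spec)

ratio = relative_dynamic_power(DDR6_VOLTAGE, DDR5_VOLTAGE)
print(f"Relative dynamic power: {ratio:.2f}")
print(f"Estimated saving at equal C and f: {(1 - ratio) * 100:.0f}%")
```

Under those assumptions the voltage drop alone would cut dynamic power by roughly a third. Actual module-level savings would depend on data rate, refresh behavior, and on-module power management.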

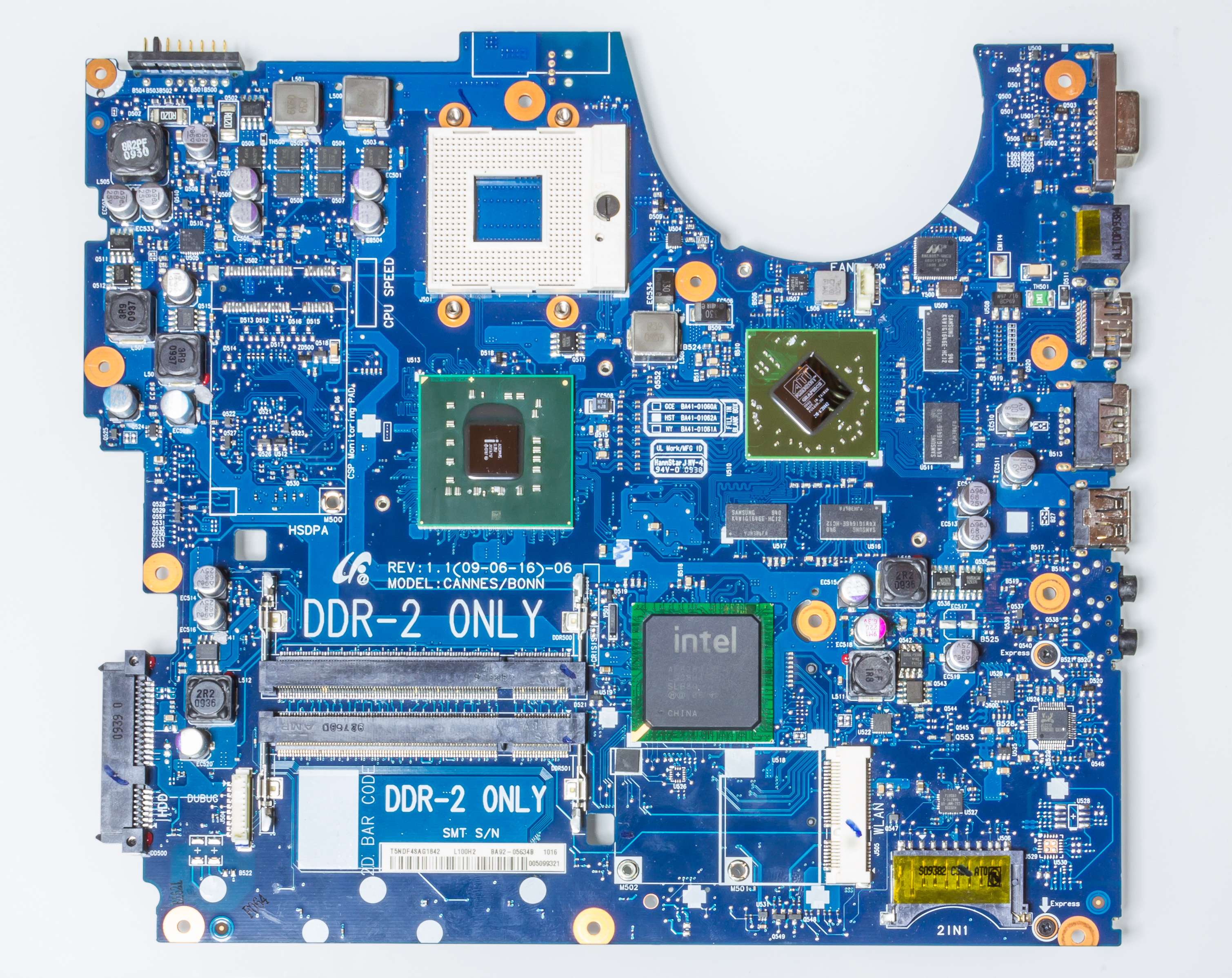

That is the upside. The downside is that DDR6 is not a drop-in replacement: it demands new socket designs, updated chipset support, and careful planning from OEMs to avoid stranded assets. Early adopters will need to weigh whether the performance gain justifies the risk of platform lock-in.

Who’s Leading the Charge?

Samsung is widely expected to be first to market with production-ready DDR6 modules, leveraging its decades-long lead in memory technology. SK Hynix and Micron are close behind, each bringing their own optimizations to the table—whether it’s lower latency profiles or specialized packaging for high-density modules.

But timing remains fluid. Industry reports suggest commercial availability could stretch from late 2028 into early 2029, depending on yield improvements and adoption by server manufacturers. For enterprises already planning next-gen infrastructure, this window is both an opportunity and a potential stumbling block.

The Enterprise Tradeoff

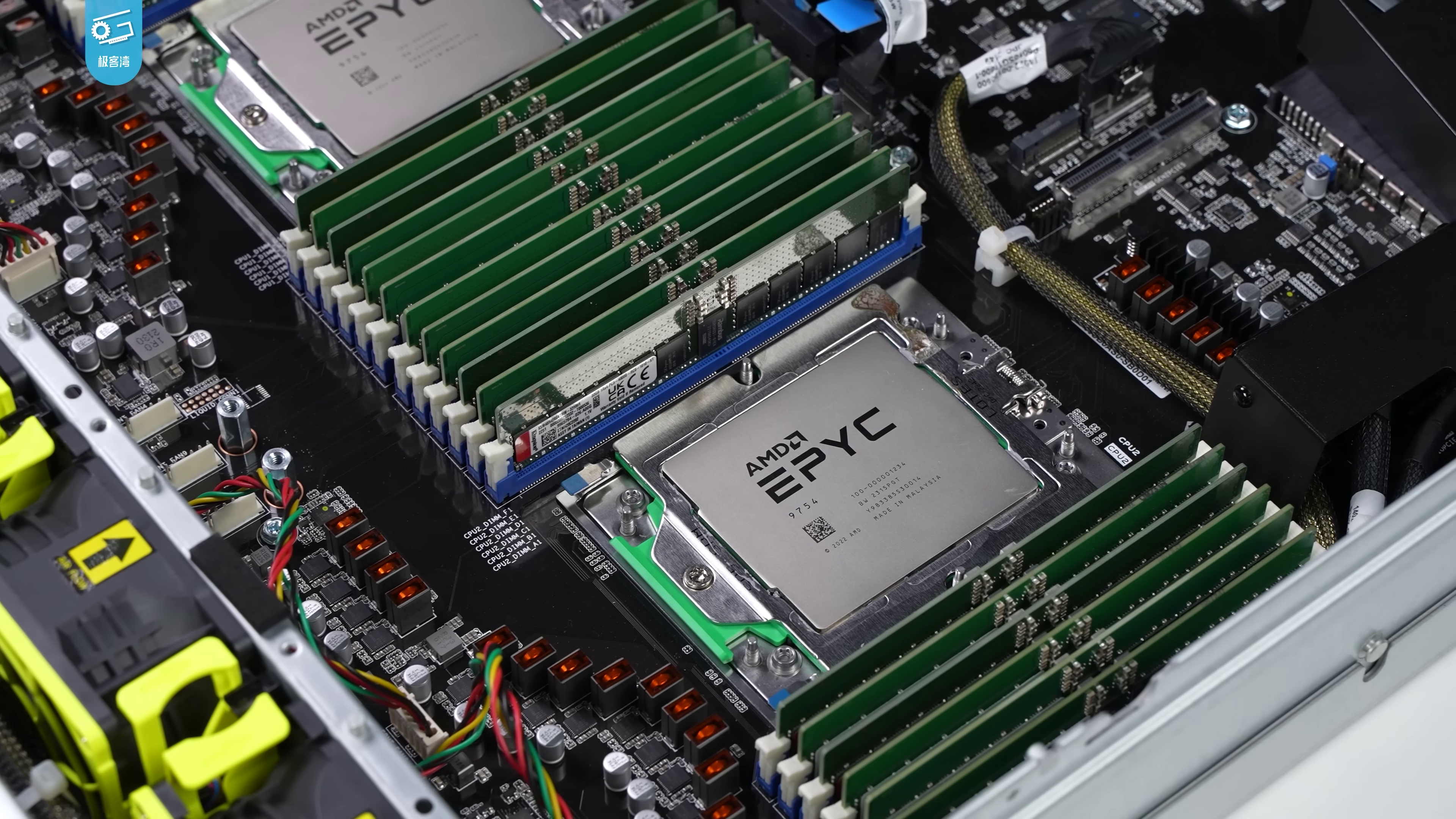

For data center operators, DDR6 offers a path to higher throughput, especially for AI training workloads, where memory bandwidth is often a primary constraint. But the real decision isn’t just about speed; it’s about ecosystem readiness.

Will your existing server fleet support DDR6 without costly upgrades? Can you afford to wait for the next generation of CPUs that are optimized for its performance? And how will vendor lock-in play out if only a few manufacturers back this standard early?

The answers aren’t simple, but one thing is clear: DDR6 isn’t just another memory upgrade. It’s a pivot point for enterprise infrastructure—one that demands careful navigation between cutting-edge performance and the realities of today’s data center environments.