The memory crunch in the AI sector isn’t just about shortages; it’s reshaping how companies build and run their infrastructure. With hyperscalers and GPU manufacturers scrambling for DRAM, suppliers like SK hynix are caught between soaring demand and the long lead times of expanding production. The result is a market where customers are already optimizing their setups to work with less memory, even as supply struggles to keep up.

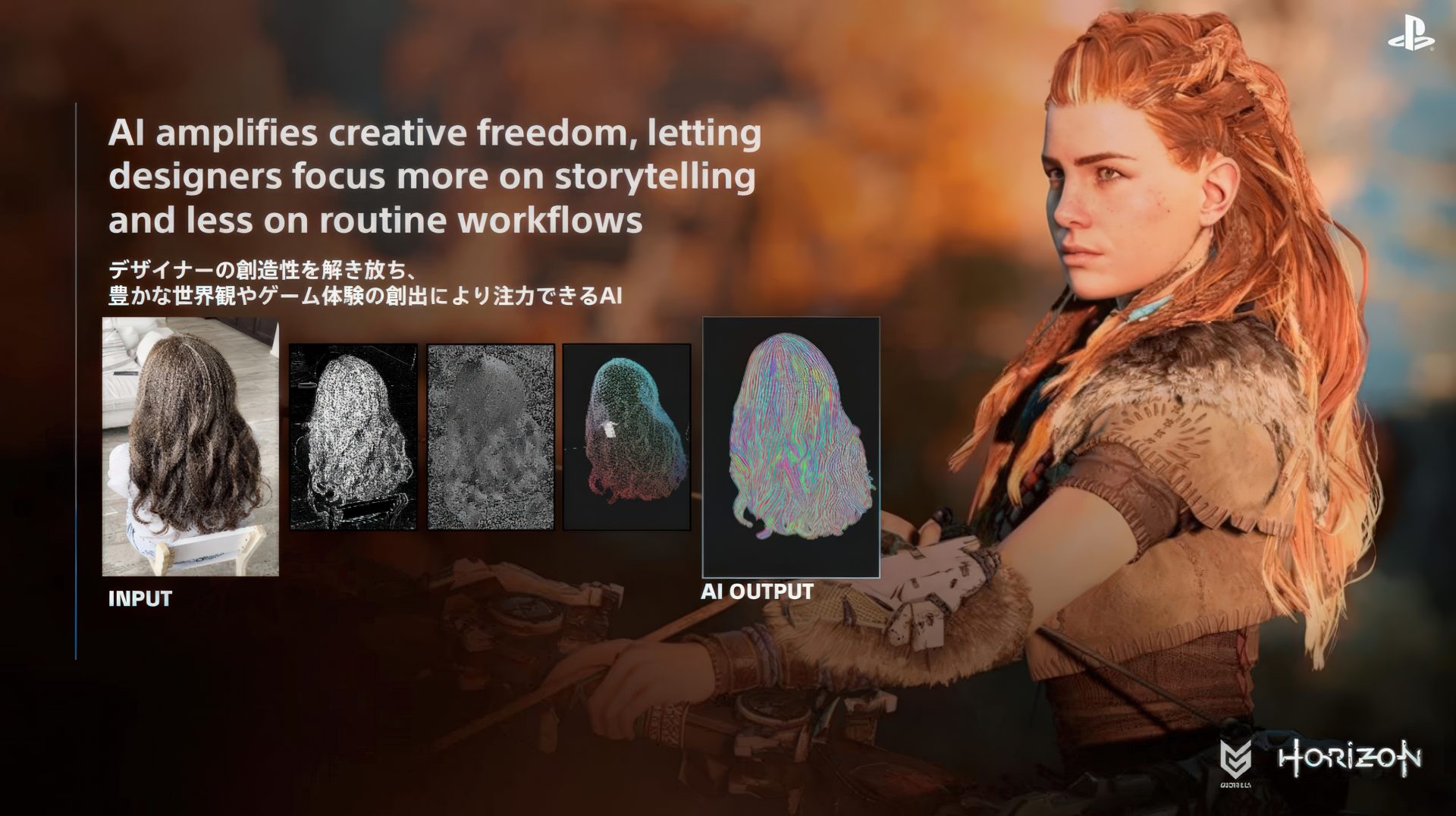

This tension is forcing a fundamental shift: AI systems that once relied on massive amounts of RAM to handle large models may soon operate more efficiently, using techniques like lower-precision data formats. Yet, history suggests that any gains in efficiency will quickly be swallowed by new demands—more tokens processed, more complex models, more users. The question now is whether suppliers can expand capacity fast enough to avoid a prolonged period of constrained supply.

Key specs and constraints

- Memory demand: AI data centers require hundreds of gigabytes of system memory per model, with training runs pushing toward tens of trillions of parameters. Hyperscalers are securing DRAM months in advance, but supply remains tight.

- Supply chain lag: New semiconductor fabs take years to build. SK hynix has ordered 20 low-NA EUV machines from ASML for future expansion, but current capacity additions won’t resolve the shortage until well into 2028.

- Optimization tradeoffs: Lower-precision formats and software tweaks reduce memory needs per model, but efficiency gains tend to be absorbed by heavier overall workloads as systems adapt to the new headroom.
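
To make the precision tradeoff concrete, here is a rough back-of-the-envelope sketch in Python. It counts only the memory needed to hold model weights and ignores activations, caches, and other overhead. The 70-billion-parameter model, the replica counts, and the helper names are hypothetical, chosen purely for illustration and not taken from any supplier or customer figures.

```python
# Bytes needed to store one parameter in each numeric format (weights only).
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "fp8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, fmt: str) -> float:
    """Memory to hold the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[fmt] / 1e9


def fleet_memory_gb(num_params: float, fmt: str, replicas: int) -> float:
    """Total weight memory when the same model is deployed `replicas` times."""
    return weight_memory_gb(num_params, fmt) * replicas


# Hypothetical model size, for illustration only.
PARAMS = 70e9

for fmt in BYTES_PER_PARAM:
    print(f"{fmt}: {weight_memory_gb(PARAMS, fmt):.0f} GB")
# fp32: 280 GB
# fp16: 140 GB
# fp8: 70 GB
# int4: 35 GB

# Rebound effect: halving precision while doubling deployments
# leaves total memory demand unchanged.
before = fleet_memory_gb(PARAMS, "fp16", replicas=100)
after = fleet_memory_gb(PARAMS, "fp8", replicas=200)
print(before == after)  # True
```

Under these assumptions, each halving of precision halves the weight footprint, but the saving disappears as soon as the number of deployed copies doubles, which is the pattern the last bullet describes.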

The balance between hardware constraints and software innovation will determine whether the industry can sustain AI growth without prolonged disruptions. For now, the pressure is on both sides: suppliers must expand capacity while customers learn to do more with less—at least for a while.