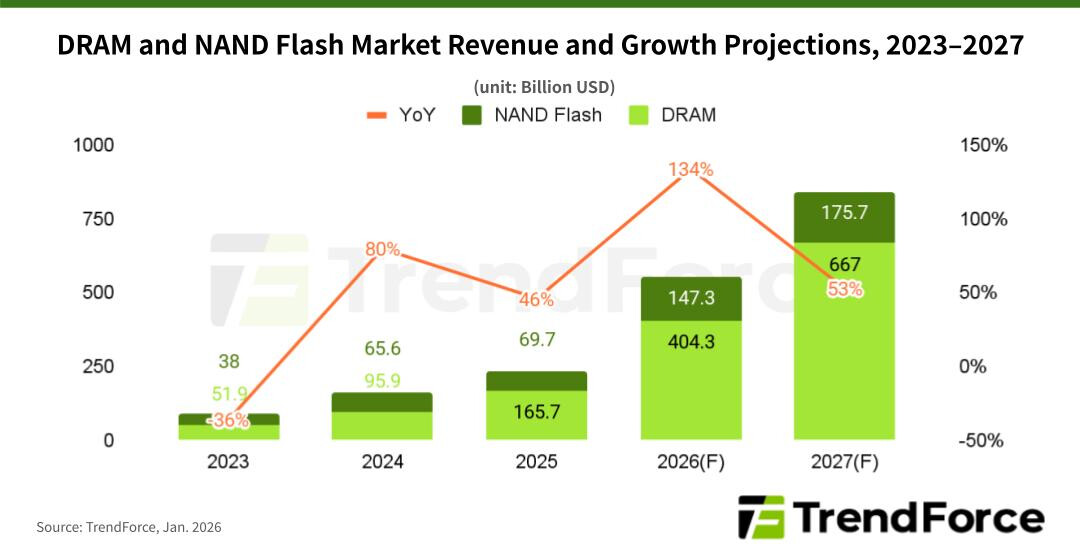

The memory market is undergoing a seismic shift, one that could redefine how tech companies budget for hardware. AI isn’t just driving demand—it’s creating a structural imbalance between supply and need, with DRAM and NAND Flash prices climbing at rates unseen in decades. By 2027, the global memory market is projected to hit $842.7 billion, a 53% year-over-year leap, as data-hungry AI systems demand more bandwidth, capacity, and speed than ever before.

At the heart of the disruption is the evolution of AI architecture. Early models focused on training on massive datasets, but today’s AI systems, particularly those using retrieval-augmented generation (RAG), require rapid, random access to vast vector databases. This has turned memory into a bottleneck, with DRAM prices surging 53-58% in late 2025 alone. Even with prices already elevated, demand from cloud service providers (CSPs) remains unshaken, pushing first-quarter 2026 increases toward 60%, with some categories nearing double their 2024 levels.

DRAM, the backbone of high-performance computing, is the most affected. Revenue for the segment is expected to reach $404.3 billion in 2026, up a staggering 144% year-over-year. NAND Flash, though growing at a slower pace, is also under unprecedented pressure. Enterprise SSDs, critical for AI inference tasks, are seeing quarterly price hikes of 55-60%, with 2026 NAND Flash revenue projected to jump 112% year-over-year to $147.3 billion.

Why is this happening? Traditional memory markets—like consumer electronics—have struggled to keep up. Geopolitical tensions and supply chain constraints in early 2025 initially dampened growth, but as AI adoption accelerated, CSPs ramped up capital expenditures, creating a sudden surge in procurement. The result? A market where suppliers hold all the leverage.

NVIDIA’s recent announcements at CES 2026 underscore the magnitude of the change. The company highlighted how AI is reshaping the entire computing stack, with generative AI systems now requiring frequent, high-speed access to data. This isn’t just about training models anymore—it’s about real-time decision-making, where latency and bandwidth matter as much as raw capacity.

For businesses, the implications are clear: memory costs are no longer a line item but a strategic consideration. The days of treating DRAM and NAND as interchangeable components are over. High-performance computing, AI servers, and enterprise storage are all competing for the same constrained supply, driving prices higher and forcing companies to rethink their infrastructure investments.

The outlook remains bullish. With no signs of supply easing, memory prices are expected to stay elevated through 2027, reinforcing memory’s role as the silent enabler of AI’s next wave. The question isn’t whether prices will drop—it’s how long the market can sustain this level of growth before new production capacity comes online.

Key Specs & Market Projections

- DRAM Revenue (2026): $404.3 billion (144% YoY growth)

- NAND Flash Revenue (2026): $147.3 billion (112% YoY growth)

- Global Memory Market (2026): $551.6 billion

- Global Memory Market (2027): $842.7 billion (53% YoY growth)

- DRAM Price Surge (Q4 2025): 53-58% (DDR5 demand-driven)

- NAND Flash Price Surge (Q1 2026): 55-60% (enterprise SSD demand)

- AI-Driven Memory Demand: DDR5, DDR6, high-IOPS enterprise SSDs
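
The revenue figures in this list can be cross-checked with simple arithmetic. The sketch below assumes the $404.3 billion DRAM figure refers to the same year as the NAND Flash figure (2026), since the two add up to the stated 2026 market total. The implied 2025 values are derived here from the quoted growth rates and do not appear in the source; the helper names are illustrative only.

```python
# Figures quoted in the list above, in billions of US dollars.
dram_2026 = 404.3
nand_2026 = 147.3
total_2026 = 551.6
total_2027 = 842.7


def prior_year(revenue, growth_pct):
    """Back out the previous year's revenue from a year-over-year growth rate."""
    return revenue / (1 + growth_pct / 100)


def yoy_growth_pct(current, previous):
    """Year-over-year growth, in percent."""
    return (current / previous - 1) * 100


# Do the two segments add up to the stated 2026 total?
segment_sum = dram_2026 + nand_2026

# Derived values (NOT from the source): prior-year revenue implied by the growth rates.
implied_dram_2025 = prior_year(dram_2026, 144)
implied_nand_2025 = prior_year(nand_2026, 112)

# Growth implied by the 2026 and 2027 market totals.
growth_2027 = yoy_growth_pct(total_2027, total_2026)

print(f"DRAM + NAND (2026): {segment_sum:.1f}B vs stated total {total_2026:.1f}B")
print(f"Implied DRAM revenue (2025): {implied_dram_2025:.1f}B")
print(f"Implied NAND revenue (2025): {implied_nand_2025:.1f}B")
print(f"Implied market growth (2027): {growth_2027:.1f}%")
```

The two segment figures sum to the stated 2026 total, and the growth implied by the 2026 and 2027 totals rounds to the quoted 53%.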

The surge in memory prices reflects a broader trend: AI isn’t just consuming more resources, it’s redefining what those resources look like. For enterprises, this means tighter budgets for AI infrastructure, while for consumers, it could translate to higher costs for high-end GPUs like the GeForce RTX 50-series, which may see prices escalate further due to memory constraints. With DDR6 memory expected to arrive in 2027 at speeds of 8,800-17,600 MT/s, the memory market is entering a phase where only the most efficient architectures will thrive.
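
To put the quoted DDR6 transfer rates in perspective, a transfer rate in MT/s can be converted to theoretical peak bandwidth by multiplying by the bus width in bytes. The sketch below assumes a 64-bit data bus purely for illustration; the actual DDR6 channel layout is not stated in the source and may differ, and the function name is hypothetical.

```python
def peak_bandwidth_gbs(transfer_rate_mts, bus_width_bits=64):
    """Theoretical peak bandwidth in GB/s for a transfer rate (MT/s) and bus width.

    bus_width_bits=64 is an illustrative assumption, not a DDR6 specification.
    """
    bytes_per_transfer = bus_width_bits / 8
    # MT/s * bytes per transfer = MB/s; divide by 1000 for GB/s.
    return transfer_rate_mts * bytes_per_transfer / 1000


# The two ends of the speed range quoted above.
for rate in (8800, 17600):
    print(f"{rate} MT/s -> {peak_bandwidth_gbs(rate):.1f} GB/s (assumed 64-bit bus)")
```

These are upper bounds under the stated assumption; sustained bandwidth in real systems is lower.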

One thing is certain: the memory market’s transformation is far from over. As AI continues to evolve, so too will the hardware that powers it—and those who can adapt will be the ones to benefit.