The modern customer has just one need that matters: getting the thing they want when they want it. The old standard RAG model (embed + retrieve + LLM) misunderstands intent, overloads context and misses freshness, repeatedly sending customers down the wrong paths.

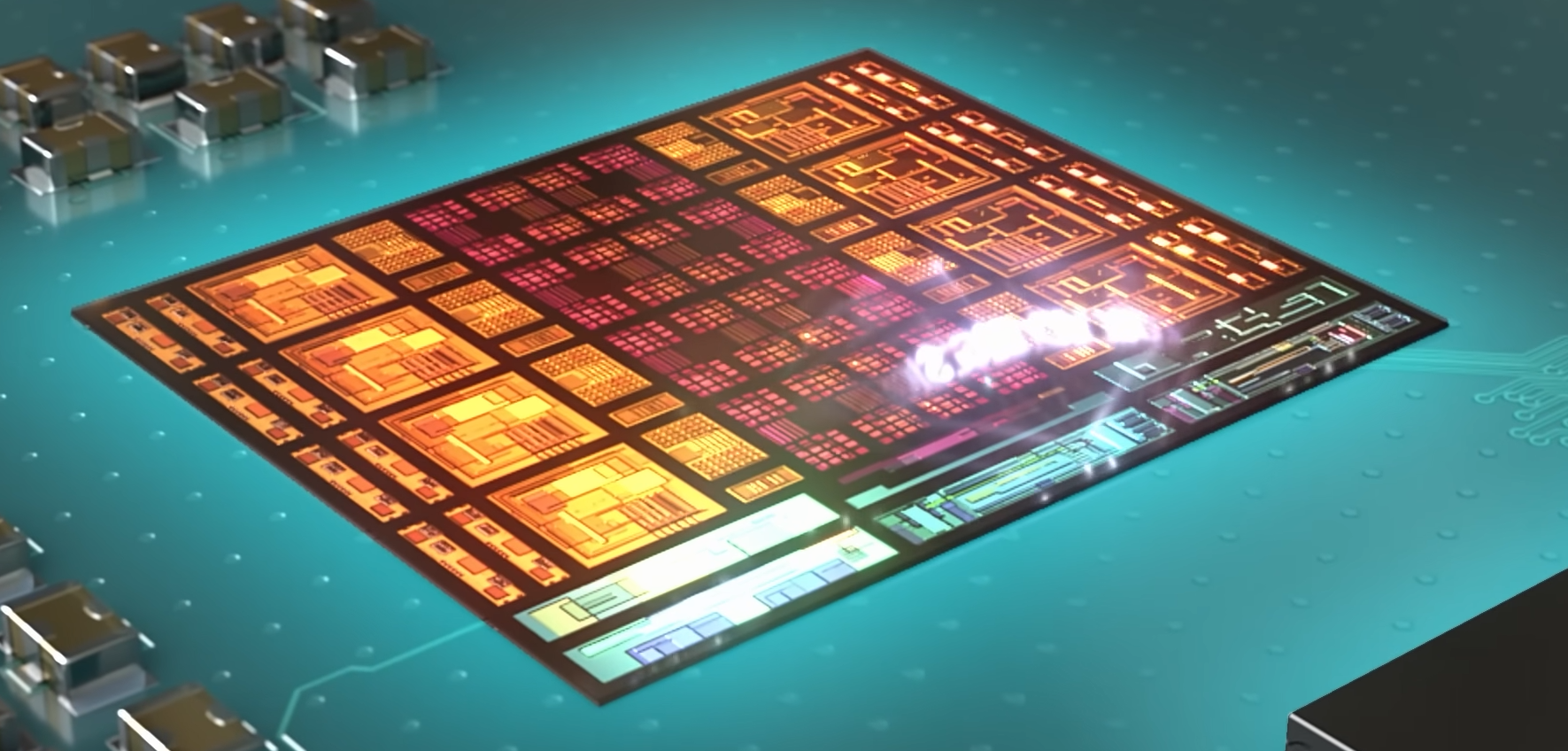

Instead, intent-first architecture uses a lightweight language model to parse the query for intent and context, before delivering to the most relevant content sources (documents, APIs, people).

Enterprise AI is a speeding train headed for a cliff. Organizations are deploying LLM-powered search applications at a record pace, while a fundamental architectural issue is setting most up for failure.

A recent Coveo study revealed that

The problem isn’t the underlying models. It’s the architecture around them.

After designing and running live AI-driven customer interaction platforms at scale, serving millions of customer and citizen users at some of the world’s largest telecommunications and healthcare organizations, I’ve come to see a pattern. It’s the difference between successful

It’s a cloud-native architecture pattern that I call Intent-First. And it’s reshaping the way enterprises build AI-powered experiences.

The $36 billion problem

Gartner projects the global conversational AI market will balloon to

Then production happens.

A major telecommunications provider I work with rolled out a RAG system with the expectation of driving down the support call rate. Instead, the rate increased. Customers tried the AI-powered search, received incorrect answers delivered with a high degree of confidence, and then called customer support angrier than before.

This pattern is repeated over and over. In healthcare, customer-facing AI assistants are providing patients with formulary information that’s outdated by weeks or months. Financial services chatbots are spitting out answers from both retail and institutional product content. Retailers are seeing discontinued products surface in product searches.

The issue isn’t a failure of AI technology. It’s a failure of architecture.

Why standard RAG architectures fail

The standard RAG pattern — embedding the query, retrieving semantically similar content

1. The intent gap

Intent is not context. But standard RAG architectures don’t account for this.

Say a customer types “I want to cancel.” What does that mean? Cancel a service? Cancel an order? Cancel an appointment? During our telecommunications deployment, we found that 65% of queries for “cancel” were actually about orders or appointments, not service cancellation. The RAG system had no way of understanding this intent, so it consistently returned service cancellation documents.

Intent matters. In healthcare, a patient who types “I need to cancel” may be trying to cancel an appointment, a prescription refill or a procedure. Routing a scheduling request to medication content is not only frustrating; it's also dangerous.

2. Context flood

Enterprise knowledge is vast, spanning dozens of sources such as product catalogs, billing, support articles, policies, promotions and account data. Standard RAG models treat all of it the same, searching every source for every query.

When a customer asks “How do I activate my new phone,” they don’t care about billing FAQs, store locations or network status updates. But a standard RAG model retrieves semantically similar content from every source, returning search results that are a half-step off the mark.

3. Freshness blind spot

Vector space is time-blind. Semantically, last quarter’s promotion is identical to this quarter’s. But presenting customers with outdated offers shatters trust. We linked a significant percentage of customer complaints to search results that surfaced expired products, offers or features.

The Intent-First architecture pattern

The Intent-First architecture pattern is the mirror image of the standard RAG deployment. In the RAG model, you retrieve, then route. In the Intent-First model, you classify before you route or retrieve.

Intent-First architectures use a lightweight language model to parse a query for intent and context, before dispatching to the most relevant content sources (documents, APIs, agents).

Comparison: Intent-first vs standard RAG

Cloud-native implementation

The Intent-First pattern is designed for cloud-native deployment, leveraging microservices, containerization and elastic scaling to handle enterprise traffic patterns.

Intent classification service

The classifier determines user intent before any retrieval occurs:

ALGORITHM: Intent Classification

INPUT: user_query (string)

OUTPUT: intent_result (object)

1. PREPROCESS query (normalize, expand contractions)

2. CLASSIFY using transformer model

- primary_intent ← model.predict(query)

- confidence ← model.confidence_score()

3. IF confidence < 0.70 THEN

- requires_clarification ← true

- suggested_question ← generate_clarifying_question(query)

4. EXTRACT sub_intent based on primary_intent

- IF primary = "ACCOUNT" → check for ORDER_STATUS, PROFILE, etc.

- IF primary = "SUPPORT" → check for DEVICE_ISSUE, NETWORK, etc.

- IF primary = "BILLING" → check for PAYMENT, DISPUTE, etc.

5. DETERMINE target_sources based on intent mapping

- ORDER_STATUS → [orders_db, order_faq]

- DEVICE_ISSUE → [troubleshooting_kb, device_guides]

- MEDICATION → [formulary, clinical_docs] (healthcare)

6. SET requires_personalization (true/false) based on intent
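The classification steps above can be sketched in Python. This is a minimal illustration, not the production classifier: `toy_predict` and its keyword tables are hypothetical stand-ins for the transformer model, and the contraction list, the clarifying question wording and the `PERSONALIZED_SUB_INTENTS` set are assumptions. Only the 0.70 threshold, the intent names and the source mapping come from the pseudocode.

```python
import re
from dataclasses import dataclass, field
from typing import Optional

CONFIDENCE_THRESHOLD = 0.70  # from step 3 of the pseudocode

# Hypothetical keyword tables standing in for a trained transformer model.
PRIMARY_KEYWORDS = {
    "ACCOUNT": {"order", "profile", "account"},
    "SUPPORT": {"phone", "device", "network", "activate"},
    "BILLING": {"bill", "payment", "charge", "dispute"},
}
SUB_INTENT_KEYWORDS = {
    "ACCOUNT": {"ORDER_STATUS": {"order"}, "PROFILE": {"profile"}},
    "SUPPORT": {"DEVICE_ISSUE": {"phone", "device", "activate"},
                "NETWORK": {"network"}},
    "BILLING": {"PAYMENT": {"bill", "payment"}, "DISPUTE": {"charge", "dispute"}},
}
TARGET_SOURCES = {  # intent mapping from step 5
    "ORDER_STATUS": ["orders_db", "order_faq"],
    "DEVICE_ISSUE": ["troubleshooting_kb", "device_guides"],
}
PERSONALIZED_SUB_INTENTS = {"ORDER_STATUS"}  # assumption for illustration
CONTRACTIONS = {"what's": "what is", "where's": "where is", "can't": "cannot"}


@dataclass
class IntentResult:
    primary_intent: Optional[str] = None
    sub_intent: Optional[str] = None
    confidence: float = 0.0
    target_sources: list = field(default_factory=list)
    requires_personalization: bool = False
    requires_clarification: bool = False
    suggested_question: Optional[str] = None


def preprocess(query: str) -> list:
    """Step 1: normalize case, expand contractions, split into tokens."""
    text = query.lower().replace("\u2019", "'")
    for short, full in CONTRACTIONS.items():
        text = text.replace(short, full)
    return re.findall(r"[a-z0-9]+", text)


def toy_predict(tokens: list):
    """Step 2 stand-in: share of keyword hits won by the best intent."""
    hits = {intent: sum(t in kws for t in tokens)
            for intent, kws in PRIMARY_KEYWORDS.items()}
    total = sum(hits.values())
    if total == 0:
        return None, 0.0
    best = max(hits, key=hits.get)
    return best, hits[best] / total


def classify_intent(query: str) -> IntentResult:
    tokens = preprocess(query)
    primary, confidence = toy_predict(tokens)
    # Step 3: low confidence means ask, not guess.
    if primary is None or confidence < CONFIDENCE_THRESHOLD:
        return IntentResult(
            primary_intent=primary,
            confidence=confidence,
            requires_clarification=True,
            suggested_question="Could you tell me a bit more about what you need?",
        )
    # Step 4: first sub-intent whose keywords appear in the query.
    sub_intent = next(
        (sub for sub, kws in SUB_INTENT_KEYWORDS[primary].items()
         if kws & set(tokens)),
        None,
    )
    # Steps 5 and 6: map to sources and flag personalization.
    return IntentResult(
        primary_intent=primary,
        sub_intent=sub_intent,
        confidence=confidence,
        target_sources=list(TARGET_SOURCES.get(sub_intent, [])),
        requires_personalization=sub_intent in PERSONALIZED_SUB_INTENTS,
    )
```

With these toy tables, `classify_intent("Where's my order?")` resolves to `ORDER_STATUS` and targets only the order sources, while the ambiguous `classify_intent("I want to cancel")` comes back with `requires_clarification` set instead of a guessed intent.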

Context-aware retrieval service

Once intent is classified, retrieval becomes targeted:

ALGORITHM: Context-Aware Retrieval

INPUT: query, intent_result, user_context

OUTPUT: ranked_documents

1. GET source_config for intent_result.sub_intent

- primary_sources ← sources to search

- excluded_sources ← sources to skip

- freshness_days ← max content age

2. IF intent requires personalization AND user is authenticated

- FETCH account_context from Account Service

- IF intent = ORDER_STATUS

- FETCH recent_orders (last 60 days)

- ADD to results

3. BUILD search filters

- content_types ← primary_sources only

- max_age ← freshness_days

- user_context ← account_context (if available)

4. FOR EACH source IN primary_sources

- documents ← vector_search(query, source, filters)

- ADD documents to results

5. SCORE each document

- relevance_score ← vector_similarity × 0.40

- recency_score ← freshness_weight × 0.20

- personalization_score ← user_match × 0.25

- intent_match_score ← type_match × 0.15

- total_score ← SUM of above

6. RANK by total_score descending

7. RETURN top 10 documents
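Steps 3 through 7 of the retrieval algorithm can be sketched as follows. The weights (0.40, 0.20, 0.25, 0.15) and the top-10 cutoff are taken from the pseudocode; the `Candidate` fields, the linear decay inside `freshness_weight` and the assumption that every input score lies between 0 and 1 are illustrative choices. The vector search itself is assumed to have already produced the candidates.

```python
from dataclasses import dataclass
from datetime import date

# Scoring weights from step 5 of the pseudocode (they sum to 1.0).
WEIGHTS = {
    "relevance": 0.40,
    "recency": 0.20,
    "personalization": 0.25,
    "intent_match": 0.15,
}


@dataclass
class Candidate:
    """A hit returned by vector search (hypothetical shape)."""
    doc_id: str
    source: str
    vector_similarity: float  # assumed to be in [0, 1]
    published: date
    user_match: float         # assumed to be in [0, 1]
    type_match: float         # assumed to be in [0, 1]


def freshness_weight(published: date, today: date, freshness_days: int) -> float:
    """Linear decay from 1.0 (published today) to 0.0 (at the age limit)."""
    age_days = (today - published).days
    return min(1.0, max(0.0, 1.0 - age_days / freshness_days))


def rank_documents(candidates, primary_sources, freshness_days, today, top_k=10):
    scored = []
    for c in candidates:
        # Step 3 filters: only intent-approved sources, nothing past max age.
        if c.source not in primary_sources:
            continue
        if (today - c.published).days > freshness_days:
            continue
        # Step 5: weighted sum of the four signals.
        total = (
            c.vector_similarity * WEIGHTS["relevance"]
            + freshness_weight(c.published, today, freshness_days) * WEIGHTS["recency"]
            + c.user_match * WEIGHTS["personalization"]
            + c.type_match * WEIGHTS["intent_match"]
        )
        scored.append((total, c))
    # Steps 6 and 7: rank descending, keep the top results.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]
```

Note that the filters run before scoring, so an expired promotion with very high vector similarity never reaches the ranking stage at all.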

Healthcare-specific considerations

In healthcare deployments, the Intent-First pattern includes additional safeguards:

Healthcare intent categories

Clinical: Medication questions, symptoms, care instructions

Coverage: Benefits, prior authorization, formulary

Scheduling: Appointments, provider availability

Billing: Claims, payments, statements

Account: Profile, dependents, ID cards

Critical safeguard: Clinical queries always include disclaimers and never replace professional medical advice. The system routes complex clinical questions to human support.
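One way to picture the safeguard is as a post-processing step on the generated answer. The sketch below is an assumption-laden illustration: the disclaimer wording, the `CLINICAL` label and the `is_complex` flag are hypothetical, and how a question gets judged "complex" is not specified here, so it is passed in by the caller.

```python
# Hypothetical disclaimer wording, for illustration only.
CLINICAL_DISCLAIMER = (
    "This information is not a substitute for professional medical advice."
)


def apply_clinical_safeguard(category: str, answer: str, is_complex: bool) -> dict:
    """Attach a disclaimer to clinical answers; hand complex ones to a human."""
    if category != "CLINICAL":
        # Non-clinical categories (coverage, scheduling, ...) pass through.
        return {"route": "answer", "text": answer}
    if is_complex:
        # Complex clinical questions are never answered automatically.
        return {"route": "human_support", "text": None}
    return {"route": "answer", "text": f"{answer}\n\n{CLINICAL_DISCLAIMER}"}
```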

Handling edge cases

The edge cases are where systems fail. The Intent-First pattern includes specific handlers:

Frustration detection keywords

Anger: "terrible," "worst," "hate," "ridiculous"

Time: "hours," "days," "still waiting"

Failure: "useless," "no help," "doesn't work"

Escalation: "speak to human," "real person," "manager"

When frustration is detected, skip search entirely and route to human support.
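A minimal sketch of this handler, using the keyword lists above. Whole-word regular expression matching and the route labels `human_support` and `search` are assumptions made for illustration; a real deployment would likely combine keywords with other signals.

```python
import re

# Keyword lists taken from the categories above.
FRUSTRATION_KEYWORDS = {
    "ANGER": ["terrible", "worst", "hate", "ridiculous"],
    "TIME": ["hours", "days", "still waiting"],
    "FAILURE": ["useless", "no help", "doesn't work"],
    "ESCALATION": ["speak to human", "real person", "manager"],
}

# One whole-word pattern per category, so "days" does not match "Sundays".
PATTERNS = {
    category: re.compile(
        r"\b(?:" + "|".join(re.escape(k) for k in keywords) + r")\b"
    )
    for category, keywords in FRUSTRATION_KEYWORDS.items()
}


def detect_frustration(query: str) -> list:
    """Return the frustration categories whose keywords appear in the query."""
    text = query.lower().replace("\u2019", "'")  # normalize curly apostrophes
    return [cat for cat, pattern in PATTERNS.items() if pattern.search(text)]


def route(query: str) -> str:
    """Skip search entirely when any frustration signal is present."""
    return "human_support" if detect_frustration(query) else "search"
```

Plain keyword matching is deliberately blunt: a neutral question such as "does it ship in 3 days" would also trip the time category, so the lists need tuning against real traffic.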

Cross-industry applications

The Intent-First pattern applies wherever enterprises deploy conversational AI over heterogeneous content:

| Industry | Intent categories | Key benefit |
| --- | --- | --- |
| Telecommunications | Sales, Support, Billing, Account, Retention | Prevents "cancel" misclassification |
| Healthcare | Clinical, Coverage, Scheduling, Billing | Separates clinical from administrative |
| Financial services | Retail, Institutional, Lending, Insurance | Prevents context mixing |
| Retail | Product, Orders, Returns, Loyalty | Ensures promotional freshness |

Results

After implementing Intent-First architecture across telecommunications and healthcare platforms:

Reduced by more than half | |

Reduced approximately 70% | |

Improved roughly 50% | |

The return user rate proved most significant. When search works, users come back. When it fails, they abandon the channel entirely, increasing costs across all other support channels.

The strategic imperative

The conversational AI market will continue to experience hyper growth.

But enterprises that build and deploy typical RAG architectures will continue to fail … repeatedly.

Their systems will confidently give wrong answers, users will abandon digital channels out of frustration and support costs will go up instead of down.

Intent-First is a fundamental shift in how enterprises need to architect and build AI-powered customer conversations. It’s not about better models or more data. It’s about understanding what a user wants before you try to help them.

The sooner an organization realizes this as an architectural imperative, the sooner they will be able to capture the efficiency gains this technology is supposed to enable. Those that don’t will be debugging why their AI investments haven’t been producing expected business outcomes for many years to come.

The demo is easy. Production is hard. But the pattern for production success is clear: Intent First.

Sreenivasa Reddy Hulebeedu Reddy is a lead software engineer and enterprise architect