The RTX 5090 isn’t just another iteration; it’s a deliberate step toward meeting the growing demands of AI at scale. Aimed at enterprise-grade workloads, it introduces features that could make it a standout choice for organizations pushing the limits of what’s possible in data centers.

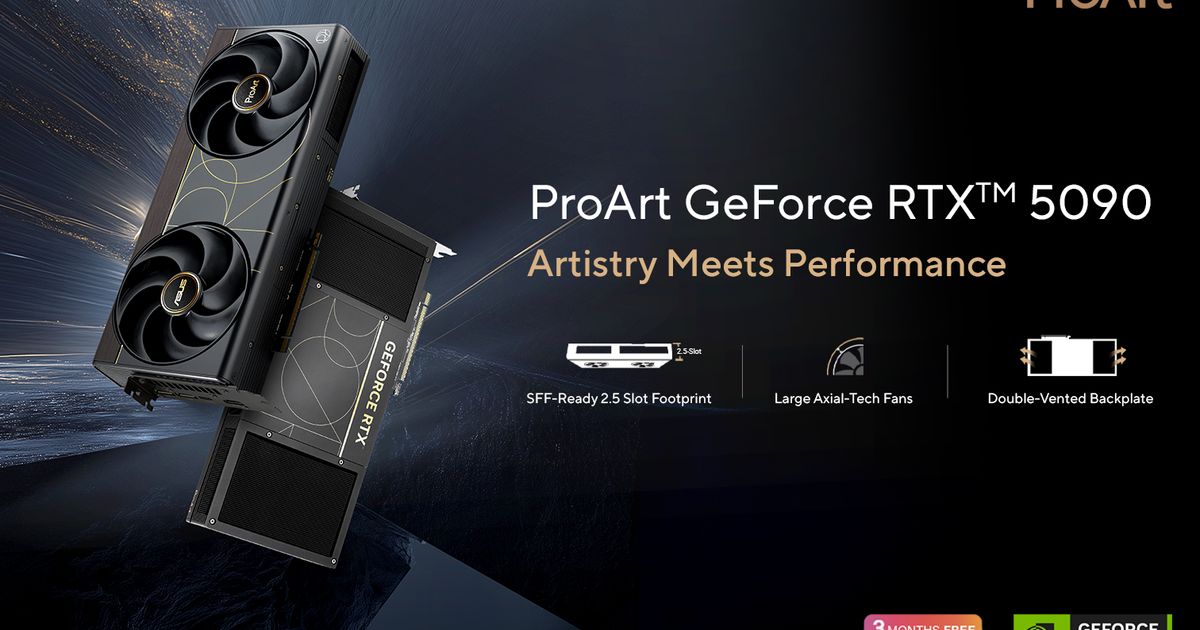

One of its key differentiators is a substantial increase in compute power, particularly in tensor-operation throughput. The goal is not raw performance alone but faster workflows for AI models that need both speed and precision. The architecture also emphasizes memory efficiency, with 32 GB of GDDR7 memory suited to high-bandwidth tasks such as training large models.
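To make the memory question concrete, here is a rough back-of-the-envelope sketch for checking whether a model's training state fits on a single card. It assumes the commonly cited figure of about 16 bytes per parameter for mixed-precision training with Adam (half-precision weights and gradients plus single-precision master weights and two optimizer moments) and ignores activations entirely. The function names and the 80% headroom factor are illustrative assumptions, not anything specified for this GPU.

```python
def training_memory_gb(num_params: float, bytes_per_param: float = 16.0) -> float:
    """Approximate memory for weights, gradients, and Adam state, in GB.

    16 bytes/param is a rule of thumb for mixed-precision Adam training;
    activations and framework overhead are NOT included.
    """
    return num_params * bytes_per_param / 1e9


def fits_in_vram(num_params: float, vram_gb: float,
                 bytes_per_param: float = 16.0, headroom: float = 0.8) -> bool:
    """Return True if the estimated training state fits in a fraction
    (headroom, an assumed safety margin) of the card's memory."""
    return training_memory_gb(num_params, bytes_per_param) <= vram_gb * headroom


if __name__ == "__main__":
    # Hypothetical model sizes, for illustration only.
    for params in (1e9, 3e9, 7e9):
        print(f"{params / 1e9:.0f}B params: ~{training_memory_gb(params):.0f} GB of training state")
```

Pass the card's memory capacity as `vram_gb` to see which model sizes are plausible on one device. For inference in half precision, setting `bytes_per_param=2.0` gives a similarly rough estimate.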

Power consumption remains a critical factor, especially in enterprise environments where performance per watt matters as much as raw performance. The RTX 5090 addresses this with advanced cooling and power delivery systems designed to sustain peak efficiency without compromising reliability, a balance that could set a new standard for AI hardware.
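The efficiency trade-off described above can be put into numbers with a small calculation. The sketch below computes throughput per watt and a yearly electricity cost for one card. Every input (power draw, utilization, electricity price, the PUE overhead factor) is a placeholder to be replaced with measured values, and the function names are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365


def perf_per_watt(throughput: float, avg_power_w: float) -> float:
    """Throughput (any unit, e.g. samples/s) divided by average power in watts."""
    return throughput / avg_power_w


def annual_energy_cost(avg_power_w: float, utilization: float,
                       price_per_kwh: float, pue: float = 1.0) -> float:
    """Estimated yearly electricity cost for one card.

    utilization: fraction of the year the card runs at avg_power_w (0 to 1).
    pue: data center power usage effectiveness (cooling and other overhead);
         1.0 means no overhead is assumed.
    """
    kwh = avg_power_w / 1000 * HOURS_PER_YEAR * utilization * pue
    return kwh * price_per_kwh


if __name__ == "__main__":
    # Placeholder inputs, not measured figures for any specific GPU.
    print(f"{perf_per_watt(1000.0, 500.0):.2f} samples/s per watt")
    print(f"${annual_energy_cost(500.0, 0.7, 0.12, pue=1.4):,.0f} per year")
```

Comparing two cards this way only makes sense when throughput is measured on the same workload, since efficiency varies with model, batch size, and precision.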

Despite its potential, the RTX 5090 faces challenges, particularly around its price point. At a launch MSRP of $1,999, it is positioned as a premium offering, which may limit accessibility for smaller teams or startups. The real test will be whether software optimization and ecosystem support can keep pace with its hardware advancements.

If successful, the RTX 5090 could redefine what enterprise-grade AI hardware should deliver, both in raw performance and in how well it integrates into existing workflows. For now, it remains a product that could either solidify NVIDIA’s dominance or force competitors to raise their game in response.