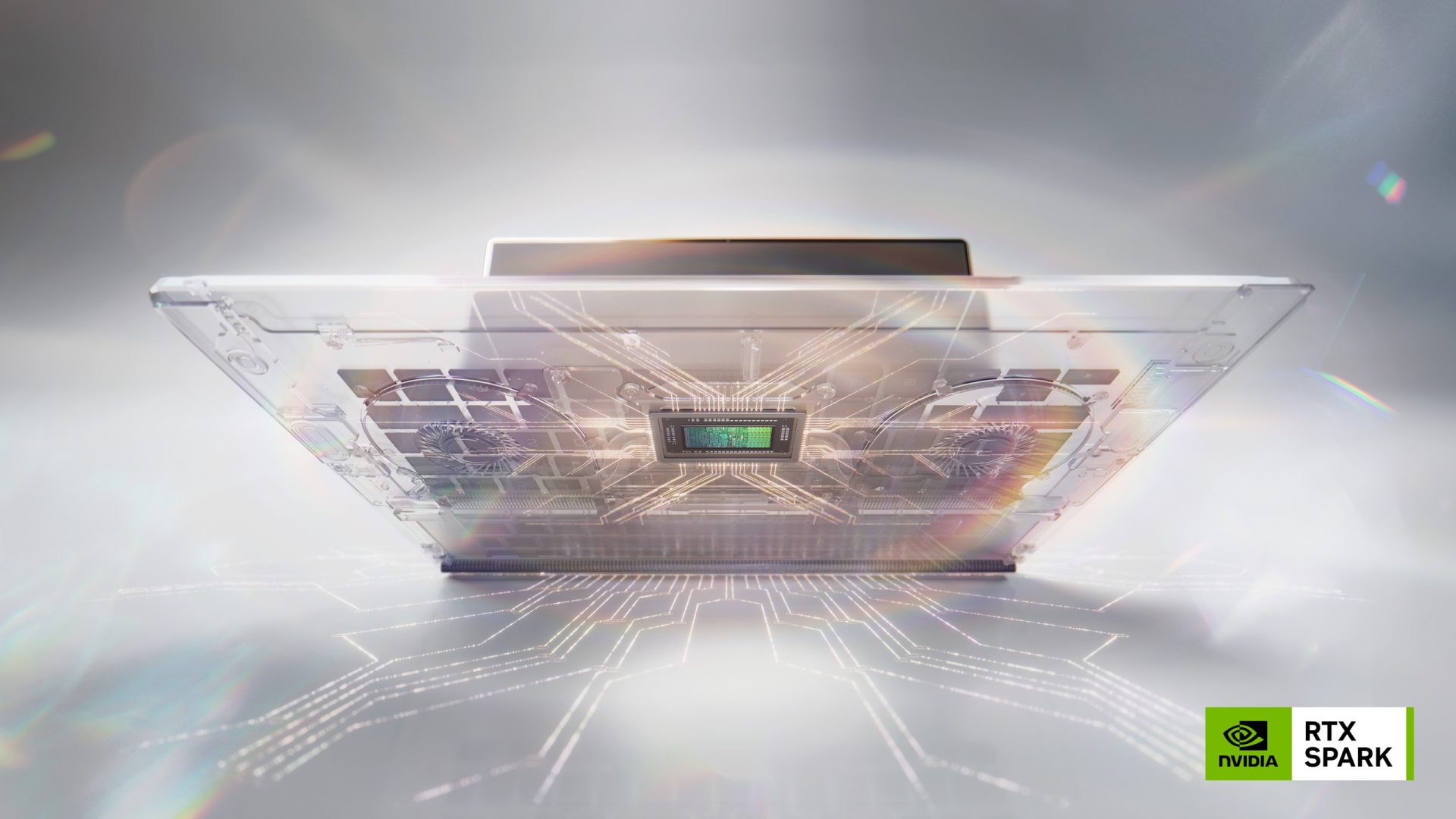

NVIDIA has announced that its BlueField-4 data processor now serves as the core component of a new storage infrastructure built for AI-native applications. The announcement targets the distinct and growing storage requirements of modern AI systems.

The design philosophy behind the BlueField platform is to provide dedicated hardware acceleration aligned with the needs of AI workloads. Traditional storage systems are built as general-purpose devices, which often adds processing overhead and creates bottlenecks when they are integrated into AI workflows. The BlueField-4 addresses these problems with a specialized architecture optimized for the data access patterns common in machine learning and deep learning applications.

Understanding the Need: AI’s Storage Demands

The rapid growth of AI, particularly generative models and large language models (LLMs), has placed unprecedented demands on storage infrastructure. Training and serving these models involves massive datasets and calls for high bandwidth, low-latency data access, and efficient memory management. Traditional storage systems frequently struggle to keep pace, which limits performance and raises operational costs.

The volume of data generated by AI is constantly increasing, fueling the demand for scalable and responsive storage solutions. Furthermore, the nature of AI workloads – often involving complex matrix operations and large-scale parallel processing – necessitates hardware that can efficiently handle these computations directly at the storage level. This is where the BlueField-4 platform’s strategic focus comes into play.

BlueField-4: A Dedicated Solution

The BlueField-4 data processor itself is a key element of this new infrastructure. Built upon NVIDIA's advanced System on Chip (SoC) architecture, it combines high-performance processing capabilities with integrated memory controllers and I/O interfaces. This tightly coupled design minimizes latency and maximizes bandwidth – critical factors for AI storage.

Specifically, the BlueField-4 incorporates features designed to accelerate data movement and processing:

- High Bandwidth Memory (HBM) Support: Enables rapid access to large datasets.

- Optimized I/O Interfaces: Facilitates high-speed data transfer between the storage system and compute nodes.

- Hardware Acceleration for AI Workloads: Dedicated processing units accelerate common AI operations, such as matrix multiplication and convolution.

AI-Native Storage Infrastructure

The BlueField-4 isn’t simply a processor; it’s the foundation of a new type of storage infrastructure designed from the ground up for AI. This ‘AI-native’ approach prioritizes performance, efficiency, and integration with AI frameworks. The system is engineered to work with popular machine learning platforms, streamlining the development and deployment of AI applications.

This new infrastructure aims to reduce the computational burden on CPUs and GPUs, allowing them to focus exclusively on AI model training and inference. By offloading data access and processing tasks to the BlueField-4, the overall system becomes more responsive and efficient.
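
The offloading idea can be pictured in software terms with a small sketch. This is not BlueField-4 code and does not use any NVIDIA API: a background thread stands in for the data processor, and `load_batch`, `train_step`, `prefetching_loader`, and `run` are hypothetical names with sleeps standing in for storage latency and accelerator work. It only illustrates the general pattern of moving data access off the compute path so that fetching the next batch overlaps with processing the current one.

```python
import queue
import threading
import time


def load_batch(index: int) -> list[int]:
    """Hypothetical storage read; the sleep models I/O latency."""
    time.sleep(0.01)
    return [index] * 4


def train_step(batch: list[int]) -> int:
    """Hypothetical compute step; the sleep models accelerator work."""
    time.sleep(0.01)
    return sum(batch)


def prefetching_loader(num_batches: int, depth: int = 2):
    """Yield batches that a background thread fetched ahead of time."""
    buffer: queue.Queue = queue.Queue(maxsize=depth)
    sentinel = object()  # marks the end of the stream

    def producer() -> None:
        for i in range(num_batches):
            buffer.put(load_batch(i))  # blocks while the buffer is full
        buffer.put(sentinel)

    # The thread plays the role of the offload engine (an assumption
    # made for illustration, not a model of the real hardware).
    threading.Thread(target=producer, daemon=True).start()

    while True:
        item = buffer.get()
        if item is sentinel:
            return
        yield item


def run(num_batches: int = 5) -> list[int]:
    """Consume prefetched batches; compute never issues a read itself."""
    return [train_step(batch) for batch in prefetching_loader(num_batches)]


if __name__ == "__main__":
    print(run())
```

The bounded queue is the main design choice here: it lets the fetcher run ahead of the consumer by at most `depth` batches, so memory use stays fixed while reads and compute still overlap.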

Applications & Use Cases

The potential applications for this new storage infrastructure span numerous industries. Some key areas include:

- Generative AI: Accelerating the training of generative models, such as those used to create images, text, and music.

- Large Language Models (LLMs): Providing fast and reliable storage for LLM training and inference, enabling more responsive conversational AI systems.

- Scientific Computing: Supporting data-intensive scientific simulations and research in fields like drug discovery and materials science.

- Autonomous Vehicles: Processing the massive amounts of sensor data generated by autonomous vehicles.

Future Implications

The introduction of the BlueField-4 and its associated AI-native storage infrastructure marks a shift in how AI systems are supported. It represents a move away from general-purpose storage solutions towards specialized hardware designed to meet the demands of modern AI workloads. As AI continues to evolve and become more computationally intensive, this type of dedicated storage is likely to play an increasingly important role.

NVIDIA’s focus on tightly integrated hardware and software is a key differentiator in the rapidly evolving landscape of AI infrastructure. The BlueField-4 represents a strategic investment in enabling the next generation of AI applications, promising to unlock new possibilities and accelerate innovation across various industries.