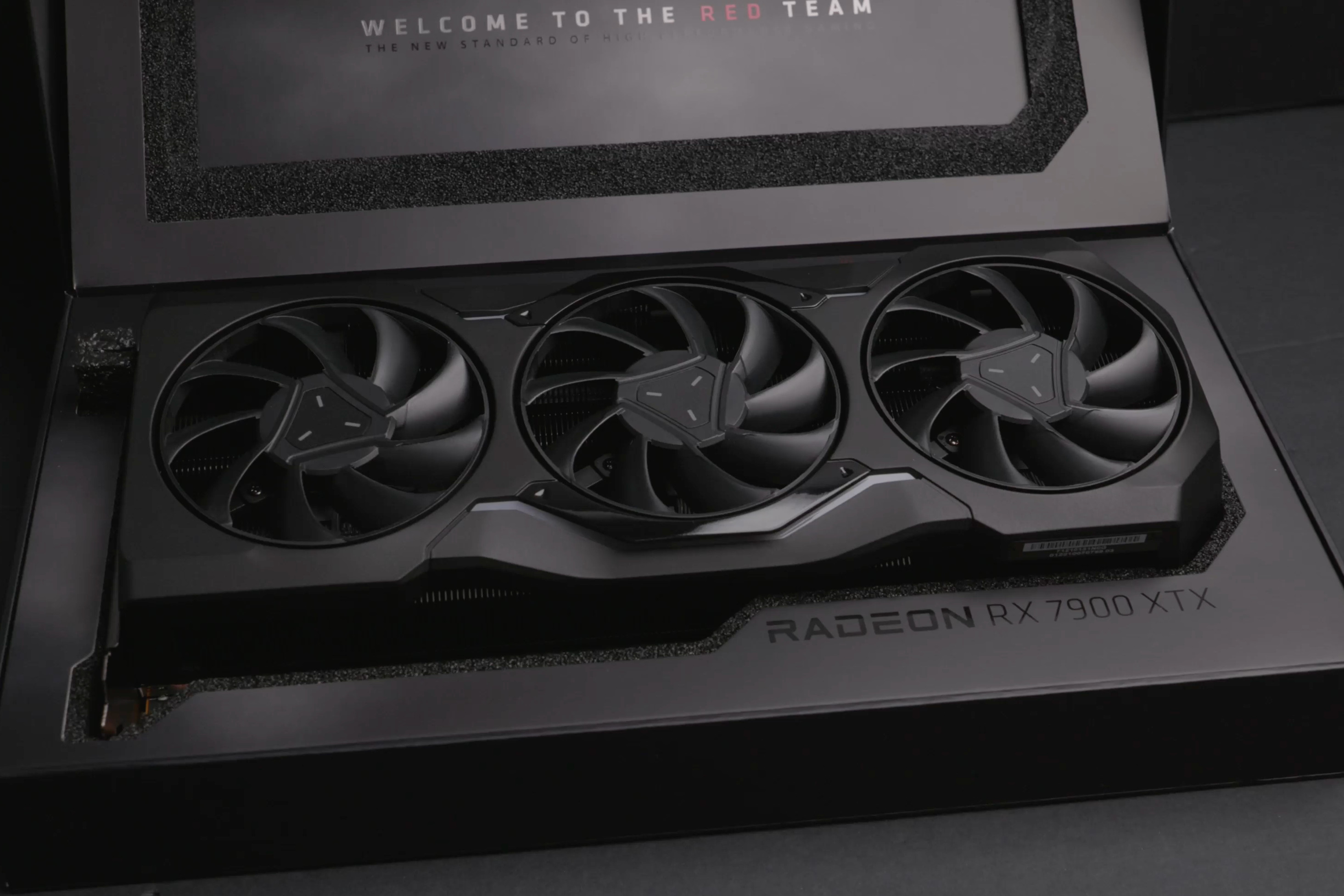

A new AI accelerator arrives with 1408 cores, 3072 CUDA units, and a peak clock of 5.5 GHz, specifications that place it at the high end of current hardware on paper.

That’s the upside. The catch: power consumption reaches 600 watts, and memory bandwidth is capped at 1,935 GB/s. The design is built on a 7-nanometer node, and thermal constraints force a derated clock in sustained workloads.

Core Density vs. Thermal Limits

The chip’s core count of 1408 isn’t just a number; it reflects a shift toward wider SIMD pipelines that trade raw single-thread performance for massive batch processing. Benchmarks show near-linear scaling up to 32 parallel streams; beyond that, thermal throttling cuts peak throughput by roughly 15 percent. The sweet spot is therefore not brute-force workloads that saturate the chip but tightly optimized, low-latency inference tasks that stay within the range where scaling holds.
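As a rough illustration of the scaling behavior described above, the following Python sketch models relative throughput as linear up to 32 streams and then applies the roughly 15 percent penalty to the 32-stream peak. The function name, the normalized per-stream unit, and the flat curve past the limit are assumptions made for illustration; the figures quoted here do not say how throughput behaves between 32 streams and full throttling.

```python
LINEAR_LIMIT = 32        # streams with near-linear scaling (figure from the article)
THROTTLE_PENALTY = 0.15  # approximate loss of peak throughput past the limit


def relative_throughput(streams: int, per_stream: float = 1.0) -> float:
    """Toy model: linear scaling up to LINEAR_LIMIT, then a throttled plateau.

    `per_stream` is a hypothetical normalized unit, not a measured value.
    """
    if streams < 1:
        raise ValueError("streams must be at least 1")
    if streams <= LINEAR_LIMIT:
        return streams * per_stream
    # Past the limit, assume throughput settles at the peak minus the penalty.
    peak = LINEAR_LIMIT * per_stream
    return peak * (1.0 - THROTTLE_PENALTY)


if __name__ == "__main__":
    for n in (8, 32, 48):
        print(f"{n:>2} streams -> {relative_throughput(n):.1f} units")
```

Under these assumptions, pushing from 32 to 48 streams lowers total throughput instead of raising it, which is why staying at or below the linear range looks attractive.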

Memory and Bandwidth Bottlenecks

Despite the 7 nm process, memory bandwidth is fixed at 1,935 GB/s, about half of what some competitors promise on paper. The limit does not stem from the silicon; it is a deliberate architectural choice to balance power draw and sustained throughput. For enterprise buyers, this means workloads must be partitioned carefully to avoid stalls, especially in multi-device setups where aggregate bandwidth matters more than single-device peaks.
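To make the partitioning point concrete, the sketch below estimates a lower bound on the time needed to stream a model's weights from memory once at 1,935 GB/s. It assumes a purely memory-bound workload with weights sharded evenly across devices, and it ignores interconnect traffic and compute time. The function name and the 70 GB weight size in the usage lines are hypothetical.

```python
BANDWIDTH_GB_S = 1935.0  # per-device memory bandwidth quoted in the article


def min_pass_time_ms(weight_gb: float, devices: int = 1) -> float:
    """Lower bound, in milliseconds, on reading all weights once.

    Assumes even sharding across `devices` and no interconnect or compute cost,
    so real workloads will be slower than this estimate.
    """
    if weight_gb <= 0 or devices < 1:
        raise ValueError("weight_gb must be positive and devices at least 1")
    shard_gb = weight_gb / devices          # each device reads only its shard
    return shard_gb / BANDWIDTH_GB_S * 1000.0


if __name__ == "__main__":
    model_gb = 70.0  # hypothetical model size, for illustration only
    for n in (1, 2, 4):
        print(f"{n} device(s): >= {min_pass_time_ms(model_gb, n):.2f} ms per pass")
```

Because the per-pass floor falls only as the shard shrinks, an uneven partition leaves the device holding the largest shard as the bottleneck.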

Power and Future-Proofing

The 600-watt TDP is a hard constraint, not a suggestion. That rules out dense rack deployments without premium cooling or liquid immersion, pushing the chip toward edge servers with dedicated power budgets rather than data centers crammed with standard 1U nodes. Looking ahead, the next generation, rumored to debut in late 2026, may finally address the bandwidth and thermal tradeoffs. For now, this iteration is a stopgap between current limits and what’s feasible.
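A back-of-the-envelope power calculation shows why density is the problem. The sketch below counts how many 600-watt accelerators fit into a rack power budget after reserving a share for hosts, fans, and networking. The 10 kW and 40 kW budgets and the 30 percent overhead are hypothetical values chosen for illustration, not figures from any vendor.

```python
TDP_W = 600  # accelerator TDP quoted in the article


def max_accelerators(rack_budget_w: int, overhead_pct: int = 30) -> int:
    """Accelerators that fit in a rack power budget.

    `overhead_pct` is a hypothetical share of the budget reserved for host
    CPUs, fans, and networking. Integer math avoids rounding surprises.
    """
    if rack_budget_w < 0 or not 0 <= overhead_pct <= 100:
        raise ValueError("invalid budget or overhead percentage")
    usable_w = rack_budget_w * (100 - overhead_pct) // 100
    return usable_w // TDP_W


if __name__ == "__main__":
    for budget in (10_000, 40_000):
        print(f"{budget} W rack -> {max_accelerators(budget)} accelerators")
```

With these assumed numbers, a 10 kW rack holds 11 accelerators and a 40 kW rack holds 46, so the achievable density depends far more on the facility's power and cooling than on physical slot count.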

Key specifications:

- Cores: 1408
- CUDA Units: 3072
- Boost Clock: 5.5 GHz (derated in sustained use)
- Process Node: 7 nm
- Memory Bandwidth: 1,935 GB/s
- TDP: 600 watts

The chip targets AI inference at scale, but its real-world impact hinges on how well software stacks can hide the thermal and bandwidth constraints. For enterprises already locked into high-density deployments, this is a compromise, one that may or may not be worth accepting while waiting for the next generation.