IT teams often face the challenge of balancing performance with efficiency, especially when workloads shift unpredictably. A recent benchmark update introduces a new way to measure system performance, but its full impact remains unclear. What is confirmed? A more refined approach to analyzing system behavior under load. What’s still unconfirmed? How this translates into tangible improvements for everyday users.

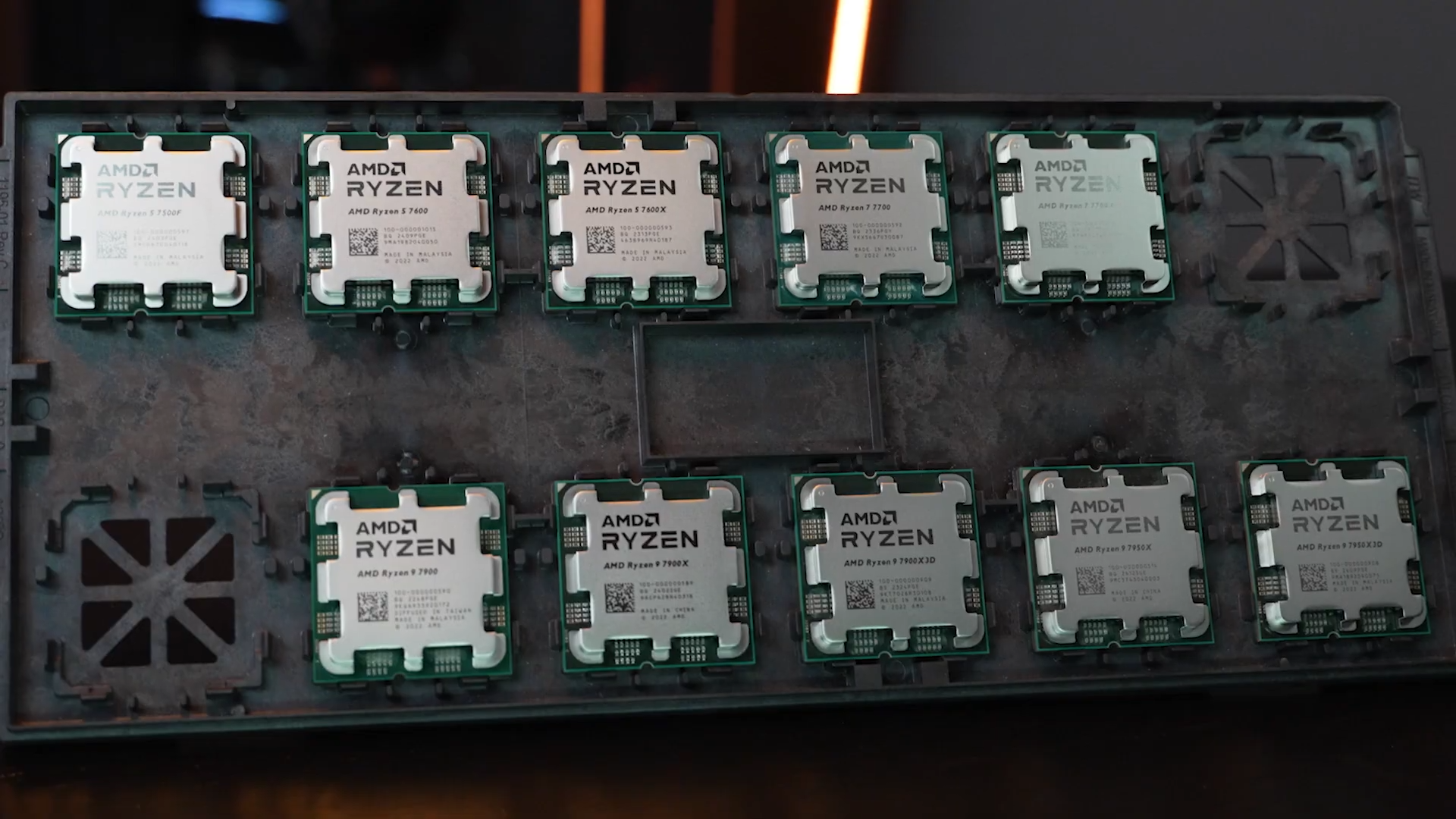

At the core of this development lies a shift in how systems are tuned. Traditionally, performance tuning has relied on static benchmarks: fixed workloads designed to stress-test components such as the CPU, GPU, or RAM. These benchmarks provide a snapshot but often fail to capture the dynamic nature of real-world computing. The new approach aims to close this gap by incorporating variable workloads that more closely mimic everyday tasks.

What’s Improved

The most notable change is a more adaptive benchmarking framework. Instead of relying on a single, rigid test scenario, the new method uses multiple workload profiles that adjust in real time based on system behavior. This adaptability makes it possible to examine how components interact under different conditions.

- Dynamic workload adjustment: The benchmark dynamically shifts between CPU-intensive, GPU-intensive, and memory-bound tasks to simulate a broader range of real-world scenarios.

- Real-time performance analysis: System metrics like thermal throttling, power draw, and component utilization are tracked in parallel with workload execution, providing a more holistic view of performance.

- Improved tuning recommendations: Based on the analysis, the system generates tailored suggestions for optimization, such as adjusting CPU/GPU clock speeds or memory timings, rather than relying on one-size-fits-all presets.
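The three points above can be illustrated with a minimal Python sketch. It is not the benchmark's actual implementation, which the update does not describe in detail. The `Sample` record, the `next_profile` and `recommend` helpers, the 90 C thermal limit, and the 0.95 utilization threshold are all hypothetical, and the metrics are hard-coded rather than read from real hardware.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical workload profiles, mirroring the three task types named above.
PROFILES = ("cpu", "gpu", "memory")


@dataclass
class Sample:
    """One metrics reading taken while a workload profile was running."""
    profile: str        # which workload profile was active
    temp_c: float       # component temperature in degrees Celsius
    power_w: float      # power draw in watts
    utilization: float  # utilization of the stressed component, 0.0 to 1.0


def next_profile(current: str, sample: Sample, temp_limit_c: float = 90.0) -> str:
    """Rotate to the next profile once the current one hits the thermal
    limit or saturates its component; otherwise keep the current profile."""
    if sample.temp_c >= temp_limit_c or sample.utilization >= 0.95:
        return PROFILES[(PROFILES.index(current) + 1) % len(PROFILES)]
    return current


def recommend(samples: List[Sample], temp_limit_c: float = 90.0) -> List[str]:
    """Produce one tuning note per profile that was actually exercised."""
    notes = []
    for profile in PROFILES:
        runs = [s for s in samples if s.profile == profile]
        if not runs:
            continue  # no data for this profile, so no recommendation
        peak = max(s.temp_c for s in runs)
        if peak >= temp_limit_c:
            notes.append(f"{profile}: peak {peak:.0f} C reached the limit; "
                         "consider lower clocks or better cooling")
        else:
            notes.append(f"{profile}: peak {peak:.0f} C, "
                         f"{temp_limit_c - peak:.0f} C of thermal headroom")
    return notes


# Simulated readings (assumed values, for illustration only).
samples = [
    Sample("cpu", 72.0, 95.0, 0.80),
    Sample("cpu", 91.0, 120.0, 0.97),
    Sample("gpu", 68.0, 210.0, 0.90),
]
notes = recommend(samples)
```

In this sketch the second CPU sample crosses both thresholds, so `next_profile("cpu", samples[1])` moves on to the GPU profile, and the recommendations are derived per profile from the recorded samples instead of from a fixed preset.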

Consider a user running a video editing workload that suddenly shifts to rendering 3D graphics. A static benchmark might treat this transition as an anomaly. The new approach treats it as one phase of a continuous workload, which allows for more accurate performance predictions and optimizations.
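One way to picture the difference is to label every stretch of a utilization trace by its dominant component instead of discarding the samples around a workload change. The sketch below assumes a trace of `(cpu_util, gpu_util)` pairs; the `dominant` and `segment_phases` helpers and the sample values are hypothetical and only illustrate the idea, not how the benchmark itself works.

```python
from itertools import groupby


def dominant(cpu_util, gpu_util):
    """Label a sample by whichever component is busier (ties go to the CPU)."""
    return "cpu" if cpu_util >= gpu_util else "gpu"


def segment_phases(trace):
    """Split a trace into contiguous phases as (label, first_index, last_index).

    Every sample lands in exactly one phase, so a shift from one workload
    type to another starts a new phase rather than being dropped as noise.
    """
    phases = []
    index = 0
    for label, group in groupby(trace, key=lambda s: dominant(*s)):
        length = len(list(group))
        phases.append((label, index, index + length - 1))
        index += length
    return phases


# Assumed trace: two CPU-heavy editing samples, then three GPU-heavy rendering samples.
trace = [(0.9, 0.3), (0.8, 0.4), (0.4, 0.9), (0.3, 0.95), (0.2, 0.9)]
phases = segment_phases(trace)
print(phases)  # [('cpu', 0, 1), ('gpu', 2, 4)]
```

Each phase can then be analyzed on its own terms, which is the sense in which the transition becomes part of the measurement instead of an outlier.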

Why It Matters

For IT teams managing complex workloads, this development could be significant. Freed from the limitations of static benchmarks, they may be able to fine-tune systems with greater precision. However, the real-world benefits for everyday users remain uncertain.

The new method's complexity means it may not yet be ready to replace traditional tools entirely. While it offers a more nuanced view of system performance, it could be less accessible to casual users who rely on straightforward benchmarks to gauge their hardware’s capabilities. For now, IT teams should focus on integrating this tool into their existing workflows while keeping an eye on how it evolves.

What is confirmed? A more adaptive and detailed approach to benchmarking that promises deeper insight into system performance. What’s still unconfirmed? Whether this level of detail will translate into meaningful improvements for everyday users, or whether it will remain primarily a tool for advanced tuning and analysis. These questions may be answered as the technology matures.