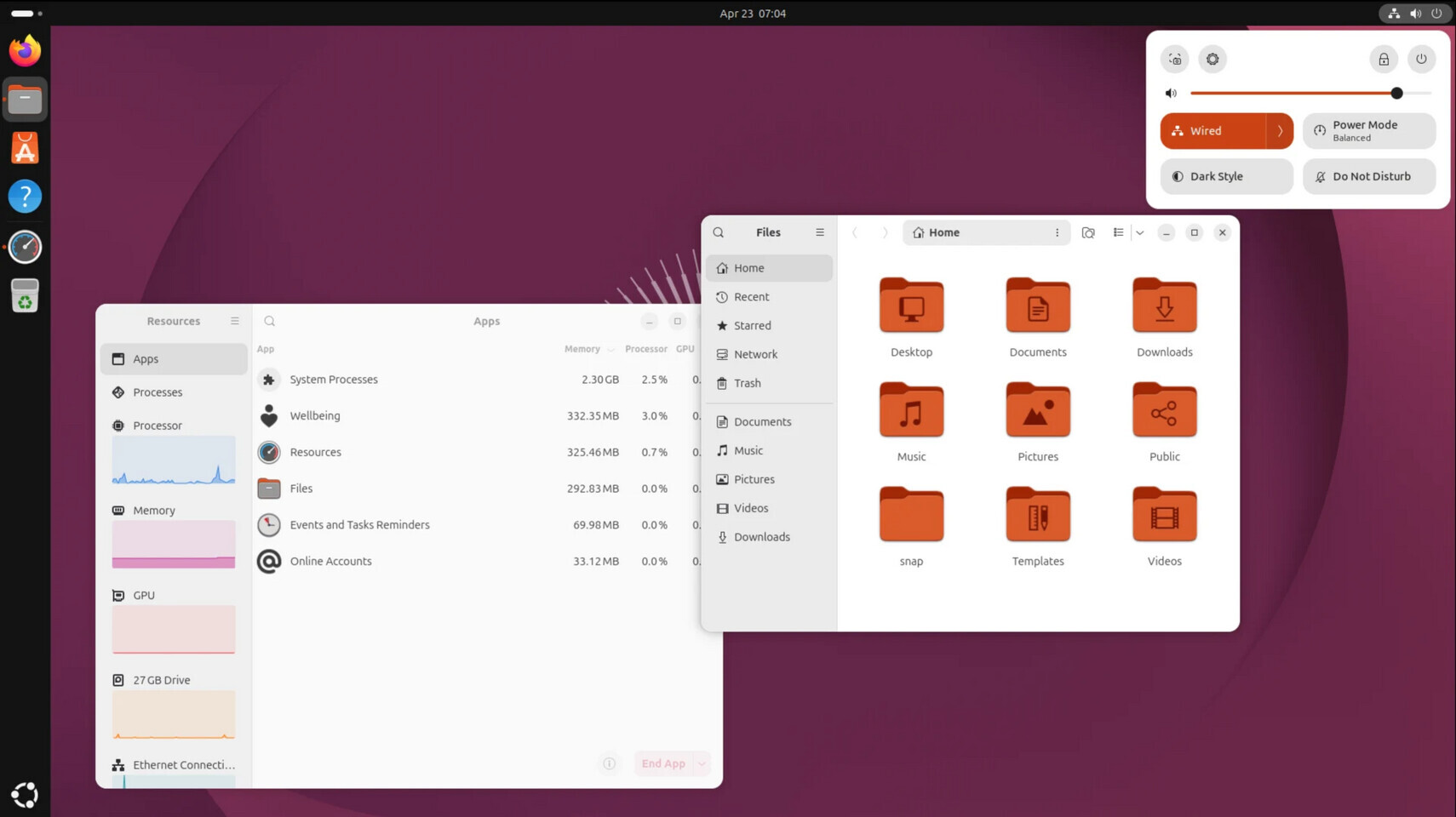

Starting with Ubuntu 26.10 Stonking Stingray in October 2026, the operating system will roll out new AI capabilities that users can choose to enable. These features are designed to improve existing functions—such as speech-to-text and OCR—while also introducing more advanced, agent-like workflows for automation and troubleshooting.

Unlike broader industry trends that often rely on proprietary models or cloud-based inference, Ubuntu’s approach prioritizes open-source solutions with clear licensing terms. Local inference will be the default, reducing dependency on external services while maintaining performance. This strategy aims to balance innovation with responsibility, ensuring transparency in how AI tools are integrated into the system.

Enhancing efficiency without sacrificing control

The AI features will fall into two categories: implicit and explicit. Implicit features subtly improve standard operations, such as accessibility tools that leverage open-source models for accuracy and efficiency. Explicit features, on the other hand, introduce new capabilities—like automated troubleshooting or personalized daily briefings—that require stricter security controls to prevent unintended side effects.

- Implicit AI: Enhances existing functions (e.g., speech-to-text, text-to-speech) with minimal user intervention.

- Explicit AI: Introduces new workflows (e.g., agentic automation, document authoring) with built-in safeguards.

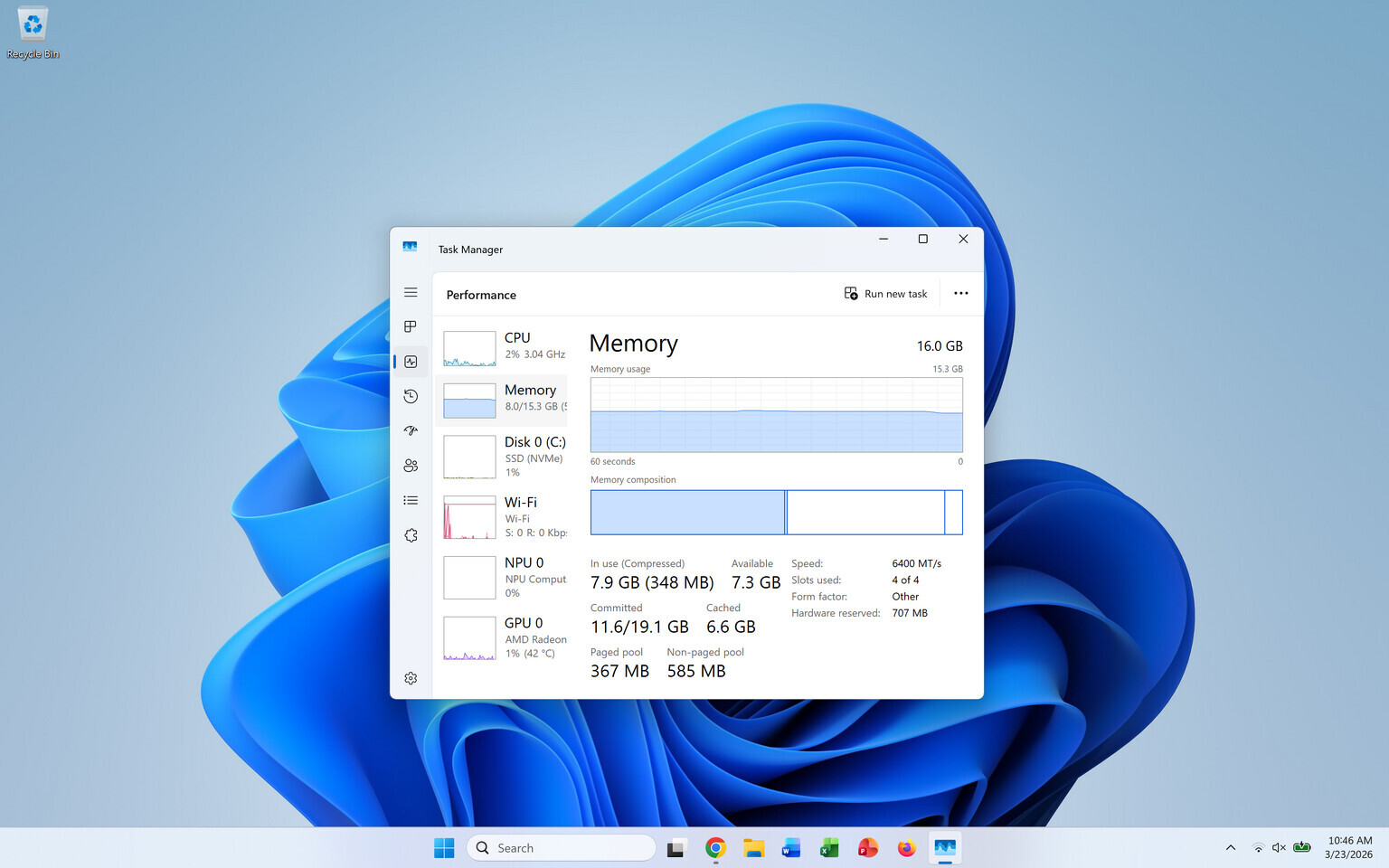

This dual approach lets users benefit from AI enhancements without compromising system stability or privacy. Canonical’s focus on local inference, built on optimized models such as Gemma 4 and Qwen-3.6-35B-A3B, aims to keep computational overhead manageable while retaining advanced capabilities such as tool calling for API interactions.
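To make the idea of tool calling with safeguards concrete, here is a minimal Python sketch. It is not Canonical's implementation. It assumes the model emits a JSON object with `name` and `arguments` fields, and the tool names (`read_uptime`, `count_log_lines`) and the `dispatch_tool_call` helper are hypothetical, chosen only for illustration. The dispatcher runs a requested tool only if it appears on an explicit allowlist.

```python
import json

# Hypothetical tools for illustration; a real system would expose its own.
def read_uptime() -> str:
    # Stubbed value so the sketch stays self-contained.
    return "up 3 days"

def count_log_lines(text: str) -> int:
    return len(text.splitlines())

# Only tools listed here may be invoked on the model's behalf.
ALLOWED_TOOLS = {
    "read_uptime": read_uptime,
    "count_log_lines": count_log_lines,
}

def dispatch_tool_call(raw: str):
    """Parse a model-emitted tool call (assumed JSON) and run it if allowlisted."""
    call = json.loads(raw)
    name = call.get("name")
    args = call.get("arguments", {})
    if name not in ALLOWED_TOOLS:
        raise PermissionError(f"tool not permitted: {name!r}")
    return ALLOWED_TOOLS[name](**args)

if __name__ == "__main__":
    request = '{"name": "count_log_lines", "arguments": {"text": "a\\nb\\nc"}}'
    print(dispatch_tool_call(request))
```

The allowlist is the design choice that matters here: a request for any tool outside it is rejected before anything runs, which is one simple way to limit unintended side effects from agent-like features.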

Why this matters for users and developers

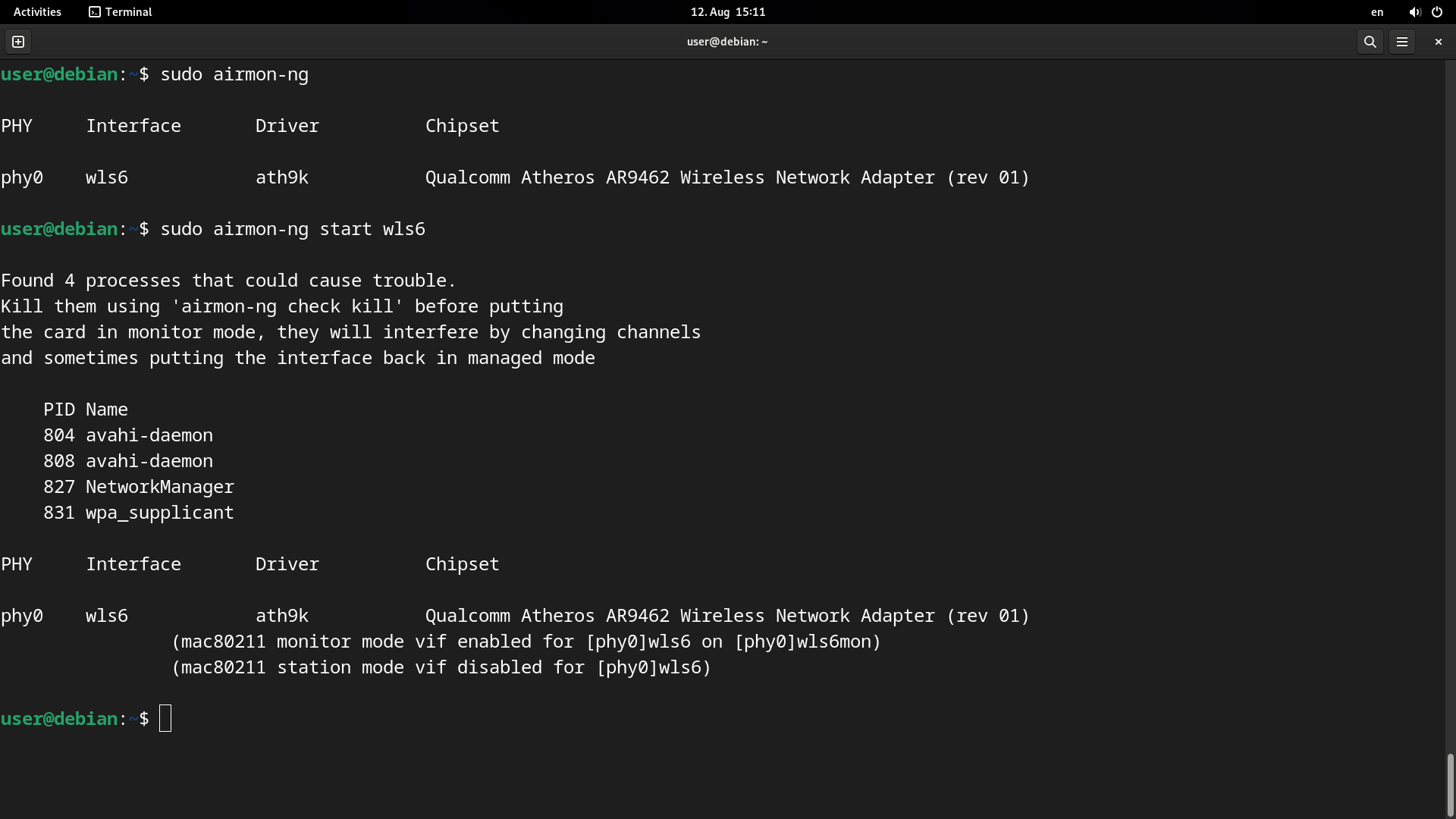

For power users, Ubuntu’s AI integration could streamline complex tasks like system administration or log analysis. Site Reliability Engineers (SREs) managing Ubuntu fleets may see faster root-cause identification during incidents, while everyday users gain improved accessibility tools without sacrificing control.

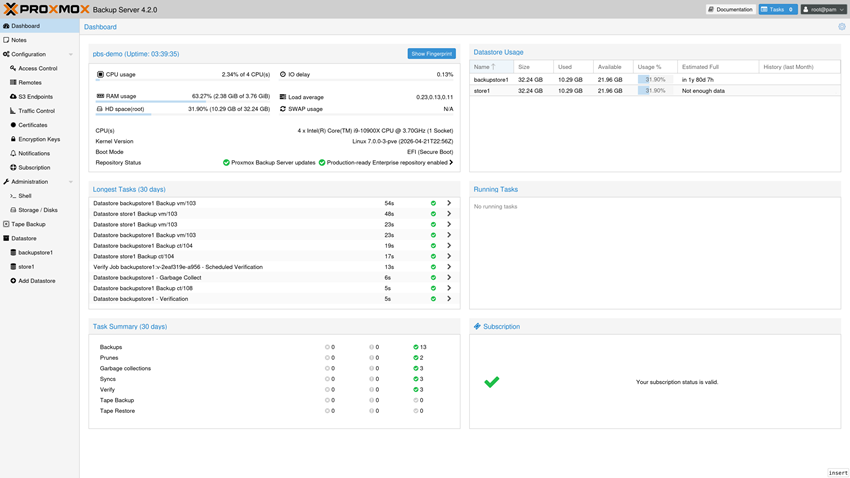

Canonical’s partnership with silicon manufacturers is meant to deliver hardware-optimized inference snaps, making it easier to deploy models like nemotron-3-nano with minimal setup. These snaps adhere to Ubuntu’s confinement rules, so models cannot access user data indiscriminately, a critical factor for enterprise adoption.

As AI tools become more widespread, Ubuntu’s measured approach sets a precedent for responsible integration in open-source ecosystems. By focusing on education, security, and performance, Canonical aims to deliver meaningful improvements without repeating past mistakes such as over-reliance on AI or a lack of transparency.

The next major release will mark the beginning of this transition, with further refinements expected as silicon capabilities advance. For now, users can expect a more capable OS—one that leverages AI thoughtfully while keeping operational costs and trust at the forefront.