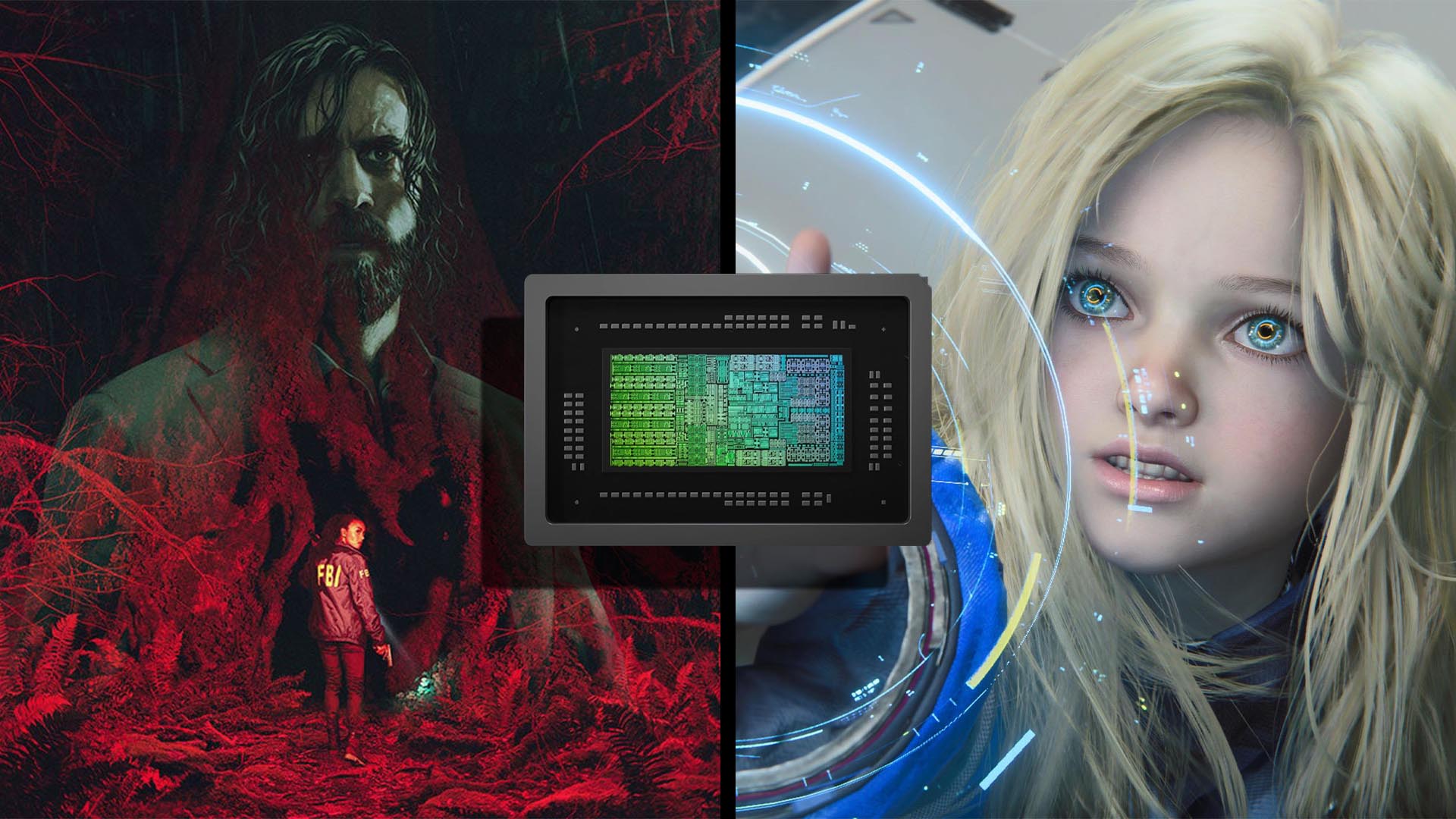

Tenstorrent has unveiled an AI model optimized for its Blackhole server platform, claiming it can generate a five-second video in just 2.4 seconds, roughly twice as fast as real time. The achievement hinges on a combination of hardware acceleration and software optimization, but the practical implications remain unclear.

The system is built around Tenstorrent’s Blackhole architecture, which integrates high-performance computing with AI-specific workloads. The exact details of the model, such as its size or training data, have not been disclosed, and the focus appears to be on raw throughput rather than quality or versatility. That emphasis raises questions about how such a system might fit into existing workflows, particularly for users who prioritize flexibility over speed.

For enthusiasts and developers, the immediate appeal lies in the headline metric: 2.4 seconds per five-second video is a benchmark that could redefine expectations for AI-generated content. However, the lack of transparency around power consumption, cost, and scalability calls for caution. If this speed comes at the expense of efficiency, it may not be as transformative as it seems.
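To put the headline figure in perspective, the short Python sketch below works out the implied real-time factor from the two numbers Tenstorrent has cited. The function name and the clips-per-hour estimate are illustrative assumptions, not anything the company has published, and the hourly figure assumes clips are generated back to back with no loading or queuing overhead.

```python
def real_time_factor(clip_seconds: float, generation_seconds: float) -> float:
    """Seconds of video produced per second of wall-clock generation time."""
    if clip_seconds <= 0 or generation_seconds <= 0:
        raise ValueError("durations must be positive")
    return clip_seconds / generation_seconds


# The only two figures taken from the announcement.
CLIP_SECONDS = 5.0
GENERATION_SECONDS = 2.4

rtf = real_time_factor(CLIP_SECONDS, GENERATION_SECONDS)

# Assumption: strictly sequential generation with zero overhead between clips.
clips_per_hour = 3600 / GENERATION_SECONDS

print(f"real-time factor: {rtf:.2f}x")
print(f"clips per hour (idealized): {clips_per_hour:.0f}")
```

A factor above 1.0 means the system produces video faster than it plays back. What the calculation cannot show is the energy or hardware cost behind each clip, which is precisely the information still missing.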

Everyday users, on the other hand, may find less to get excited about. The system is currently a prototype, with no clear path to consumer adoption. The focus appears to remain on enterprise or specialized use cases, leaving general audiences to wonder when—or if—this level of performance will trickle down.

A reality check: while the speed is impressive, the ecosystem around this technology is still nascent. Tenstorrent’s Blackhole servers are a relatively new entry in the AI hardware space, and their long-term viability remains untested. Without broader industry support or standardized workflows, even the most optimized models risk becoming niche solutions.

The most significant change here is not the speed alone but the potential shift in how AI-generated content is measured. If real-time, or faster-than-real-time, processing becomes the new baseline, it could pressure competitors to match this cadence. But for now, the question lingers: is this a step forward, or a distraction from what users actually need?