The NVIDIA Vera CPU marks a milestone in AI infrastructure, built to address the escalating demands of agentic intelligence. Unlike conventional processors, Vera is engineered from the ground up for the orchestration work that modern AI agents generate (planning tasks, executing tools, processing data, and validating results) while operating at significantly higher efficiency.

At its core, Vera represents a departure from traditional CPU design. It combines 88 custom Olympus cores, each able to run two tasks simultaneously through NVIDIA Spatial Multithreading, to keep performance consistent under the high utilization typical of agentic AI workloads. This architecture is paired with a second-generation low-power memory subsystem built on LPDDR5X that delivers up to 1.2 TB/s of bandwidth, which NVIDIA describes as double that of general-purpose CPUs at half the power. The result is a processor aimed at large-scale AI services, from coding assistants to enterprise-grade agentic systems.
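To put those per-socket figures in perspective, here is a minimal sketch of the arithmetic. It uses only the numbers quoted above. The variable and function names are illustrative, "1.2 TB/s" is assumed to mean 1,200 GB/s in decimal units, and the even per-core split is an idealization rather than a measured figure.

```python
# Figures quoted in the text; everything derived from them is back-of-envelope.
CORES_PER_CPU = 88
THREADS_PER_CORE = 2            # two tasks per core via Spatial Multithreading
MEMORY_BANDWIDTH_GBPS = 1200.0  # "up to 1.2 TB/s", assumed to be decimal GB/s


def hardware_threads(cores: int, threads_per_core: int) -> int:
    """Total simultaneous tasks one CPU can run."""
    return cores * threads_per_core


def bandwidth_per_core(total_gbps: float, cores: int) -> float:
    """Peak bandwidth share per core if all cores drew evenly (an idealization)."""
    return total_gbps / cores


threads = hardware_threads(CORES_PER_CPU, THREADS_PER_CORE)
per_core = bandwidth_per_core(MEMORY_BANDWIDTH_GBPS, CORES_PER_CPU)

print(f"{threads} hardware threads per CPU")
print(f"{per_core:.1f} GB/s of peak memory bandwidth per core")
```

Under these assumptions, a single Vera CPU exposes 176 hardware threads, and each core has roughly 13.6 GB/s of peak memory bandwidth available when all cores are busy.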

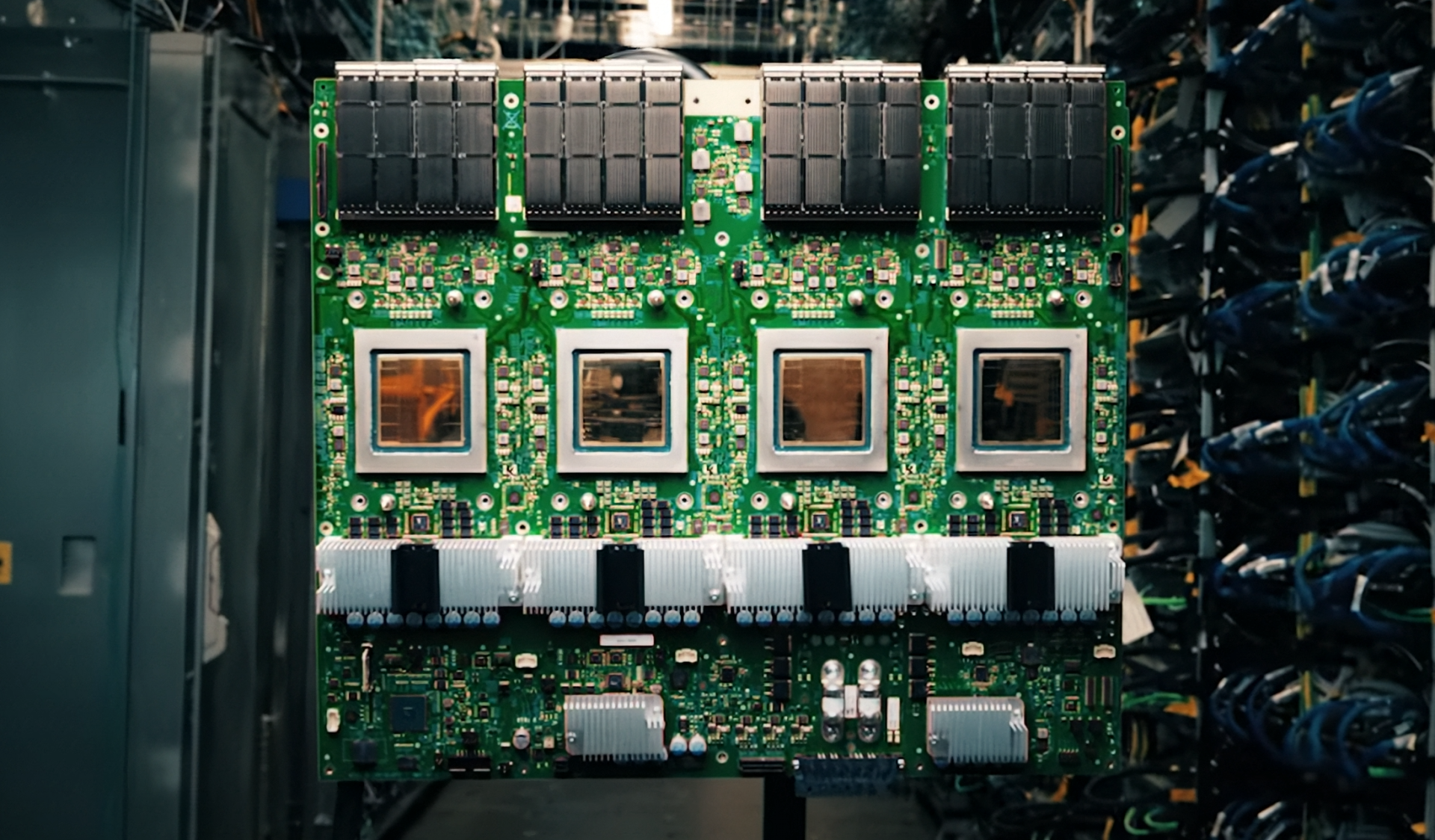

The impact of Vera extends beyond raw performance metrics. Its NVLink-C2C interconnect provides 1.8 TB/s of coherent bandwidth between CPUs and GPUs, seven times the bandwidth of PCIe Gen 6. This high-speed data sharing matters for workloads that demand tight coordination between processing units, such as reinforcement learning and multi-tenant AI factories. When deployed in the new Vera CPU rack, which houses 256 liquid-cooled Vera CPUs, the system can sustain over 22,500 concurrent environments, each operating at full performance. That density lets organizations run tens of thousands of simultaneous AI instances within a single rack.
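The rack and interconnect figures are easier to interpret with a little arithmetic. The sketch below assumes each of the 256 CPUs has the 88 cores quoted earlier and treats 1.8 TB/s as 1,800 GB/s. Reading the result as roughly one environment per core is an inference from the numbers, not something the source states, and the names are illustrative.

```python
# Figures quoted in the text.
CPUS_PER_RACK = 256
CORES_PER_CPU = 88
QUOTED_ENVIRONMENTS = 22_500   # "over 22,500 concurrent environments"
NVLINK_C2C_GBPS = 1800.0       # 1.8 TB/s, assumed to be decimal GB/s
QUOTED_PCIE_RATIO = 7          # "seven times" PCIe Gen 6

# Total physical cores in one rack.
rack_cores = CPUS_PER_RACK * CORES_PER_CPU

# PCIe Gen 6 baseline implied by the quoted ratio (derived, not a spec value).
implied_pcie_gbps = NVLINK_C2C_GBPS / QUOTED_PCIE_RATIO

print(f"Cores per rack: {rack_cores:,}")
print(f"Cores at least match quoted environments: {rack_cores >= QUOTED_ENVIRONMENTS}")
print(f"Implied PCIe Gen 6 baseline: {implied_pcie_gbps:.1f} GB/s")
```

The rack works out to 22,528 cores, which lines up with the "over 22,500" environment count if each environment is pinned to its own core. The implied PCIe baseline of about 257 GB/s is close to the bidirectional bandwidth commonly cited for a x16 PCIe 6.0 link.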

Vera's ecosystem support is broad. Hyperscalers including Alibaba, Meta, and Oracle Cloud Infrastructure, along with global system manufacturers such as Dell Technologies, HPE, and Lenovo, are already integrating Vera into their platforms. This adoption underscores its potential to become a standard for AI workloads that span developer tools to enterprise-scale applications. Additionally, NVIDIA's reference designs for dual- and single-socket CPU servers provide flexibility across use cases ranging from reinforcement learning to cloud applications.

While Vera's specifications are striking, its real-world implications are still unfolding. The processor's claimed ability to run 50% faster than traditional CPUs while maintaining energy efficiency would be a significant step forward. However, the long-term impact on AI development and deployment will depend on how quickly developers and enterprises adopt the new architecture. For now, Vera shows how far NVIDIA is willing to push CPU design in service of AI infrastructure.

The most important change Vera introduces is a redefinition of what CPU performance means for agentic AI. By combining high-performance cores with high-bandwidth, low-power memory and a coherent CPU-GPU interconnect, it sets a new benchmark for efficiency and scalability in large-scale AI systems. This shift will likely accelerate the adoption of agentic intelligence across industries, from scientific computing to cloud services.