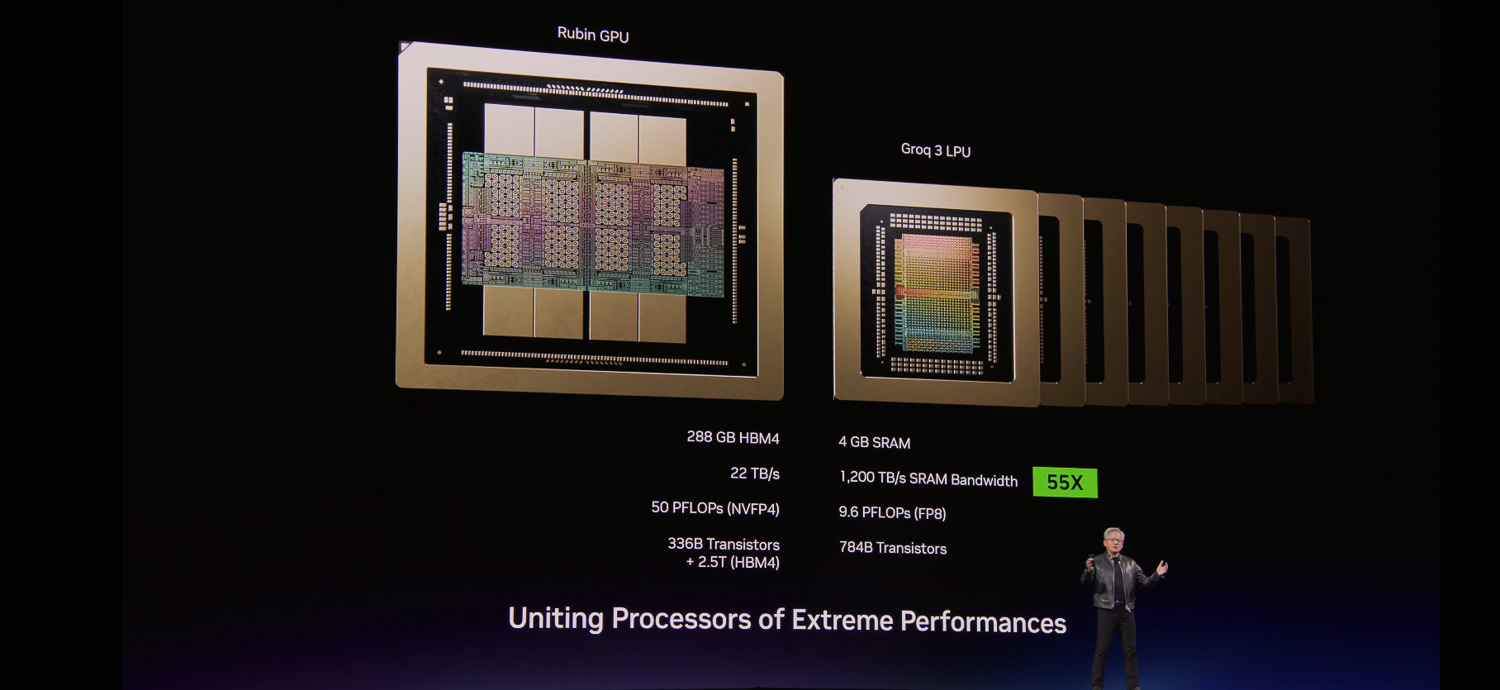

NVIDIA’s hardware roadmap is taking a decisive turn toward specialization, with three distinct processor families—Rubin GPUs, Groq LPUs, and Vera CPUs—emerging as the backbone of next-generation AI infrastructure. This shift reflects a broader industry trend: the growing need for workload-specific silicon to balance performance and cost in data centers handling trillion-parameter models.

The Rubin GPU, codenamed after the astronomer Edwin Hubble’s son, is designed to push the boundaries of inference efficiency. It inherits the CUDA architecture but introduces deeper optimizations for large-scale AI tasks. Meanwhile, Groq’s LPU (Language Processing Unit) takes a different approach, focusing on linear algebra operations with minimal memory overhead—a critical advantage in models where compute-bound bottlenecks dominate.

On the CPU front, NVIDIA’s Vera architecture aims to bridge the gap between traditional x86 performance and AI-accelerated workloads. It’s positioned as a high-frequency, multi-core design optimized for both general computing and AI offloading, though its exact role in NVIDIA’s ecosystem remains less clear than that of Rubin or Groq.

The key question for PC builders and data center operators is how these architectures will integrate into existing workflows. Rubin GPUs are expected to replace current-generation accelerators in high-end setups, offering better throughput per watt—a significant factor as power costs rise. Groq LPUs, with their specialized linear algebra cores, could redefine how inference is handled in large-scale deployments, potentially reducing operational expenses by up to 30% compared to traditional GPU-based solutions.

However, tradeoffs exist. Rubin’s efficiency gains come at the cost of broader compatibility; it won’t support all CUDA workloads out of the box, requiring software adjustments for legacy applications. Groq’s LPU, while impressive in raw performance, lacks the flexibility of a general-purpose GPU, limiting its use cases to specialized AI tasks. Vera CPUs, meanwhile, face an uphill battle against established x86 designs unless NVIDIA can demonstrate clear advantages in AI-accelerated workloads.

For end users, the most immediate impact will be in data centers running trillion-parameter models. Rubin GPUs could become the default choice for high-throughput inference, while Groq LPUs might find a niche in environments where linear algebra operations dominate. PC builders focusing on AI workstations may need to adapt their designs, balancing compatibility with the latest hardware trends.

The bigger picture is about operational cost. As models grow larger and more complex, the ability to optimize for specific workloads—whether through Rubin’s inference-focused GPU or Groq’s linear algebra cores—becomes a critical factor in maintaining profitability. NVIDIA’s strategy suggests that specialization, not just raw performance, will define the next wave of AI hardware.