training is hitting its limits—power requirements are soaring, and the hardware can’t keep up.

NVIDIA’s latest move, NemoClaw, targets that gap head-on. It’s not just a new GPU; it’s an attempt to redefine how data centers handle heavy workloads by merging performance with strategic ecosystem benefits.

The Performance Push

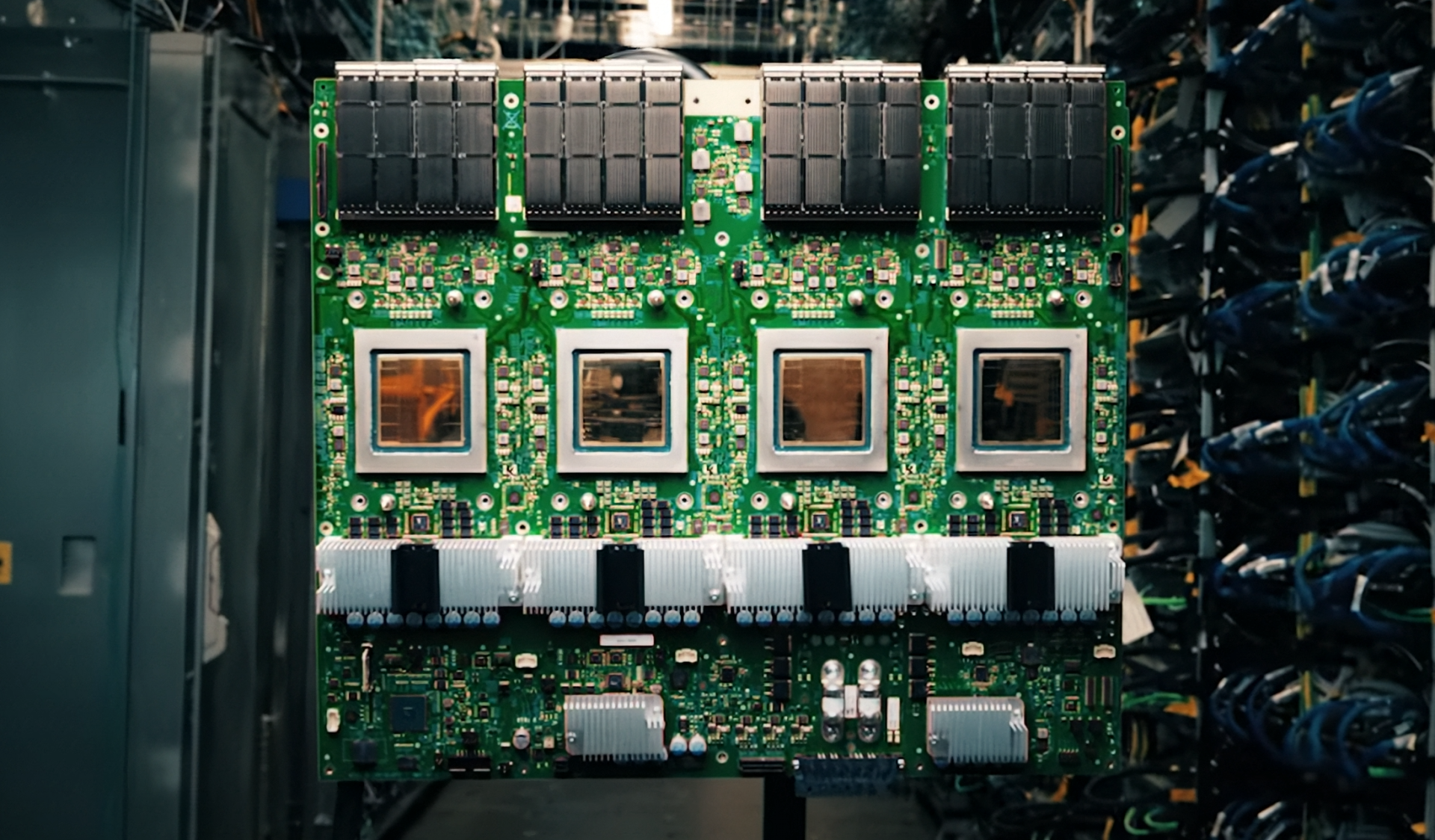

NemoClaw packs 108GB of HBM3 memory with 50% more bandwidth than its predecessors. That matters for large language models and complex simulations, where moving data in and out of memory is the bottleneck rather than raw compute. The GPU also supports NVIDIA’s NVLink technology, allowing multiple GPUs to share memory as if they were one massive system.

The other headline figure is the clock speed: 2.7GHz, a modest increase but enough to squeeze out more efficiency in power-constrained environments. Benchmarks suggest a 15% improvement in training throughput for certain AI workloads, though real-world gains will depend on how quickly software is optimized for the new hardware.
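
To put the 15% figure in perspective: a throughput gain does not translate one-to-one into time saved. The sketch below is a back-of-the-envelope calculation, not an NVIDIA benchmark. The function names and the 100-hour baseline are hypothetical, and it assumes the 15% gain applies uniformly across a fixed-size training run.

```python
def time_saved_fraction(throughput_gain: float) -> float:
    """Fraction of wall-clock time saved on a fixed amount of work
    when throughput rises by `throughput_gain` (0.15 means +15%)."""
    if throughput_gain <= -1.0:
        raise ValueError("throughput_gain must be greater than -1")
    # Time scales as 1 / throughput, so the new time is 1 / (1 + gain) of the old.
    return 1.0 - 1.0 / (1.0 + throughput_gain)


def new_duration_hours(baseline_hours: float, throughput_gain: float) -> float:
    """Duration of the same job after the throughput gain is applied."""
    return baseline_hours / (1.0 + throughput_gain)


if __name__ == "__main__":
    gain = 0.15              # the 15% throughput improvement cited above
    baseline_hours = 100.0   # hypothetical training run, for illustration only

    print(f"Time saved: {time_saved_fraction(gain):.1%}")
    print(f"New duration: {new_duration_hours(baseline_hours, gain):.1f} hours")
```

Under these assumptions, a 15% throughput gain shortens a fixed training run by about 13%, not 15%, so a hypothetical 100-hour job would finish in roughly 87 hours.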

Why This Matters

The AI boom has created a supply crunch, with data centers scrambling to scale without overloading power grids. NemoClaw is NVIDIA’s response to that crunch, and it’s not just about raw performance: it’s about making sure the hardware can actually be deployed in large numbers.

NVIDIA isn’t disclosing full production details yet, but early indications suggest this is a step toward more standardized, high-volume manufacturing. If successful, it could ease the strain on supply chains while keeping AI training within reach for more organizations.

The caveat? The jump in memory capacity comes with tradeoffs. Higher bandwidth means more power draw, and without better cooling solutions, data centers might hit thermal limits faster than expected. It’s a balance NVIDIA will need to refine as adoption grows.

For now, the focus is on proving that performance and availability can coexist. If they can, this could be the start of a more sustainable AI infrastructure, one where hardware keeps pace with demand without choking on its own success.