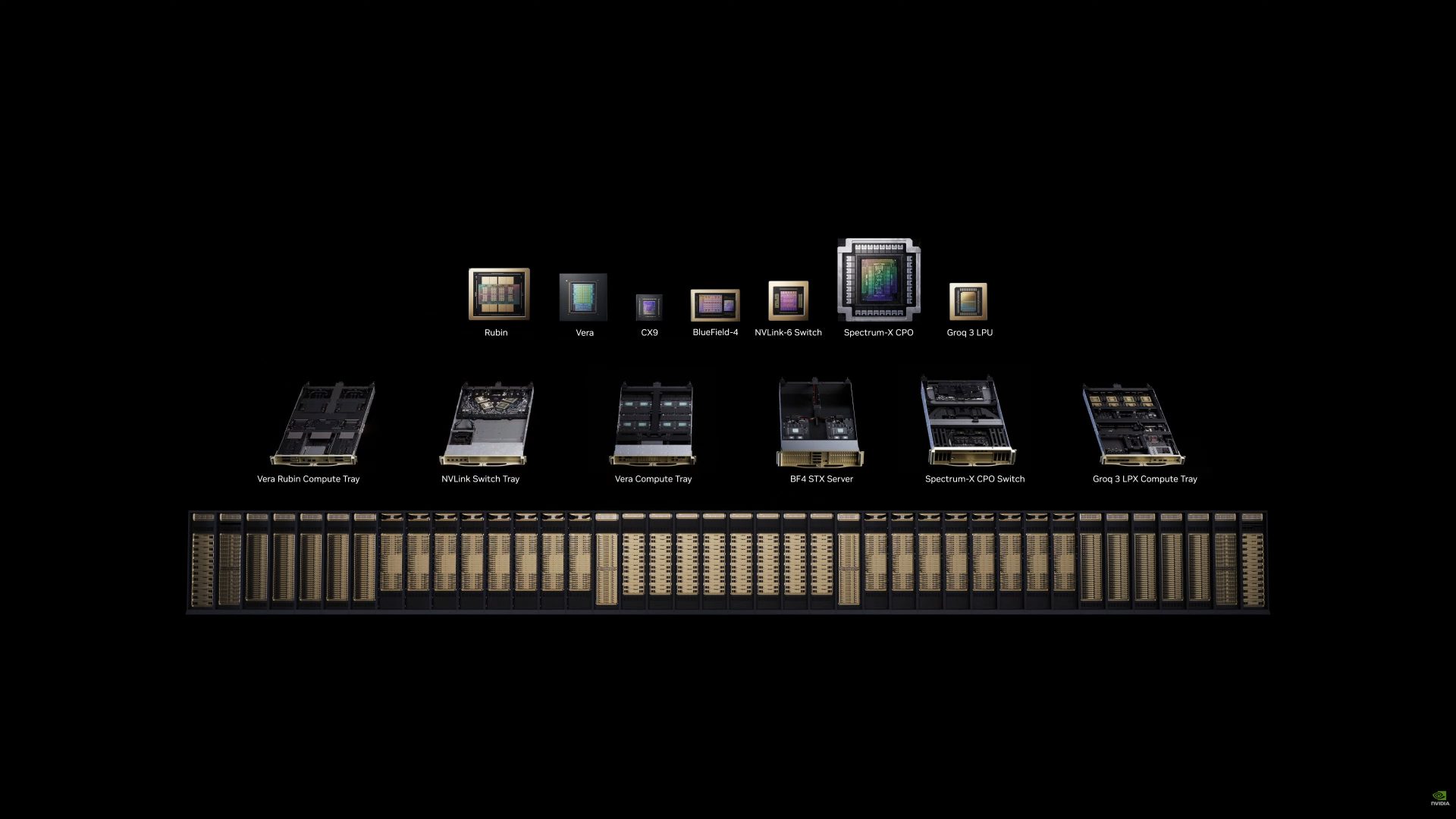

GTC 2026 | Hardware

NVIDIA Vera Rubin Achieves 40 Million Times More Compute In 10 Years: 288 GB HBM4, 22 TB/s Bandwidth, 50 PFLOPs of AI Horsepower

Hassan Mujtaba

NVIDIA has officially unveiled its next-gen AI data center platform called Vera Rubin, powered by the Rubin GPU and Vera CPU architectures.

NVIDIA Vera Rubin AI Data Center Offers A Stunning 40,000,000x Compute Growth Within A Decade

The NVIDIA Vera Rubin platform is designed with a total of seven chips and six different racks, each serving a singular purpose, to power next-gen AI data centers. The seven chips announced today are:

- Rubin (GPU)
- Vera (CPU)
- CX9 (Connectivity)
- BlueField-4 (DPU)
- NVLink-6 Switch (Interconnect)
- Spectrum-X CPO (Optics)
- Groq 3 (LPU)

First up is the Vera Rubin Compute Tray. Its mounting system has changed, so installation in an AI data center now takes just 2 hours instead of 2 days. The tray is entirely liquid-cooled using hot water (45 °C), which eases the cooling burden on the data center. This is also the main compute tray, housing the new Rubin GPUs, each of which features two massive reticle-sized dies and 8 HBM sites.

Each NVIDIA Rubin GPU features 288 GB of HBM4 memory, offering up to 22 TB/s of total bandwidth, and 50 PFLOPS of NVFP4 compute performance. Each chip packs 336B transistors, with an additional 2.5 trillion transistors from the HBM4 memory.

NVIDIA also has a few things to say about its Vera CPU, which offers extremely high single-threaded core performance, incredibly high data output, and extreme levels of energy efficiency. According to NVIDIA, Vera is the world's first and only data center CPU to utilize LPDDR5 memory and offers unrivaled performance per watt.
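As a rough sanity check on the Rubin GPU figures quoted above, the sketch below divides the stated HBM4 capacity and bandwidth across the 8 HBM sites and adds up the two transistor counts. It assumes capacity and bandwidth are split evenly across the sites, which the article does not state; the variable and function names are illustrative only.

```python
# Figures quoted in the article for one Rubin GPU.
HBM_SITES = 8
HBM4_CAPACITY_GB = 288
HBM4_BANDWIDTH_TBS = 22
GPU_TRANSISTORS = 336_000_000_000        # 336B on the GPU itself
HBM_TRANSISTORS = 2_500_000_000_000      # 2.5 trillion in the HBM4 memory


def per_site(total, sites=HBM_SITES):
    """Split a package-level total evenly across HBM sites (assumption)."""
    return total / sites


capacity_per_site_gb = per_site(HBM4_CAPACITY_GB)       # 36.0
bandwidth_per_site_tbs = per_site(HBM4_BANDWIDTH_TBS)   # 2.75
total_transistors = GPU_TRANSISTORS + HBM_TRANSISTORS

print(f"{capacity_per_site_gb:.0f} GB and {bandwidth_per_site_tbs:.2f} TB/s per HBM site")
print(f"{total_transistors / 1e12:.3f} trillion transistors per package")
```

Under the even-split assumption, each site works out to 36 GB and 2.75 TB/s, and the package totals roughly 2.8 trillion transistors.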
NVIDIA is not just integrating Vera CPUs into its Vera Rubin platform; they will also ship standalone, and the company expects this to open another multi-billion-dollar business front.

Next comes the NVLink Switch Tray, which is built around 6th Gen NVLink. It is a scale-up switching system that is also entirely liquid-cooled.

The Groq 3 LPX compute tray comprises 8 Groq LPUs and is codenamed LP30. Each Groq 3 LPU offers 500 MB of SRAM, 150 TB/s of SRAM bandwidth, and 1.2 PFLOPS of FP8 performance. Each chip houses 98B transistors.

NVIDIA's Spectrum-X CPO Switch is the world's first co-packaged optics switch, which is made at TSMC using NVIDIA's cuLitho technology. The Spectrum-X switch is now in full production.

The Vera Compute Tray, or ConnectX-9, is also powered by the Vera CPU, and NVIDIA has adopted a new storage platform to meet the demands of Vera Rubin, called the BlueField-4 STX storage platform.

| | NVIDIA Vera Rubin NVL72 | NVIDIA Vera Rubin Superchip | NVIDIA Rubin GPU |
|---|---|---|---|
| Configuration | 72 NVIDIA Rubin GPUs, 36 NVIDIA Vera CPUs | 2 NVIDIA Rubin GPUs, 1 NVIDIA Vera CPU | 1 NVIDIA Rubin GPU |
| NVFP4 Inference | 3,600 PFLOPS | 100 PFLOPS | 50 PFLOPS |
| NVFP4 Training² | 2,520 PFLOPS | 70 PFLOPS | 35 PFLOPS |
| FP8/FP6 Training² | 1,260 PFLOPS | 35 PFLOPS | 17.5 PFLOPS |
| INT8² | 18 POPS | 0.5 POPS | 0.25 POPS |
| FP16/BF16² | 288 PFLOPS | 8 PFLOPS | 4 PFLOPS |
| TF32² | 144 PFLOPS | 4 PFLOPS | 2 PFLOPS |
| FP32 | 9,360 TFLOPS | 260 TFLOPS | 130 TFLOPS |
| FP64 | 2,400 TFLOPS | 67 TFLOPS | 33 TFLOPS |
| FP32 SGEMM³ | 28,800 TFLOPS | 800 TFLOPS | 400 TFLOPS |
| FP64 DGEMM³ | 14,400 TFLOPS | 400 TFLOPS | 200 TFLOPS |
| GPU Memory / Bandwidth | 20.7 TB HBM4 / 1,580 TB/s | 576 GB HBM4 / 44 TB/s | 288 GB HBM4 / 22 TB/s |
| NVLink Bandwidth | 260 TB/s | 7.2 TB/s | 3.6 TB/s |
| NVLink-C2C Bandwidth | 65 TB/s | 1.8 TB/s | - |
| CPU Core Count | 3,168 custom NVIDIA Olympus cores (Arm® compatible) | 88 custom NVIDIA Olympus cores (Arm compatible) | - |
| CPU Memory | 54 TB LPDDR5X | 1.5 TB LPDDR5X | - |
| Total NVIDIA + HBM4 Chips | 1,296 | 30 | 12 |

All of these come together in the NVIDIA Vera Rubin NVL72, which will be offered by various partners.
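The NVL72 figures above are, to rounding, the per-GPU figures multiplied by 72. The sketch below reproduces that arithmetic for a few of the quoted values. It assumes simple linear scaling with no interconnect or efficiency losses, and the dictionary keys and function name are illustrative rather than anything NVIDIA defines.

```python
# Per-GPU figures as quoted in the article.
PER_GPU = {
    "nvfp4_inference_pflops": 50.0,
    "nvfp4_training_pflops": 35.0,
    "hbm4_capacity_gb": 288.0,
    "hbm4_bandwidth_tbs": 22.0,
    "nvlink_bandwidth_tbs": 3.6,
}
GPUS_PER_NVL72 = 72


def scale_to_rack(per_gpu, gpu_count=GPUS_PER_NVL72):
    """Multiply each per-GPU figure by the GPU count (linear scaling assumed)."""
    return {name: value * gpu_count for name, value in per_gpu.items()}


rack = scale_to_rack(PER_GPU)
for name, value in rack.items():
    print(f"{name}: {value:,.1f}")
```

The results line up with the quoted rack numbers: 3,600 PFLOPS of NVFP4 inference, 20,736 GB (about 20.7 TB) of HBM4, 1,584 TB/s (about 1.6 PB/s) of HBM4 bandwidth, and 259.2 TB/s (about 260 TB/s) of NVLink bandwidth.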
Each NVL72 offers a 10x performance-per-watt increase, 3.6 exaFLOPS of NVFP4 performance, 1.6 PB/s of HBM4 bandwidth, and 260 TB/s of NVLink 6 interconnect bandwidth.

The NVIDIA Vera Rubin platform is opening the next AI frontier with:

- NVIDIA Spectrum-6 SPX Ethernet racks
- Vera Rubin NVL72 GPU racks
- Vera CPU racks
- NVIDIA Groq 3 LPX inference accelerator racks
- NVIDIA BlueField-4 STX storage racks

As mentioned above, NVIDIA Vera CPUs will also come in a 256-CPU rack offering 300 TB/s of LPDDR5X bandwidth, with all CPUs connected using an ETL Spine and 6.5x the throughput of the last-gen solution.

Broad Ecosystem Support

Vera Rubin-based products will be available from partners starting the second half of this year. This includes leading cloud providers Amazon Web Services, Google Cloud, Microsoft Azure and Oracle Cloud Infrastructure, along with NVIDIA Cloud Partners CoreWeave, Crusoe, Lambda, Nebius, Nscale and Together AI.

Global system manufacturers Cisco, Dell Technologies, HPE, Lenovo and Supermicro are expected to deliver a wide range of servers based on Vera Rubin products, as well as Aivres, ASUS, Foxconn, GIGABYTE, Inventec, Pegatron, Quanta Cloud Technology (QCT), Wistron and Wiwynn.

AI labs and frontier model developers including Anthropic, Meta, Mistral AI and OpenAI are looking to use the NVIDIA Vera Rubin platform to train larger, more capable models and to serve long-context, multimodal systems at lower latency and cost than with prior GPU generations.
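For the 256-CPU Vera rack described above, the quoted 300 TB/s of aggregate LPDDR5X bandwidth can be broken down per CPU. This is a minimal sketch that assumes the bandwidth is shared equally among all 256 CPUs; the names used are hypothetical.

```python
# Rack-level figures as quoted in the article.
VERA_CPUS_PER_RACK = 256
RACK_LPDDR5X_BANDWIDTH_TBS = 300.0


def bandwidth_per_cpu_tbs(rack_bandwidth_tbs, cpu_count):
    """Average memory bandwidth per CPU, assuming an equal share for each."""
    if cpu_count <= 0:
        raise ValueError("cpu_count must be positive")
    return rack_bandwidth_tbs / cpu_count


per_cpu = bandwidth_per_cpu_tbs(RACK_LPDDR5X_BANDWIDTH_TBS, VERA_CPUS_PER_RACK)
print(f"{per_cpu:.2f} TB/s per Vera CPU")  # prints "1.17 TB/s per Vera CPU"
```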
