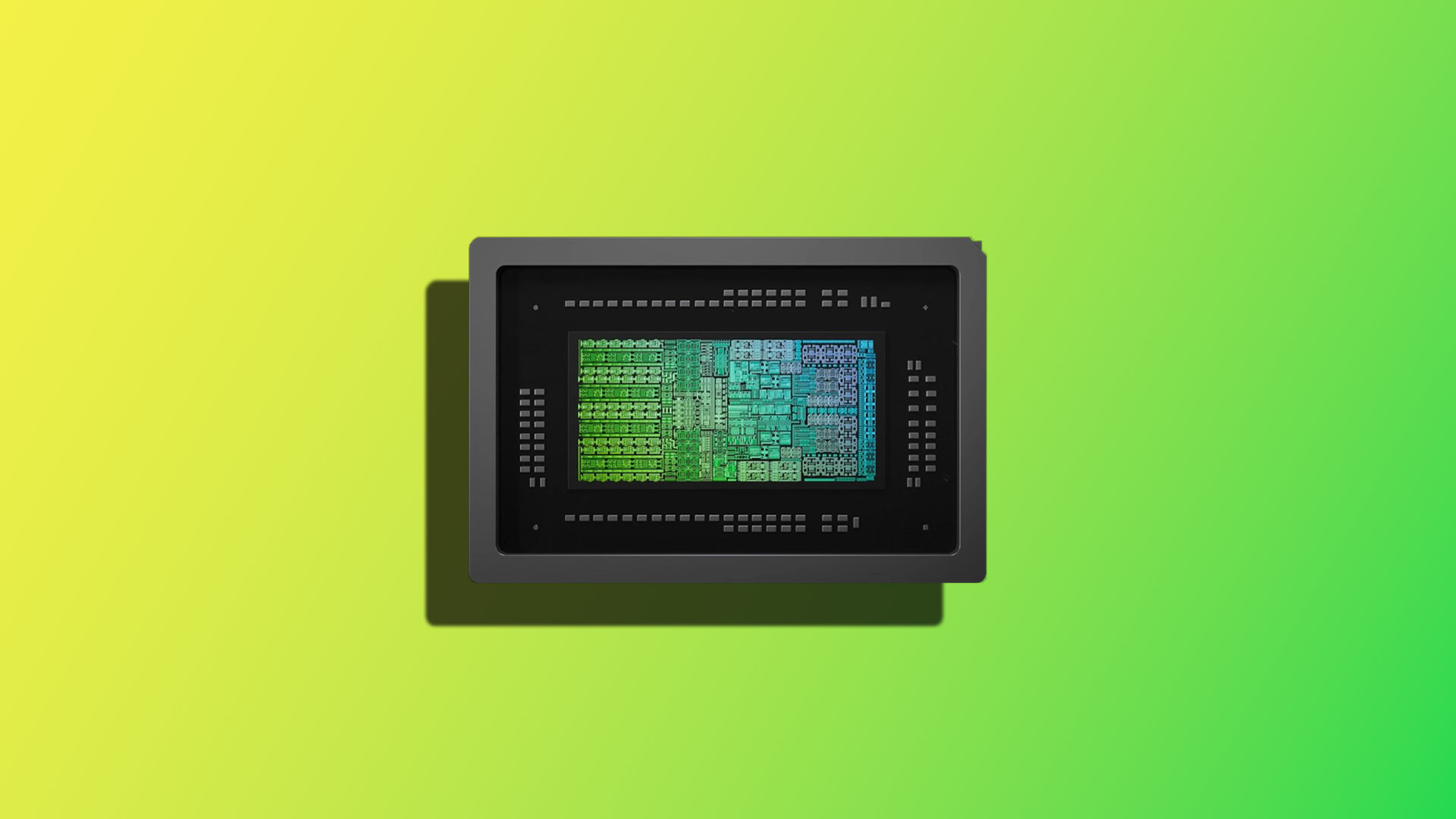

NVIDIA has announced the Rubin platform, an architecture aimed squarely at large-scale AI computing. Revealed at recent industry events, the platform is designed to handle increasingly complex computational demands, particularly in generative AI, scientific simulation, and advanced robotics.

The DGX SuperPOD: A Foundation for Scale

At the heart of this unveiling lies the DGX SuperPOD, a modular system engineered for organizations that need maximum performance. NVIDIA presents it not as an incremental upgrade but as a new approach to deploying AI infrastructure, built around the Rubin architecture. The SuperPOD configuration uses six distinct processing units derived from the Rubin design, working in concert as a single supercomputer.

Key Features and Architectural Design

The Rubin platform distinguishes itself through several innovations. Central to its performance is optimized data movement and efficient parallel processing, both critical for accelerating AI training and inference. While specific technical details are being rolled out gradually, early information points to significant improvements in bandwidth and memory capacity over previous generations of NVIDIA’s high-performance computing solutions.

The architecture is modular by design, which allows it to scale. The six Rubin processing units within the SuperPOD can be expanded as computational needs evolve, giving organizations an infrastructure that adapts to changing workloads. This contrasts with traditional monolithic systems and should offer greater agility and long-term cost-effectiveness.

Target Applications & Use Cases

The potential applications of the Rubin platform span numerous industries. Its capabilities make it well suited for:

- Generative AI Development: Training large language models (LLMs) and other generative AI algorithms benefits significantly from the increased processing power and memory bandwidth offered by the Rubin architecture.

- Scientific Discovery: Researchers in fields like drug discovery, materials science, and climate modeling can leverage the SuperPOD’s performance to accelerate simulations and analysis.

- Robotics & Autonomous Systems: The platform’s ability to handle complex sensor data and real-time processing is crucial for advancing robotics applications, from autonomous vehicles to industrial automation.

- High-Performance Computing (HPC): The Rubin architecture can be used to tackle computationally intensive problems in areas like fluid dynamics, weather forecasting, and astrophysics.

Beyond the SuperPOD: A Modular Ecosystem

NVIDIA is positioning the Rubin platform as more than a single system: it is meant to be the foundation of a broader ecosystem. The company plans to provide components, including networking solutions, storage options, and software tools, that integrate with the Rubin architecture.

This modular approach is designed to reduce complexity for customers, simplifying deployment and management. It also fosters innovation by allowing organizations to tailor their infrastructure precisely to their specific needs.

Performance Expectations & Future Roadmap

While precise benchmarks haven’t been released, NVIDIA has stated that the Rubin platform delivers a substantial performance uplift compared to existing solutions in comparable workloads. The focus is on achieving significant reductions in training times and inference latency – critical metrics for AI applications.

NVIDIA plans to progressively unveil more details about the Rubin platform over the coming months, including deeper dives into its technical specifications and software support. The company’s strategy emphasizes collaboration with partners to drive adoption across diverse industries.

Looking Ahead: A Shift in AI Infrastructure

The unveiling of the Rubin platform sends a clear signal from NVIDIA about the future of AI computing. It is a move beyond simply increasing GPU power and toward an architecture designed for the demands of increasingly sophisticated AI workloads. The DGX SuperPOD, as the initial embodiment of this approach, is poised to become a cornerstone for organizations seeking to unlock the full potential of artificial intelligence.