Open-source AI is changing how models are built and shipped, and much of that work depends on hardware that can handle training, fine-tuning, and serving large models. NVIDIA’s DGX Spark and DGX Station systems are built for this purpose: they give developers a local machine on which to turn research ideas into working models.

Bringing Frontier AI Closer to Home

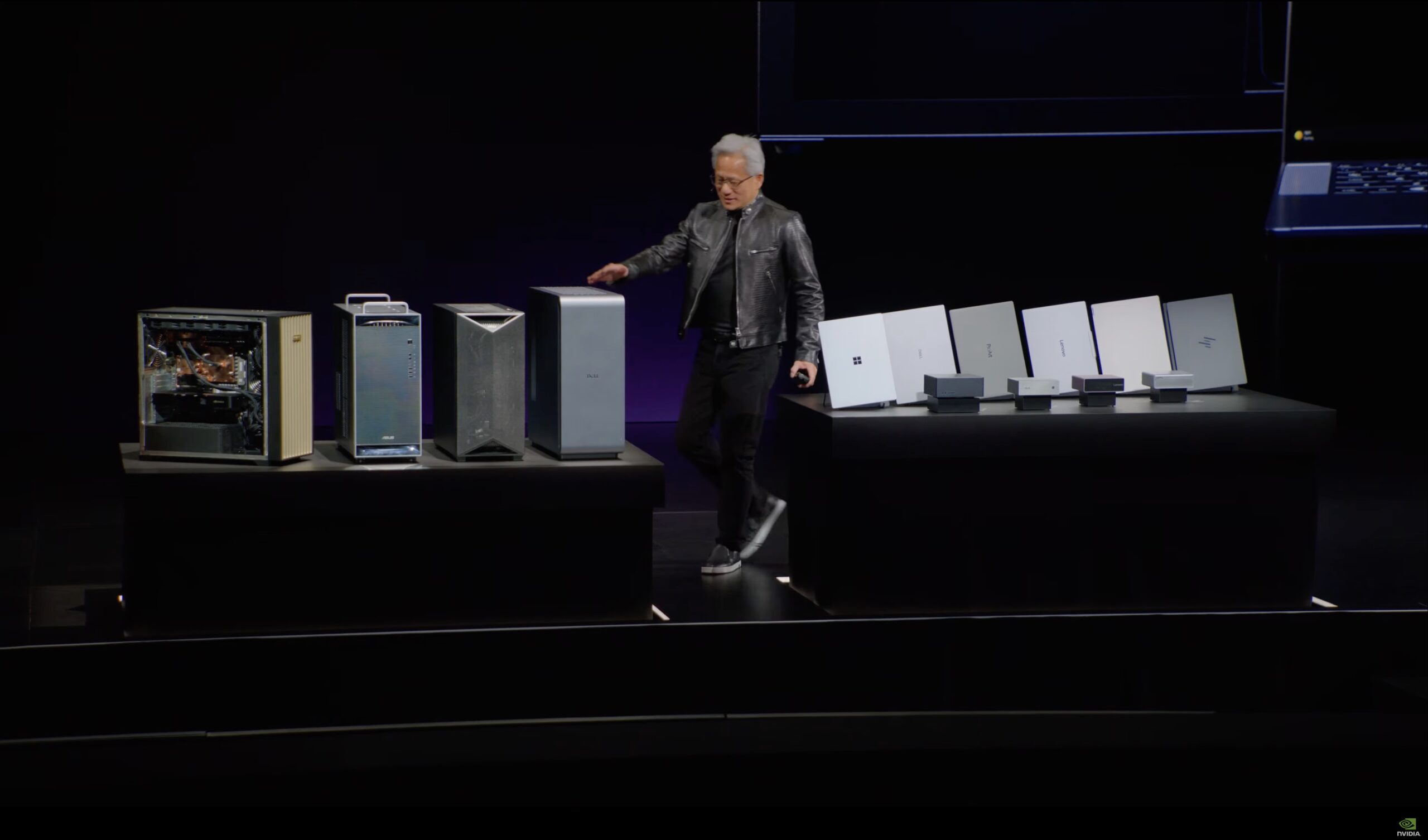

Access to the most advanced AI models, often called "frontier" models, has traditionally been limited by the compute they require. Training them typically takes large-scale data centers and substantial investment in specialized hardware. The DGX Spark and DGX Station systems do not replace that infrastructure for training from scratch, but they bring an integrated AI computing environment to the developer’s desk for running, fine-tuning, and evaluating such models locally.

These deskside supercomputers are designed for rapid prototyping, experimentation, and initial model deployment. They allow developers to bypass the complexities of managing large-scale infrastructure and instead focus on refining their algorithms and validating their ideas with real-world data. This localized approach fosters faster iteration cycles and accelerates the overall development process.
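As a rough illustration of why local memory capacity decides which models can be prototyped at the desk, the sketch below estimates the memory needed just to hold a model's weights at a few common precisions. The parameter counts, the 128 GB capacity, and the 20% overhead factor are illustrative assumptions rather than figures from this article, and real usage also depends on activations, the KV cache, and the runtime.

```python
# Bytes per parameter for common weight formats.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return the memory (in GB, 1 GB = 1e9 bytes) needed to store the weights."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits_locally(num_params: float, precision: str,
                 capacity_gb: float, overhead: float = 1.2) -> bool:
    """Check whether weights plus an assumed overhead factor fit in capacity_gb."""
    return weight_memory_gb(num_params, precision) * overhead <= capacity_gb


if __name__ == "__main__":
    capacity_gb = 128.0  # assumed capacity, for illustration only
    for params in (8e9, 70e9, 200e9):  # hypothetical model sizes
        for precision in ("fp16", "int4"):
            need = weight_memory_gb(params, precision)
            ok = fits_locally(params, precision, capacity_gb)
            print(f"{params / 1e9:.0f}B @ {precision}: {need:.0f} GB weights, fits={ok}")
```

Under these assumptions, a 70-billion-parameter model does not fit at 16-bit precision but does fit once quantized to 4 bits, which is why quantization is a common first step when moving a large open model onto a single machine.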

DGX Spark: Scalable Performance for Complex Workloads

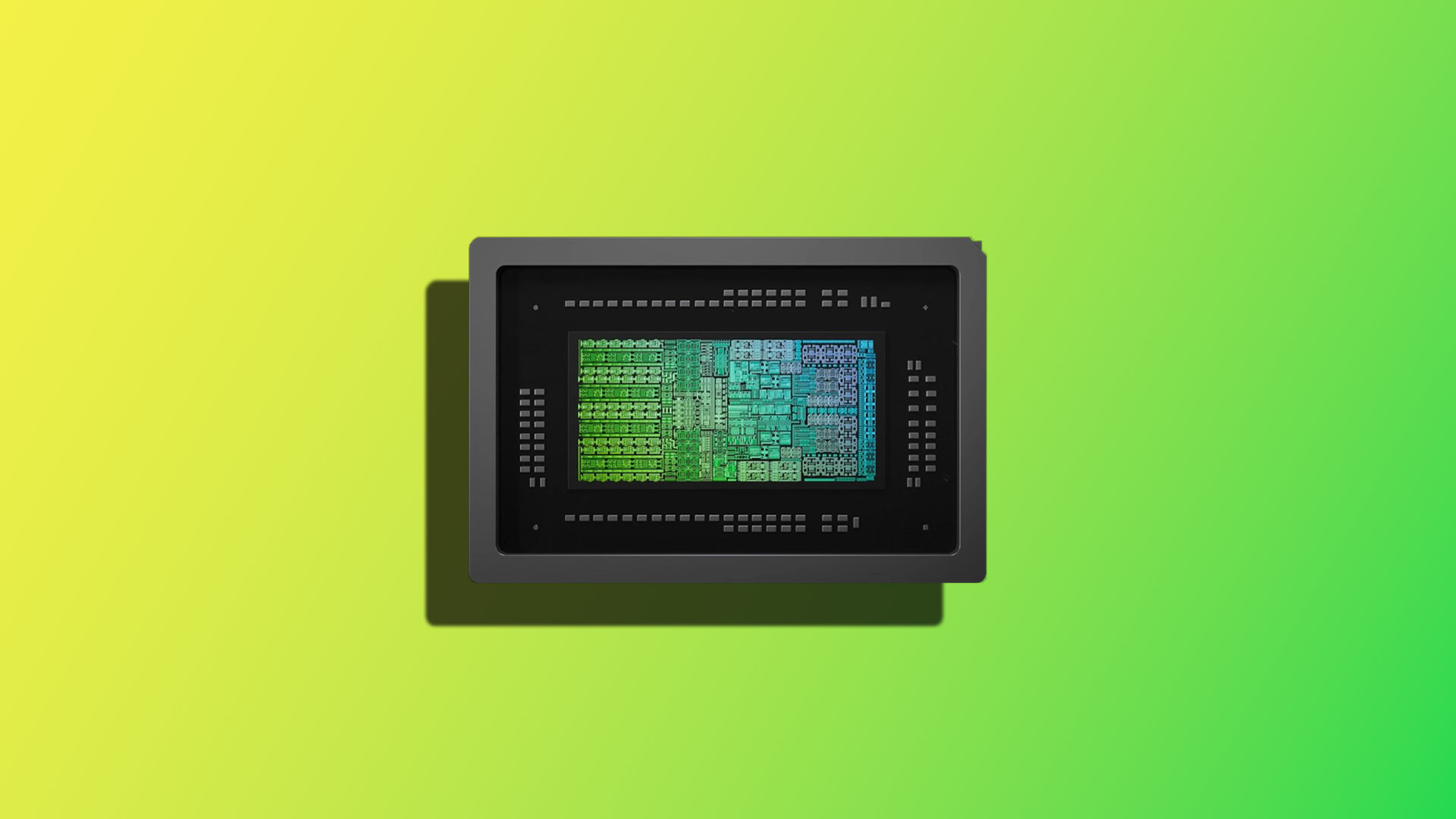

The NVIDIA DGX Spark is the compact member of the pair. It is built around the NVIDIA GB10 Grace Blackwell Superchip, with 128 GB of unified memory shared between CPU and GPU, and it targets prototyping, fine-tuning, and inference for large language models (LLMs) and generative AI applications rather than training frontier models from scratch. It is a fixed-configuration system rather than a modular one: scaling beyond a single unit means linking two units over the built-in ConnectX networking or moving the workload to data-center or cloud infrastructure.

Key features of the DGX Spark include:

- High Bandwidth Interconnects: Enabling rapid data transfer between GPUs and memory for accelerated training.

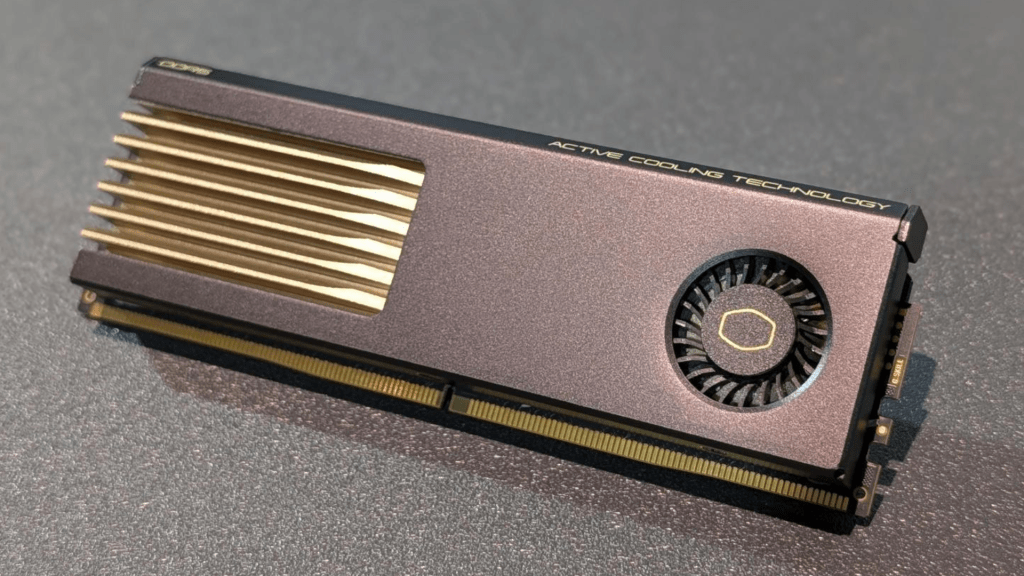

- Optimized Cooling Solutions: Maintaining stable operating temperatures even under heavy workloads, ensuring consistent performance.

- Integrated Software Stack: Providing a streamlined environment with NVIDIA’s AI software suite pre-installed and optimized for the system.

DGX Station: A Versatile Platform for Diverse Applications

The NVIDIA DGX Station is the larger deskside system, built around the NVIDIA GB300 Grace Blackwell Ultra Desktop Superchip and offering considerably more memory and compute than the DGX Spark. It suits research institutions, startups, and teams that need data-center-class performance without a data center. While sharing core architectural principles with the DGX Spark, the DGX Station is designed for a wider range of applications, from computer vision and robotics to scientific simulations and personalized medicine.

The DGX Station’s versatility comes from pairing its GPU with NVIDIA’s software ecosystem. Developers can use popular AI frameworks and tools on it directly, and work started at the desk can be moved to larger NVIDIA infrastructure with few changes.

Supporting the Open-Source AI Movement

NVIDIA’s commitment extends beyond hardware to the open-source AI community. The DGX Spark and DGX Station systems are designed to be compatible with leading open-source frameworks, such as TensorFlow and PyTorch, so developers can run open models and community tooling on them without porting their code.
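In practice, framework compatibility means that ordinary PyTorch code can detect and use the local GPU without system-specific changes. The sketch below shows the common device-selection pattern; it assumes PyTorch may or may not be installed and falls back to the CPU in either case. The function name `pick_device` is a hypothetical helper, not part of any NVIDIA or PyTorch API.

```python
def pick_device() -> str:
    """Return "cuda" if PyTorch is installed and sees a GPU, otherwise "cpu"."""
    try:
        import torch  # optional dependency; may not be present on every machine
    except ImportError:
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


if __name__ == "__main__":
    device = pick_device()
    print(f"Running on: {device}")
```

The returned string can be passed to the usual PyTorch calls, for example `model.to(device)`, so the same script runs unchanged on a laptop, a deskside system, or a cluster node.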

This collaboration matters: shared frameworks let best practices spread quickly and let improvements made by one team reach others. By putting capable hardware within reach of more developers, NVIDIA helps broaden access to advanced AI capabilities.

Future Implications

Systems like DGX Spark and DGX Station mark a shift toward doing serious AI work locally. As open-source models grow more capable, demand for powerful local computing is likely to increase. For developers, the value of these machines is practical: they shorten the path from an idea to a model that runs.