NVIDIA's Rubin Platform: A New Era in AI Computing

At a recent industry event, NVIDIA introduced the Rubin platform, a new AI hardware architecture. It is built to accelerate compute-intensive workloads, particularly agentic AI and large-scale model training.

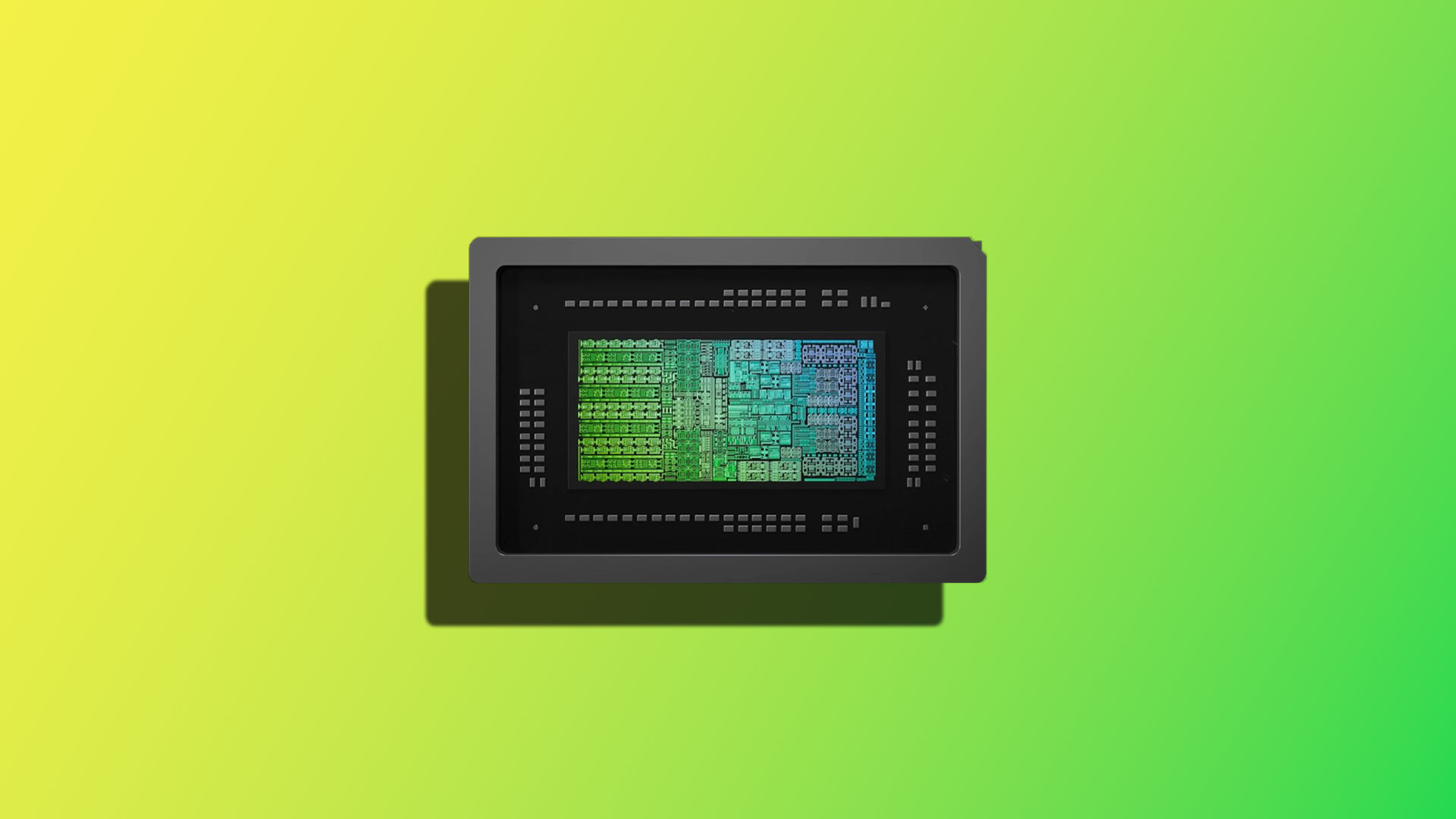

The Core of the Rubin Platform

The Rubin platform is not a single component; it is a system composed of six distinct chips designed to work together:

- NVIDIA Vera CPU: A high-performance CPU designed to manage and orchestrate the complex operations within the system.

- Rubin GPU: The core processing unit optimized for accelerating AI computations, specifically tailored to handle demanding tasks like mixture-of-experts (MoE) models and long-context reasoning.

- NVLink 6 Switch: A high-speed interconnect technology enabling rapid data transfer between GPUs within the SuperPOD system.

- ConnectX-9 SuperNIC: A network interface card engineered for exceptional bandwidth and low latency, crucial for efficient communication during large-scale AI training.

- BlueField-4 DPU: A Data Processing Unit (DPU) that offloads networking and storage tasks from the CPUs and GPUs, further boosting overall system performance.

- Spectrum-6 Ethernet Switch: Provides robust and scalable connectivity for the entire SuperPOD infrastructure.

This codesign approach, which integrates the six components into a unified architecture, is intended to reduce the cost of inference token generation while also accelerating training.
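
To make "cost of inference token generation" concrete, here is a minimal sketch of how that metric is commonly computed. The function name and both parameters are illustrative assumptions, not NVIDIA figures or an NVIDIA API; the sketch shows only the arithmetic relating system cost to throughput.

```python
def cost_per_million_tokens(system_cost_per_hour: float,
                            tokens_per_second: float) -> float:
    """Return the cost of generating one million tokens.

    Both inputs are hypothetical: the hourly cost of running a system
    (hardware amortization, power, and so on) and its sustained
    inference throughput in tokens per second.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600  # seconds in an hour
    return system_cost_per_hour / tokens_per_hour * 1_000_000
```

Under this definition, the cost per token falls in direct proportion to throughput gained at the same hourly cost, so higher system-level throughput translates directly into cheaper inference.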

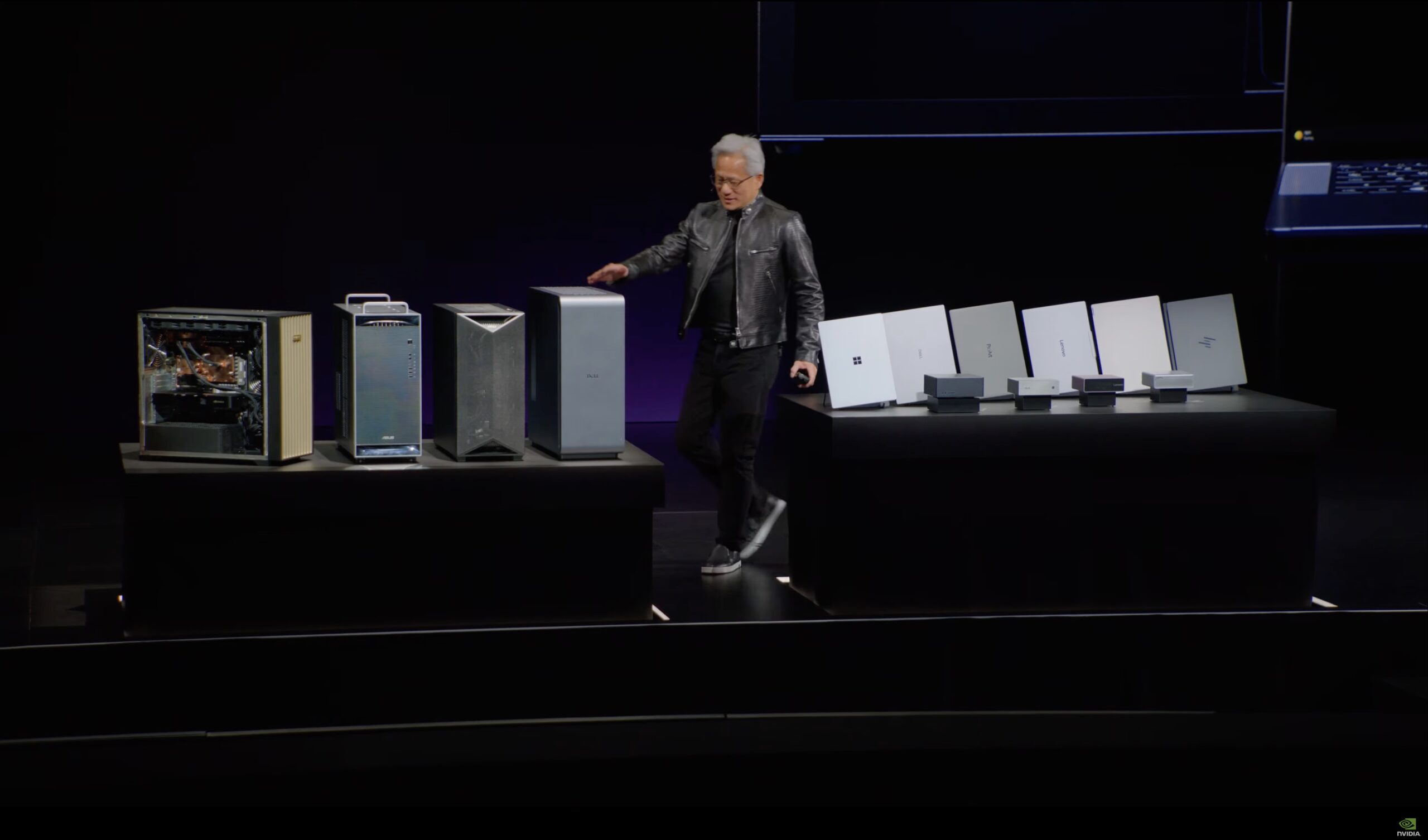

DGX SuperPOD: The Foundation for Rubin Deployment

The NVIDIA DGX SuperPOD remains the foundational design for deploying Rubin-based systems. It treats the entire technology stack, from NVIDIA's computing hardware to networking components and supporting software, as a single integrated system.

Addressing Growing AI Demand

“Rubin arrives at exactly the right moment, as AI computing demand for both training and inference is going through the roof,” stated Jensen Huang, founder and CEO of NVIDIA. This sentiment reflects a broader industry trend: the exponential growth in the need for powerful AI hardware to support increasingly complex models and burgeoning workloads.

Key Benefits of the Rubin Platform

- Accelerated Training: The combined architecture significantly reduces training times, allowing researchers and developers to iterate faster on their AI models.

- Efficient Inference: Optimized for inference token generation, reducing operational costs and improving real-time performance.

- Simplified Deployment: The DGX SuperPOD removes the complexities of infrastructure integration, enabling teams to focus solely on developing and deploying innovative AI solutions.

- Scalability: Designed for large-scale deployments, the Rubin platform is adaptable to evolving AI needs.

Applications Across Industries

The Rubin platform has potential applications across many industries, including:

- Research Institutions: Accelerating scientific discovery through advanced AI modeling and simulation.

- Enterprise Computing: Driving innovation in areas such as autonomous vehicles, robotics, and financial modeling.

- Data Centers: Optimizing performance for large-scale data processing and analytics.

The introduction of the Rubin platform and DGX SuperPOD marks a pivotal moment in the evolution of AI computing, positioning NVIDIA at the forefront of this transformative technology.