The latest collaboration between NVIDIA and Google Cloud is not just another partnership; it is a full-stack reimagining of how agentic and physical AI move from concept to factory floor. The new tools, built on more than a decade of joint engineering, promise to streamline development across hardware, software, and cloud infrastructure, but the trade-offs for creators are worth examining closely.

At its core, this initiative is about removing friction between AI research and industrial application. NVIDIA’s performance-optimized libraries and Google Cloud’s enterprise-grade services now form a seamless pipeline, allowing developers to deploy complex AI models without the usual bottlenecks of scaling or integration. For startups and enterprises, the appeal is clear: faster iteration, lower entry costs, and the ability to test ideas at scale before committing resources.

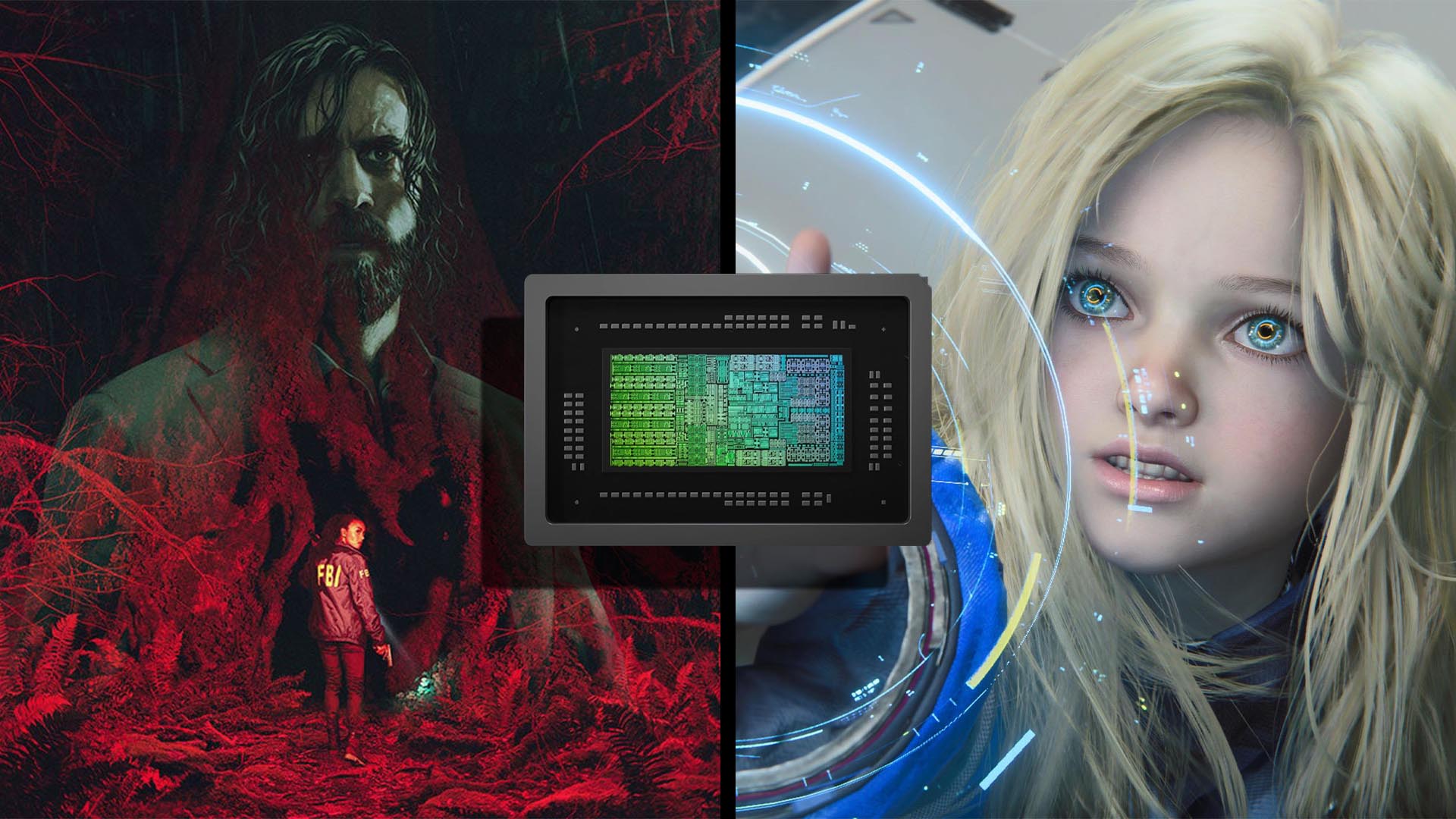

Yet the deeper implications lie in the engineering trade-offs that come with such a tightly integrated platform. Creators must weigh the convenience of a unified ecosystem against potential lock-in risks. While NVIDIA’s hardware—like its latest GPUs with specialized cores for AI workloads—and Google Cloud’s infrastructure offer undeniable performance advantages, the question remains: How much flexibility do developers sacrifice to gain speed? The answer could define the future of agentic AI deployment.

For creators working in physical AI, the new tools introduce a practical shift. Physical AI models are no longer confined to lab environments: they can now be stress-tested under real-world conditions, such as robotics, autonomous systems, or smart factories, without the usual overhead of building custom infrastructure. The collaboration’s focus on factory-scale readiness suggests that the line between simulation and production is blurring quickly.

The joint offering comes down to three components:

- Performance-optimized libraries for hardware acceleration.
- Enterprise-grade cloud services with pre-integrated AI workflows.
- Factory-scale testing capabilities for physical AI models.

The immediate benefit is clear: reduced time-to-market and lower barriers to experimentation. The long-term cost could be platform dependency, a risk that grows as AI systems become more complex and harder to migrate. Creators must decide whether the short-term gains justify tying their work to a single ecosystem, or whether they are better off hedging their bets with modular, open-source alternatives.

The most significant change here is not just the tools themselves but the way they redefine the AI development lifecycle. What was once a fragmented process—jumping between hardware, software, and cloud providers—is now a more linear path. Whether that path remains open to all or narrows over time will determine how widely these advancements are adopted.