Micron has quietly accelerated the evolution of GPU memory with the confirmation of 24Gb GDDR7 modules running at 36 Gbps, a leap that could redefine high-end graphics and AI acceleration. This isn’t just an incremental upgrade—it’s a foundational shift for how next-generation GPUs handle data, particularly in ray tracing, 8K rendering, and AI-driven workloads. The implications stretch beyond raw performance, addressing long-standing frustrations like texture pop-in and frame stutters in modern games.

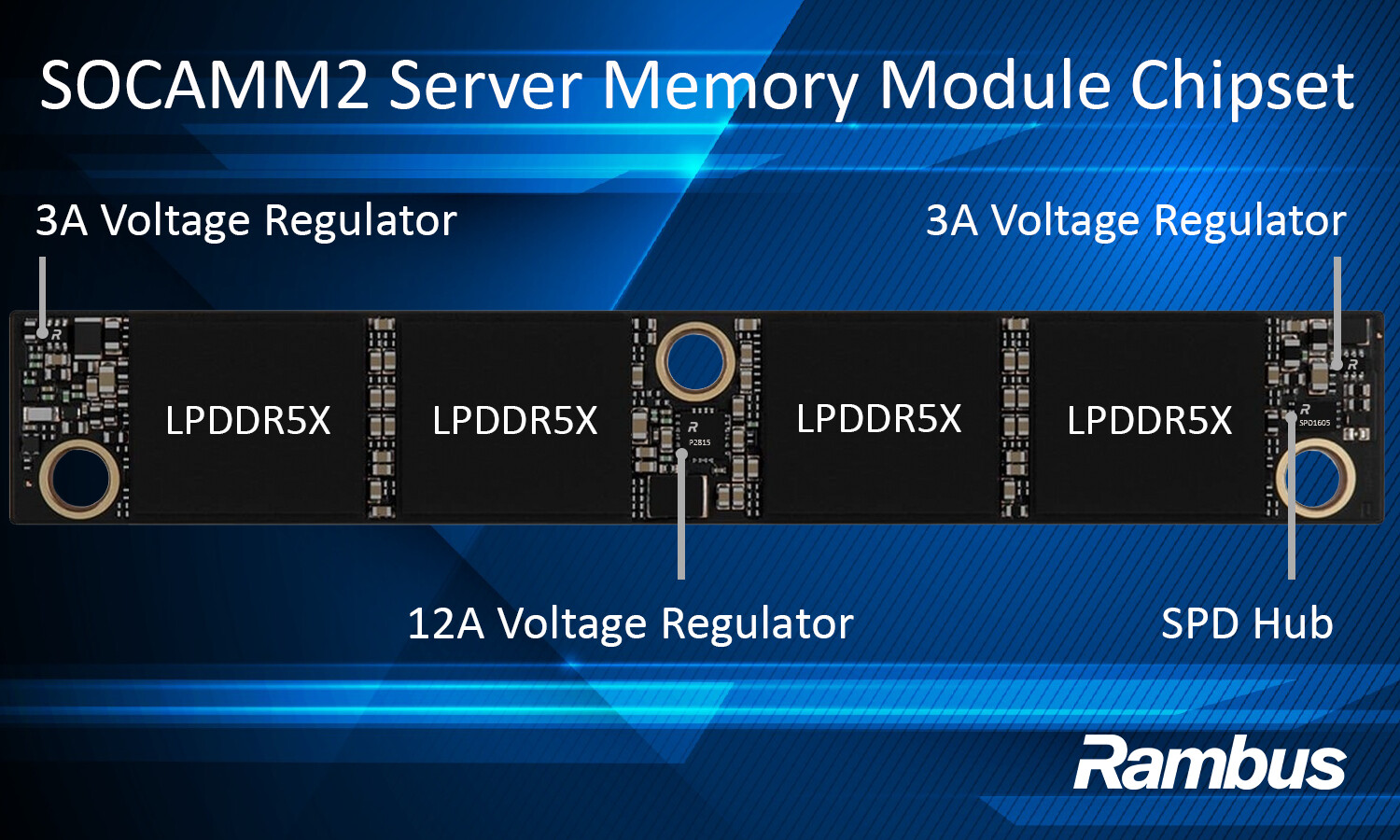

The new modules build on GDDR7’s debut in NVIDIA’s RTX 50 series, where it first appeared in the RTX 5090 and RTX 5080, though those cards shipped at 28 Gbps and 30 Gbps respectively. The 24Gb (3GB) density is not new on its own: the RTX 5090 Laptop GPU and the RTX PRO 6000 Blackwell, which reaches 96GB, already use 3GB modules. What Micron adds is speed at that density. Its 36 Gbps modules offer a 20% per-pin bandwidth boost over 30 Gbps parts (about 29% over the RTX 5090’s 28 Gbps), and each 24Gb chip holds 50% more than the 16Gb (2GB) chips on most RTX 50 desktop cards, hinting at future GPUs with 48GB or even 64GB of VRAM on a single card.
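The percentages above follow from simple ratios. Here is a minimal sketch that checks them, assuming the per-pin rates of 28, 30 and 36 Gbps and the usual 8 bits per byte. The helper names are illustrative, not part of any vendor tool:

```python
# Per-pin data rates (Gbps) discussed in the article
RTX_5090_GBPS = 28.0
RTX_5080_GBPS = 30.0
MICRON_GBPS = 36.0


def uplift_pct(new: float, old: float) -> float:
    """Percentage increase of `new` over `old`."""
    return (new / old - 1.0) * 100.0


def gbit_to_gbyte(gbit: float) -> float:
    """Convert a chip density in gigabits to gigabytes (8 bits per byte)."""
    return gbit / 8


print(f"36 vs 30 Gbps: +{uplift_pct(MICRON_GBPS, RTX_5080_GBPS):.0f}%")
print(f"36 vs 28 Gbps: +{uplift_pct(MICRON_GBPS, RTX_5090_GBPS):.1f}%")
print(f"24Gb chip = {gbit_to_gbyte(24):.0f}GB, 16Gb chip = {gbit_to_gbyte(16):.0f}GB")
print(f"Per-chip capacity gain, 24Gb vs 16Gb: +{uplift_pct(24, 16):.0f}%")
```

A 24Gb chip is therefore 1.5 times a 16Gb chip, not double. The larger totals come from combining that density with wide buses.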

A Memory Bottleneck No More?

Modern games demand more from GPUs than ever. Real-time ray tracing, AI upscaling, and open-world environments with dynamic lighting and physics require massive datasets to be loaded and processed without interruption. When GPU memory runs out, the result is familiar to gamers: texture pop-in, mid-frame stutters, and uneven performance—especially during complex ray-traced scenes. Even AI-assisted rendering suffers, as intermediate buffers and neural networks compete for limited VRAM.

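Micron’s solution? Larger frame buffers that keep textures, lighting data, and geometry resident in memory, reducing the need for constant swapping. At 36 Gbps per pin, GPUs built on the new modules could reach the following peak bandwidth (capacities assume one 3GB module per 32-bit channel):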

- 128-bit bus: 576 GB/s (12GB capacity)

- 192-bit bus: 864 GB/s (18GB capacity)

- 256-bit bus: 1,152 GB/s (24GB capacity)

- 320-bit bus: 1,440 GB/s (30GB capacity)

- 384-bit bus: 1,728 GB/s (36GB capacity)

- 512-bit bus: 2,304 GB/s (48GB capacity)
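Each row follows from two formulas. Peak bandwidth is bus width × per-pin rate ÷ 8. Capacity is the number of 32-bit channels × the chip size. Here is a minimal sketch that rebuilds the list, assuming one 3GB (24Gb) chip per 32-bit channel with no clamshell mode. The function names are illustrative:

```python
# Assumptions: GDDR7 chips expose a 32-bit interface, one chip per channel,
# and each chip is 24Gb = 3GB.
CHIP_BUS_BITS = 32
CHIP_CAPACITY_GB = 3


def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bus width (bits) x per-pin rate (Gbps) / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


def capacity_gb(bus_width_bits: int, chip_gb: int = CHIP_CAPACITY_GB) -> int:
    """Total VRAM in GB when each 32-bit channel carries exactly one chip."""
    return (bus_width_bits // CHIP_BUS_BITS) * chip_gb


for bus in (128, 192, 256, 320, 384, 512):
    print(f"{bus}-bit bus: {peak_bandwidth_gbs(bus, 36):,.0f} GB/s ({capacity_gb(bus)}GB capacity)")
```

These are theoretical peaks. Sustained bandwidth in real workloads will be lower. On these assumptions the 192-bit configuration works out to 864 GB/s.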

For comparison, NVIDIA’s RTX 5090 (512-bit bus at 28 Gbps) delivers 1,792 GB/s. The same 512-bit bus at 36 Gbps would reach 2,304 GB/s, roughly 29% more, and even a 384-bit design (1,728 GB/s) would come within about 4% of today’s flagship. This isn’t just about higher resolutions like 4K or 8K; it’s about smoother rendering with fewer artifacts in demanding scenarios.
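The comparison can be checked with the same peak-bandwidth formula. Here is a minimal sketch, assuming the RTX 5090’s published 512-bit bus and 28 Gbps data rate. `peak_bandwidth_gbs` is an illustrative helper, not a vendor API:

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s = bus width (bits) x per-pin rate (Gbps) / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


# Assumed baseline: RTX 5090 with a 512-bit bus at 28 Gbps
rtx_5090 = peak_bandwidth_gbs(512, 28)

# Hypothetical 36 Gbps configurations, expressed relative to that baseline
for bus in (256, 384, 512):
    candidate = peak_bandwidth_gbs(bus, 36)
    print(f"{bus}-bit @ 36 Gbps: {candidate:,.0f} GB/s ({candidate / rtx_5090:.0%} of RTX 5090)")
```

On these assumptions a 256-bit card at 36 Gbps lands at about 64% of the RTX 5090. Matching the flagship therefore takes a 384-bit or wider bus, even with the faster memory.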

Who Benefits—and When?

Micron’s announcement aligns with NVIDIA’s Rubin GPU roadmap, suggesting these modules could power the next wave of discrete GPUs, likely RTX 50 SUPER variants or a 2027 refresh. Samsung, Micron’s competitor, has been mass-producing 24Gb GDDR7 modules since November 2025 and has sampled 36 Gbps chips to partners, signaling a race to commercialize the fastest parts. However, supply constraints remain a hurdle and could delay widespread adoption.

Beyond gaming, the upgrades cater to AI workloads, where, according to Micron, GDDR7’s lower latency and higher throughput improve on-device inference for generative AI, neural rendering, and hybrid CPU-GPU-NPU workflows. Micron highlights:

- Faster AI model processing with reduced latency

- Better power efficiency due to architectural refinements

- Support for neural graphics and real-time upscaling without VRAM thrashing

The technology also points toward 8K gaming and professional workloads, where memory bandwidth can become the limiting factor. With Samsung and SK Hynix also pursuing 24Gb and 32Gb densities, Micron’s 36 Gbps parts position it as a key supplier for NVIDIA’s next-generation GPUs.

The Bigger Picture: A Memory Arms Race

This isn’t the first time memory standards have evolved to meet GPU demands. From GDDR5X (used in the GTX 1080) to GDDR6X (powering the RTX 4090), each generation has pushed the boundaries of what’s possible. The shift to GDDR7 marks a turning point, particularly as AI and ray tracing become mainstream.

For gamers, the most visible benefit should be less texture pop-in and more stable frame rates in high-end titles. For AI developers, it could mean faster training cycles and lower latency in real-time applications. And for hardware manufacturers, it’s a chance to design GPUs that finally outpace memory bottlenecks, a problem that has plagued PC gaming for years.

With Samsung and Micron now locked in a competition to deliver 32Gb densities and 42.5 Gbps speeds, the next 12–18 months will determine whether these upgrades hit consumer GPUs—or remain reserved for workstation and AI-focused hardware. One thing is clear: the era of memory-constrained rendering may soon be over.
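To gauge what those targets would mean, the same bus-width arithmetic can be applied to a hypothetical part that pairs 32Gb (4GB) chips with 42.5 Gbps speeds. This sketch assumes one chip per 32-bit channel and no clamshell mode. No such product has been announced, and the helper names are illustrative:

```python
# Hypothetical: 32Gb (4GB) GDDR7 chips at 42.5 Gbps, one chip per 32-bit channel.
CHIP_BUS_BITS = 32


def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s = bus width (bits) x per-pin rate (Gbps) / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


def capacity_gb(bus_width_bits: int, chip_gbit: int) -> float:
    """Total VRAM in GB: number of 32-bit channels x chip density converted to GB."""
    return (bus_width_bits // CHIP_BUS_BITS) * chip_gbit / 8


for bus in (256, 384, 512):
    bw = peak_bandwidth_gbs(bus, 42.5)
    cap = capacity_gb(bus, 32)
    print(f"{bus}-bit bus: {bw:,.0f} GB/s ({cap:.0f}GB capacity)")
```

Under these assumptions a 512-bit card would reach 64GB and about 2,720 GB/s. That is the kind of configuration the 64GB figure earlier in the piece implies.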