Server memory is about to get a modular upgrade. Rambus has unveiled the SOCAMM2 chipset, designed to enable detachable LPDDR5X memory modules that can be swapped in and out of servers without replacing the whole system. This marks a significant departure from soldered-down LPDDR memory, and an alternative to conventional DDR5 setups, offering IT teams greater flexibility in managing AI workloads while potentially reducing operational costs.

SOCAMM2 leverages the power efficiency and compact form factor of LPDDR technology, which is typically used in mobile devices but is now being adapted for data center use. Unlike soldered memory, these modules are built to be serviceable: they can be upgraded or replaced on demand, a feature that could minimize downtime in large-scale AI deployments. Rambus describes this as the first step toward a broader family of LPDDR-based server memory products, with plans to eventually support standards like LPDDR6.

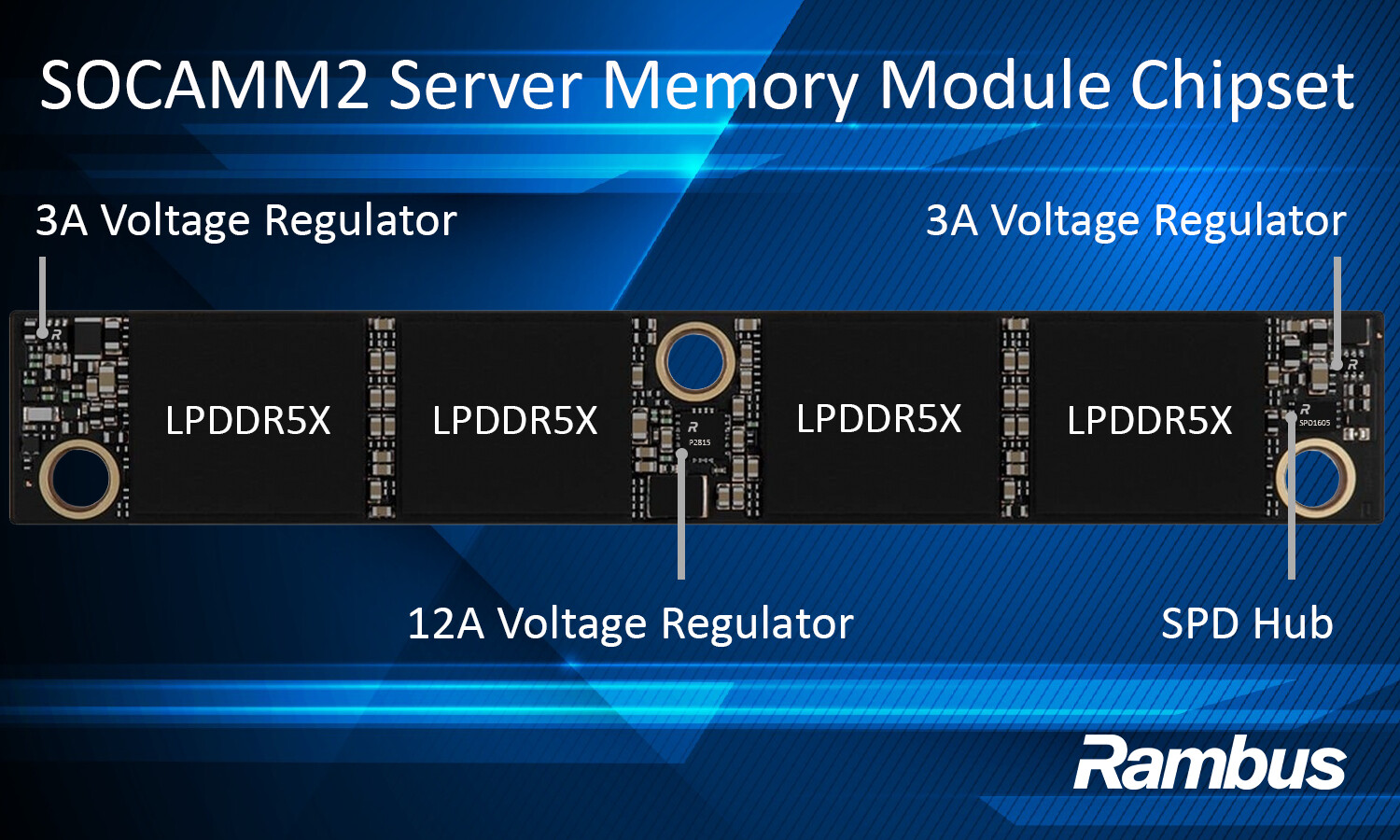

Technically, the SOCAMM2 chipset supports LPDDR5X memory modules operating at speeds up to 9.6 Gb/s per pin, along with integrated voltage regulators (12A and 3A) for localized power conversion. An SPD hub is also included, allowing for module identification and telemetry, which could streamline system management in data centers. The chipset is already being produced by partners like Micron and SK hynix, with capacities ranging from 6 GB to 512 GB available.
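To put the 9.6 Gb/s figure in context, here is a minimal back-of-the-envelope sketch of theoretical peak bandwidth per module. It treats 9.6 Gb/s as a per-pin data rate and assumes a 128-bit module data bus; the bus width is an assumption for illustration and is not stated in the announcement. The function and constant names are hypothetical.

```python
def peak_bandwidth_gbytes(pin_rate_gbps: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s: per-pin rate times bus width, bits to bytes."""
    return pin_rate_gbps * bus_width_bits / 8


# Per-pin data rate quoted in the article.
PIN_RATE_GBPS = 9.6
# ASSUMPTION: 128-bit data bus per module (not given in the article).
ASSUMED_BUS_WIDTH_BITS = 128

if __name__ == "__main__":
    bw = peak_bandwidth_gbytes(PIN_RATE_GBPS, ASSUMED_BUS_WIDTH_BITS)
    print(f"Theoretical peak: {bw:.1f} GB/s per module")
```

Under those assumptions the result is roughly 153.6 GB/s per module. Real-world throughput would be lower once protocol overhead and access patterns are taken into account.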

How This Changes AI Server Management

The biggest advantage of SOCAMM2 lies in its serviceability. IT teams managing AI infrastructure could see reduced downtime and lower maintenance costs, as memory modules can be upgraded without replacing entire servers. However, transitioning from DDR5 to LPDDR-based solutions will require careful consideration of compatibility, power efficiency, and long-term scalability.

- Modular, detachable LPDDR5X modules with speeds up to 9.6 Gb/s

- Integrated voltage regulators (12A/3A) for efficient power distribution

- SPD hub for module telemetry and configuration

The roadmap for this technology isn’t fully clear yet, but Rambus has hinted at expanding beyond LPDDR5X in the future. Whether this shift will gain widespread adoption depends on how quickly industry partners standardize around it. IT teams should keep an eye on pricing stability for high-capacity modules (192 GB to 512 GB), compatibility with existing AI server platforms, and the timeline for LPDDR6 integration.

What’s Next for AI Memory?

The SOCAMM2 chipset represents a bold move toward modular memory in data centers, but its success will depend on broader industry support. If adopted widely, it could pave the way for more efficient, scalable AI server architectures—though challenges around performance consistency and cost remain. For now, the focus is on proving that LPDDR5X can deliver the same bandwidth and density as DDR5 while offering the flexibility of a modular design.