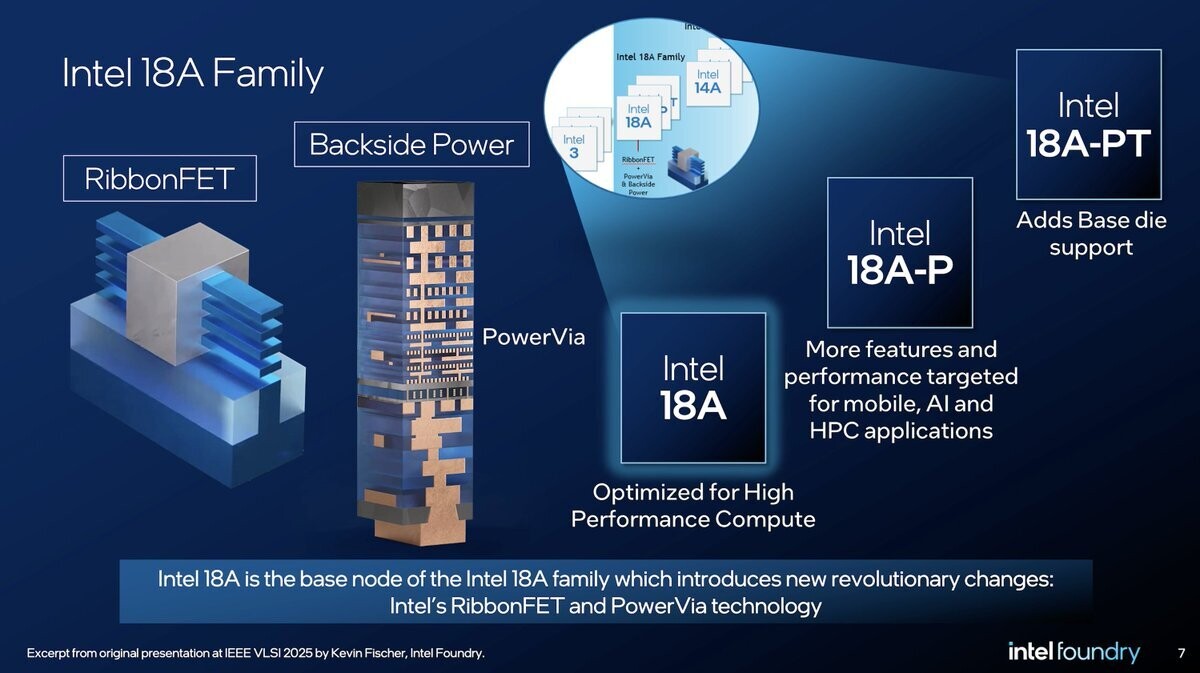

Intel is preparing to challenge HBM4 in high-performance computing with a new memory architecture. The HB3DM (High Bandwidth 3D Memory) stack, based on Z-Angle Memory technology, promises a large jump in bandwidth over HBM4, though with less capacity per module in its first generation, and is aimed at AI workloads.

The first generation of HB3DM will stack nine layers using hybrid bonding: a logic layer at the base, responsible for data movement, and eight DRAM layers on top for storage. Each layer is expected to contain approximately 13,700 through-silicon vias (TSVs), the vertical connections that carry signals between the bonded layers.

Key Specifications

- Capacity: 10 GB per module

- Bandwidth: Approximately 5.3 TB/s per module

- Layer Count: Nine layers (one logic, eight DRAM)

- TSVs per Layer: Around 13,700
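
The per-layer capacity implied by these figures can be worked out with a short calculation. This is a rough sketch that assumes the 10 GB is split evenly across the eight DRAM layers, which the published figures do not confirm; the variable names are illustrative.

```python
# Figures quoted in the specification list above (approximate).
CAPACITY_GB = 10   # total capacity per module
DRAM_LAYERS = 8    # the ninth layer is logic and stores no data

# Assumption: capacity is divided evenly across the DRAM layers.
capacity_per_layer_gb = CAPACITY_GB / DRAM_LAYERS
capacity_per_layer_gbit = capacity_per_layer_gb * 8  # 8 bits per byte

print(f"{capacity_per_layer_gb} GB ({capacity_per_layer_gbit:.0f} Gbit) per DRAM layer")
```

Under that assumption, each DRAM layer would hold 1.25 GB, or 10 Gbit.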

At approximately 5.3 TB/s per module, HB3DM offers roughly 2.65 times the bandwidth of HBM4, which delivers around 2 TB/s per stack. A gap of that size could make it an attractive option for AI accelerators and other high-performance computing applications.

HB3DM has limitations, however. Its capacity of 10 GB per module is roughly one-fifth of HBM4's 48 GB per stack. This trade-off between bandwidth and capacity will likely influence where HB3DM is adopted. Intel may address the limitation by increasing the number of layers in future generations, but for now HB3DM leads on bandwidth, not capacity.
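
To make the trade-off concrete, the sketch below compares the two technologies using only the approximate figures quoted in this article. The dictionary layout and the `bandwidth_per_gb` helper are illustrative, and the conversion assumes decimal units (1 TB = 1000 GB).

```python
# Per-module / per-stack figures quoted in the article (approximate).
HB3DM = {"bandwidth_tb_per_s": 5.3, "capacity_gb": 10}
HBM4 = {"bandwidth_tb_per_s": 2.0, "capacity_gb": 48}


def bandwidth_per_gb(spec):
    """Return bandwidth per unit of capacity, in GB/s per GB stored."""
    return spec["bandwidth_tb_per_s"] * 1000 / spec["capacity_gb"]


# How much more bandwidth HB3DM offers, and how much more capacity HBM4 offers.
bandwidth_ratio = HB3DM["bandwidth_tb_per_s"] / HBM4["bandwidth_tb_per_s"]
capacity_ratio = HBM4["capacity_gb"] / HB3DM["capacity_gb"]

print(f"HB3DM bandwidth advantage: {bandwidth_ratio:.2f}x")
print(f"HBM4 capacity advantage:   {capacity_ratio:.1f}x")
print(f"HB3DM: {bandwidth_per_gb(HB3DM):.0f} GB/s per GB")
print(f"HBM4:  {bandwidth_per_gb(HBM4):.1f} GB/s per GB")
```

On these numbers HB3DM has about 2.65 times the bandwidth and HBM4 about 4.8 times the capacity, so HB3DM delivers roughly 530 GB/s for every gigabyte it stores against roughly 42 GB/s for HBM4.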

Market Implications

HB3DM could matter most to the AI industry, which increasingly relies on high-bandwidth memory. Its potential to deliver higher performance per unit area could make it attractive for data centers and other large-scale computing environments.

For IT teams evaluating memory for AI workloads, HB3DM is a promising alternative to HBM4. Its higher bandwidth could improve performance in applications such as deep learning, natural language processing, and computer vision. However, the lower capacity per module may rule it out for workloads that need large amounts of memory close to the accelerator.
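
One way to frame that evaluation is to ask how many modules a given working set would require. The sketch below is a back-of-the-envelope estimate using the figures quoted in this article. The 96 GB working set and the `size_for_working_set` helper are hypothetical, and the calculation ignores packaging limits on how many modules an accelerator can actually host.

```python
import math

# Per-module / per-stack figures quoted in the article (approximate).
SPECS = {
    "HB3DM": {"capacity_gb": 10, "bandwidth_tb_per_s": 5.3},
    "HBM4": {"capacity_gb": 48, "bandwidth_tb_per_s": 2.0},
}


def size_for_working_set(working_set_gb, spec):
    """Return (module count, aggregate bandwidth in TB/s) needed to hold the working set."""
    modules = math.ceil(working_set_gb / spec["capacity_gb"])
    return modules, modules * spec["bandwidth_tb_per_s"]


WORKING_SET_GB = 96  # hypothetical working set, chosen only for illustration

for name, spec in SPECS.items():
    modules, bandwidth = size_for_working_set(WORKING_SET_GB, spec)
    print(f"{name}: {modules} modules, {bandwidth:.1f} TB/s aggregate")
```

For this hypothetical case, HB3DM would need ten modules where HBM4 needs two stacks. Whether the extra aggregate bandwidth justifies the larger module count depends on constraints the published figures do not cover.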

As Intel continues to develop the technology, the open question is how it will be positioned against established memory such as HBM4. A return by Intel to DRAM manufacturing could also reshape the competitive landscape more broadly.