IBM and Google Cloud are expanding their partnership, with a focus on making enterprise AI more accessible and cost-effective. The new initiative combines IBM's expertise in hybrid cloud systems with Google Cloud's computational resources in a platform meant to simplify the training of large AI models while preserving operational control, a critical factor for businesses scaling AI deployments.

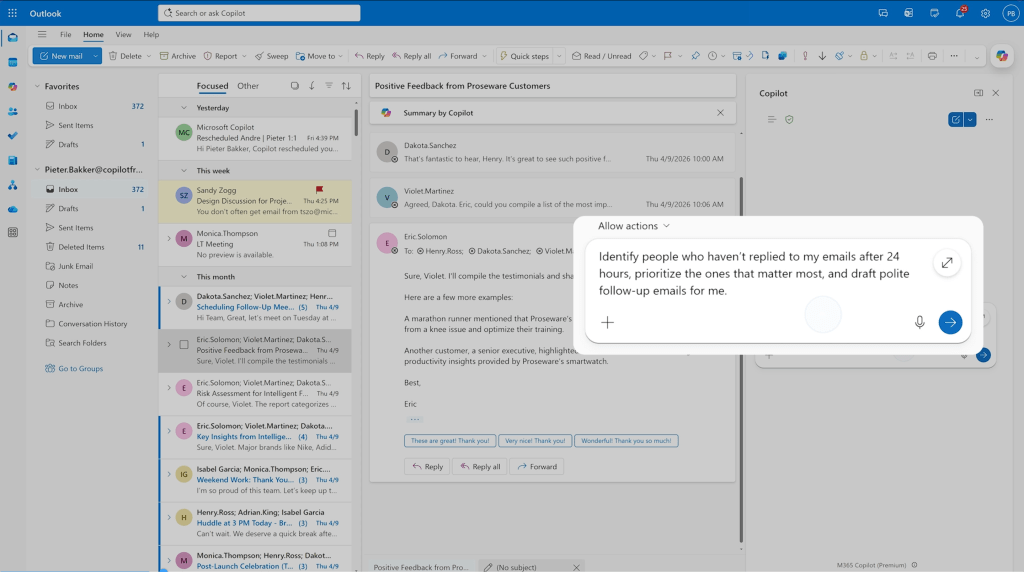

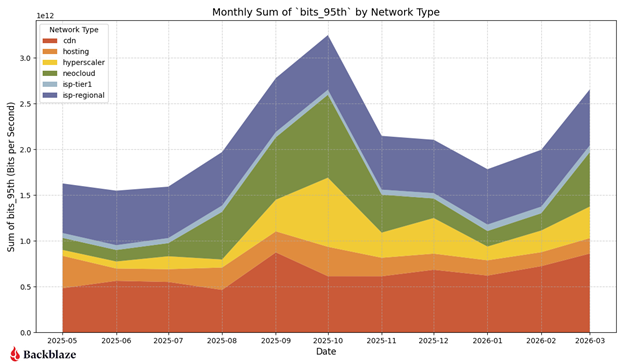

At the heart of this collaboration is an enterprise-grade AI training platform built on Google Cloud's infrastructure. The platform runs on Google Cloud's TPU v4 pods, which Google rates at more than one exaflop of peak performance for a full 4,096-chip pod, and it includes cost-saving features that let organizations scale resources dynamically based on demand. The integration with IBM's Watsonx and Google Cloud's Vertex AI services provides a unified environment for managing AI workloads, from development to deployment.

Why This Partnership Matters

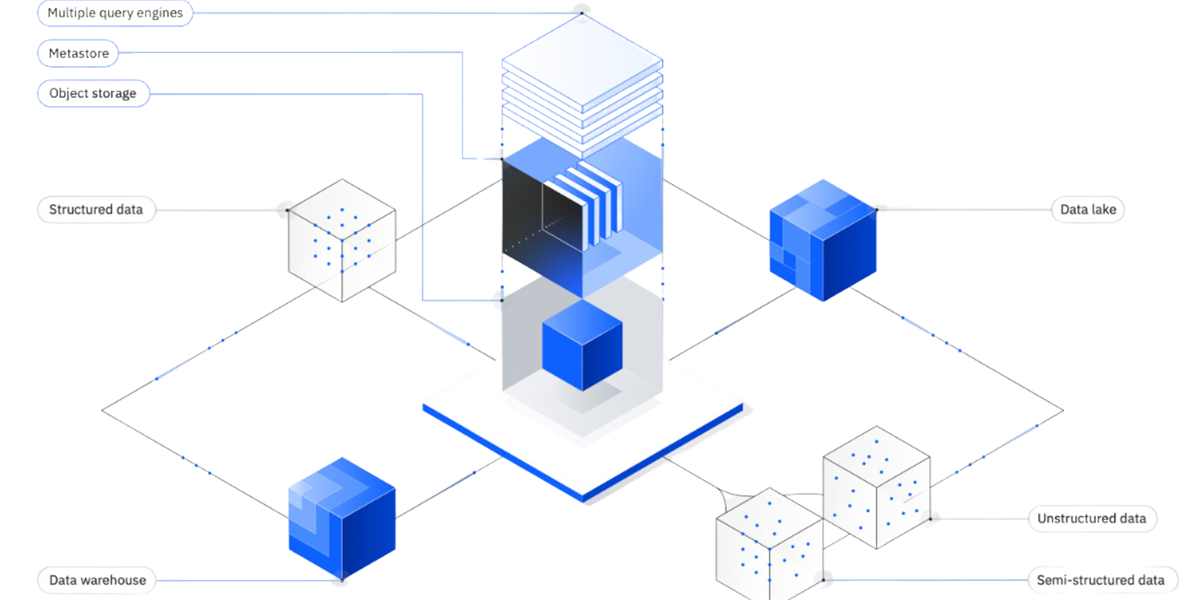

The push toward generative AI adoption has been rapid, but the underlying infrastructure required to train and deploy these models efficiently remains a hurdle. Many enterprises struggle with provisioning the necessary compute power without incurring prohibitive costs or losing flexibility across hybrid environments. The new platform aims to address this by abstracting much of the technical complexity while ensuring seamless integration between on-premises, cloud, and edge systems.

Key Features and Benefits

- A unified AI training and management platform that integrates IBM's Watsonx with Google Cloud's Vertex AI services, streamlining workflows for model development and deployment.

- Hybrid cloud governance tools that provide a single interface for managing workloads across multiple environments, reducing operational overhead and minimizing configuration drift—a common issue in distributed systems.

- Pre-built templates for common AI tasks, such as fine-tuning foundation models or deploying generative AI applications at scale, allowing enterprises to accelerate time-to-market without sacrificing customization.

The hybrid management suite is particularly noteworthy for its ability to enforce consistent policies across cloud and on-premises infrastructure. This ensures that governance remains robust as workloads grow, addressing a persistent challenge in large-scale deployments where maintaining control becomes increasingly difficult.
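To make the idea of configuration drift concrete, the following is a minimal sketch of how a drift check might compare each environment's settings against one shared baseline policy. It is not the platform's actual tooling: the function `find_drift`, the policy keys, and the environment names are all hypothetical and chosen purely for illustration.

```python
# Hypothetical baseline policy; keys and values are illustrative assumptions,
# not settings taken from the IBM / Google Cloud platform.
BASELINE = {
    "encryption_at_rest": True,
    "max_accelerator_hours": 500,
    "region_lock": "eu",
}

# Hypothetical per-environment configurations.
ENVIRONMENTS = {
    "on_prem": {"encryption_at_rest": True, "max_accelerator_hours": 500, "region_lock": "eu"},
    "cloud": {"encryption_at_rest": True, "max_accelerator_hours": 800, "region_lock": "eu"},
    "edge": {"encryption_at_rest": False, "max_accelerator_hours": 500},
}

UNSET = "<unset>"  # marker used when an environment omits a policy key


def find_drift(baseline, environments):
    """Return, per environment, the settings that differ from the baseline.

    The result maps environment name -> {key: (expected, actual)}.
    Environments that match the baseline exactly are left out.
    """
    report = {}
    for name, config in environments.items():
        diffs = {}
        for key, expected in baseline.items():
            actual = config.get(key, UNSET)
            if actual != expected:
                diffs[key] = (expected, actual)
        if diffs:
            report[name] = diffs
    return report


if __name__ == "__main__":
    for env, diffs in find_drift(BASELINE, ENVIRONMENTS).items():
        for key, (expected, actual) in diffs.items():
            print(f"{env}: {key} expected {expected!r}, found {actual!r}")
```

In this sketch the baseline is the single source of truth, so a drifted environment is simply one whose settings no longer match it. A real governance tool would also need to handle policy versioning, exceptions, and remediation, which are out of scope here.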

A Shift in Enterprise AI Adoption

This collaboration is more than a technological advance; it reflects a broader shift in how enterprises approach AI adoption. The focus on cost efficiency and operational control matches the practical needs of organizations that cannot afford to treat AI as an experiment. As the platform evolves, further refinements are expected in how it balances performance, cost, and governance, three factors that any large-scale AI initiative has to weigh against one another.

The most significant change lies in how the partnership reframes the conversation around enterprise AI: the question is no longer whether AI at scale is feasible but whether it can be run efficiently. That makes the platform worth watching closely as businesses work through the complexities of scaling AI in hybrid environments.