SpaceX and Tesla are betting on a future where AI’s insatiable hunger for power forces data centers into orbit. The real constraint, though, may turn out to be not energy but the chips that run them. Elon Musk has long framed AI as an existential priority, and his latest remarks suggest the next phase of its expansion will require a radical rethinking of where, and how, computing happens.

The core issue? Earth’s power grid is already straining under the weight of AI training, with projections showing data centers consuming up to 12% of U.S. electricity by 2030. Musk’s solution is to deploy massive AI clusters in space, where grid constraints no longer apply. That shift, however, exposes a new bottleneck: manufacturing enough advanced chips to fill them.

- Orbital data centers could become the most economical home for AI infrastructure within roughly 30 months, Musk argues, bypassing Earth’s power grid limits.

- Average U.S. electricity consumption hovers around 0.5 terawatts of continuous power; AI demand alone could double that by 2030.

- Chips will emerge as the primary bottleneck once space-based computing scales, prompting Tesla to explore TeraFab, a proposed 2nm fabrication facility.

- Musk dismisses traditional cleanroom requirements for TeraFab, suggesting a more flexible (and controversial) approach to chip production.

- SpaceX’s Starship and Starlink would enable the logistics of orbital deployment, aligning with Musk’s broader Mars ambitions.

- Competitors like Starcloud have already tested AI hardware in low Earth orbit, but Musk’s vision targets a scale measured in gigawatts.

- Tesla is collaborating with TSMC and Samsung fabs but claims existing capacity falls short of AI’s accelerating needs.

The argument for space starts with power. Musk has repeatedly framed Earth’s energy grid as a fundamental constraint, one that will force hyperscalers to either innovate or stall. Data centers already account for nearly 1% of global electricity use today; by 2030, that figure could balloon to 12% in the U.S. alone. Building enough terrestrial infrastructure to meet that demand would require constructing power plants at a pace unseen since the Industrial Revolution. Orbital data centers, by contrast, would sidestep grid limitations entirely, assuming the logistics of launching and sustaining them become viable.
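To put those percentages in physical terms, here is a rough back-of-the-envelope sketch in Python. It takes the 0.5 terawatt figure and the 12% projection quoted in this article at face value, and it assumes total U.S. consumption stays flat through 2030, which is a simplification for illustration rather than a forecast. The function and variable names are made up for this example.

```python
# Back-of-the-envelope check of the figures quoted in the article.
# Assumption (not from the article): total U.S. consumption stays flat through 2030.

HOURS_PER_YEAR = 8760  # 365 days * 24 hours


def annual_energy_twh(avg_power_tw: float) -> float:
    """Convert an average power draw in terawatts to annual energy in terawatt-hours."""
    return avg_power_tw * HOURS_PER_YEAR


def share_in_gw(avg_power_tw: float, share: float) -> float:
    """Return the given share of an average power draw, expressed in gigawatts."""
    return avg_power_tw * share * 1000  # 1 TW = 1000 GW


us_avg_power_tw = 0.5     # average U.S. draw quoted in the article
data_center_share = 0.12  # upper-end 2030 projection quoted in the article

total_twh = annual_energy_twh(us_avg_power_tw)
dc_gw = share_in_gw(us_avg_power_tw, data_center_share)
dc_twh = total_twh * data_center_share

print(f"U.S. annual consumption: about {total_twh:,.0f} TWh")
print(f"A 12% share: about {dc_gw:.0f} GW continuous, or {dc_twh:,.0f} TWh per year")
```

Under those assumptions, a 12% share works out to roughly 60 gigawatts of continuous demand, which gives a sense of why Musk talks about orbital capacity in gigawatts.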

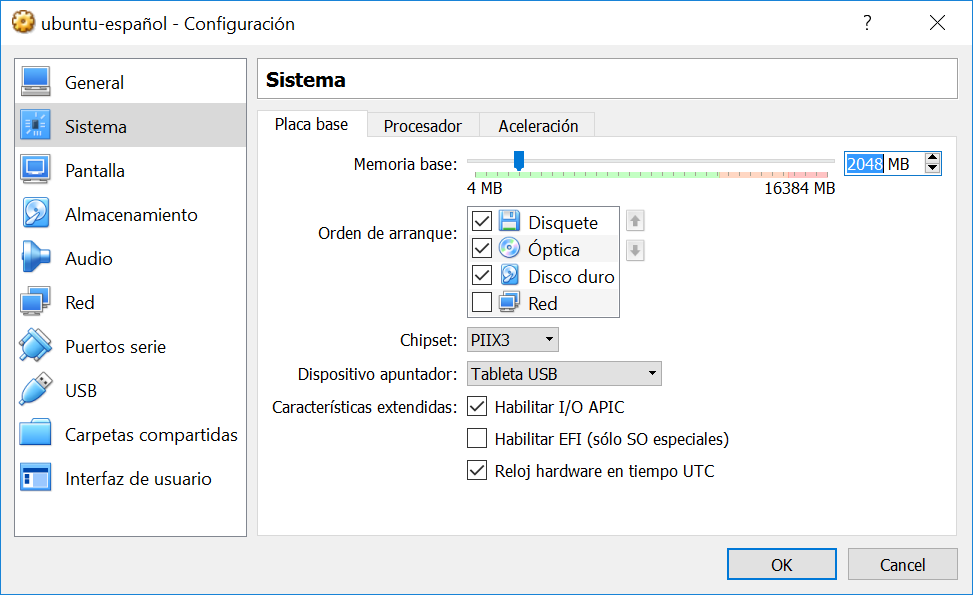

Yet the transition to space introduces its own challenges. Even if energy is no longer the problem, the chips powering these orbital clusters would need to be manufactured at unprecedented scales. Musk’s response? A proposed facility called TeraFab, designed to produce 2nm chips without relying on conventional cleanroom standards. The idea is provocative: Musk has suggested he’d smoke a cigar inside the facility, a jab at the rigid protocols of traditional semiconductor manufacturing. Whether TeraFab will materialize remains unclear, but the proposal itself reflects a broader frustration with the current chip supply chain.

Tesla is already working with TSMC’s Arizona and Taiwan operations, as well as Samsung’s Korean and Texas fabs, but Musk has implied that even these partnerships can’t keep pace with AI’s demands. The result is a supply squeeze: AI needs more chips, but the chips themselves are becoming harder to produce in the volumes required. Orbital data centers could remove the power constraint, but the vision only works if the underlying hardware can be scaled to match.

The timeline Musk outlines is aggressive. Within 30 months, he predicts, the economics of AI will favor space-based infrastructure. That would mark a seismic shift, one that would require not just technological breakthroughs but a reconfiguration of how data centers are designed, deployed, and networked. For now, the biggest unknown isn’t whether orbital AI is possible—it’s whether the industry can build the chips to run it.