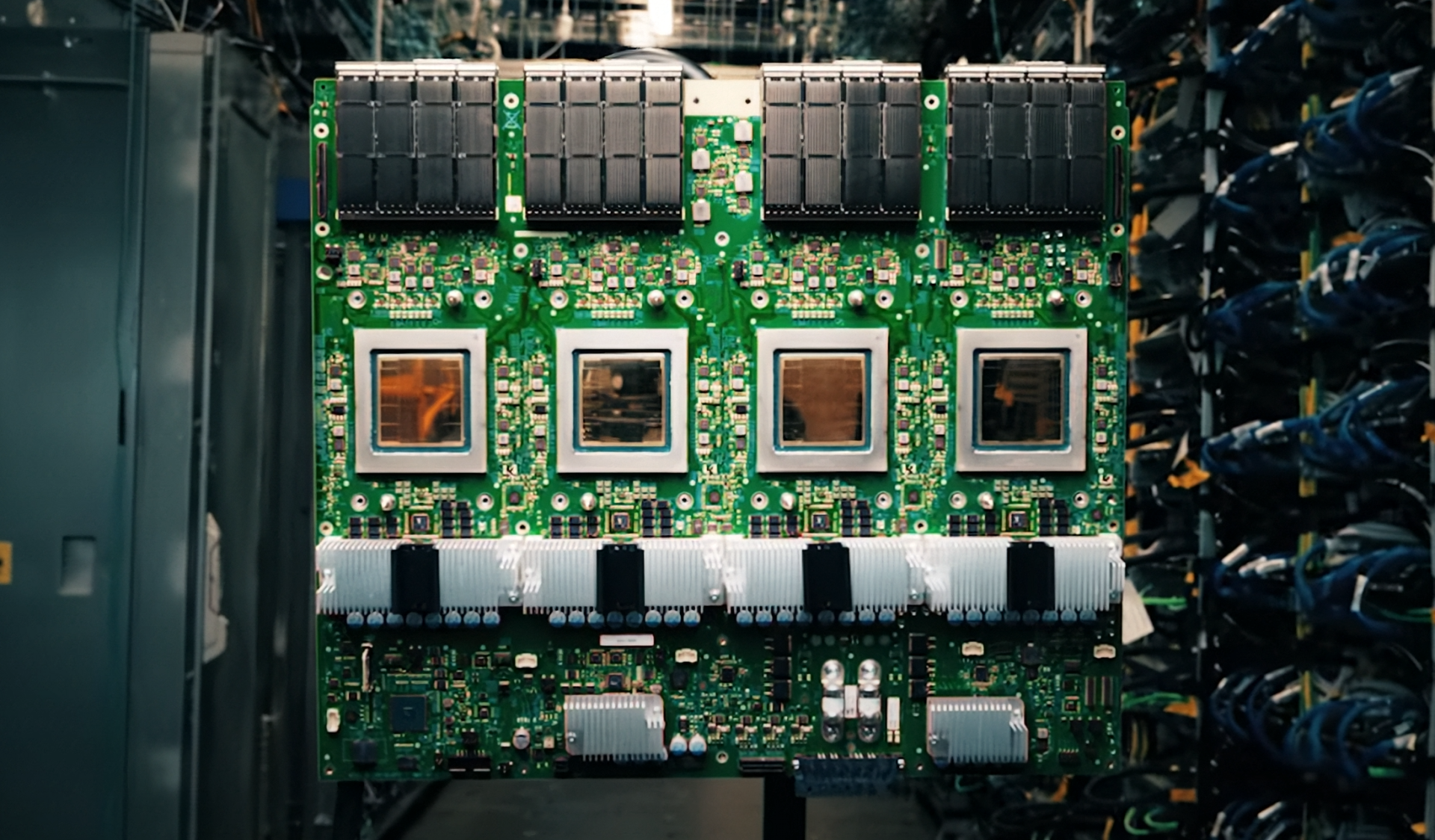

Data centers are evolving rapidly, with AI workloads demanding more power—and more cooling—than ever before. ASRock Rack’s latest liquid-cooled servers aim to meet that challenge head-on, integrating NVIDIA Rubin and Blackwell GPUs into a platform designed for sustained high-performance computing without thermal compromise.

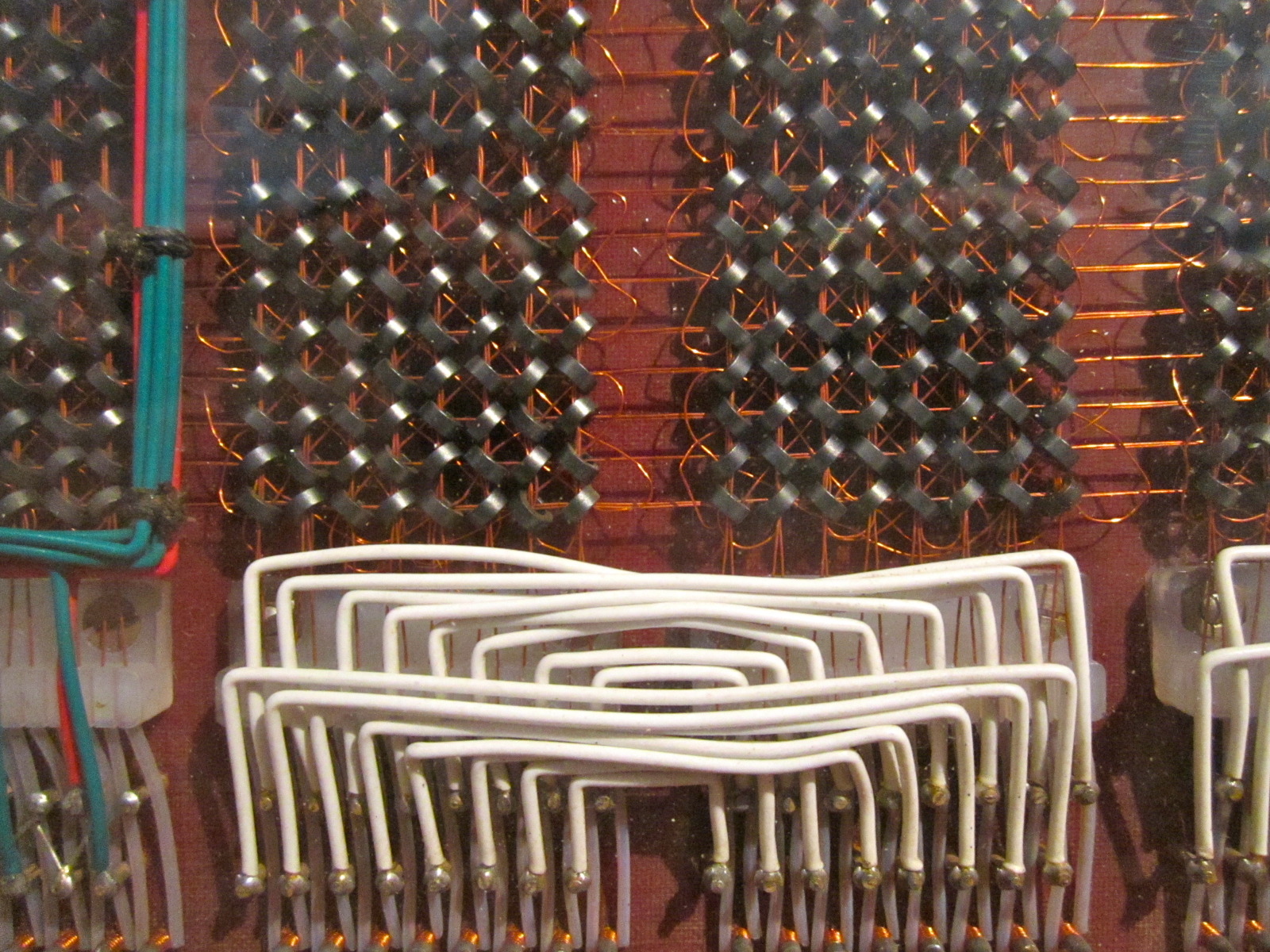

Liquid cooling has long been a staple in supercomputing, but its adoption in AI data centers is accelerating as workloads push traditional air cooling to its limits. ASRock Rack's new systems build direct-to-chip liquid cooling into the server architecture, an approach in which coolant-carrying cold plates sit directly on the hottest components. The goal is to keep temperatures stable even when power consumption spikes during intensive AI training or inference.

Balancing Performance and Efficiency

The heart of these servers lies in NVIDIA’s Rubin and Blackwell GPUs, each aimed at different aspects of AI workloads. The Rubin GPU, Blackwell's successor and announced with HBM4 memory, is positioned for large-scale inference, while the Blackwell GPU targets training tasks, with NVIDIA listing up to 192GB of HBM3e memory and roughly 8TB/s of memory bandwidth for its B200 variant. ASRock Rack’s design is intended to let users deploy these GPUs without sacrificing performance or stability.

- Dual-socket server support with capacity for up to four GPUs

- Redundant power supplies and hot-swappable components for enterprise-grade reliability

- Direct-to-chip liquid cooling to maintain thermal efficiency in high-density environments

A Trade-Off Worth Considering

While the benefits of liquid-cooled AI servers are clear—improved thermal performance, higher power efficiency, and the ability to handle dense GPU configurations—they also introduce new operational considerations. Deploying liquid cooling requires careful planning around plumbing, monitoring, and maintenance, which can add complexity for data center teams. However, for enterprises and hyperscalers running workloads that demand sustained high performance, these trade-offs are increasingly justified.
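To give a sense of what planning around plumbing involves, the sketch below estimates how much coolant flow a server needs from the basic heat balance Q = ṁ · c_p · ΔT. Every number in it is an illustrative assumption rather than an ASRock Rack or NVIDIA specification: the per-GPU heat load, the allowed coolant temperature rise, and the use of plain water properties are all hypothetical, and the function name is invented for this example.

```python
# Illustrative sizing sketch. All numeric inputs are assumptions,
# not ASRock Rack or NVIDIA specifications.

WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approximate value for liquid water
WATER_DENSITY_KG_PER_L = 0.997           # approximate value near 25 °C


def required_flow_l_per_min(heat_load_w: float, delta_t_k: float) -> float:
    """Coolant flow (L/min) needed to carry heat_load_w watts
    with a coolant temperature rise of delta_t_k kelvin."""
    if heat_load_w < 0:
        raise ValueError("heat_load_w must be non-negative")
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    # Heat balance: Q = m_dot * c_p * delta_T, solved for the mass flow m_dot.
    mass_flow_kg_per_s = heat_load_w / (WATER_SPECIFIC_HEAT_J_PER_KG_K * delta_t_k)
    # Convert mass flow to volume flow, then seconds to minutes.
    return mass_flow_kg_per_s / WATER_DENSITY_KG_PER_L * 60.0


if __name__ == "__main__":
    gpu_count = 4                # the article cites up to four GPUs per server
    assumed_watts_per_gpu = 1000.0  # hypothetical heat load per GPU
    assumed_delta_t_k = 10.0        # hypothetical inlet-to-outlet temperature rise

    flow = required_flow_l_per_min(gpu_count * assumed_watts_per_gpu, assumed_delta_t_k)
    print(f"Estimated coolant flow: {flow:.2f} L/min")
```

Under these assumptions the estimate comes to a little under 6 L/min for the GPUs alone. Halving the allowed temperature rise doubles the required flow, which is why loop design and monitoring need attention early. Real deployments often use water-glycol mixtures with a lower specific heat than water, so actual flow requirements would likely be somewhat higher.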

Looking Ahead

The new ASRock Rack servers are now available, targeting organizations with demanding AI workloads. As the industry continues to push toward more powerful and efficient computing, balancing power consumption, cooling requirements, and scalability will remain a critical focus. These systems represent a step in that direction, offering a path to sustained high-performance AI within thermal limits for teams prepared to take on the added operational work of liquid cooling.