AMD’s latest push into on-device AI agents introduces a clear performance divide between a unified-memory APU setup and a discrete-GPU setup, forcing users to weigh cost against raw throughput in a market where local inference is becoming a competitive edge.

The company’s ‘Agent Computer’ vision positions itself as the next frontier for personal AI, but the entry price, starting at $2,700 for a Ryzen AI Max+ system with 128 GB unified memory, keeps this capability closer to enterprise budgets than to mainstream consumer adoption. The distinction between the two paths is stark: one prioritizes concurrency and a larger context window, while the other delivers speed at the cost of a smaller context window and fewer concurrent agents.

RyzenClaw vs. RadeonClaw: A Performance Gap Defined by Architecture

- RyzenClaw:
  - Ryzen AI Max+ system with 128 GB unified memory (96 GB reserved for variable graphics memory)
  - Qwen 3.5 35B A3B model performance: 45 tokens/second, 10,000 input tokens in ~19.5 seconds
  - Context window: 260K tokens
  - Concurrent agents: up to six

The RyzenClaw configuration leverages AMD’s AI Max+ architecture, designed for high concurrency and larger context windows, a key advantage for developers experimenting with ‘agent swarms’ on consumer-grade hardware. However, needing roughly 19.5 seconds to process a 10,000-token prompt (about 513 tokens per second) suggests this path is better suited to prototyping than to real-time applications.

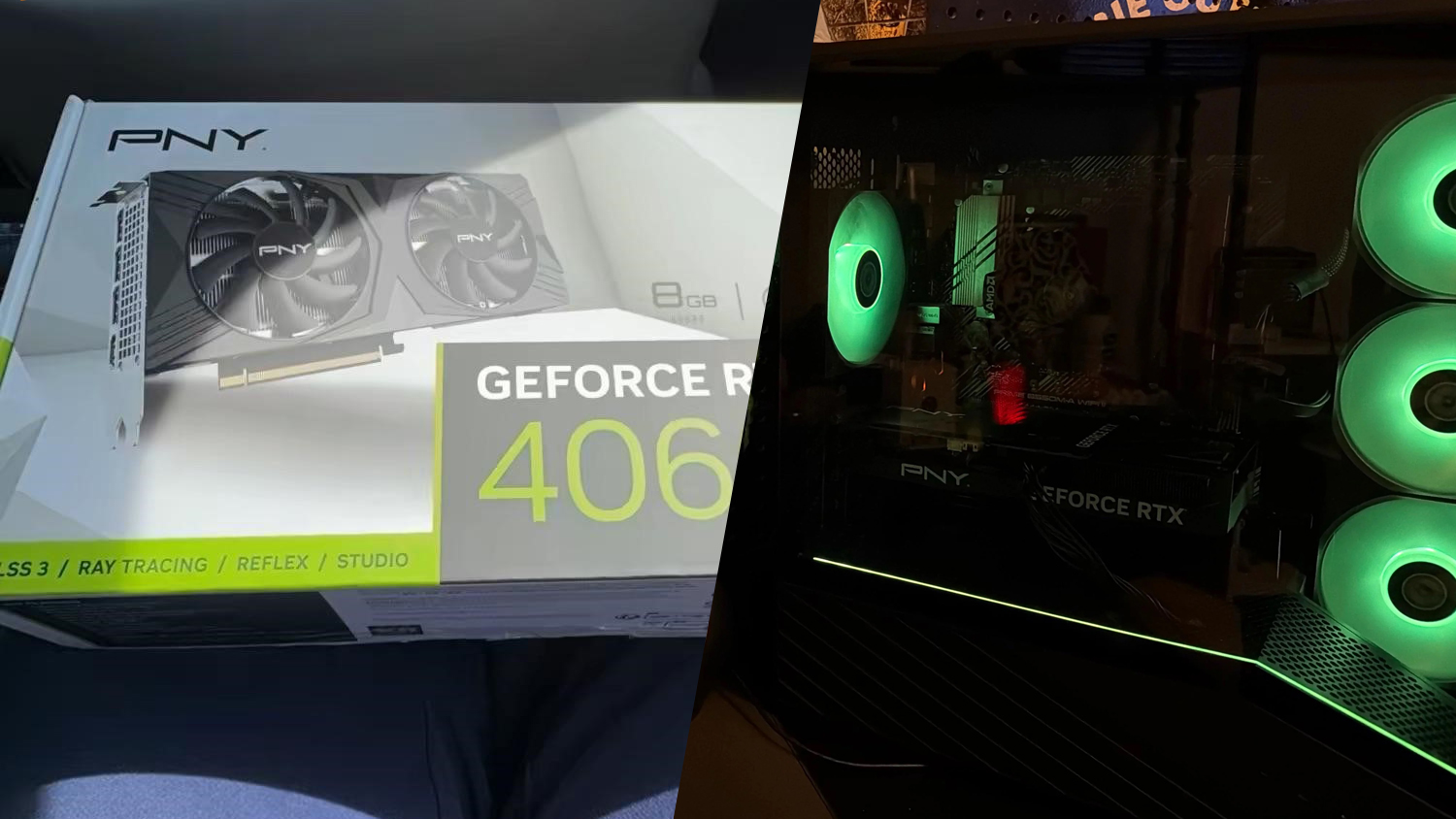

- RadeonClaw:

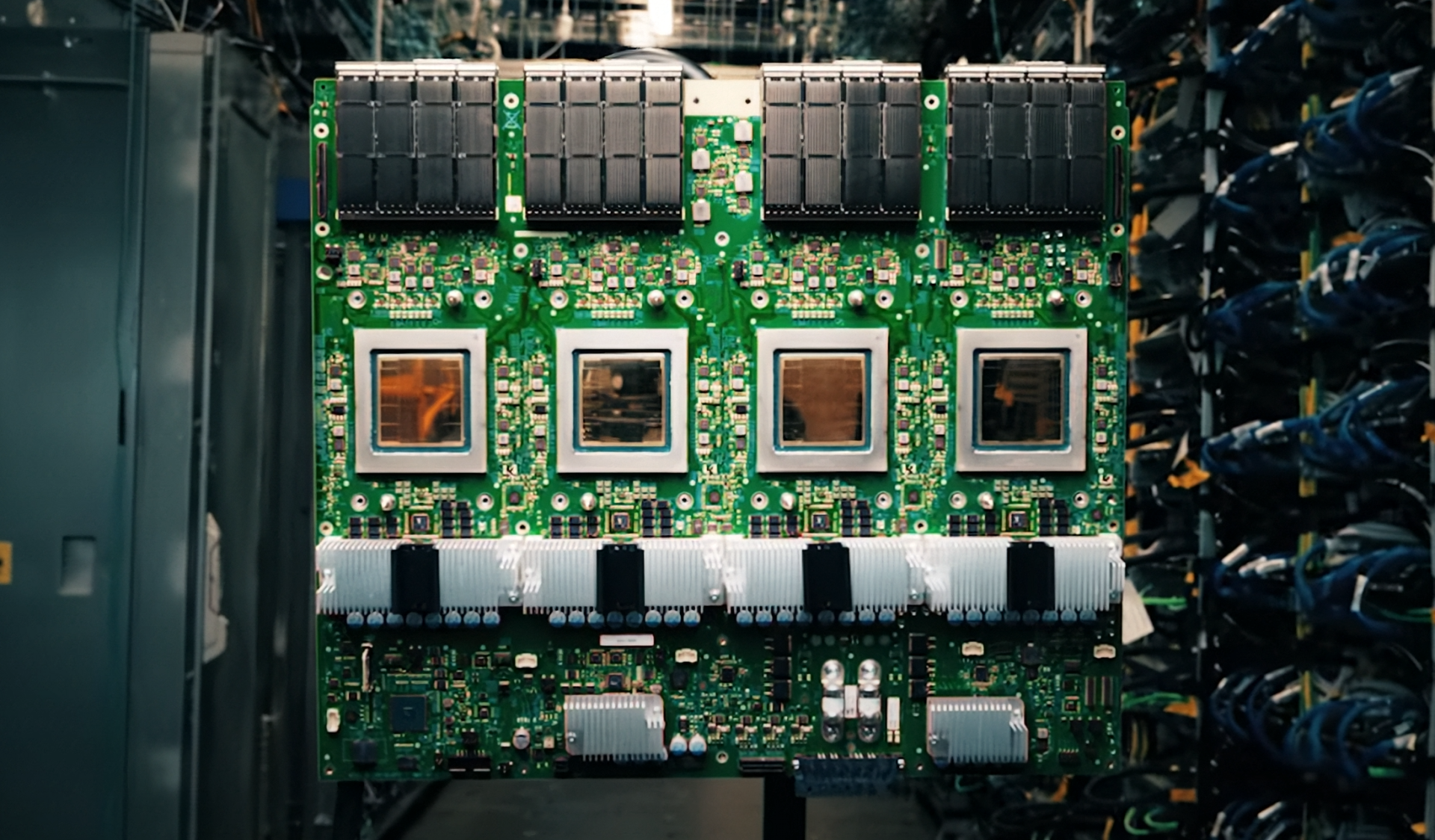

- Radeon AI PRO R9700 workstation GPU with 32 GB VRAM

- Qwen 3.5 35B A3B model performance: 120 tokens/second, 10,000 input tokens in ~4.4 seconds

- Context window: 190K tokens

- Concurrent agents: two

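The RadeonClaw setup, by contrast, prioritizes raw speed with a workstation-class GPU: it processes the same 10,000-token prompt in about 4.4 seconds instead of 19.5 (roughly 4.4 times faster) and generates output about 2.7 times faster (120 versus 45 tokens/second). The tradeoff, a smaller context window and limited agent concurrency, reflects the constraint of a discrete GPU with 32 GB of VRAM, where memory capacity rather than compute limits how much context and how many agents fit at once.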
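The quoted figures can be turned into directly comparable ratios. The following is a minimal sketch, not AMD’s methodology: it assumes the 10,000-token timings measure prompt processing only and that the tokens/second figures describe output generation. The names `prompt_tps` and `compare` are illustrative.

```python
# Figures quoted in the article for Qwen 3.5 35B A3B.
PROMPT_TOKENS = 10_000
RYZENCLAW = {"decode_tps": 45.0, "prompt_seconds": 19.5}
RADEONCLAW = {"decode_tps": 120.0, "prompt_seconds": 4.4}


def prompt_tps(config: dict) -> float:
    """Implied prompt-processing rate in tokens per second (assumption:
    the quoted time covers only ingestion of the 10,000-token prompt)."""
    return PROMPT_TOKENS / config["prompt_seconds"]


def compare(slow: dict, fast: dict) -> dict:
    """Ratios of the faster setup relative to the slower one."""
    return {
        # How many times faster the prompt is processed.
        "prompt_speedup": slow["prompt_seconds"] / fast["prompt_seconds"],
        # How many times faster output tokens are generated.
        "decode_speedup": fast["decode_tps"] / slow["decode_tps"],
        # Fraction of prompt-processing time removed.
        "prompt_time_saved": 1 - fast["prompt_seconds"] / slow["prompt_seconds"],
    }


if __name__ == "__main__":
    print(f"RyzenClaw prompt rate:  {prompt_tps(RYZENCLAW):.0f} tokens/s")
    print(f"RadeonClaw prompt rate: {prompt_tps(RADEONCLAW):.0f} tokens/s")
    for name, value in compare(RYZENCLAW, RADEONCLAW).items():
        print(f"{name}: {value:.2f}")
```

On these numbers the gap in prompt processing (roughly 4.4x) is larger than the gap in generation speed (roughly 2.7x).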

Key Specifications: A Clash of Memory and Throughput

- Display: N/A (local AI agent workloads)
- Chip: Ryzen AI Max+ (128 GB unified memory) or Radeon AI PRO R9700 (32 GB VRAM)
- Memory: 128 GB (RyzenClaw), 32 GB (RadeonClaw)
- Storage: Not specified (external storage recommended for large models)
- Power: 200 W (Radeon AI PRO R9700)
- Connectivity: Standard PC ports (USB, DisplayPort, PCIe 5.0)
- Pricing: $2,700+ for RyzenClaw setup; $1,299 for Radeon AI PRO R9700 alone

The memory disparity is the most glaring difference: 128 GB of unified memory in the RyzenClaw path (96 GB of it reserved as graphics memory) allows for aggressive agent swarming, while the RadeonClaw’s 32 GB of VRAM, though faster, restricts how many agents and how much context fit at once. This reflects a broader pattern in which discrete-GPU inference excels at throughput but struggles with workloads limited by memory capacity.
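To put the scalability gap in numbers, the sketch below multiplies each setup’s context window by its agent count. This is an upper bound under a loud assumption: the source does not say whether every agent can fill the full context window at the same time. The `Setup` class and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Setup:
    name: str
    gpu_memory_gb: int    # memory available to the GPU, per the article
    context_tokens: int   # quoted context window
    max_agents: int       # quoted concurrent-agent limit

    def aggregate_context_tokens(self) -> int:
        """Upper bound on tokens held across all agents (assumes every
        agent fills its whole context window at the same time)."""
        return self.context_tokens * self.max_agents


RYZENCLAW = Setup("RyzenClaw", gpu_memory_gb=96, context_tokens=260_000, max_agents=6)
RADEONCLAW = Setup("RadeonClaw", gpu_memory_gb=32, context_tokens=190_000, max_agents=2)

if __name__ == "__main__":
    for setup in (RYZENCLAW, RADEONCLAW):
        print(f"{setup.name}: up to {setup.aggregate_context_tokens():,} tokens "
              f"across {setup.max_agents} agents in {setup.gpu_memory_gb} GB")
    ratio = RYZENCLAW.aggregate_context_tokens() / RADEONCLAW.aggregate_context_tokens()
    print(f"Aggregate context ratio: {ratio:.1f}x")
```

Under that assumption, RyzenClaw’s ceiling is 1,560,000 tokens across six agents against 380,000 across two for RadeonClaw, from three times the GPU-addressable memory.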

Who Benefits—and Who Should Skip?

Developers and research teams working on multi-agent systems will find the RyzenClaw path more flexible, despite its higher latency. Those focused on single-agent latency or real-time processing will lean toward RadeonClaw, though the cost of the workstation GPU alone ($1,299, before the rest of the system) makes this a niche proposition. For end users, neither configuration offers immediate value; the average consumer has little need for local AI agents at this stage, and the price barrier ensures this remains an early-adopter play.
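For readers deciding between the two, a rough per-request time estimate may help. The sketch below assumes response time is prompt processing plus generation, and that both scale linearly with token count at the rates implied by the quoted figures. Real workloads are unlikely to be this tidy, and `estimate_request_seconds` is an illustrative helper, not a vendor tool.

```python
def estimate_request_seconds(prompt_tokens: int, output_tokens: int,
                             prompt_tps: float, decode_tps: float) -> float:
    """Estimate wall-clock time for one request, assuming linear scaling:
    time = prompt_tokens / prompt_tps + output_tokens / decode_tps."""
    if prompt_tps <= 0 or decode_tps <= 0:
        raise ValueError("throughput values must be positive")
    if prompt_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return prompt_tokens / prompt_tps + output_tokens / decode_tps


# Rates implied by the article: 10,000 prompt tokens in 19.5 s or 4.4 s,
# and 45 or 120 generated tokens per second.
RATES = {
    "RyzenClaw": {"prompt_tps": 10_000 / 19.5, "decode_tps": 45.0},
    "RadeonClaw": {"prompt_tps": 10_000 / 4.4, "decode_tps": 120.0},
}

if __name__ == "__main__":
    # Hypothetical request: 10,000-token prompt, 500-token reply.
    for name, rates in RATES.items():
        seconds = estimate_request_seconds(10_000, 500, **rates)
        print(f"{name}: about {seconds:.1f} s")
```

For the hypothetical request shown, the estimate works out to roughly 30.6 seconds on RyzenClaw and 8.6 seconds on RadeonClaw, which illustrates why latency-sensitive single-agent use favors the GPU path.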

A Market Dynamic Shaped by Cost and Control

AMD’s Agent Computer narrative hinges on data sovereignty—a compelling argument in a world where cloud dependency is increasingly scrutinized. Yet the performance tradeoffs and high cost suggest that the real market for local AI will only materialize when silicon efficiency improves, or when GPU architectures better balance memory and compute. Until then, this remains a developer’s toolkit rather than a mainstream capability.

The bottom line: AMD has laid out two distinct paths to local AI, but neither offers a compelling case for mass adoption. The choice between concurrency and speed is clear, but the price tag ensures that only those with deep pockets—and specific use cases—will take notice.