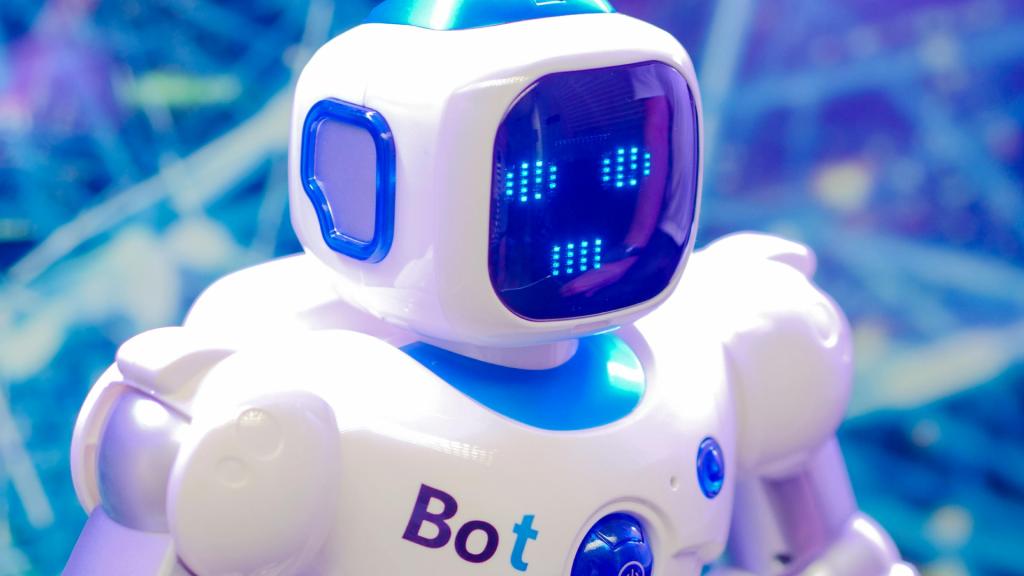

The rise of AI companions is redefining childhood play. Unlike earlier generations of toys that operated on fixed scripts, today’s AI-powered toys generate responses in real time, adapting to a child’s voice, tone, and even facial expressions. This level of interactivity creates an illusion of genuine connection, one that can at times feel more responsive than interaction with other people.

For children with social anxiety or autism, the potential benefits are substantial. These toys provide structured emotional support without the pressure of real-world interactions. They offer infinite patience, tailored responses, and a safe space to practice communication skills. For neurotypical children, however, the risks are more pronounced. Some experts suggest that unchecked use could lead to overreliance on artificial companionship, potentially displacing the everyday social struggles that build resilience.

The technology’s ability to mimic warmth and validation raises another concern. If a child finds AI responses more consistent or rewarding than those of the people around them, the child may come to prefer the machine, a dynamic that could have long-term emotional consequences. The challenge lies in ensuring these tools serve as educational aids rather than becoming primary sources of comfort.

Safety mechanisms are critical to mitigating these risks. Parents and educators must help children understand what AI is and draw clear boundaries between fantasy and reality. Without proper oversight, the risk of emotional dependency or misinterpreted information grows. Still, the innovation itself is real: these toys represent a significant leap forward in how children engage with technology.

As the industry evolves, the conversation will shift from whether these companions are appropriate to how they can be used responsibly. The balance between cutting-edge interaction and developmental safeguards will determine whether AI toys become tools for growth or sources of unintended emotional disruption.