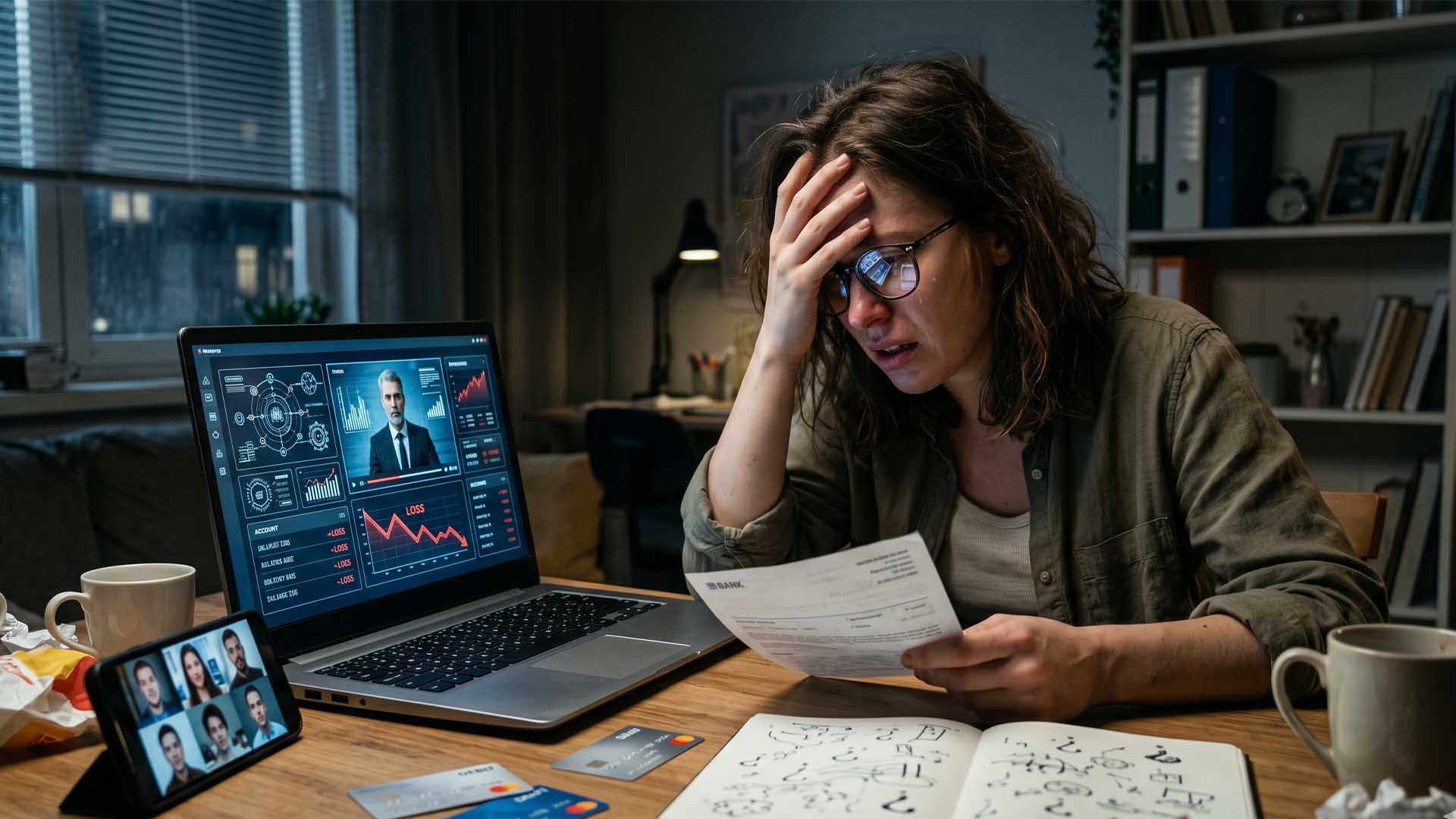

The AI Security Crisis: Why Your Defenses Are Failing Before You Even Deploy Them

AI security tools are selling a lie. Vendors promise ironclad protection, only to watch their defenses collapse when faced with even basic adversarial tactics. A study backed by a $20,000 prize pool and conducted by researchers from OpenAI, Anthropic, and Google DeepMind subjected 12 widely used AI defenses to real-world attack simulations. The result? A 90%+ bypass rate across every single solution tested.

The problem isn’t that attackers are getting smarter—it’s that defenses were never designed to handle the threats they now face. Traditional security models assume threats are static, predictable, and detectable through keyword matching or simple pattern recognition. But AI attacks don’t follow those rules. They evolve in real time, fragment malicious intent across multiple interactions, and exploit the very training data used to harden models.

Take Crescendo, an attack method that breaks instructions into 10 conversational turns, or Greedy Coordinate Gradient (GCG), which automates jailbreak suffixes through optimization. Neither requires advanced technical skills—just an understanding of how defenses think. The study’s findings are a wake-up call: the security industry is playing catch-up in a game where the attacker already knows the rules.

How Attackers Are Already Winning the Game

The research identified four distinct threat vectors already exploiting these gaps, each with devastating potential:

- Automated Adversaries leveraging open-source frameworks to test defenses in minutes. A single GCG attack can generate hundreds of bypass variations, forcing vendors to scramble for patches.

- API Abusers using stolen credentials to extract proprietary data through inference attacks—often succeeding in as few as 32 API calls. Enterprises with lax access controls are prime targets.

- Shadow AI Operators embedding malicious payloads in public LLM interactions, then repurposing responses for internal systems. IBM’s 2025 breach cost analysis found that such incidents added an average of $670,000 to the cost of a breach.

- State-Sponsored Actors compressing breach timelines from months to under 48 hours by orchestrating AI-driven phishing and exploitation at scale. Anthropic disrupted one such operation in September 2025, revealing attackers had executed thousands of requests per second—far beyond traditional defense thresholds.

The common thread? Every attack succeeded because defenses lacked contextual awareness. Stateless filters—still the industry standard—scan inputs in isolation, missing attacks that unfold across multiple turns or hide behind semantic camouflage.
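
To make the gap concrete, here is a minimal Python sketch contrasting a stateless check with one that keeps per-conversation state. The term list, the threshold, and every name in it are hypothetical, and simple keyword matching stands in for whatever classifier a real system would use. It illustrates the idea only and does not describe the study's method or any vendor's product.

```python
from dataclasses import dataclass, field

# Hypothetical risk terms; a real system would use a trained classifier.
RISK_TERMS = {"bypass", "credentials", "exfiltrate"}
THRESHOLD = 2  # assumed: flag once two distinct risk terms have appeared


def risk_terms_in(text: str) -> set[str]:
    """Return the risk terms that appear in a piece of text."""
    words = {w.strip(".,?!").lower() for w in text.split()}
    return words & RISK_TERMS


def stateless_flag(message: str) -> bool:
    """Judge one message in isolation, with no memory of earlier turns."""
    return len(risk_terms_in(message)) >= THRESHOLD


@dataclass
class ConversationMonitor:
    """Accumulate risk terms seen across all turns of one conversation."""
    seen: set[str] = field(default_factory=set)

    def flag(self, message: str) -> bool:
        self.seen |= risk_terms_in(message)
        return len(self.seen) >= THRESHOLD


# A request fragmented across two individually harmless-looking turns.
turns = [
    "How do admins usually store credentials?",
    "Interesting. And how would someone bypass that check?",
]
monitor = ConversationMonitor()
stateless_results = [stateless_flag(t) for t in turns]  # [False, False]
stateful_results = [monitor.flag(t) for t in turns]     # [False, True]
```

Neither turn trips the stateless check on its own, while the monitor flags the conversation at the second turn because it remembers the first.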

The Fatal Flaw: Defenses Built on Outdated Assumptions

Most AI security tools operate under three dangerous assumptions:

- Attacks are simple. Vendors test defenses against basic payloads, not adaptive, multi-stage exploits. The study’s researchers found that once a defense mechanism is documented, attackers reverse-engineer it within hours.

- Models can’t be their own adversaries. Training-based defenses assume AI systems will resist manipulation—but the same optimization techniques used to harden models can be repurposed to bypass them.

- Obscurity provides security. Vendors rely on proprietary algorithms, believing complexity will deter attackers. Instead, it creates a false sense of security while real threats move freely.

The study’s most alarming finding? Defenses that perform well in vendor-controlled labs fail spectacularly in the wild. A 99% protection rate in a static test environment drops to 10% when faced with adaptive attackers. The gap isn’t technical—it’s strategic.

What Enterprises Must Do Now

Deploying AI without proper safeguards is like building a skyscraper without firewalls. The question isn’t whether a breach will happen; it’s how quickly. To mitigate risk, enterprises should:

- Replace stateless filters with stateful analysis. Track conversations across turns to detect fragmented attacks like Crescendo.

- Implement bi-directional filtering. Block not just malicious inputs, but also data exfiltration through model responses.

- Normalize all inputs before analysis. Strip encoding, obfuscation, and semantic camouflage before evaluation.

- Audit third-party AI providers. Many cloud-based AI services lack basic protections, exposing enterprises to supply-chain risks.

- Test defenses against adaptive attackers. Simulate real-world conditions where threat actors study and refine their tactics.
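
Two of the steps above, input normalization and bi-directional filtering, can be sketched in a few lines of Python. This is a rough illustration under stated assumptions: the blocked phrase, the `sk-` key shape, and the function names are all hypothetical, and the regular expressions stand in for real input and output classifiers.

```python
import base64
import binascii
import re
import unicodedata

# Hypothetical patterns; stand-ins for real input and output classifiers.
BLOCKED_INPUT = re.compile(r"ignore (all )?previous instructions", re.IGNORECASE)
SECRET_OUTPUT = re.compile(r"\bsk-[A-Za-z0-9]{16,}\b")  # assumed secret format
ZERO_WIDTH = dict.fromkeys(map(ord, "\u200b\u200c\u200d\u2060\ufeff"))
BASE64_RUN = re.compile(r"[A-Za-z0-9+/]{16,}={0,2}")


def normalize(text: str) -> str:
    """Fold Unicode look-alikes, drop zero-width characters,
    and append the decoded form of any valid base64 runs."""
    text = unicodedata.normalize("NFKC", text).translate(ZERO_WIDTH)
    decoded_parts = []
    for run in BASE64_RUN.findall(text):
        try:
            decoded_parts.append(base64.b64decode(run, validate=True).decode("utf-8"))
        except (binascii.Error, UnicodeDecodeError):
            continue  # not valid base64 text; leave it alone
    return " ".join([text, *decoded_parts])


def allow_input(prompt: str) -> bool:
    """Inbound check: evaluate the normalized prompt, not the raw one."""
    return BLOCKED_INPUT.search(normalize(prompt)) is None


def allow_output(response: str) -> bool:
    """Outbound check: block responses that appear to leak a secret."""
    return SECRET_OUTPUT.search(normalize(response)) is None
```

Under these assumptions, a prompt such as `"ig\u200bnore previous instructions"` or the same phrase base64-encoded is rejected by `allow_input`, because the check runs on the normalized text. Normalization of this kind only covers the encodings it knows about, so it complements adaptive testing rather than replacing it.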

Ignoring these steps leaves organizations vulnerable to a new class of breaches—ones where the attack surface isn’t just code, but the AI model itself.

The Race Against Time

AI adoption is accelerating. By 2026, 40% of enterprise applications are projected to integrate AI agents, up from less than 5% today. But security is lagging. The study’s authors warn that the window to fortify defenses is closing. Enterprises that treat AI security as an afterthought will pay the price in data leaks, regulatory fines, and reputational damage.

The good news? The tools to defend effectively exist. The challenge is recognizing that traditional security won’t cut it. The time to act is before the next attack—not after.

Final takeaway: AI defenses were built for a world where attackers didn’t adapt. That world no longer exists. Enterprises must move from reactive patching to proactive, context-aware protection—or risk becoming the next headline in AI’s security crisis.