SK Hynix Showcases Next-Generation HBM4 Memory

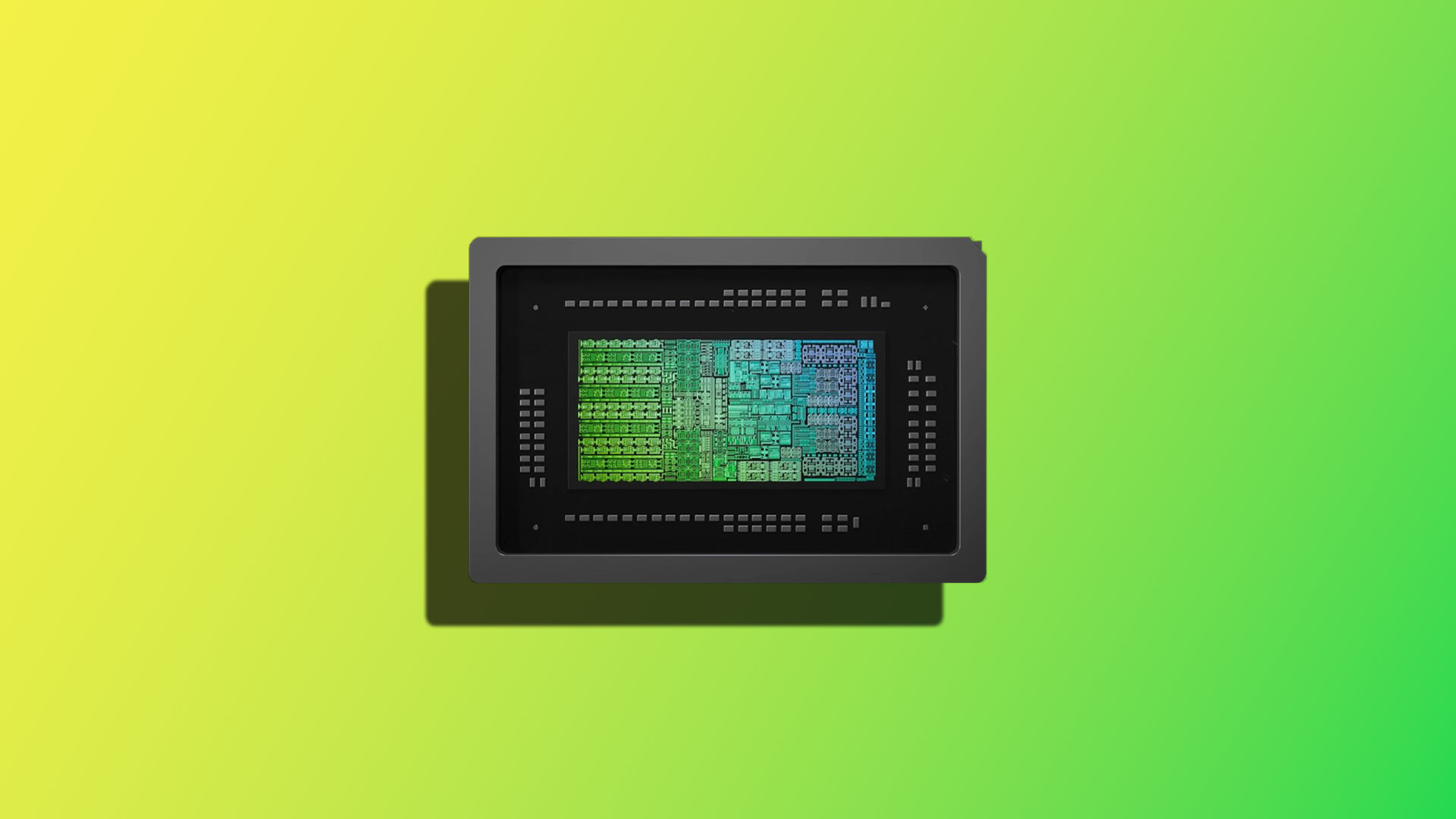

At CES 2026, SK Hynix unveiled a 16-high, 48 GB High Bandwidth Memory 4 (HBM4) module. The new configuration is aimed at the growing memory demands of high-performance computing (HPC) and artificial intelligence (AI) accelerator systems.

The move to a 16-high stack raises capacity from 36 GB to 48 GB per stack, allowing larger datasets and more complex models to be held in memory at once. SK Hynix has not disclosed performance figures for the new module. Its earlier HBM4 part was a 12-high, 36 GB stack operating at 11.7 Gbps per pin, and the taller configuration suggests an effort to extract as much bandwidth as possible from the added DRAM layers.
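The figures quoted above can be checked with some back-of-the-envelope arithmetic. The sketch below is illustrative only: the function names are made up for this example, the 2048-bit interface width comes from the JEDEC HBM4 standard rather than from SK Hynix's announcement, and the 11.7 Gbps pin rate is the figure quoted for the 12-high part, since no rate has been disclosed for the 16-high module.

```python
def per_die_capacity_gb(stack_capacity_gb: float, stack_height: int) -> float:
    """Capacity contributed by each DRAM die in a stack, in GB."""
    return stack_capacity_gb / stack_height


def peak_bandwidth_gb_per_s(pin_rate_gbps: float, interface_width_bits: int = 2048) -> float:
    """Theoretical peak bandwidth of one stack, in GB/s.

    interface_width_bits defaults to 2048, the width defined by the JEDEC
    HBM4 standard (an assumption here; the article does not state it).
    """
    # Gbit/s per pin * number of data pins, divided by 8 bits per byte.
    return pin_rate_gbps * interface_width_bits / 8


# Both configurations work out to the same capacity per die.
print(per_die_capacity_gb(36, 12))    # 12-high, 36 GB -> 3.0 GB per die
print(per_die_capacity_gb(48, 16))    # 16-high, 48 GB -> 3.0 GB per die

# Peak bandwidth of the 12-high part at its quoted pin rate.
print(peak_bandwidth_gb_per_s(11.7))  # roughly 2995 GB/s, i.e. about 3 TB/s
```

Under these assumptions, both stacks use the same 3 GB (24 Gb) dies, so the capacity increase comes entirely from the four additional layers rather than from denser DRAM.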

Key Features and Innovations

Beyond the increased capacity, SK Hynix highlighted a complementary technology: a custom base die, referred to as cHBM. The cHBM design moves elements typically found on the GPU or ASIC die into the base die of the memory stack. Consolidating these previously separate components could lead to substantial performance gains in accelerator designs.

- Die-to-Die PHYs: SK Hynix demonstrated die-to-die physical layer (PHY) connectivity in the base die, intended to speed up data transfer between the memory stack and other components.

- Embedded Memory Controllers: The cHBM architecture incorporates embedded memory controllers directly within the base die, reducing latency and improving overall system responsiveness.

- HBM PHY Integration: Streamlining the HBM PHY layer is intended to remove a bottleneck in data transmission and further raise usable bandwidth.

- Flexible Customization: Adaptability is a core element of the cHBM design. Customers can tailor the base die with their own logic and processing elements to suit their specific applications.

This level of customization lets accelerator designs be optimized for a wide range of workloads, from complex scientific simulations to deep learning training.

Implications for AI and HPC

The 16-high HBM4 module targets the escalating memory demands of modern AI and HPC systems. Its added capacity allows larger models and datasets to be processed, which can accelerate research and development in areas such as drug discovery, materials science, and climate modeling. In addition, by integrating processing logic directly into the memory stack, cHBM is expected to reduce communication overhead and improve overall system efficiency.

Competition around HBM4 is intensifying, with Micron and Samsung also developing enhanced modules. SK Hynix's cHBM technology adds another dimension to this race and could influence the future direction of accelerator design and performance.

The development of these advanced memory technologies underscores the critical role that memory plays in driving innovation across a broad spectrum of industries and applications.