NVIDIA has taken a significant step forward in AI infrastructure with the first shipments of its Vera Rubin VR200 platform. Unlike previous generations, this system is engineered to handle trillion-parameter models using just one-fourth the number of GPUs, drastically reducing both hardware requirements and operational costs.

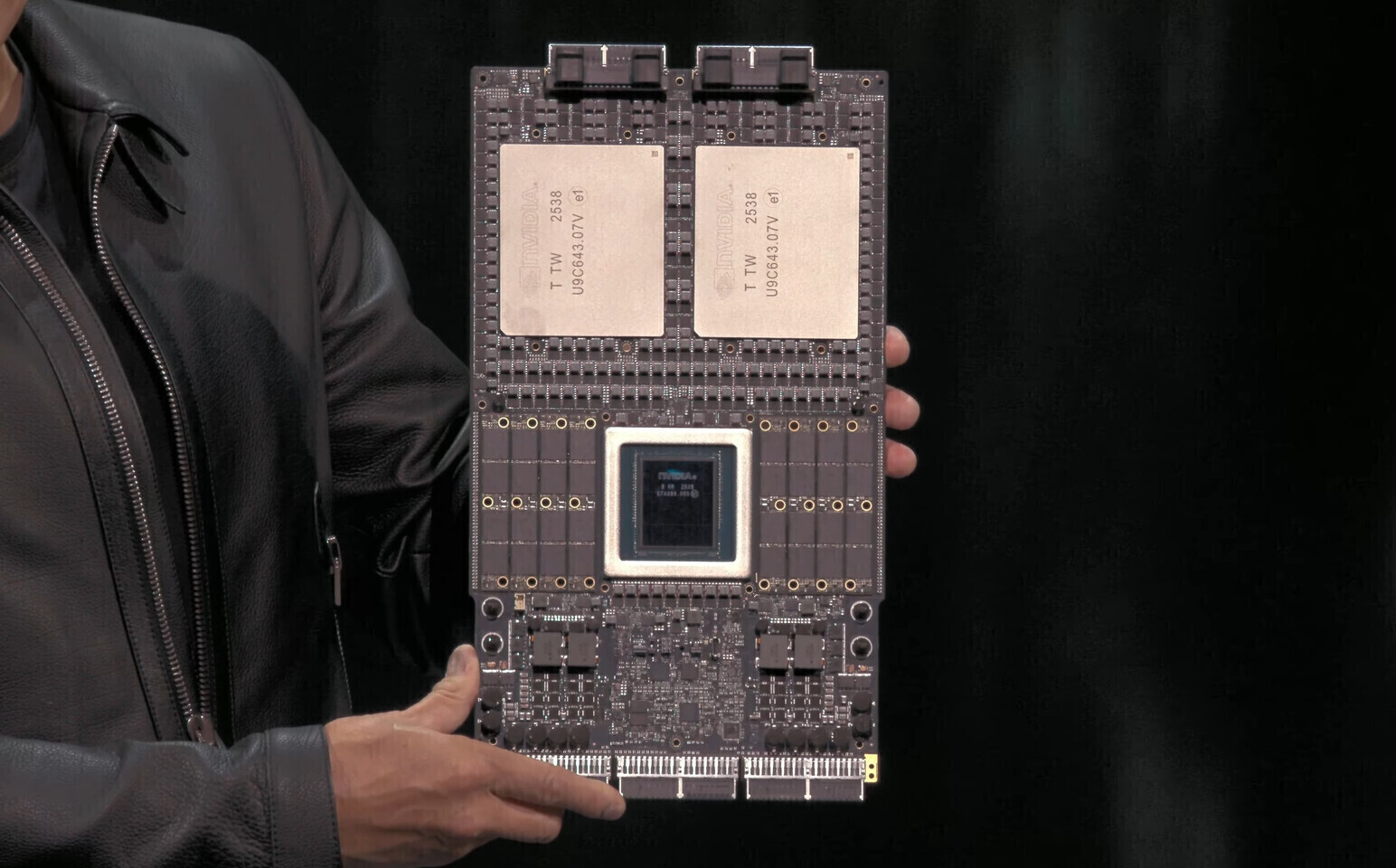

The Vera Rubin VR200 represents a modular, cable-free design that improves reliability and serviceability over its predecessor, the Blackwell platform. Each Rubin GPU within the system outputs 50 PetaFLOPS of FP4 compute, combining to deliver roughly 100 PetaFLOPS for the dual-GPU Superchip configuration.

Key Specifications

- Compute: Two reticle-sized compute chiplets per GPU, paired with eight HBM4 stacks (288 GB per GPU, 576 GB total).

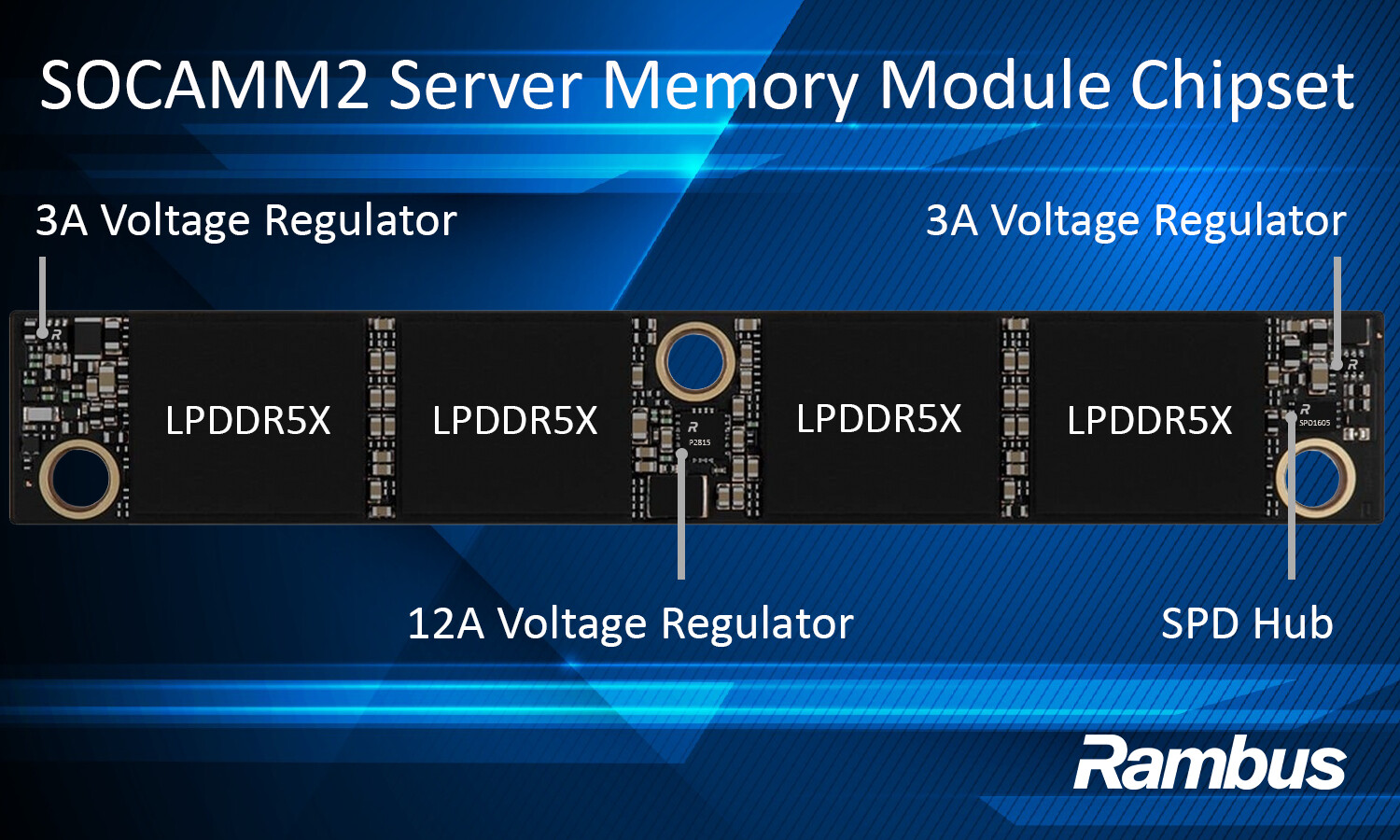

- CPU: Custom Armv9.2 Olympus cores (88 cores, 172 threads) in a SOCAMM form factor.

- Memory: Up to 1.5 TB of LPDDR5X system memory.

- Networking: ConnectX-9 SuperNIC and Spectrum-6 Ethernet switch for high-speed connectivity.

The platform’s efficiency is a direct response to the growing demands of AI workloads, where inference costs have become a critical factor. By leveraging FP4 precision—ideal for large-scale AI models—the Vera Rubin VR200 aims to cut inference expenses by up to 10 times compared to previous generations.

Market Impact and Future Outlook

NVIDIA’s move aligns with its strategy of dominating the AI infrastructure space, particularly in cloud deployments. The company’s financial results for 2025—totaling $215.9 billion, with $68.1 billion in Q4 alone—reflect the strong demand for its solutions. Volume production is expected to begin in the second half of 2026, with industry analysts already projecting high adoption rates.

While NVIDIA has long been a leader in AI hardware, the Vera Rubin VR200 introduces a new level of performance efficiency that could reshape how data centers operate. Competitors like AMD may face increased pressure, particularly given the platform’s modular design and integrated networking capabilities. For now, the focus remains on delivering the promised scalability, with early samples already in the hands of key customers.