NVIDIA has moved to strengthen its position in AI with its recent acquisition of Groq. During the Q4 2026 earnings call, CEO Jensen Huang hinted that Groq's LPUs (Language Processing Units) will extend NVIDIA's architecture, much as Mellanox did for networking. The move targets latency-sensitive workloads, a growing bottleneck in AI applications.

The deal, valued at up to $20 billion, is NVIDIA's largest investment to date. Announced on Christmas Eve, it has left industry experts speculating about how NVIDIA will integrate Groq's technology. Huang's remarks on the earnings call suggest that Groq's hardware will serve as an accelerator for latency-sensitive workloads, particularly the inference stage of AI applications.

Extending Architecture with Groq

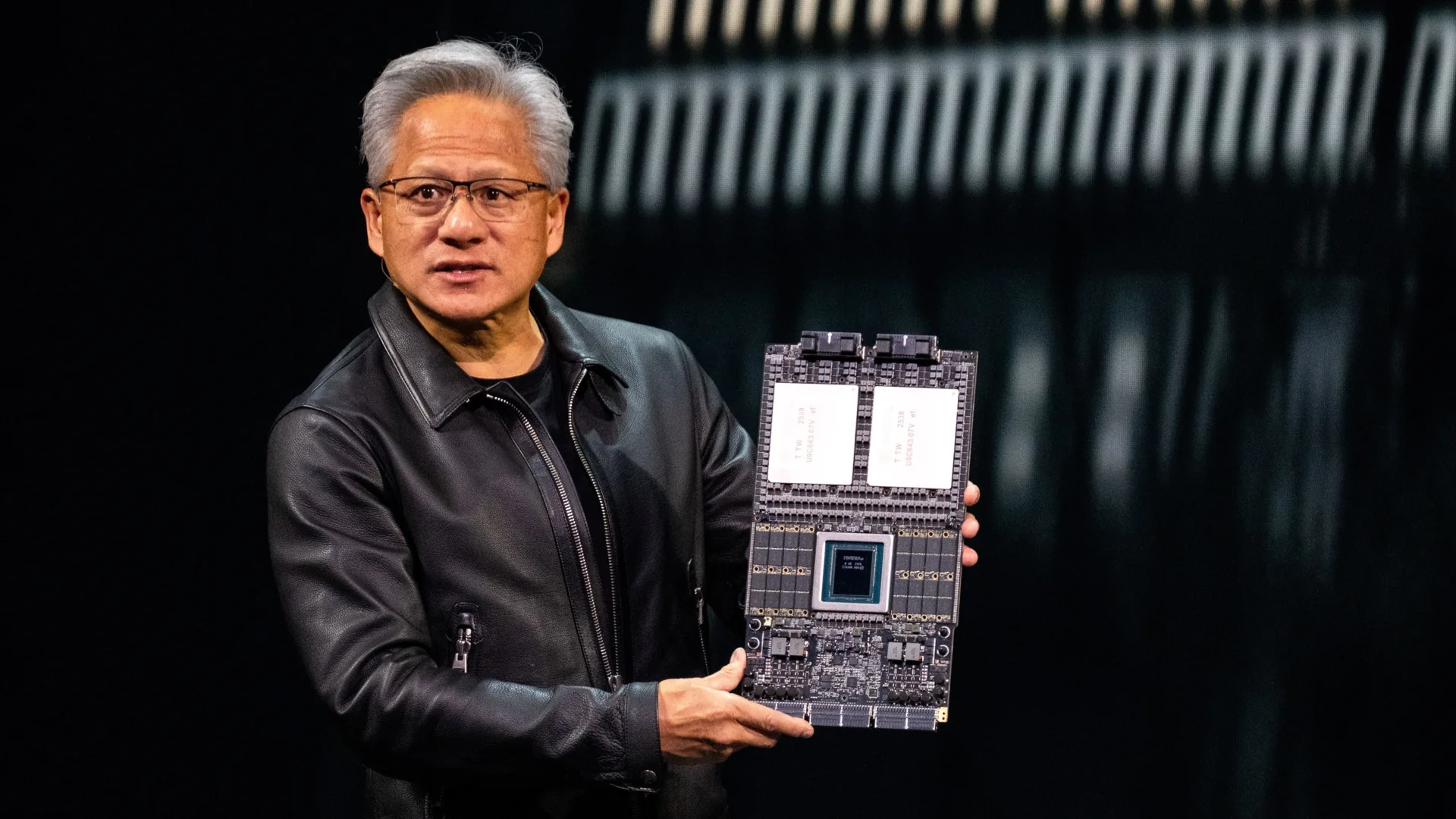

The acquisition targets latency-sensitive workloads, which have become a significant bottleneck for compute providers. NVIDIA is already established as a leader in training with its Hopper and Blackwell architectures, but inference is where the company still seeks to solidify its lead. Groq's LPUs are expected to help set the bar for low-latency decoding.

Groq's LPUs use on-die SRAM to provide tens of terabytes per second of internal bandwidth, an approach already adopted by companies like Cerebras and Microsoft. Integrating Groq's technology into NVIDIA's architecture could take two main forms. One possibility is hybrid compute nodes within rack-scale offerings, with multiple LPUs connected via a unified interconnect. Another theory is that LPUs could be integrated as on-die units within Feynman GPUs via hybrid bonding.
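To see why memory bandwidth matters so much for decoding, a rough back-of-envelope estimate helps. The sketch below assumes that generating each token requires streaming all model weights from memory once, so the single-stream token rate is capped at bandwidth divided by weight size. The model size and both bandwidth figures are illustrative assumptions, not specifications of any NVIDIA or Groq product, and the function name is hypothetical.

```python
def decode_tokens_per_second(weight_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Upper bound on single-stream decode rate, assuming every token
    requires reading all model weights from memory exactly once."""
    if weight_bytes <= 0 or bandwidth_bytes_per_s <= 0:
        raise ValueError("weight_bytes and bandwidth_bytes_per_s must be positive")
    return bandwidth_bytes_per_s / weight_bytes


GB = 1e9
TB = 1e12

# Assumption: a 70-billion-parameter model stored at 1 byte per parameter.
weights = 70 * GB

# Assumption: illustrative bandwidth figures, not vendor specifications.
scenarios = {
    "off-chip memory at 8 TB/s": 8 * TB,
    "on-die SRAM at 40 TB/s": 40 * TB,
}

for name, bandwidth in scenarios.items():
    rate = decode_tokens_per_second(weights, bandwidth)
    print(f"{name}: about {rate:.0f} tokens/s, {1000 / rate:.2f} ms per token")
```

This estimate ignores compute time, KV-cache reads, interconnect overhead, and the fact that on-die SRAM capacity is small enough that a large model would need to be spread across many chips. It is only meant to show how per-token latency scales with bandwidth.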

Key Specs

- Technology: Groq's LPUs

- Integration: Potential rack-scale or on-die integration with Feynman GPUs

- Value: Acquisition valued at up to $20 billion

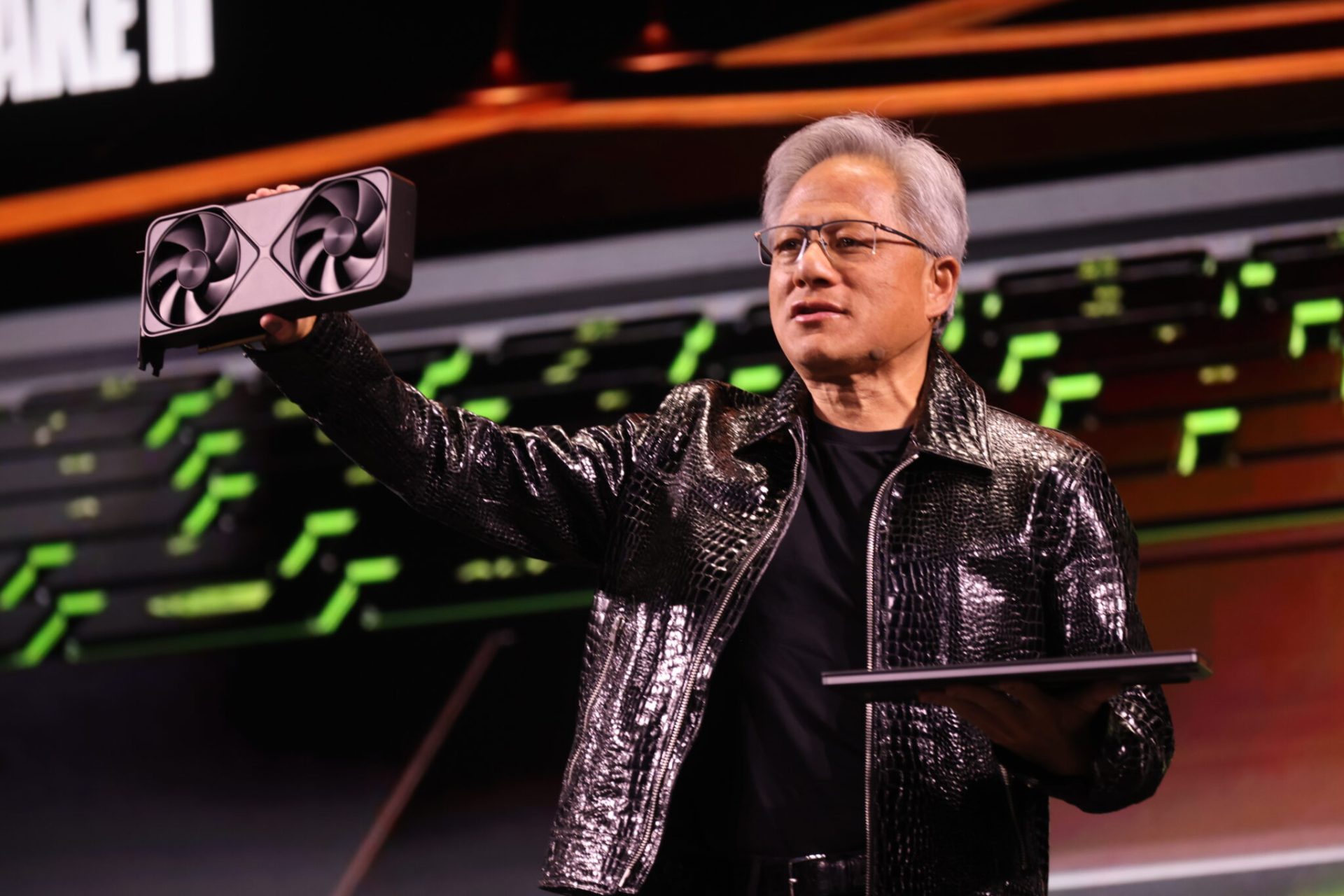

A hybrid architecture of this kind could give NVIDIA a head start in latency-sensitive workloads. NVIDIA is expected to formally unveil its plans for LPUs at the upcoming GTC 2026 event. The move could reshape AI inference and agentic environments, where ultra-fast responses are crucial.

What's Still Unknown

NVIDIA has offered some insight into its plans, but many details remain unclear. The exact form of integration, whether rack-scale or on-die, is still being explored. The timeline for commercial availability is also unconfirmed. Industry experts are watching GTC 2026 for more concrete announcements and renderings that could clarify NVIDIA's vision for Groq's LPUs.

The Groq acquisition underscores NVIDIA's commitment to pushing AI acceleration beyond training. By adopting Groq's technology, the company aims to meet the growing demand for low-latency solutions in AI applications. It could mark a new chapter in NVIDIA's effort to become a one-stop shop for all things AI.