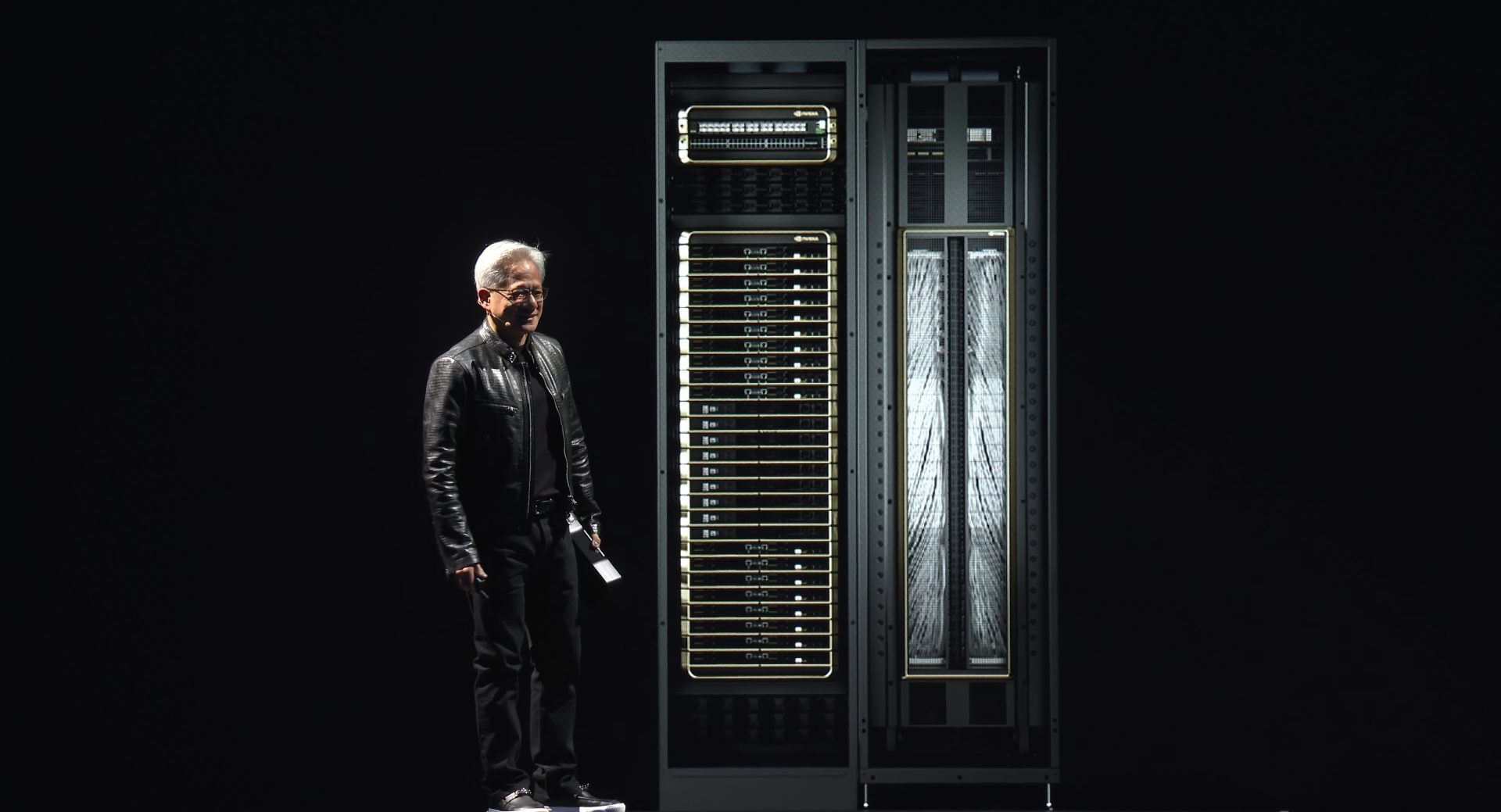

A developer building a next-generation AI application faces a choice: wait for competing hardware to catch up, or invest now in an ecosystem that already runs the newest models. NVIDIA has just made that choice easier.

NVIDIA's latest optimization work for DeepSeek V4 is about more than performance; it is about setting a new benchmark. The company has announced day-one support for DeepSeek V4 on its Blackwell platform, which could change how developers approach large-scale AI workloads. The headline figure is up to 3,500 tokens per second on models as large as 1.6 trillion parameters. That is a leap rather than an incremental gain, and it will be hard for competitors to match.

What does this mean for developers? It means NVIDIA is not merely keeping pace in the AI hardware race. By pairing DeepSeek V4 with Blackwell, NVIDIA is targeting two critical pain points: pricing and supply constraints. The Blackwell architecture, designed to handle massive workloads efficiently, could ease some of the cost pressure developers face when scaling AI models. At the same time, NVIDIA's early optimization lowers the risk that developers who adopt this stack will be caught out by compatibility problems as the industry evolves.

Performance and Scalability: A New Standard

The numbers tell a story. DeepSeek V4, when paired with Blackwell, can process up to 3,500 tokens per second on models reaching 1.6 trillion parameters. This is about efficiency as much as raw speed. The Blackwell platform is built to optimize memory bandwidth and compute power, which translates to faster training times and lower operational costs. For developers, this means the ability to experiment with larger, more complex models with fewer of the usual trade-offs in performance or cost.
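To put the throughput figure in practical terms, here is a rough back-of-the-envelope sketch. Only the 3,500 tokens per second comes from the announcement; the function names and the hourly cost are hypothetical placeholders, and the calculation assumes the peak rate is sustained, which a real deployment may not achieve.

```python
# Back-of-the-envelope throughput arithmetic.
# Only TOKENS_PER_SECOND comes from the article; the hourly cost used in the
# usage note is an illustrative assumption, not a quoted price.

TOKENS_PER_SECOND = 3_500  # peak figure quoted in the article


def seconds_to_generate(num_tokens, tokens_per_second=TOKENS_PER_SECOND):
    """Time in seconds to emit num_tokens at a sustained throughput."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


def tokens_per_day(tokens_per_second=TOKENS_PER_SECOND):
    """Tokens produced in 24 hours at a sustained throughput."""
    return tokens_per_second * 86_400


def cost_per_million_tokens(hourly_cost, tokens_per_second=TOKENS_PER_SECOND):
    """Cost of one million tokens given an hourly cost in any currency."""
    tokens_per_hour = tokens_per_second * 3_600
    return hourly_cost / tokens_per_hour * 1_000_000
```

At the quoted rate, `tokens_per_day()` comes to about 302 million tokens, and a purely hypothetical hourly cost of 126 would work out to 10 per million tokens. Swap in your own measured throughput and pricing before drawing conclusions.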

Market Dynamics: Pricing and Supply

The AI hardware market has been volatile, with pricing fluctuations and supply constraints creating uncertainty for buyers. NVIDIA's move with DeepSeek V4 and Blackwell aims to stabilize this landscape. By delivering both the hardware and the optimized model support on a single platform, NVIDIA is positioning itself as a one-stop solution for developers who need performance and reliability. The Blackwell architecture, with its focus on scalability, could also help mitigate some of the supply chain challenges that have plagued the industry in recent years.

Compatibility Risks: A Reality Check

However, not all risks are eliminated. While day-one support on Blackwell is a significant advantage, developers must still consider how this integration will interact with other software stacks and frameworks. The AI ecosystem is complex, and compatibility is about more than hardware: the software layers also have to work together. There is no guarantee that every tool or library will be optimized for Blackwell right away, so early adopters may need to navigate some rough edges.
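One way to get ahead of those rough edges is to audit the installed software stack before migrating. The sketch below is only an illustration: the package names and minimum versions are hypothetical (the announcement names none), and the helper functions are invented for this example. It uses the standard library's `importlib.metadata` to read installed versions.

```python
from importlib import metadata

# Hypothetical minimum versions; the article names none. Replace them with
# values taken from each project's own release notes.
HYPOTHETICAL_MINIMUMS = {"torch": (2, 0), "numpy": (1, 24)}


def parse_version(text):
    """Leading numeric components of a version: '2.7.1+cu128' -> (2, 7, 1)."""
    parts = []
    for piece in text.split("+")[0].split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
    return tuple(parts)


def at_least(installed, minimum):
    """Compare version tuples after zero-padding to equal length."""
    width = max(len(installed), len(minimum))
    pad = lambda v: v + (0,) * (width - len(v))
    return pad(installed) >= pad(minimum)


def audit(minimums, lookup=metadata.version):
    """Map each package name to 'ok', 'too old', or 'missing'."""
    report = {}
    for name, minimum in minimums.items():
        try:
            installed = parse_version(lookup(name))
        except metadata.PackageNotFoundError:
            report[name] = "missing"
            continue
        report[name] = "ok" if at_least(installed, minimum) else "too old"
    return report


if __name__ == "__main__":
    for name, status in audit(HYPOTHETICAL_MINIMUMS).items():
        print(f"{name}: {status}")
```

The `lookup` parameter exists so the check can be exercised without the packages installed. A report like this only flags version gaps; it says nothing about whether a given library has actually been tuned for Blackwell.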

Looking Ahead: The Platform Ecosystem

The most important change here is clear: NVIDIA has raised the bar in the AI hardware competition. By optimizing DeepSeek V4 for Blackwell and delivering day-one support, NVIDIA is setting the pace rather than merely keeping up. For developers, this means a platform that combines performance and scalability with early software support, even if some compatibility gaps remain. The question now is whether competitors can keep up, or whether they will be left watching from the sidelines as NVIDIA solidifies its lead.