Latency in augmented reality is often overlooked, yet it can make or break professional workflows. Even minor delays can disrupt precision tasks, break immersion, and induce discomfort, particularly in industries where accuracy is non-negotiable. NVIDIA and Apple have now pushed this metric to a new low: under 10 milliseconds for RTX-powered simulations streamed to the Vision Pro.

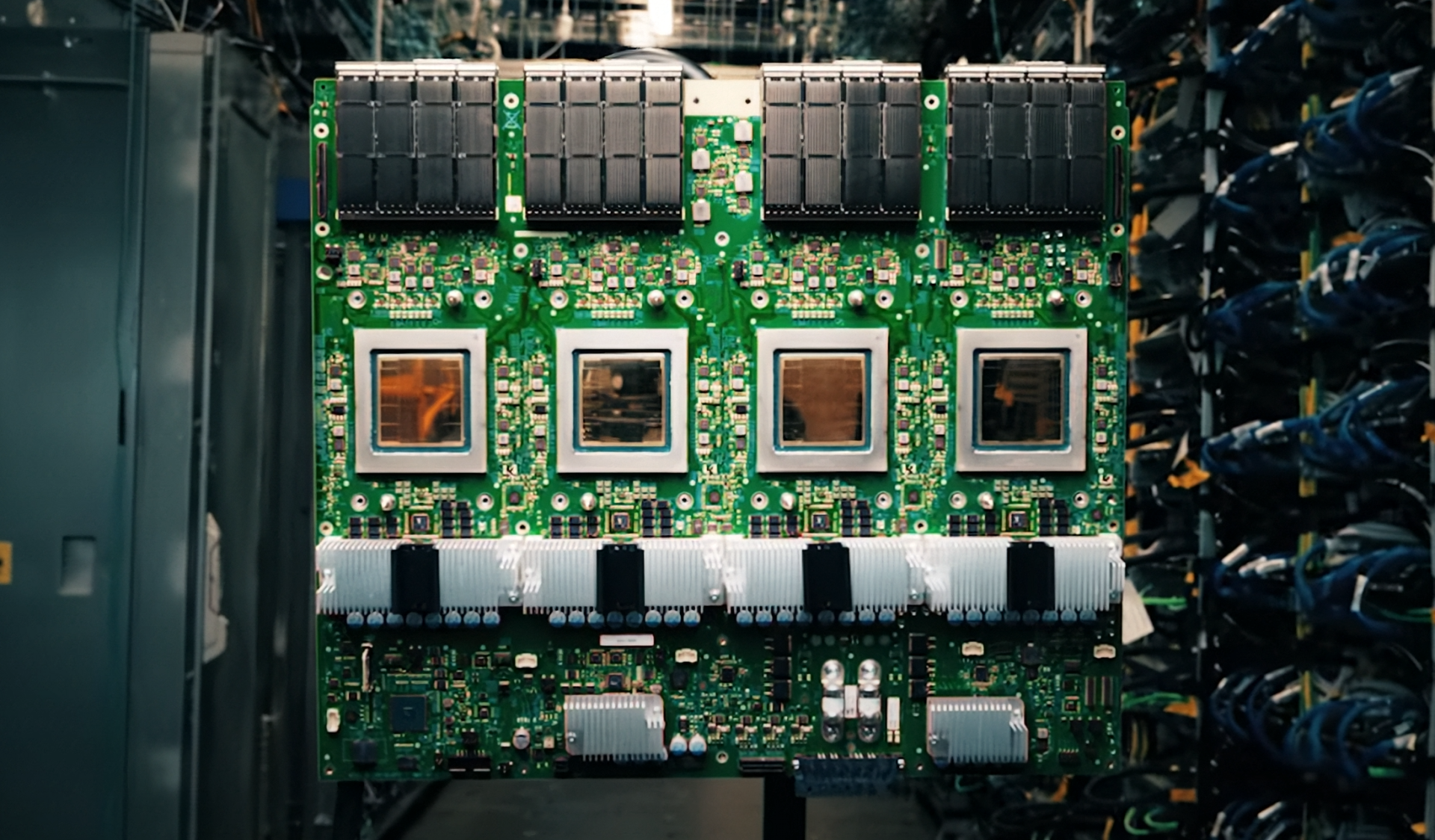

This achievement isn’t just about speed; it’s about redefining what professionals can accomplish in AR without being tethered to high-end workstations. Previously, high-fidelity simulations demanded powerful local GPUs, limiting mobility and collaboration. With this partnership, applications like Immersive for Autodesk VRED can now stream complex 3D models directly to the headset, eliminating the need for on-premises hardware. The key enabler? NVIDIA CloudXR 6.0’s optimized cloud pipeline, which handles rendering tasks while the Vision Pro acts as a lightweight client.

What’s Behind the Sub-10ms Latency?

The breakthrough stems from visionOS support for streaming RTX-accelerated rendering, a capability that was once exclusive to desktop environments. Because rendering workloads are offloaded to NVIDIA’s cloud infrastructure, the Vision Pro can present real-time ray tracing and physics simulations without computing them locally. Both are essential for industries like automotive design, where timing is everything.

- NVIDIA CloudXR 6.0 compresses data at the source and optimizes network paths to ensure secure, low-latency streaming.

- The Vision Pro’s display refresh rate (up to 90 Hz) works in tandem with this system to minimize motion artifacts, a first for enterprise AR headsets.

- Pricing has not been finalized, but early indications suggest a tiered model combining hardware costs with pay-per-use cloud acceleration fees.
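To put the refresh-rate figure in context, the short sketch below works out how long a single frame lasts at 90 Hz and checks whether a given latency fits inside it. The function names are illustrative, and the comparison is a simplified assumption: it treats the quoted latency as one number to set against one frame interval, which is not how NVIDIA or Apple necessarily measure it.

```python
def frame_interval_ms(refresh_hz: float) -> float:
    """Time between two display refreshes, in milliseconds."""
    return 1000.0 / refresh_hz


def fits_in_one_frame(latency_ms: float, refresh_hz: float) -> bool:
    """True if the latency is shorter than a single frame interval.

    Simplifying assumption: latency is compared directly with the
    frame interval, ignoring how individual pipeline stages overlap.
    """
    return latency_ms < frame_interval_ms(refresh_hz)


if __name__ == "__main__":
    interval = frame_interval_ms(90)  # 1000 / 90, roughly 11.1 ms
    print(f"Frame interval at 90 Hz: {interval:.1f} ms")
    print("10 ms fits in one frame:", fits_in_one_frame(10, 90))
```

At 90 Hz a frame lasts roughly 11.1 ms, so a latency under 10 ms is shorter than one refresh interval.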

The Broader Impact

This partnership doesn’t just improve performance; it introduces new considerations for IT teams managing cloud-dependent workflows. Balancing local and remote rendering, ensuring network stability in high-latency environments, and adapting to a cloud-native model will be critical for adoption.
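One way an IT team might reason about the local-versus-remote trade-off is a simple policy driven by measured network round-trip times. The sketch below is purely hypothetical: `choose_render_path`, its thresholds, and the idea of falling back to local rendering are illustrative assumptions, not part of CloudXR or visionOS, and network round-trip time is only one component of the latency figure quoted above.

```python
import statistics


def choose_render_path(rtt_samples_ms, budget_ms=10.0, max_jitter_ms=2.0):
    """Pick 'remote' only when the network looks fast and stable.

    Hypothetical policy: thresholds are illustrative defaults,
    not values published by NVIDIA or Apple.
    """
    if not rtt_samples_ms:
        return "local"  # no measurements yet, stay on the safe path

    worst = max(rtt_samples_ms)                  # slowest observed round trip
    jitter = statistics.pstdev(rtt_samples_ms)   # spread of the samples

    if worst <= budget_ms and jitter <= max_jitter_ms:
        return "remote"
    return "local"


if __name__ == "__main__":
    print(choose_render_path([6.0, 7.0, 6.5, 7.2]))   # stable, low RTT
    print(choose_render_path([6.0, 7.0, 25.0, 7.0]))  # one large spike
```

Using the worst sample rather than the average is a deliberate choice here: a single latency spike is what a headset wearer actually notices.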

If NVIDIA and Apple can sustain sub-10ms latency at scale, this collaboration could accelerate the shift from desktop-bound simulations to portable, cloud-native workflows, a move that would redefine professional training, product development, and remote collaboration. The question remains: will latency become the new benchmark for enterprise AR, or will other factors like cost, interoperability, and ecosystem support take precedence? One thing is certain: the barrier between cloud power and AR just got significantly lower.