Data centers built around AI training and inference have long been constrained by one stubborn bottleneck: storage. Even as GPUs and accelerators gained memory capacity and bandwidth with each generation, the pipelines connecting them to storage could not keep up. Micron’s 9650 NVMe SSD, the first PCIe Gen6 enterprise drive to hit mass production, is aimed squarely at that imbalance.

With up to 28,000MB/s sequential reads and 14,000MB/s sequential writes, the 9650 is not just faster than PCIe Gen5 SSDs. It changes what is practical in environments where datasets grow larger than RAM itself. For AI operators, this means less time waiting for data to shuffle between storage and accelerators, and more time running models.
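To put the read figure in perspective, here is a rough back-of-the-envelope sketch of how long one full pass over a dataset would take at the quoted peak rate. The 10TB dataset size is hypothetical, the Gen5 baseline of 14,000MB/s is an assumption (half the Gen6 figure), and real workloads rarely sustain peak sequential throughput, so treat the output as a best case rather than a benchmark.

```python
def seconds_to_read(dataset_tb: float, read_mb_per_s: float) -> float:
    """Ideal time to stream a dataset once at a sustained sequential read rate."""
    dataset_mb = dataset_tb * 1_000_000  # decimal units: 1 TB = 1,000,000 MB
    return dataset_mb / read_mb_per_s


GEN6_READ_MB_S = 28_000  # 9650 peak sequential read quoted in the article
GEN5_READ_MB_S = 14_000  # assumed Gen5 baseline: half the Gen6 figure

dataset_tb = 10.0  # hypothetical dataset size, chosen only for illustration

gen6_seconds = seconds_to_read(dataset_tb, GEN6_READ_MB_S)
gen5_seconds = seconds_to_read(dataset_tb, GEN5_READ_MB_S)

print(f"Gen6: {gen6_seconds:.0f} s per pass")
print(f"Gen5: {gen5_seconds:.0f} s per pass")
```

Under these assumptions a single drive streams the dataset in roughly six minutes instead of twelve. Striping across several drives would shorten both numbers proportionally.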

The drive’s debut signals a shift in how data centers are architected. No longer can storage be an afterthought; it’s now a critical component of performance, energy efficiency, and even cooling strategy.

Built for the GPU-Direct Era

The 9650 is designed for AI platforms where data moves directly between storage and accelerator memory instead of being staged through a CPU-managed buffer in system memory. This direct data path, already used by NVIDIA’s GPUDirect Storage, reduces latency by eliminating an extra copy. The result is faster data loading for training jobs and lower overhead during inference, where long context windows demand rapid access to large datasets.

Micron offers two variants to suit different workloads:

- Micron 9650 PRO: Aimed at read-intensive environments such as persistent AI training clusters. Rated for 1 drive write per day (DWPD) over five years, in capacities from 7.68TB to 30.72TB.

- Micron 9650 MAX: Targets high-throughput workloads with up to 900,000 IOPS for random writes, ideal for real-time analytics or mixed AI workloads. Available in 6.4TB to 25.6TB configurations.
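A DWPD rating translates into total lifetime writes with simple arithmetic: capacity times DWPD times the number of days in the rating period. The sketch below applies that to the smallest and largest PRO capacities at 1 DWPD for five years. The results are derived from the figures in this article, not from a published terabytes-written rating, and the 365-day year is an assumption.

```python
def lifetime_writes_tb(capacity_tb: float, dwpd: float, years: float,
                       days_per_year: int = 365) -> float:
    """Total data that can be written over the rating period, in TB."""
    return capacity_tb * dwpd * days_per_year * years


# 9650 PRO endpoints from the article: 1 DWPD for five years.
pro_capacities_tb = [7.68, 30.72]
pro_writes_tb = {
    capacity: lifetime_writes_tb(capacity, dwpd=1, years=5)
    for capacity in pro_capacities_tb
}

for capacity, total_tb in pro_writes_tb.items():
    # Convert TB to PB with decimal units (1 PB = 1,000 TB).
    print(f"{capacity}TB PRO: about {total_tb / 1000:.1f} PB written over five years")
```

By this estimate, the 30.72TB PRO can absorb on the order of 56PB of writes over its rated life.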

Both models use Micron’s G9 TLC NAND and support E1.S and E3.S, two EDSFF form factors common in modern data center servers. They also share a 25-watt power envelope, a critical detail in an era where AI clusters consume megawatts.

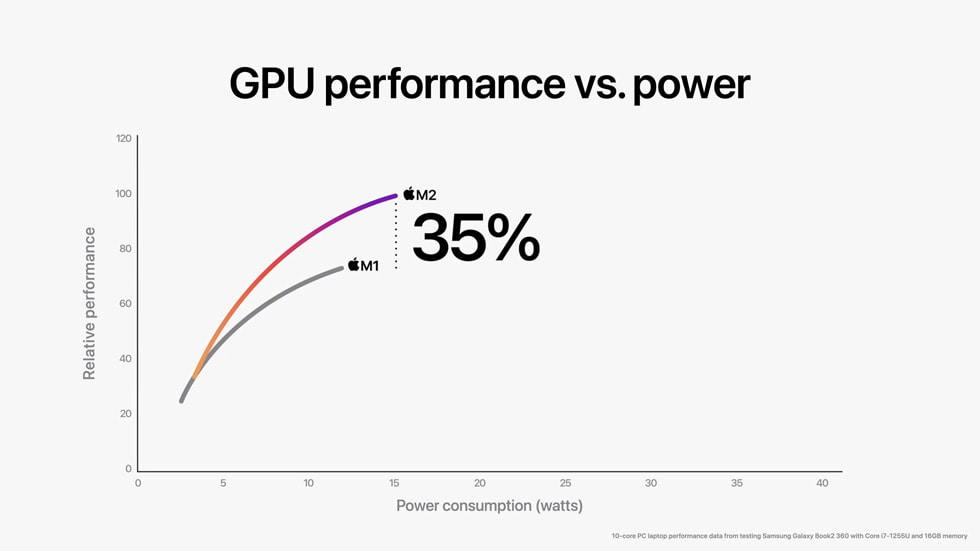

Performance That Outpaces Power

Doubling bandwidth over PCIe Gen5 is not just about raw speed; it is also about efficiency. The 9650 delivers:

- Sequential read efficiency: 1,120MB/s per watt (vs. ~560MB/s per watt for Gen5).

- Random read efficiency: 1.7× improvement over Gen5.

- Thermal flexibility: Supports both air and liquid cooling, accommodating high-density AI nodes where traditional airflow struggles.
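The sequential read efficiency figure follows directly from the headline numbers: peak throughput divided by the power envelope. The short check below reproduces it. The Gen5 baseline (14,000MB/s at the same 25 watts) is an assumption chosen to match the roughly 560MB/s per watt quoted above, not a measured value for any specific drive.

```python
def mb_per_s_per_watt(throughput_mb_s: float, power_w: float) -> float:
    """Throughput delivered per watt of drive power."""
    return throughput_mb_s / power_w


POWER_W = 25.0           # power envelope quoted for the 9650
GEN6_READ_MB_S = 28_000  # 9650 peak sequential read
GEN5_READ_MB_S = 14_000  # assumed Gen5 baseline at the same 25 W

gen6_eff = mb_per_s_per_watt(GEN6_READ_MB_S, POWER_W)
gen5_eff = mb_per_s_per_watt(GEN5_READ_MB_S, POWER_W)

print(f"Gen6: {gen6_eff:.0f} MB/s per watt")
print(f"Gen5: {gen5_eff:.0f} MB/s per watt")
print(f"Ratio: {gen6_eff / gen5_eff:.1f}x")
```

Because both drives are assumed to draw the same power, the efficiency gain for sequential reads equals the bandwidth gain. A Gen5 drive with a lower power envelope would narrow the gap.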

This matters because AI workloads are energy-hungry. By moving more data per watt, the 9650 helps operators squeeze more performance out of constrained power budgets, a growing concern as data center campuses push toward gigawatt-scale consumption.

Who Stands to Gain?

The 9650 isn’t just for hyperscalers. Enterprises running large language models or generative AI pipelines stand to benefit from faster data loading during training, while cloud providers can offer faster inference services. The drive attaches over PCIe, so it complements rather than replaces accelerator interconnects such as NVIDIA’s NVLink and AMD’s Infinity Fabric, and its support for direct storage-to-GPU data paths makes it a versatile fit for next-gen AI servers.

Early adopters include OEM partners and AI-focused data centers already testing PCIe Gen6 ecosystems. With mass production underway, wider deployment is expected in 2026, though pricing remains under wraps.

For now, storage is no longer the bottleneck, at least until PCIe Gen7 arrives.