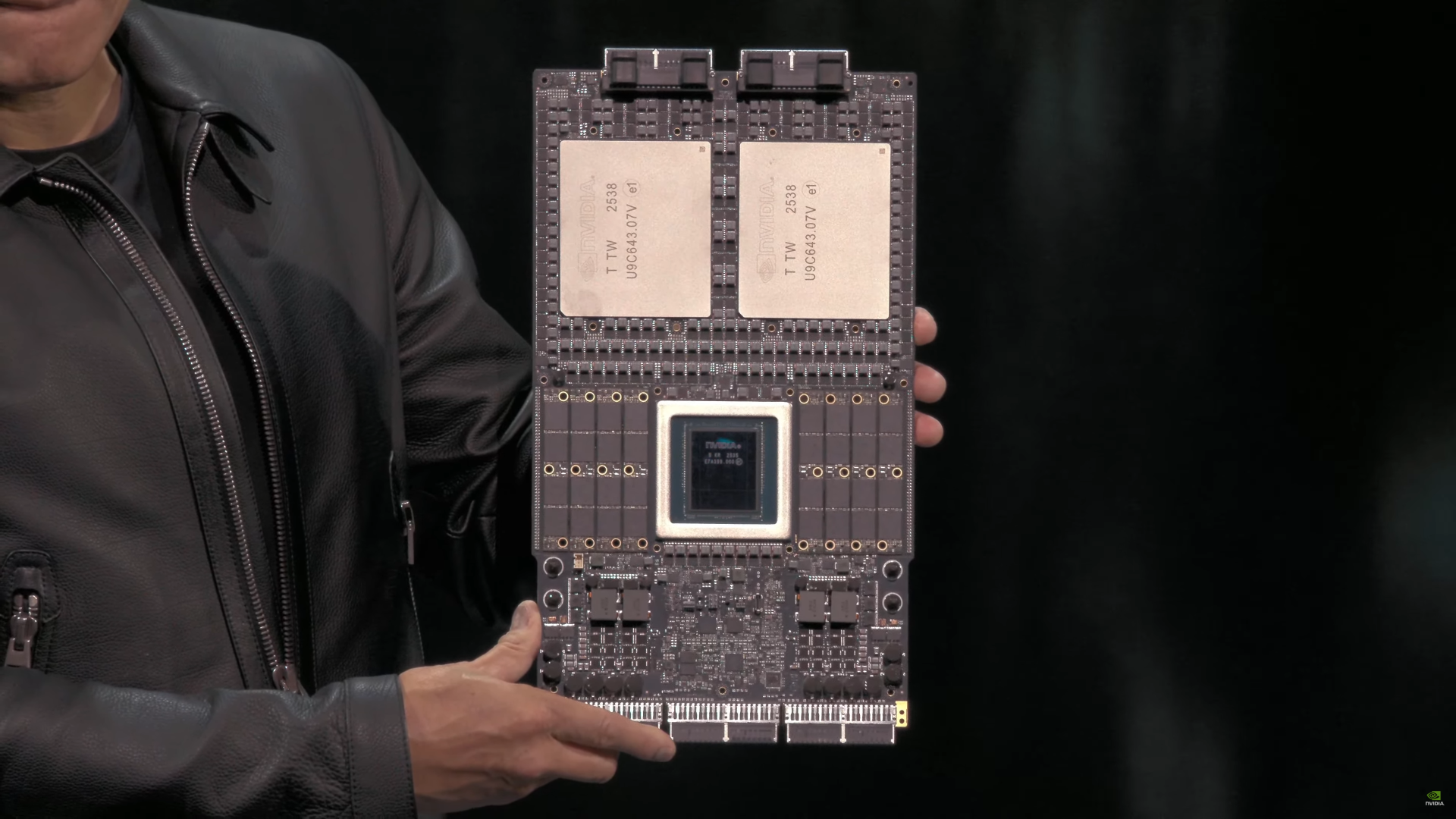

Developers are about to get a new tool for chasing performance without the usual heat trade-offs. Intel’s Wildcat Lake GPUs, arriving next week, are designed to squeeze more computing power into smaller, cooler packages than previous generations.

The focus isn’t just on raw performance. It’s on how much work these GPUs can do before they start throttling under sustained loads. That matters for developers who push laptops to their limits, whether rendering 3D models or running AI inference locally. Intel claims a 20% improvement in power efficiency over its last mainstream GPU, which can translate into longer battery life at the same workload or more thermal headroom for the cooling system.
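To put that 20% figure in perspective, here is a rough back-of-the-envelope sketch. It assumes "power efficiency" means performance per watt, and every number other than the 20% claim (battery capacity, GPU draw, rest-of-system draw) is a hypothetical placeholder rather than a Wildcat Lake specification.

```python
# Back-of-the-envelope estimate, assuming "power efficiency" means
# performance per watt. All wattage and battery values are hypothetical.

def energy_ratio(efficiency_gain: float) -> float:
    """Power needed for the same work rate, relative to the older GPU."""
    return 1.0 / (1.0 + efficiency_gain)

def runtime_hours(battery_wh: float, gpu_watts: float, rest_watts: float) -> float:
    """Battery runtime when GPU and the rest of the system draw constant power."""
    return battery_wh / (gpu_watts + rest_watts)

GAIN = 0.20          # the claimed efficiency improvement
BATTERY_WH = 60.0    # hypothetical laptop battery
OLD_GPU_W = 15.0     # hypothetical GPU draw under load
REST_W = 10.0        # hypothetical draw of everything else

new_gpu_w = OLD_GPU_W * energy_ratio(GAIN)
old_runtime = runtime_hours(BATTERY_WH, OLD_GPU_W, REST_W)
new_runtime = runtime_hours(BATTERY_WH, new_gpu_w, REST_W)

print(f"GPU power for the same work: {new_gpu_w:.1f} W (was {OLD_GPU_W:.1f} W)")
print(f"Runtime: {old_runtime:.2f} h -> {new_runtime:.2f} h "
      f"({(new_runtime / old_runtime - 1) * 100:.1f}% longer)")
```

Under these assumptions, a 20% efficiency gain cuts GPU power for the same work by about a sixth, and the battery-life gain is smaller still because the rest of the system keeps drawing power.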

Thermal management has long been a bottleneck for high-end laptop GPUs. Previous generations often hit a wall when workloads demanded high clock speeds for sustained periods: the chip would heat up and throttle. Wildcat Lake aims to shift that balance with smarter power gating and improved thermal interfaces, which Intel says can keep core temperatures 5 to 7°C lower under typical workloads.
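One simple way to see whether a machine is throttling is to log throughput at regular intervals during a long run and compare the end of the run with the start. The sketch below is a generic illustration, not an Intel tool: the sample values, the window size, and the 10% tolerance are all assumptions chosen for the example.

```python
from statistics import mean

def sustained_ratio(samples: list[float], window: int = 3) -> float:
    """Ratio of late-run throughput to early-run throughput.

    `samples` is throughput (e.g. frames or inferences per second)
    recorded at regular intervals during one long run.
    """
    if len(samples) < 2 * window:
        raise ValueError("need at least 2 * window samples")
    early = mean(samples[:window])    # before heat builds up
    late = mean(samples[-window:])    # after the system reaches steady state
    return late / early

def is_throttling(samples: list[float], window: int = 3,
                  tolerance: float = 0.10) -> bool:
    """Flag a run whose late throughput drops more than `tolerance` below early."""
    return sustained_ratio(samples, window) < 1.0 - tolerance

# Hypothetical throughput log from a sustained rendering run.
run = [120.0, 119.0, 121.0, 110.0, 98.0, 91.0, 90.0, 89.0]
print(f"sustained/early ratio: {sustained_ratio(run):.2f}")
print(f"throttling: {is_throttling(run)}")
```

A ratio close to 1.0 means the GPU held its early performance for the whole run; the further it falls below that, the more the workload is limited by heat rather than by the silicon.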

Developers will need to adjust their workflows to take advantage of these gains. The new GPUs don’t just promise better thermals. They also introduce a revised instruction set that improves efficiency in parallelizable tasks, such as the matrix operations common in AI development. That could mean faster compiles or smoother rendering without the usual thermal throttling.

Wildcat Lake won’t be a full replacement for Apple’s M-series chips in every scenario, but it offers a mainstream path for developers who need Intel’s ecosystem while avoiding the extreme heat of previous high-end GPUs. The question now is whether this shift will make laptop cooling a less dominant factor, or whether developers will simply expect even more from their thermal solutions.