The AI revolution is running into a physical wall: compute. While tech companies race to deploy ever-larger language models and autonomous systems, Google’s AI Studio lead has sounded the alarm over a widening gap between supply and demand that could throttle progress across the industry.

Logan Kilpatrick, who oversees Google AI Studio, says the bottleneck is not theoretical but already accelerating. By his assessment, the gap between available compute and the demands of AI workloads is widening by a single-digit percentage each day. This is a structural constraint, not a temporary hiccup, and it will dictate how fast AI can reshape economies and societies.

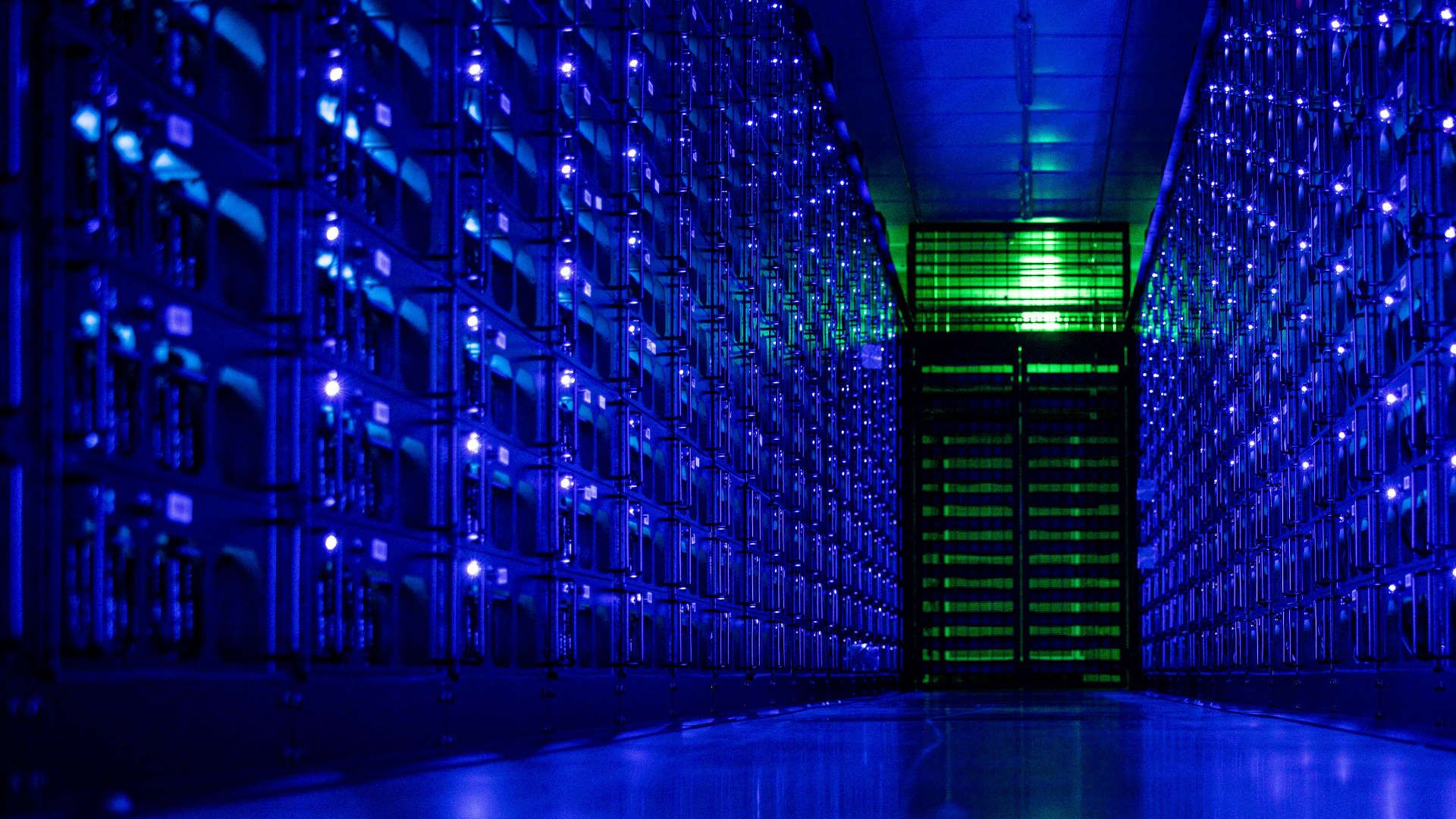

Google’s own internal projections underscore the urgency. An internal presentation from last year revealed that the company’s AI infrastructure must double in capacity every six months, with a 1,000x scaling target over the next four to five years. Achieving this would require an unprecedented expansion of data centers, chip manufacturing, and energy infrastructure, none of which can be switched on overnight.
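To see how the two figures in that projection relate, the compounding can be worked out directly. The sketch below assumes perfectly steady doubling with no pauses, which is a simplification rather than anything the presentation is reported to have stated; the function names `growth_factor` and `years_to_reach` are illustrative only.

```python
import math

def growth_factor(years: float, doubling_period_years: float = 0.5) -> float:
    """Capacity multiple after `years`, assuming steady doubling every period."""
    return 2 ** (years / doubling_period_years)

def years_to_reach(target_multiple: float, doubling_period_years: float = 0.5) -> float:
    """Years of steady doubling needed to reach `target_multiple`."""
    return math.log2(target_multiple) * doubling_period_years

# Doubling every six months over the quoted four-to-five-year window.
for years in (4, 4.5, 5):
    print(f"{years} years -> {growth_factor(years):,.0f}x")
# 4 years -> 256x
# 4.5 years -> 512x
# 5 years -> 1,024x

print(f"1,000x reached after about {years_to_reach(1000):.2f} years")
# 1,000x reached after about 4.98 years
```

Under this assumption, the 1,000x target lines up with the five-year end of the range: ten consecutive doublings give 1,024x, while four years of doubling yields only 256x.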

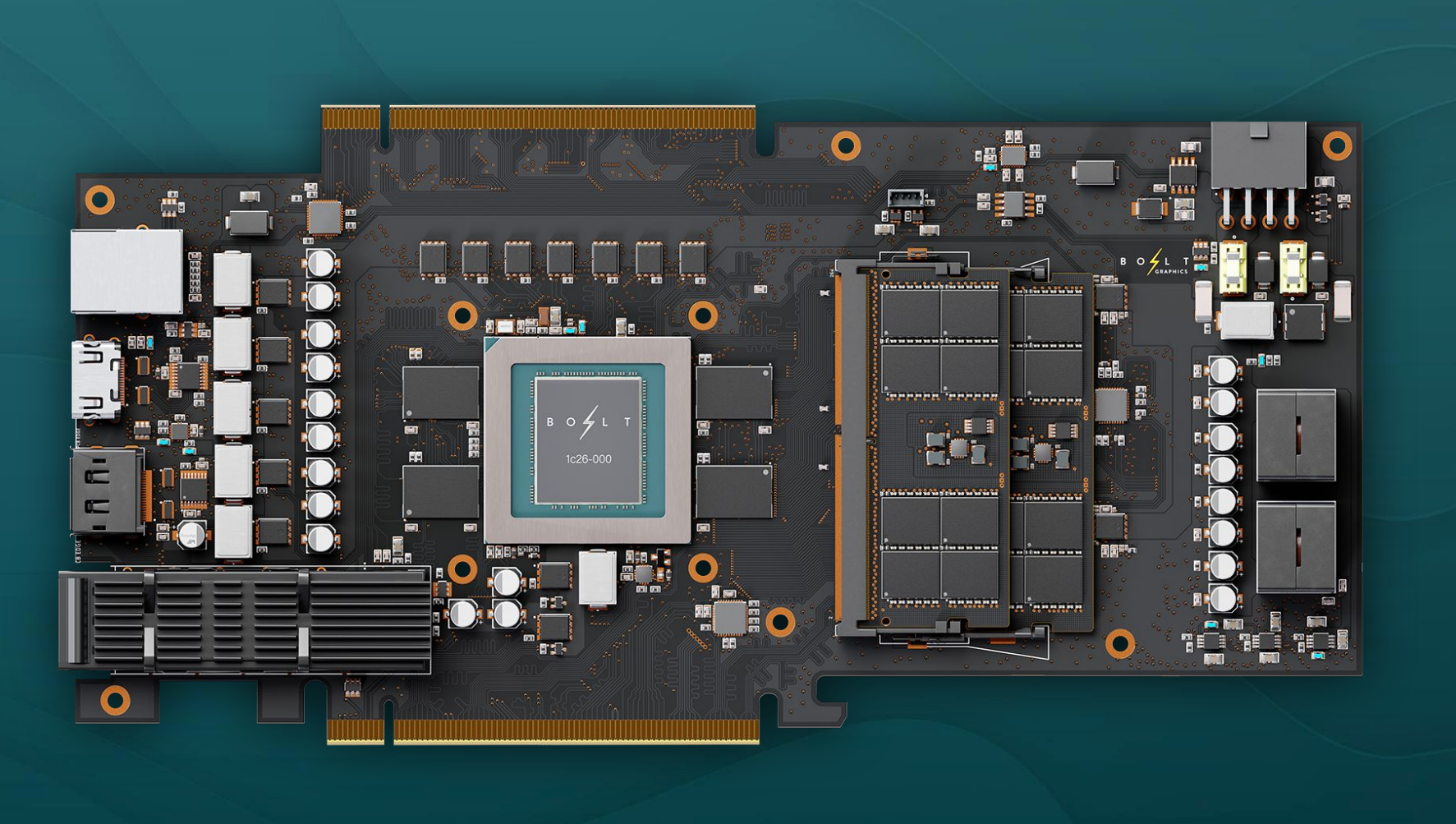

The Chip Shortage Isn’t Just a Problem—It’s a Crisis

At the heart of the issue lies TSMC, the foundry responsible for 90-95% of the world’s most advanced chips. Nvidia CEO Jensen Huang has publicly pressed TSMC to accelerate production, acknowledging that even the industry’s most efficient manufacturer is operating near maximum capacity. New facilities, like TSMC’s Arizona expansion, are in the works, but analysts question whether they’ll arrive in time to meet the surging needs of AI training and inference workloads.

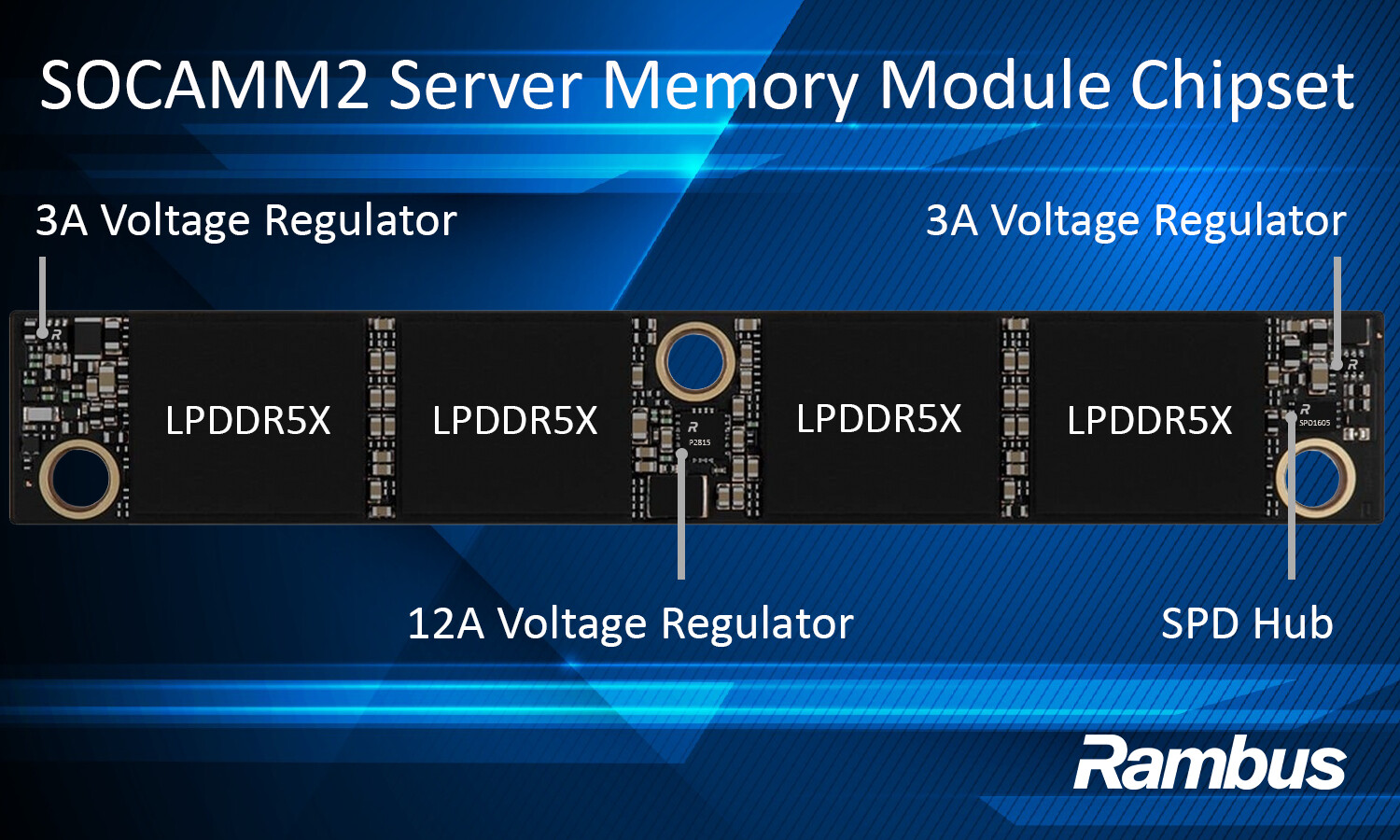

Meanwhile, the DRAM market remains in disarray. AI’s voracious appetite for memory has sent prices soaring, creating a ripple effect that has pushed up costs for everything from laptops to gaming consoles. Unlike traditional hardware shortages, this one isn’t easing—it’s being exacerbated by the relentless growth of AI models that demand ever-larger pools of RAM and storage.

AI’s Growth Isn’t Just Limited by Code—It’s Limited by Supply Chains

The implications extend beyond hardware shortages. Industry observers argue that AI’s expansion is no longer just a software story—it’s a supply chain story. Even as companies like Meta deploy temporary data centers (literally housed in tents), the fundamental constraint remains: there’s only so much silicon, power, and cooling infrastructure to go around.

This reality could have unintended consequences. A recent report by Citrini Research painted a grim scenario in which unchecked AI adoption leads to economic instability, triggering protests and market disruptions. The report’s doomsday predictions may be extreme, but it raises a critical question: if even Google is hitting compute limits, how long until the bottleneck slows innovation for everyone?

For now, the bottleneck isn’t just a technical challenge—it’s a market signal. The pace at which AI transforms industries may be dictated less by algorithmic breakthroughs and more by the physical capacity to run them. Without major efficiency gains in software or a sudden surge in chip production, the gap will continue to widen, acting as an invisible brake on progress.

In an era where tech giants promise to redefine entire sectors, the compute bottleneck serves as a reminder: the future of AI isn’t just about what’s possible—it’s about what’s feasible.