Small businesses and data centers looking for memory that can handle AI workloads now have a new option. A recently introduced DDR5 RDIMM module is built with reliability and bandwidth in mind, promising faster data processing and better compatibility with modern server hardware.

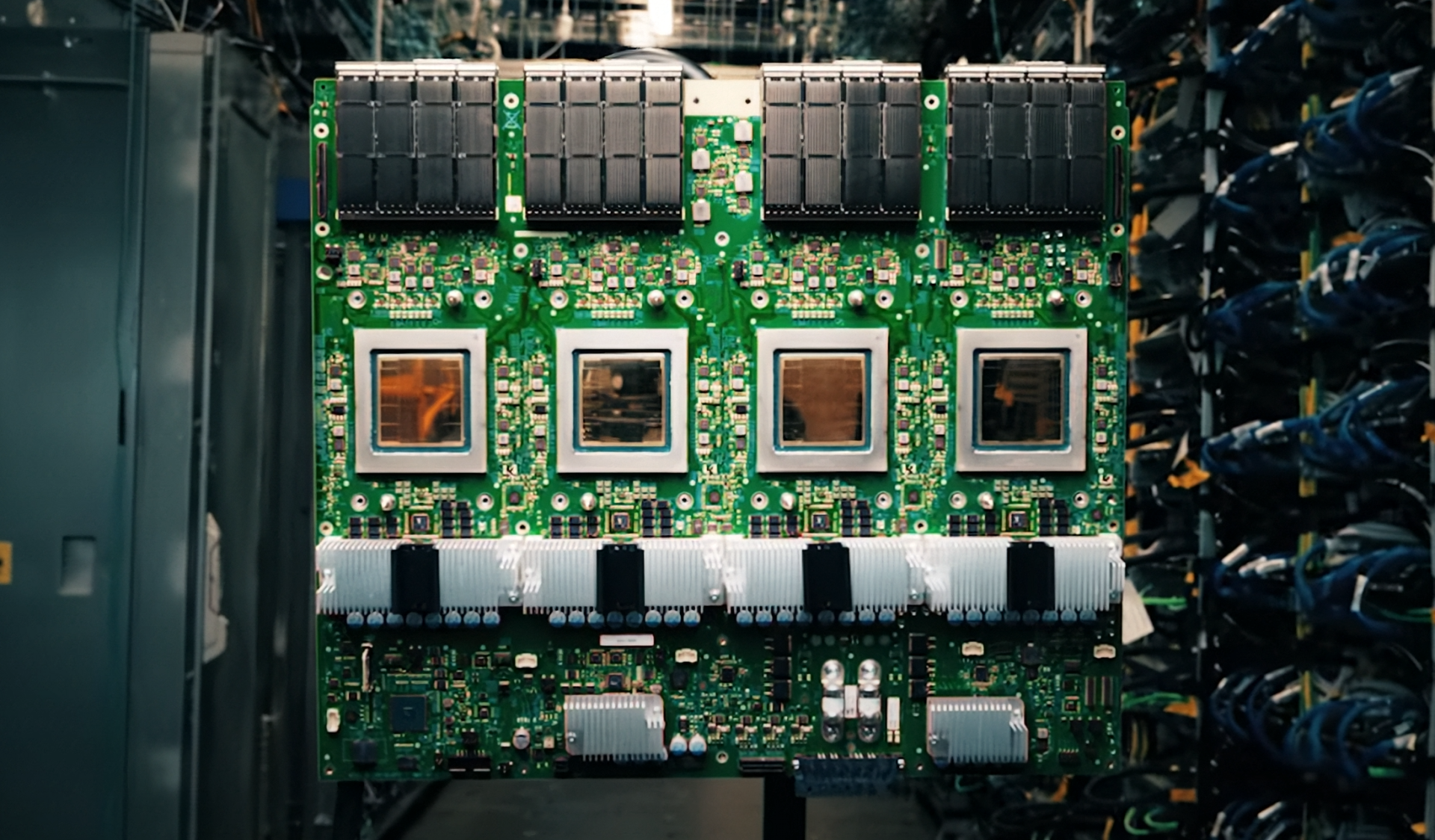

The module features 32 internal banks across eight bank groups, which the manufacturer says maximizes throughput for next-generation servers. It supports speeds of up to 6400 MT/s, a significant jump from the 4800 MT/s at which DDR5 launched, giving memory-bound workloads more headroom before memory bandwidth becomes the bottleneck.
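To put the 6400 MT/s figure in context, here is a rough sketch of the theoretical peak bandwidth per module. It assumes the standard 64-bit DDR5 DIMM data width (two 32-bit subchannels, ECC bits excluded) and uses 4800 MT/s, the DDR5 launch speed, as a baseline; neither figure comes from the manufacturer's announcement. The function name `peak_bandwidth_gb_s` is made up for illustration, and real-world throughput will be lower than these ceilings.

```python
# Theoretical peak bandwidth of a single DDR5 module (illustrative sketch).
# Assumption: 64 data bits per module (2 x 32-bit subchannels), ECC bits excluded.
DATA_BUS_BITS = 64


def peak_bandwidth_gb_s(transfer_rate_mt_s: int, bus_bits: int = DATA_BUS_BITS) -> float:
    """Return the theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_bits // 8
    transfers_per_second = transfer_rate_mt_s * 1_000_000
    return transfers_per_second * bytes_per_transfer / 1e9


if __name__ == "__main__":
    # 4800 MT/s is an assumed baseline (DDR5 launch speed), not a figure from the article.
    for rate in (4800, 6400):
        print(f"DDR5-{rate}: {peak_bandwidth_gb_s(rate):.1f} GB/s per module")
```

Under these assumptions, a 6400 MT/s module tops out at about 51.2 GB/s, roughly a third more than the 38.4 GB/s ceiling at 4800 MT/s.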

Reliability is a key focus here. The design combines on-die ECC with a Registering Clock Driver (RCD), which buffers command and address signals between the memory controller and the DRAM chips. The company claims this improves fault tolerance compared to standard ECC UDIMMs, lowering the risk of data corruption in mission-critical environments where uptime is non-negotiable.

Compatibility is another strong point. The module has been tested with mainstream Intel and AMD server platforms, which should ease worries about whether it will work in supported DDR5 servers. It also operates at 1.1 V, the standard DDR5 supply voltage, which helps with power efficiency, a big deal for data centers looking to cut costs.

Available in 16 GB, 32 GB, and 64 GB capacities, the module is designed for scalability. Businesses can start small and expand as their needs grow without having to switch out entire memory systems. A limited lifetime warranty backs it up, giving long-term confidence for those investing in AI-driven workloads.

For now, pricing isn’t confirmed, but given current market trends, especially with DRAM shortages still affecting supply, costs are likely to be higher than standard DDR5 modules. If you’re running or planning an AI-heavy server setup, this could be worth watching as demand for high-bandwidth server memory continues to rise.