The sequel to Deus Ex was supposed to set a new standard for immersive simulation. Instead, it became a cautionary tale about technical debt, creative compromise, and the hidden costs of shared development pipelines.

At its core, Deus Ex: Invisible War was a game that wanted to be dense, dynamic, and expansive—full of environmental details, reactive lighting, and seamless transitions between spaces. But the foundation it was built on couldn’t support those ambitions. The decision to share an engine with Thief: Deadly Shadows, a game designed for smaller, more controlled environments, proved disastrous. The resulting tech stack was ill-suited to Invisible War’s vision, forcing developers to strip assets, compartmentalize levels, and constantly fight for performance.

Performance vs. Vision

The engine, originally intended for Thief, struggled with the sheer scale of Invisible War’s world. Environmental details—objects, textures, lighting—brought frame rates crashing down. The team had to remove or simplify assets just to keep the game running smoothly. This wasn’t a minor tweak; it was a structural limitation that reshaped the game’s design from the ground up.

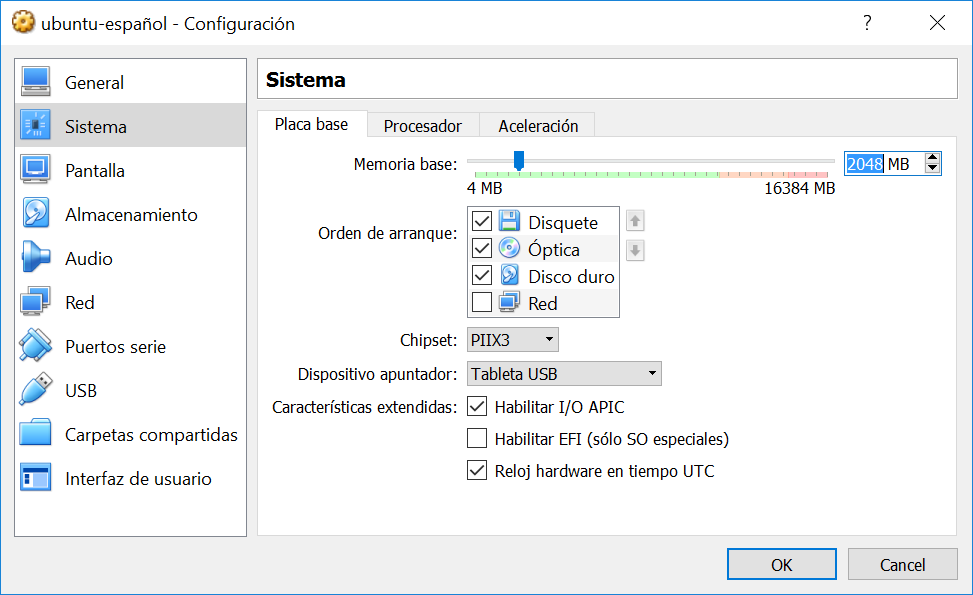

- Engine: Modified Unreal Engine 2 (tuned for Thief’s needs, not Deus Ex’s)

- Key issue: Object density and reactive lighting caused severe performance drops

- Result: Heavy reliance on loading screens to maintain playability

The original game’s designer, Harvey Smith, later reflected that the engine choice was a critical misstep. “The point of our game was to be object-dense,” he said. “Using that engine meant we had to compartmentalize.” The abundance of loading screens became a defining feature of the game, and a much-criticized one, breaking immersion in a way no one intended.

Corporate Pressure and Platform Shifts

The technical challenges were compounded by external pressures. Eidos, the game’s publisher, began pushing for a console version targeting Microsoft’s new Xbox platform. The decision was driven by a misguided belief that first-person shooters didn’t sell on PC—a theory that clashed with both market trends and developer instincts.

Adapting to the Xbox’s fixed specifications made an already strained engine even more problematic. Memory constraints forced further compromises, while the team struggled to balance performance across platforms. The result was a game that felt buggy and uneven on console, angering fans who had followed the original’s success closely.

- Platforms: PC and Xbox (console version exacerbated existing issues)

- Key constraint: Xbox’s fixed spec limited memory and performance flexibility

- Outcome: A game that felt unfinished and inconsistent on both platforms

A Legacy of Missed Potential

Invisible War’s final form was a compromise between what the team wanted to build and what the engine—and later, Eidos—allowed. The creative vision was stifled by technical limitations, leaving behind a game that was functional but lacked the ambition of its predecessor.

For Smith, the experience was deeply personal. He carried regret over decisions made as project director and acknowledged that the fallout from Invisible War’s reception was painful. Yet the long-term impact was more positive: dissatisfaction with Eidos prompted key talent to move on. Smith, along with lead designer Ricardo Bare and others, left the company in 2004, later contributing to groundbreaking immersive sims like Dishonored and Prey.

The story of Invisible War serves as a reminder that even the most talented teams can be derailed by poor technical choices. It also underscores how corporate decisions, especially misguided ones, can reshape a game’s trajectory, leaving behind a product that feels like it was built against its own potential.

For IT teams and developers today, the lesson is clear: engine selection isn’t just about performance benchmarks or feature sets. It’s about long-term roadmap compatibility and creative flexibility. A wrong choice can turn an ambitious project into a series of compromises, no matter how skilled the team behind it.